Think. Create. Code. Vis! (@edXOnline, @UniofAdelaide, @cserAdelaide, @code101x, #code101x)

Posted: April 30, 2015 Filed under: Education, Opinion | Tags: #code101x, advocacy, blogging, collaboration, community, curriculum, data visualisation, education, educational problem, educational research, edx, higher education, learning, measurement, MOOC, moocs, reflection, resources, teaching, teaching approaches, thinking, tools, universal principles of design

I just posted about the massive growth in our new on-line introductory programming course but let’s look at the numbers so we can work out what’s going on and, maybe, what led to that level of success. (Spoilers: central support from EdX helped a huge amount.) So let’s get to the data!

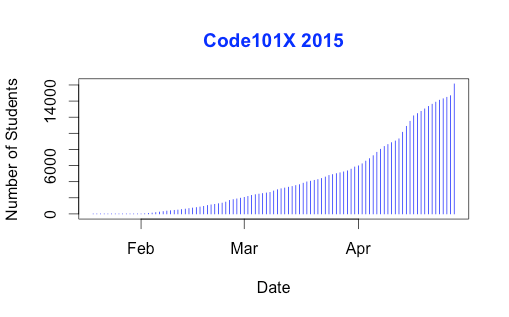

I love visualised data so let’s look at the growth in enrolments over time – this is really simple graphical stuff as we’re spending time getting ready for the course at the moment! We’ve had great support from the EdX team through mail-outs and Twitter and you can see these in the ‘jumps’ in the data that occurred at the beginning, halfway through April and again at the end. Or can you?

Hmm, this is a large number, so it’s not all that easy to see the detail at the end. Let’s zoom in and change the layout of the data over to steps so we can see things more easily. (It’s worth noting that I’m using the free R statistical package to do all of this. I can change one line in my R program and regenerate all of my graphs and check my analysis. When you can program, you can really save time on things like this by using tools like R.)

Now you can see where that increase started and then the big jump around the time that e-mail advertising started, circled. That large spike at the end is around 1500 students, which means that we jumped 10% in a day.

When we started looking at this data, we wanted to get a feeling for how many students we might get. This is another common use of analysis – trying to work out what is going to happen based on what has already happened.

As a quick overview, we tried to predict the future based on three different assumptions:

- that the growth from day to day would be roughly the same, which is assuming linear growth.

- that the growth would increase more quickly, with the amount of increase doubling every day (this isn’t the same as the total number of students doubling every day).

- that the growth would increase even more quickly than that, although not as quickly as if the number of students were doubling every day.

If Assumption 1 was correct, then we would expect the graph to look like a straight line, rising diagonally. It’s not. (As it is, this model predicted that we would only get 11,780 students. We crossed that line about 2 weeks ago.)

So we know that our model must take into account the faster growth, but those leaps in the data are changes that are caused by things outside of our control – EdX sending out a mail message appears to cause a jump that’s roughly 800-1,600 students, and it persists for a couple of days.

Let’s look at what the models predicted. Assumption 2 predicted a final student number around 15,680. Uhh. No. Assumption 3 predicted a final student number around 17,000, with an upper bound of 17,730.

Hmm. Interesting. We’ve just hit 17,571 so it looks like all of our measures need to take into account the “EdX” boost. But, as estimates go, Assumption 3 gave us a workable ballpark and we’ll probably use it again for the next time that we do this.
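To make the difference between those assumptions concrete, here is a minimal sketch in Python (the real analysis was done in R, and this is not that code). The function names and the starting numbers are hypothetical, chosen only for illustration. Assumption 3 is left out because its exact form isn't given here, so the sketch shows Assumption 1, Assumption 2, and the "total doubles every day" case that Assumption 3 is described as staying below.

```python
def linear_projection(start, daily_increase, days):
    """Assumption 1: the same number of new students arrives every day."""
    return start + daily_increase * days


def growing_increase_projection(start, first_increase, days, ratio=2):
    """Assumption 2: the daily increase itself is multiplied by `ratio`
    each day (ratio=2 means the increase doubles, not the total)."""
    total = start
    increase = first_increase
    for _ in range(days):
        total += increase
        increase *= ratio
    return total


def doubling_total_projection(start, days):
    """Comparison case: the total number of students doubles every day."""
    return start * 2 ** days


if __name__ == "__main__":
    # Made-up inputs for illustration only, not the course's enrolment data.
    start, first_increase, days = 100, 10, 3
    print("linear:", linear_projection(start, first_increase, days))
    print("increase doubles:", growing_increase_projection(start, first_increase, days))
    print("total doubles:", doubling_total_projection(start, days))
```

Even over three days with toy numbers, the gap between "the increase doubles" and "the total doubles" is large, which is why the distinction in the second assumption matters.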

Now let’s look at demographic data. We now have 171-172 countries (it varies a little) but how are we going for participation across gender, age and degree status? Giving this information to EdX is totally voluntary but, as long as we take that into account, we make some interesting discoveries.

Our median student age is 25, with roughly 40% under 25 and roughly 40% from 26 to 40. That means roughly 20% are 41 or over. (It’s not surprising that the graph sits to one side like that. If the left tail was the same size as the right tail, we’d be dealing with people who were -50.)

The gender data is a bit harder to display because we have four categories: male, female, other and not saying. In terms of female representation, we have 34% of students who have defined their gender as female. If we look at the declared male numbers, we see that 58% of students have declared themselves to be male. Taking into account all categories, this means that our female participant percentage could be as high as 40% but is at least 34%. That’s much higher than usual participation rates in face-to-face Computer Science and is really good news in terms of getting programming knowledge out there.
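The bounds in that paragraph are simple arithmetic, sketched below in Python. The function name is hypothetical, and the 2% figure for "other" is purely an assumption used to show how an upper bound of 40% could arise; the post only gives the 34% and 58% figures.

```python
def female_share_bounds(female_pct, male_pct, other_pct=0):
    """Return (lower, upper) bounds on the female share, in percent.

    Lower bound: only those who declared themselves female.
    Upper bound: everyone who did not declare male or 'other'
    is counted as potentially female.
    """
    lower = female_pct
    upper = 100 - male_pct - other_pct
    return lower, upper


if __name__ == "__main__":
    # Declared figures from the post: 34% female, 58% male.
    print(female_share_bounds(34, 58))
    # Assumed, not from the post: 2% of students chose 'other'.
    print(female_share_bounds(34, 58, other_pct=2))
```

With no allowance for "other", the upper bound is 42%; with the assumed 2% it is 40%. Either way the lower bound of 34% is the number we can actually stand behind.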

We’re currently analysing our growth by all of these groupings to work out which approach is the best for which group. Do people prefer Twitter, mail-out, community linkage or what when it comes to getting them into the course?

Anyway, lots more to think about and many more posts to come. But we’re on and going. Come and join us!

Think. Create. Code. Wow! (@edXOnline, @UniofAdelaide, @cserAdelaide, @code101x, #code101x)

Posted: April 30, 2015 Filed under: Education, Opinion | Tags: #code101x, advocacy, authenticity, blogging, community, cser digital technologies, curriculum, digital technologies, education, educational problem, educational research, ethics, higher education, Hugh Davis, learning, MOOC, moocs, on-line learning, research, Southampton, student perspective, teaching, teaching approaches, thinking, tools, University of Southampton

Things are really exciting here because, after the success of our F-6 on-line course to support teachers for digital technologies, the Computer Science Education Research group are launching their first massive open on-line course (MOOC) through AdelaideX, the partnership between the University of Adelaide and EdX. (We’re also about to launch our new 7-8 course for teachers – watch this space!)

Our EdX course is called “Think. Create. Code.” and it’s open right now for Week 0, although the first week of real content doesn’t go live until the 30th. If you’re not already connected with us, you can also follow us on Facebook (code101x) or Twitter (@code101x), or search for the hashtag #code101x. (Yes, we like to be consistent.)

I am slightly stunned to report that, less than 24 hours before the first content starts to roll out, we have 17,531 students enrolled, across 172 countries. Not only that, but when we look at gender breakdown, we have somewhere between 34% and 42% women (not everyone chooses to declare a gender). For an area that struggles with female participation, this is great news.

I’ll save the visualisation data for another post, so let’s quickly talk about the MOOC itself. We’re taking a 6 week approach, where students focus on developing artwork and animation using the Processing language, but it requires no prior knowledge and runs inside a browser. The interface that has been developed by the local Adelaide team (thank you for all of your hard work!) is outstanding and it’s really easy to make things happen.

I love this! One of the biggest obstacles to coding is having to wait until you see what happens and this can lead to frustration and bad habits. In Processing you can have a circle on the screen in a matter of seconds and you can start playing with colour in the next second. There’s a lot going on behind the screen to make it this easy but the student doesn’t need to know it and can get down to learning. Excellent!

I went to a great talk at CSEDU last year, presented by Hugh Davis from Southampton, where Hugh raised some great issues about how MOOCs compared to traditional approaches. I’m pleased to say that our demography is far more widespread than what was reported there. Although the US dominates, we have large representations from India, Asia, Europe and South America, with a lot of interest from Africa. We do have a lot of students with prior degrees but we also have a lot of students who are at school or who aren’t at University yet. It looks like the demography of our programming course is much closer to the democratic promise of free on-line education but we’ll have to see how that all translates into participation and future study.

While this is an amazing start, the whole team is thinking of this as part of a project that will be going on for years, if not decades.

When it came to our teaching approach, we spent a lot of time talking (and learning from other people and our previous attempts) about the pedagogy of this course: what was our methodology going to be, how would we implement this and how would we make it the best fit for this approach? Hugh raised questions about the requirement for pedagogical innovation and we think we’ve addressed this here through careful customisation and construction (we are working within a well-defined platform so that has a great deal of influence and assistance).

We’ve already got support roles allocated to staff and students will see us on the course, in the forums, and helping out. One of the reasons that we tried to look into the future for student numbers was to work out how we would support students at this scale!

One of the most important things for us to remember is that completion may not mean anything in the on-line format. Someone comes on and gets an answer to the most pressing question that is holding them back from coding, but in the first week? That’s great. That’s success! How we measure that, and turn that into traditional numbers that match what we do in face-to-face, is going to be something we deal with as we get more information.

The whole team is raring to go and the launch point is so close. We’re looking forward to working with thousands of students, all over the world, for the next six weeks.

Sound interesting? Come and join us!

Musing on Industrial Time

Posted: April 20, 2015 Filed under: Education, Opinion | Tags: advocacy, authenticity, blogging, community, curriculum, design, education, educational problem, educational research, ethics, higher education, in the student's head, learning, measurement, principles of design, reflection, resources, student perspective, teaching, teaching approaches, thinking, time banking, universal principles of design, work/life balance, workload

I caught up with a good friend recently and we were discussing the nature of time. She had stepped back from her job and was now spending a lot of her time with her new-born son. I have gone to working three days a week, hence have also stepped back from the five-day grind. It was interesting to talk about how this change to our routines had changed the way that we thought of and used time. She used a term that I wanted to discuss here, which was industrial time, to describe the clock-watching time of the full-time worker. This is part of the larger area of time discipline, how our society reacts to and uses time, and is really quite interesting. Both of us had stopped worrying about the flow of time in measurable hours on certain days and we just did things until we ran out of day. This is a very different activity from the usual “do X now, do Y in 15 minutes time” that often consumes us. In my case, it took me about three months of considered thought and re-training to break the time discipline habits of thirty years. In her case, she has a small child to help her to refocus her time sense on the now.

Modern time-sense is so pervasive that we often don’t think about some of the underpinnings of our society. It is easy to understand why we have years and, although they don’t line up properly, months given that these can be matched to astronomical phenomena that have an effect on our world (seasons and tides, length of day and moonlight, to list a few). Days are simple because that’s one light/dark cycle. But why are there 52 weeks in a year? Why are there 7 days in a week? Why did the 5-day week emerge as a contiguous block of 5 days? What is so special about working 9am to 5pm?

A lot of modern time descends from the struggle of radicals and unionists to protect workers from the excesses of labour, to stop people being worked to death, and the notion of the 8 hour day is an understandable division of a 24 hour day into three even chunks for work, rest and leisure. (Goodness, I sound like I’m trying to sell you chocolate!)

If we start to look, it turns out that the 7 day week is there because it’s there, based on religion and tradition. Interestingly enough, there have been experiments with other week lengths but it appears hard to shift people who are used to a certain routine and, tellingly, making people wait longer for days off appears to be detrimental to adoption.

If we look at seasons and agriculture, then there is a time to sow, to grow, to harvest and to clear, much as there is a time for livestock to breed and to be raised for purpose. If we look to the changing time of sunrise and sunset, there is a time at which natural light is available and when it is not. But, from a time discipline perspective, these time systems are not enough to be able to build a large-scale, industrial and synchronised society upon – we must replace a distributed, loose and collective notion of what time is with one that is centralised, authoritarian and singular. While religious ceremonies linked to seasonal and astronomical events did provide time-keeping on a large scale prior to the industrial revolution, the requirement for precise time, of an accuracy to hours and minutes, was not possible and, generally, not required beyond those cues given from nature such as dawn, noon, dusk and so on.

After the industrial revolution, industries and forms of work developed that were heavily separated from any natural linkage – there are no seasons for a coal mine or a steam engine – and the development of the clock and reinforcement of the calendar of work allowed both the measurement of working hours (for payment) and the determination of deadlines, given that natural forces did not have to be considered to the same degree. Steam engines are completed, they have no need to ripen.

With the notion of fixed and named hours, we can very easily determine if someone is late when we have enough tools for measuring the flow of time. But this is, very much, the notion of the time that we use in order to determine when a task must be completed, rather than taking an approach that accepts that the task will be completed at some point within a more general span of time.

We still have confusion where our understanding of “real measures” such as days, interact with time discipline. Is midnight on the 3rd of April the second after the last moment of April the 2nd or the second before the first moment of April the 4th? Is midnight 12:00pm or 12:00am? (There are well-defined answers to this but the nature of the intersection is such that definitions have to be made.)

But let’s look at teaching for a moment. One of the great criticisms of educational assessment is that we confuse timeliness, and in this case we specifically mean an adherence to meeting time discipline deadlines, with achievement. Completing the work a crucial hour after it is due can lead to that work potentially not being marked at all, or being rejected. We do usually have over-riding reasons for doing this but, sadly, these reasons are as artificial as the deadlines we impose. Why is an Engineering Degree a four-year degree? If we changed it to six would we get better engineers? If we switched to competency based training, modular learning and life-long learning, would we get more people who were qualified or experienced with engineering? Would we get fewer? What would happen if we switched to a 3/1/2/1 working week? Would things be better or worse? It’s hard to evaluate because the week, and the contiguous working week, are so much a part of our world that I imagine that today is the first day that some of you have thought about it.

Back to education and, right now, we count time for our students because we have to work out bills and close off accounts at end of financial year, which means we have to meet marking and award deadlines, then we have to project our budget, which is yearly, and fit that into accredited degree structures, which have year guidelines…

But I cannot give you a sound, scientific justification for any of what I just wrote. We do all of that because we are caught up in industrial time first and we convince ourselves that building things into that makes sense. Students do have ebb and flow. Students are happier on certain days than others. Transition issues on entry to University are another indicator that students develop and mature at different rates – why are we still applying industrial time from top to bottom when everything we see here says that it’s going to cause issues?

Oh, yes, the “real world” uses it. Except that regular studies of industrial practice show that 40 hour weeks, regular days off, working from home and so on are more productive than the burn-out, everything-late, rush that we consider to be the signs of drive. (If Henry Ford thinks that making people work more than 40 hours a week is bad for business, he’s worth listening to.) And that’s before we factor in the development of machines that will replace vast numbers of human jobs in the next 20 years.

I have a different approach. Why aren’t we looking at students more like we regard our grape vines? We plan, we nurture, we develop, we test, we slowly build them to the point where they can produce great things and then we sustain them for a fruitful and long life. When you plant grape vines, you expect a first reasonable crop level in three years, and commercial levels at five. Tellingly, the investment pattern for grapes is that it takes you 10 years to break even and then you start making money back. I can’t tell you how some of my students will turn out until 15-25 years down the track and it’s insanity to think you can base retrospective funding on that timeframe.

You can’t make your grapes better by telling them to be fruitful in two years. Some vines take longer than others. You can’t even tell them when to fruit (although you can trick them a little). Yet, somehow, we’ve managed to work around this to produce a local wine industry worth around $5 billion. We can work with variation and seasonal issues.

One of the reasons I’m so keen on MOOCs is that these can fit in with the routines of people who can’t dedicate themselves to full-time study at the moment. By placing well-presented, pedagogically-sound materials on-line, we break through the tyranny of the 9-5, 5 day work week and let people study when they are ready to, where they are ready to, for as long as they’re ready to. Like to watch lectures at 1am, hanging upside down? Go for it – as long as you’re learning and not just running the video in the background while you do crunches, of course!

Once you start to question why we have so many days in a week, you quickly start to wonder why we get so caught up on something so artificial. The simple answer is that, much like money, we have it because we have it. Perhaps it’s time to look at our educational system to see if we can do something that would be better suited to developing really good knowledge in our students, instead of making them adept at sliding work under our noses a second before it’s due. We are developing systems and technologies that can allow us to step outside of these structures and this is, I believe, going to be better for everyone in the process.

Conformity isn’t knowledge, and conformity to time just because we’ve always done that is something we should really stop and have a look at.

The Sad Story of Dr Karl Kruszelnicki

Posted: April 16, 2015 Filed under: Education, Opinion | Tags: advocacy, authenticity, blogging, Dr Karl, education, educational problem, ethics, feedback, higher education, Karl Kruszelnicki, reflection, teaching, teaching approaches, thinking

Dr Karl is a very familiar face and voice in Australia, for his role in communicating and demystifying science. He’s a polymath and skeptic, with a large number of degrees and a strong commitment to raising awareness on crucial issues such as climate change and puncturing misconceptions and myths. With 33 books and an extensive publishing career, it’s no surprise that he’s a widely respected figure in the area of scientific communication and he holds a fellowship at the University of Sydney on the strength of his demonstrated track record and commitment to science.

It is a very sad state of events that has led to this post, where we have to talk about how his decision to get involved in a government-supported advertising campaign has had some highly undesirable outcomes. The current government of Australia has had, being kind, a questionable commitment to science, not appointing a science minister for the first time in decades, undermining national initiatives in alternative and efficient energy, and having a great deal of resistance to issues such as the scientific consensus on climate change. However, as part of the responsibilities of government, the Intergenerational Report is produced at least every 5 years (this one was a wee bit late) and has the tricky job of crystal-balling the next 40 years to predict change and allow government policy to be shaped to handle that change. Having produced the report, the government looked for a respected science-focused speaker to front the advertising campaign and they recruited Dr Karl, who has recently been on the TV talking about some of the things in the report as part of a concerted effort to raise awareness of the report.

But there’s a problem.

And it’s a terrible problem because it means that Dr Karl didn’t follow some of the most basic requirements of science. From an ABC article on this:

“Dr Kruszelnicki said he was only able to read parts of the report (emphasis mine) before he agreed to the ads as the rest was under embargo.”

Ah. But that’s ok, if he agrees with the report as it’s been released, right? Uhh. About that, from a Fairfax piece on Tuesday.

“The man appearing on television screens across the country promoting the Abbott government’s Intergenerational Report – science broadcaster Karl Kruszelnicki – has hardened his stance against the document, describing it as “flawed” and admitting to concerns that it was “fiddled with” by the government.” (emphasis mine, again)

Dr Karl now has concerns over the independence of the report (he now sees it as a primarily political document) and much of its content. Ok, now we have a problem. He’s become the public face of a report that he didn’t read and that he took, very naïvely, on faith that the parts he hadn’t seen would (somewhat miraculously) reverse the well-known direction of the current government on climate change. But it’s not as if he just took money to front something he didn’t read, is it? Oh. He hasn’t been paid for it yet but this was a paid gig. Obviously, the first thing to do is to not take the money, if you’re unhappy with the report, right? Urm. From the SMH link above:

“What have I done wrong?” he told Fairfax Media. “As far as I’m concerned I was hired to bring the public’s attention to the report. People have heard about this one where they hadn’t heard about IGR one, two or three.”

But then public reaction on Twitter and social media started to rise and, last night, this was released on his Twitter account:

“I have decided to donate any moneys received from the IGR campaign to needy government schools. More to follow tomorrow. Dr Karl.”

This is a really sad day for science communication in Australia. As far as I know, the campaign is still running and, while you can easily find the criticism of the report if you go looking for it, a number of highly visible media sites are not showing anything that would lead readers to think that anyone had any issues with the report. So, while Dr Karl is regretting his involvement, it continues to persuade people around Australia that this friendly, trusted and respected face of science communication is associated with the report. Giving the money away (finally) to needy schools does not fix this. But let me answer Dr Karl’s question from above, “What have I done wrong?” With the deepest regret, I can tell you that what you have done wrong is:

- You presented a report with your endorsement without reading it,

- You arranged to get paid to do this,

- You consumed the goodwill and trust of the identity that you have formed over decades of positive scientific support to do this,

- You assisted the on-going anti-scientific endeavours of a government that has a bad track record in this area, and

- You did not immediately realise that what you had done was a complete failure of the implicit compact that you had established with the Australian public as a trusted voice of rationality, skepticism and science.

We all make mistakes and scientists do it all the time, on outdated, inaccurate, misreported and compromised data. But the moment we know that the data is wrong, we have to find the truth and come up with new approaches. But it is a serious breach of professional scientific ethics to present something where you cannot vouch for it through established soundness of reputation (a ‘good’ journal), trusted reference (an established scientific expert) or personal investigation (your own research). And that’s purely in papers or at conferences, where you are looking at relatively small issues of personal reputation or paper citations. To stand up on the national stage, for money, and to effectively say “This is Dr Karl approved”, when you have not read it, and then to hide behind a “but I was only presenting it” defence initially is to take an error of judgement and compound it into an offence that would potentially strip some scientists of their PhDs.

It’s great that Dr Karl is now giving the money away but the use of his image, with his complicity, has done its damage. There are people around Australia who will think that a report that is heavily politicised and has a great deal of questionable science in it is now scientific because Dr Karl stood there. We can’t undo that easily.

I don’t know what happens now. Dr Karl is a broadcaster but he does have a University appointment. I’ll be interested to see if his own University convenes an ethics and professional practice case against him. Maybe it will fade away, in time. Dr Karl has done so much for science in Australia that I hope that he has a good future after this but he has to come to terms with the fact that he has made the most serious error a scientist can make and I think he has a great deal of thinking to do as to what he wants to do next. Taking money from a till and then giving it back when you get caught doesn’t change the fact of what you did to get it – neither does agreeing to take cash to shill a report that you haven’t read.

But maybe I am being too harsh? The fellowship Dr Karl holds is named after Dr Julius Sumner Miller, a highly respected scientific communicator, who then used his high profile to sell chocolate in his final years (much to my Grandfather’s horror). Because nothing says scientific integrity like flogging chocolate for hard cash. Perhaps the fellowship has some sort of commercialising curse?

Actually, I don’t think I’m being too harsh. I wish him the best but I think he’s put himself into the most awful situation. I know what I would do but, then, I hope I would have seen that, just possibly, the least scientifically focused government in Australia might not be able to be trusted with an unseen report. If he had seen the report and then they had changed it all, that’s a scandal and he is a hero of scientific exposure. But that’s not the case. It’s a terribly sad day and a sad story for someone who, up until now, I had the highest respect for.

I want you to be sad. I want you to be angry. I want you to understand.

Posted: March 31, 2015 Filed under: Education, Opinion | Tags: advocacy, authenticity, Bihar, blogging, cheating, community, education, educational problem, educational research, ethics, feedback, higher education, Nick Falkner, plagiarism, principles of design, reflection, resources, student perspective, teaching, teaching approaches, thinking

Nick Falkner is an Australian academic with a pretty interesting career path. He is also a culture sponge and, given that he’s (very happily) never going to be famous enough for a magazine style interview, he interviews himself on one of the more confronting images to come across the wires recently. For clarity, he’s the Interviewer (I) when he’s asking the questions.

Interviewer: As you said, “I want you to be sad. I want you to be angry. I want you to understand.” I’ve looked at the picture and I can see a lot of people who are being associated with a mass cheating scandal in the Indian state of Bihar. This appears to be a systematic problem, especially as even more cheating has been exposed in the testing for the police force! I think most people would agree that it’s certainly a sad state of affairs and there are a lot of people I’ve heard speaking who are angry about this level of cheating – does this mean I understand? Basically, cheating is wrong?

Nick: No. What’s saddening me is that most of the reaction I’ve seen to this picture is lacking context, lacking compassion and, worse, perpetrating some of the worst victim blaming I’ve ever seen. I’m angry because people still don’t get that the system in place in Bihar, and wherever else we put systems like this, is going to lead to behaviour like this out of love and a desire for children to have opportunity, rather than some grand criminal scheme of petty advancement.

Interviewer: Well, ok, that’s a pretty strong set of statements. Does this mean that you think cheating is ok?

Nick: (laughs) Well, we’ve got the most usual response out of the way. No, I don’t support “cheating” in any educational activity because it means that the student is bypassing the learning design and, if we’ve done our job, this will be to their detriment. However, I also strongly believe that some approaches to large-scale education naturally lead to a range of behaviours where external factors can affect the perceived educational benefit to the student. In other words, I don’t want students to cheat but I know that we sometimes set things up so that cheating becomes a rational response and, in some cases, the only difference between a legitimate advantage and “cheating” is determined by privilege, access to funds and precedent.

Interviewer: Those are big claims. And you know what that means…

Nick: You want evidence! Ok. Let’s start with some context. Bihar is the third-largest state in India by population, with over 100 million people, the highest density of population in India, the largest number of people under 25 (nearly 60%), a heavily rural population (~85%) and a literacy rate around 64%. Bihar is growing very quickly but has put major work into its educational systems. From 2001 to 2011, literacy jumped from 48 to 64%, and literacy among women alone rose by about 20 percentage points.

If we took India out of the measurement, Bihar would be in the top 11 countries in the world by population. And it’s accelerating in growth. At the same time, Bihar has lagged behind other Indian states in socio-economic development (for a range of reasons – it’s very … complicated). Historically, Bihar has been a seat of learning but recent actions, including losing an engineering college in 2000 due to boundary re-alignment, mean that they are rebuilding themselves right now. At the same time, Bihar has a relatively low level of industrialisation by Indian standards although it’s redefining itself away from agriculture to services and industry at the moment, with some good economic growth. There are some really interesting projects on the horizon – the Indian Media Hub, IT centres and so on – which may bring a lot more money into the region.

Interviewer: Ok, Bihar is big, relatively poor … and?

Nick: And that’s the point. Bihar is full of people, not all of whom are literate, and many of whom still live in Indian agricultural conditions. The future is brightening for Bihar but if you want to be able to take advantage of that, then you’re going to have to be able to get into the educational system in the first place. That exam that the parents are “helping” their children with is one that is going to have an almost incomprehensibly large impact on their future…

Interviewer: Their future employment?

Nick: Not just that! This will have an impact on whether they live in a house with 24 hour power. On whether they will have an inside toilet. On whether they will be able to afford good medicine when they or their family get sick. On whether they will be able to support their parents when they get old. On how good the water they drink is. As well, yes, it will help them to get into a University system where, unfortunately, existing corruption means that money can smooth a path where actual ability has led to a rockier road. The article I’ve just linked to mentions pro-cheating rallies in Uttar Pradesh in the early 90s but we’ve seen similar arguments coming from areas where rote learning, corruption and mass learning systems are thrown together and the students become grist to a very hard stone mill. And, by similar arguments, I mean pro-cheating riots in China in 2013. Student assessment on the massive scale. Rote learning. “Perfect answers” corresponding to demonstrating knowledge. Bribery and corruption in some areas. Angry parents because they know that their children are being disadvantaged while everyone else is cheating. Same problem. Same response.

Interviewer: Well, it’s pretty sad that those countries…

Nick: I’m going to stop you there. Every time that we’ve forced students to resort to rote learning and “perfect answer” memorisation to achieve good outcomes, we’ve constructed an environment where carrying in notes, or having someone read you answers over a wireless link, suddenly becomes a way to successfully reach that outcome. The fact that this is widely used in the two countries that, between them, have more than a third of the world’s population is a reflection of the problem of education at scale. Are you volunteering to sit down and read the 50 million free-form student essays that are produced every year in China under a fairer system? The US approach to standardised testing isn’t any more flexible. Here’s a great article on what’s wrong with the US approach because it identifies that these tests are good for measuring conformity to the test and its protocol, not the quality of any education received or the student’s actual abilities. But before we get too carried away about which countries cheat most, here are some American high school students sharing answers on Twitter.

Every time someone talks about the origin of a student, rather than the system that a student was trained under, we start to drift towards a racist mode of thinking that doesn’t help. Similar large-scale, unimaginative, conform-or-perish tests that you have to specifically study for across India, China and the US. What do we see? No real measurement of achievement or aptitude. Cheating. But let’s go back to India because the scale of the number of people involved really makes the high stakes nature of these exams even more obvious. Blow your SATs or your GREs and you can still do OK, if possibly not really well, in the US. In India… let’s have a look.

State Bank of India advertised some entry-level vacancies back in 2013. They wanted 1,500 people. 17 million applied. That’s roughly the adult population of Australia applying for some menial work at the bank. You’ve got people who are desperate to work, desperate to do something with their lives. We often think of cheats as being lazy or deceitful when it’s quite possible to construct a society so that cheating is part of a wider spectrum of behaviour that helps you achieve your goals. Performing well in exams in India and China is a matter of survival when you’re talking about those kinds of odds, not whether you get a great or an ok job.

Interviewer: You’d use a similar approach to discuss the cheating on the police exam?

Nick: Yes. It’s still something that shouldn’t be happening but the police force is a career and, rather sadly, can also be a lucrative source of alternative income in some countries. It makes sense that this is also something that people consider to be very, very high stakes. I’d put money on similar things happening in countries where getting a driving licence is a high stakes activity. (Oh, good, I just won money.)

Interviewer: So why do you want us to be sad?

Nick: I don’t actually want people to be sad, I’d much prefer it if we didn’t need to have this discussion. But, in a nutshell, every parent in that picture is actually demonstrating their love and support for their children and family. That’s what the human tragedy is here. These Biharis probably don’t have the connections or money to bypass the usual constraints so the best hope that their kids have is for their parents to risk their lives climbing walls to slip them notes.

I mean, everyone loves their kids. And, really, even those of us without children would be stupid not to realise that all children are our children in many ways, because they are the future. I know a lot of parents who saw this picture and they didn’t judge the people on the walls because they could see themselves there once they thought about it.

But it’s tragic. When the best thing you can do for your child is to help them cheat on an exam that controls their future? How sad is that?

Interviewer: Do you need us to be angry? Reading back, it sounds like you have enough anger for all of us.

Nick: I’m angry because we keep putting these systems in place despite knowing that they’re rubbish. Rousseau knew it hundreds of years ago. Dewey knew it in the 1930s. We keep pretending that exams like this sort people on merit when all of our data tells us that the best indicator of performance is the socioeconomic status of the parents, rather than which school they go to. But, of course, choosing a school is a kind of “legal” cheating anyway.

Interviewer: Ok, now there’s a controversial claim.

Nick: Not really. Studies show us that students at private schools tend to get higher University entry marks, which is the gateway to getting into courses and also means that they’ve completed their studies. Of course, the public school students who do get in go on to get higher GPAs… (This article contains the data.)

Interviewer: So it all evens out?

Nick: (laughs) No, but I have heard people say that. Basically, sending your kids to a “better” school, one of the private schools or one of the high-performing publics, especially those that offer International Baccalaureate, is not going to hurt your child’s chances of getting a good Tertiary entry mark. But, of course, the amount of money required to go to a private school is not… small… and the districting of public schools means that you have to be in the catchment to get one of these more desirable schools. And, strangely enough, once you factor in the socio-economic factors and outlook for a school district, it’s amazing how often the high-performing schools map into higher SEF areas. Not all of them, and there are some magnificent efforts in innovative and aggressive intervention in South Australia alone, but even these schools have limited spaces and depend upon the primary feeder schools. Which school you go to matters. It shouldn’t. But it does.

So, you could bribe someone to make the exam easier or you could pay up to AUD $24,160 in school fees every year to put your child into a better environment. You could go to your local public school or, if you can manage the difficulty and cost of upheaval, you could relocate to a new suburb to get into a “better” public school. Is that fair to the people that your child is competing against to get into limited places at University if they can’t afford that much or can’t move? That $24,000 figure is from this year’s fees for one of South Australia’s most highly respected private schools. That figure is 10 times the nominal median Indian income and roughly the same as an experienced University graduate would make in Bihar each year. In Australia, the 2013 median household income was about twice that figure, before tax. So you can probably estimate how many Australian families could afford to put one, or more, children through that kind of schooling for 5-12 years and it’s not a big number.

The Biharis in the picture don’t have a better option. They don’t have the money to bribe or the ability to move. Do you know how I know? Because they are hanging precariously from a wall trying to help their children copy out a piece of information in perfect form in order to get an arbitrary score that could add 20 years to their lifespan and save their own children from dying of cholera or being poisoned by contaminated water.

Some countries, incredibly successful education stories like Finland (seriously, just Google “Finland Educational System” and prepare to have your mind blown), take the approach that every school should be excellent, every student is valuable, every teacher is a precious resource and worthy of respect and investment and, for me, these approaches are the only way to actually produce a fair system. Excellence in education that is only available to the few makes everyone corrupt, to a greater or lesser degree, whether they realise it or not. So I’m angry because we know exactly what happens with high stakes exams like this and I want everyone to be angry because we are making ourselves do some really awful things to ourselves by constantly bending to conform to systems like this. But I want people to be angry because the parents in the picture have a choice of “doing the right thing” and watching their children suffer, or “doing the wrong thing” and getting pilloried by a large and judgemental privileged group on the Internet. You love your kids. They love their kids. We should all be angry that these people are having to scramble for crumbs at such incredibly high stakes.

But demanding that the Indian government do something is hypocritical while we use similar systems and we have the ability to let money and mobility influence the outcome for students at the expense of other students. Go and ask Finland what they do, because they’re happy to tell you how they fixed things but people don’t seem to want to actually do most of the things that they have done.

Interviewer: We’ve been talking for a while so we had better wrap up. What do you want people to understand?

Nick: What I always want people to understand – I want them to understand “why”. I want them to be able to think about and discuss why these images from a collapsing educational system are so sad. I want them to understand why our system is really no better. I want them to think about why struggling students do careless, thoughtless and, by our standards, unethical things when they see all the ways that other people are sliding by in the system or we don’t go to the trouble to construct assessment that actually rewards creative and innovative approaches.

I want people to understand that educational systems can be hard to get right but it is both possible and essential. It takes investment, it takes innovation, it takes support, it takes recognition and it takes respect. Why aren’t we doing this? Delaying investment will only make the problem harder!

Really, I want people to understand that we would have to do a very large amount of house cleaning before we could have the audacity to criticise the people in that photo and, even then, it would be an action lacking in decency and empathy.

We have never seen enough of a level playing field to make a meritocratic argument work because of ingrained privilege and disparity in opportunity.

Interviewer: So, basically, everything most people think about how education and exams work is wrong? There are examples of a fairer system but most of us never see it?

Nick: Pretty much. But I have hope. I don’t want people to stay sad or angry, I want those to ignite the next stages of action. Understanding, passion and action can change the world.

Interviewer: And that’s all we have time for. Thank you, Nick Falkner!

On being the right choice.

Posted: March 29, 2015 Filed under: Education, Opinion | Tags: advocacy, authenticity, blogging, Clarion West, community, education, ethics, first choice, higher education, in the student's head, learning, reflection, teaching, teaching approaches, thinking, work/life balance

I write fiction in my (increasing amounts of) free time and I submit my short stories to a variety of magazines, all of whom have rejected me recently. I also applied to take part in a six-week writing workshop called Clarion West this year, because this year’s instructors were too good not to apply! I also got turned down for Clarion West.

Only one of these actually stung and it was the one where, rather than thinking hey, that story wasn’t right for that venue, I had to accept that my writing hadn’t been up to the level of the 16 very talented writers who did get in. I’m an academic so being rejected from conferences is part of my job (as is being told that I’m wrong and, occasionally, told that I’m right but in a way that makes it sound like I stumbled over it).

And there is a difference because one of these is about the story itself and the other is about my writing, although many will recognise that this is a tenuous and artificial separation, probably to keep my self-image up. But this is a setback and I haven’t written much (anything) since the last rejection but that’s ok, I’ll start writing again and I’ll work on it and, maybe, one day I’ll get something published and people will like it and that will be that dealt with.

It always stings, at least a little, to be runner-up or not selected when you had your heart set on something. But it’s interesting how poisonous it can be to you and the people around you when you try and push through a situation where you are not the first choice, yet you end up with the role anyway.

For the next few paragraphs, I’m talking about selecting what to do, assuming that you have the choice and freedom to make that choice. For those who are struggling to stay alive, choice is often not an option. I understand that, so please read on knowing that I’m talking about making the best of the situations where your own choices can be used against you.

There’s a position going at my Uni, it doesn’t matter what, and I was really quite interested in it, although I knew that people were really looking around outside the Uni for someone to fill it. It’s been a while and it hasn’t been filled so, when the opportunity came up, I asked about it and noted my interest.

But then, I got a follow-up e-mail which said that their first priority was still an external candidate and that they were pushing out the application period even further to try and do that.

Now, here’s the thing. This means that they don’t want me to do it and, so you know, that is absolutely fine with me. I know what I can do and I’m very happy with that but I’m not someone with a lot of external Uni experience. (Soldier, winemaker, sysadmin, international man of mystery? Yes. Other Unis? Not a great deal.) So I thanked them for the info, wished them luck and withdrew my interest. I really want them to find someone good, and quickly, but they know what they want and I don’t want to hang around, to be kicked into action when no-one better comes along.

I’m good enough at what I do to be a first choice and I need to remember that. All the time.

It’s really important to realise when you’d be doing a job where you and the person who appoints you know that you are “second-best”. You’re only in the position because they couldn’t find who they wanted. It’s corrosive to the spirit and it can produce a treacherous working relationship if you are the person that was “settled” on. The role was defined for a certain someone – that’s what the person in charge wants and that is what they are going to be thinking the whole time someone is in that role. How can you measure up to the standards of a better person who is never around to make mistakes? How much will that wear you down as a person?

As academics, and for many professionals, there are so many things that we can do that it doesn’t make much sense to take second-hand opportunities, after the A players have chosen not to show up. If you’re doing your job well and you go for something where that’s relevant, you should be someone’s first choice, or you should be in the first sweep. If not, then it’s not something that they actually need you for. You need to save your time and resources for those things where people actually want you – not a warm body that you sort of approximate. You’re not at the top level yet? Then it’s something to aim for but you won’t be able to do the best projects and undertake the best tasks to get you into that position, if you’re always standing in and doing the clean-up work because you’re “always there”.

I love my friends and family because they don’t want a Nick-ish person in their life, they want me. When I’m up, when I’m down, when I’m on, when I’m off – they want me. And that’s the way to bolster a strong self-image and make sure that you understand how important you can be.

If you keep doing stuff where you could be anyone, you won’t have the time to find, pursue or accept those things that really need you and this is going to wear away at you. Years ago, I stopped responding when someone sent out an e-mail that said “Can anyone do this?” because I was always one of the people who responded but this never turned into specific requests to me. Since I stopped doing it, people have to contact me and they value me far more realistically because of it.

I don’t believe I’m on the Clarion West reserve list (no doubt they would have told me), which is great because I wouldn’t go now. If my writing wasn’t good enough then, someone getting sick doesn’t magically make my writing better and, in the back of my head and in the back of the readers’, we’ll all know that I’m not up to standard. And I know enough about cognitive biases to know that it would get in the way of the whole exercise.

Never give up anything out of pique, especially where it’s not your essence that is being evaluated, but feel free to politely say No to things where they’ve made it clear that they don’t really want you but they’re comfortable with settling.

If you’re doing things well, no-one should be settling for you – you should always be in that first choice.

Anything else? It will drive you crazy and wear away your soul. Trust me on this.

Large Scale Authenticity: What I Learned About MOOCs from Reality Television

Posted: March 8, 2015 Filed under: Education, Opinion | Tags: authenticity, blogging, collaboration, community, design, education, ethics, feedback, games, higher education, MKR, moocs, My Kitchen Rules, principles of design, reflection, students, teaching, teaching approaches, thinking

The development of social media platforms has allowed us to exchange information and, well, rubbish very easily. Whether it’s the discussion component of a learning management system, Twitter, Facebook, Tumblr, Snapchat or whatever will be the next big thing, we can now chat to each other in real time very, very easily.

One of the problems with any on-line course is trying to maintain a community across people who are not in the same timezone, country or context. What we’d really like is for the community communication to come from the students, with guidance and scaffolding from the lecturing staff, but sometimes there’s priming, leading and… prodding. These “other” messages have to be carefully crafted and they have to connect with the students or they risk being worse than no message at all. As an example, I signed up for an on-line course and then wasn’t able to do much in the first week. I was sitting down to work on it over the weekend when a mail message came in from the organisers on the community board congratulating me on my excellent progress on things I hadn’t done. (This wasn’t isolated. The next year, despite not having signed up, the same course sent me even more congratulations on totally non-existent progress.) This sends the usual clear messages that we expect from false praise and inauthentic communication: the student doesn’t believe that you know them, they don’t feel part of an authentic community and they may disengage. We have, very effectively, sabotaged everything that we actually wanted to build.

Let’s change focus. For a while, I was watching a show called “My Kitchen Rules” on local television. It pretends to be about cooking (with competitive scoring) but it’s really about flogging products from a certain supermarket while delivering false drama in the presence of dangerously orange chefs. An engineered activity to ensure that you replace an authentic experience with consumerism and commodities? Paging Guy Debord on the Situationist courtesy phone: we have a Spectacle in progress. What makes the show interesting is the associated Twitter feed, where large numbers of people drop in on the #mkr to talk about the food, discuss the false drama, exchange jokes and develop community memes, such as sharing pet pictures with each other over the (many) ad breaks. It’s a community. Not everyone is there for the same reasons: some are there to be rude about people, some are actually there for the cooking (??) and some are… confused. But the involvement in the conversation, interplay and development of a shared reality is very real.

And this would all be great except for one thing: Australia is a big country and spans a lot of timezones. My Kitchen Rules is broadcast at 7:30pm, starting in Melbourne, Canberra, Tasmania and Sydney, then 30 minutes later in Adelaide, then 30 minutes later again in Queensland (they don’t do daylight savings), then later again for Perth. So now we have four different time groups to manage, all watching the same show.

But the Twitter feed starts on the first time point, Adelaide picks up discussions from the middle of the show as they’re starting and then gets discussions on scores as the first half completes for them… and this is repeated for Queensland viewers and then for Perth. Now, in the community itself, people go on and off the feed as their version of the show starts and stops and, personally, I don’t find score discussions very distracting because I’m far more interested in the Situation being created in the Twitter stream.

Enter the “false tweets” of the official MKR Social Media team who ask questions that only make sense in the leading timezone. Suddenly, everyone who is not quite at the same point is then reminded that we are not in the same place. What does everyone think of the scores? I don’t know, we haven’t seen it yet. What’s worse are the relatively lame questions that are being asked in the middle of an actual discussion that smell of sponsorship involvement or an attempt to produce the small number of “acceptable” tweets that are then shared back on the TV screen for non-connected viewers. That’s another thing – everyone outside of the first timezone has very little chance of getting their Tweet displayed. Imagine if you ran a global MOOC where only the work of the students in San Francisco got put up as an example of good work!

This is a great example of an attempt to communicate that fails dismally because it doesn’t take into account how people are using the communications channel, isn’t inclusive (dismally so) and constantly reminds people who don’t live in a certain area that they really aren’t being considered by the program’s producers.

You know what would fix it? Putting it on at the same time everywhere but that, of course, is tricky because of the way that advertising is sold and also because it would force poor Perth to start watching dinner television just after work!

But this is a very important warning of what happens when you don’t think about how you’ve combined the elements of your environment. It’s difficult to do properly but it’s terrible when done badly. And I don’t need to go and enrol in a course to show you this – I can just watch a rather silly cooking show.

Teleportation and the Student: Impossibility As A Lesson Plan

Posted: March 7, 2015 Filed under: Education, Opinion | Tags: authenticity, blogging, collaboration, community, design, education, higher education, human body, in the student's head, learning, lossy compression, science fiction, Sean WIlliams, Star Trek, students, teaching, teaching approaches, teleportation, teleporters, thinking, tools Leave a comment

Tricking a crew-mate into looking at their shoe during a transport was a common prank in the 23rd Century.

Teleporters, in one form or another, have been around in Science Fiction for a while now. Most people’s introduction was probably via one of the Star Treks (the transporter) which is amusing, as it was a cost-cutting mechanism to make it easy to get from one point in the script to another. Is teleportation actually possible at the human scale? Sadly, the answer is probably not although we can do some cool stuff at the very, very small scale. (You can read about the issues in teleportation here and here, an actual USAF study.) But just because something isn’t possible doesn’t mean that we can’t get some interesting use out of it. I’m going to talk through several ways that I could use teleportation to drive discussion and understanding in a computing course but a lot of this can be used in lots of places. I’ve taken a lot of shortcuts here and used some very high level analogies – but you get the idea.

- Data Transfer

The first thing to realise is that the number of atoms in the human body is huge (one octillion, 1E27, roughly, which is a thousand million million million million) but the amount of information stored in the human body is much, much larger than that again. If we wanted to get everything, we’re looking at transferring a quattuordecillion bits (1E45), and that’s about a million million million times the number of atoms in the body. All of this, however, ignores the state of all the bacteria and associated hosted entities that live in the human body and the fact that the number of neural connections in the brain appears to be larger than we think. There are roughly 9 non-human cells associated with your body (bacteria et al) for every human cell.

Put simply, the easiest way to get the information in a human body to move around is to leave it in a human body. But this has always been true of networks! In the early days, it was more efficient to mail a CD than to use the (at the time) slow download speeds of the Internet and home connections. (Actually, it still is easier to give someone a CD because you’ve just transferred 700MB in one second – that’s 5.6 Gb/s and is just faster than any network you are likely to have in your house now.)
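If you want to check the CD claim for yourself, it is just bytes-to-bits arithmetic. Here is a rough sketch in Python; the one-second handover is the figure from the paragraph above and the function name is my own invention.

```python
def effective_bits_per_second(payload_bytes: float, seconds: float) -> float:
    """Throughput achieved by moving payload_bytes in the given time, in bits/s."""
    return payload_bytes * 8 / seconds


# A 700 MB CD handed across a desk in one second (both figures from the text).
cd_handover = effective_bits_per_second(700e6, 1.0)
print(f"{cd_handover / 1e9:.1f} Gb/s")  # prints 5.6 Gb/s
```

Change the handover time to a day in the post and the number drops a long way, but the payload can also grow a long way, which is why carrying storage around keeps being competitive.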

Right now, the fastest network in the world clocks in at 255 Tbps and that’s 255,000,000,000,000 bits in a second. (Notice that’s over a fixed physical optical fibre, not through the air, we’ll get to that.) So to send that quattuordecillion bits, it would take (quickly dividing 1E45 by 255E12) oh…

about 100,000,000,000,000,000,000,000

years. Um.
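That division is easy to reproduce. This is a small sketch, assuming a 365.25-day year (my choice, the post doesn't say) and using the 1E45 bits and 255 Tbps figures from above; the names are made up for illustration.

```python
SECONDS_PER_YEAR = 365.25 * 24 * 60 * 60  # assumption: Julian year


def transfer_years(bits: float, bits_per_second: float) -> float:
    """Years needed to push `bits` through a link running at `bits_per_second`."""
    return bits / bits_per_second / SECONDS_PER_YEAR


FULL_BODY_BITS = 1e45    # "everything", from the text
FASTEST_LINK_BPS = 255e12  # 255 Tbps, from the text

years = transfer_years(FULL_BODY_BITS, FASTEST_LINK_BPS)
print(f"{years:.2e} years")  # prints 1.24e+23 years
```

So "about 100,000,000,000,000,000,000,000" is, if anything, rounding down.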

- Information Redundancy and Compression

The good news is that we probably don’t have to send all of that information because, apart from anything else, it appears that a large amount of human DNA doesn’t seem to do very much and there’s a lot of repeated information. Because we also know that humans have similar chromosomes and things like that, we can probably compress a lot of this information and send a compressed version of the information.

The problem is that compression takes time and we have to compress things in the right way. Sadly, human DNA by itself doesn’t compress well as a string of “GATTACAGAGA”, for reasons I won’t go into but you can look here if you like. So we have to try and send a shortcut that means “Use this chromosome here” but then, we have to send a lot of things like “where is this thing and where should it be” so we’re still sending a lot.

There are also two types of compression: lossless (where we want to keep everything) and lossy (where we lose bits and we will lose more on each regeneration). You can work out if it’s worth doing by looking at the smallest number of bits to encode what you’re after. If you’ve ever seen a really bad Internet image with strange lines around the high contrast bits, you’re seeing lossy compression artefacts. You probably don’t want that in your genome. However, the amount of compression you do depends on the size of the thing you’re trying to compress so now you have to work out if the time to transmit everything is still worse than the time taken to compress things and then send the shorter version.
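To get a feel for lossless compression, here is a toy experiment using Python's standard `zlib` module. The two "sequences" are invented test data (one endlessly repeated motif, one scrambled string of bases), not real genomic data, so treat the numbers as an illustration of the idea only.

```python
import random
import zlib

random.seed(0)  # fixed seed so the toy data is reproducible

N_BASES = 100_000

# Toy data, not a real genome: one highly repetitive, one with no pattern.
repetitive = ("GATTACA" * (N_BASES // 7 + 1))[:N_BASES].encode("ascii")
scrambled = "".join(random.choice("ACGT") for _ in range(N_BASES)).encode("ascii")

for label, data in (("repetitive", repetitive), ("scrambled", scrambled)):
    packed = zlib.compress(data, 9)
    # Lossless means we get back exactly what we put in.
    assert zlib.decompress(packed) == data
    print(f"{label}: {len(data)} bytes -> {len(packed)} bytes")

# With four equally likely letters, nothing lossless can average fewer
# than 2 bits per base, however clever the compressor is.
print(f"floor for scrambled data: {N_BASES * 2 // 8} bytes")
```

The repetitive string shrinks to almost nothing, while the scrambled one can't go below that 2-bits-per-base floor. That floor is the "smallest number of bits to encode what you're after" from the paragraph above.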

So let’s be generous and say that we can get, through amazing compression tricks, some sort of human pattern to build upon and the like, our transferred data requirement down to the number of atoms in the body – 1E27. That’s only going to take…

124,267

years. Um, again. Let’s assume that we want the transfer to take at most 60 minutes. Using the fastest network in the world right now, we’re going to have to get our data footprint down to 900,000,000,000,000,000 bits. Whew, that’s some serious compression and, even on computers that probably won’t be ready until 2018, it would have taken about 3 million million million years to do the compression. But let’s ignore that. Because now our real problems are starting…
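Both of those numbers fall out of the same link speed. A quick sketch, again assuming a 365.25-day year and with variable names of my own choosing:

```python
SECONDS_PER_YEAR = 365.25 * 24 * 60 * 60  # assumption: Julian year
LINK_BPS = 255e12        # 255 Tbps, from the text
COMPRESSED_BITS = 1e27   # one bit per atom, the "generous" target

# How long does the generous version take?
years = COMPRESSED_BITS / LINK_BPS / SECONDS_PER_YEAR
print(f"{years:,.0f} years")  # prints 124,267 years

# How many bits fit into a 60 minute transfer window?
budget_bits = LINK_BPS * 60 * 60
print(f"{budget_bits:.2e} bits")  # prints 9.18e+17 bits

# How much further would we have to squeeze the 1E27 bits?
required_ratio = COMPRESSED_BITS / budget_bits
print(f"about {required_ratio:.2e} to 1")
```

That last figure is the uncomfortable one: roughly a billion to one, on top of the compression we already waved our hands at.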

- Signals Ain’t Simple and Networks Ain’t Wires.

In the earlier days of the telephone, the movement of the diaphragm in the mouthpiece generated electricity that was sent down the wires, amplified along the way, and then finally used to make movement in the earpiece that you interpreted as sound. Changes in the electric values weren’t limited to strict values of on or off and, when the signal got interfered with, all sorts of weird things happened. Remember analog television and all those shadows, snow and fuzzy images? Digital encoding takes the measurements of the analog world and turns them into a set of 0s and 1s. You send 0s and 1s (binary) and this is turned back into something recognisable (or used appropriately) at the other end. So now we get amazingly clear television until too much of the signal is lost and then we get nothing. But, up until then, progress!

But we don’t send giant long streams across a long set of wires, we send information in small packets that contain some data, some information on where to send it and it goes through an array of active electronic devices that take your message from one place to another. The problem is that those packet headers add overhead, just like trying to mail a book with individual pages in addressed envelopes in the postal service would. It takes time to get something onto the network and it also adds more bits! Argh! More bits! But it can’t get any worse can it?
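To put a number on the envelope problem, here is a back-of-the-envelope (sorry) sketch. I'm assuming 1500-byte packets that each carry 40 bytes of header, which is roughly a minimal IPv4 plus TCP header on Ethernet; real networks vary, and the function name is just for illustration.

```python
def bits_on_the_wire(payload_bits: int, packet_bytes: int = 1500,
                     header_bytes: int = 40) -> int:
    """Total bits sent once every packet has its header attached.

    Assumes fixed-size packets; the defaults are an illustrative guess
    at Ethernet-sized packets with minimal IPv4 + TCP headers.
    """
    payload_per_packet = (packet_bytes - header_bytes) * 8
    packets = -(-payload_bits // payload_per_packet)  # integer ceiling division
    return payload_bits + packets * header_bytes * 8


payload = 900_000_000_000_000_000  # the 60 minute budget from earlier
total = bits_on_the_wire(payload)
print(f"overhead: {(total - payload) / payload:.2%}")  # prints overhead: 2.74%
```

A few percent doesn't sound like much until you remember what it's a few percent of.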

- Networks Aren’t Perfectly Reliable

If you’ve ever had variable performance on your home WiFi, you’ll understand that transmitting things over the air isn’t 100% reliable. There are two things that we have to think about in terms of getting stuff through the network: flow control (where we stop our machine from talking to other things too quickly) and congestion control (where we try to manage the limited network resources so that everyone gets a share). We’ve already got all of these packets that should be able to be directed to the right location but, well, things can get mangled in transmission (especially over the air) and sometimes things have to be thrown away because the network is so congested that packets get dropped to try and keep overall network throughput up. (Interference and absorption are possible even if we don’t use wireless technology.)

Oh, no. It’s yet more data to send. And what’s worse is that a loss close to the destination will require you to send all of that information from your end again. Suddenly that Earth-Mars teleporter isn’t looking like such a great idea, is it, what with the 8-16 minute delay every time a cosmic ray interferes with your network transmission in space. And if you’re trying to send from a wireless terminal in a city? Forget it – the WiFi network is so saturated in many built-up areas that your error rates are going to be huge. For a web page, eh, it will take a while. For a Skype call, it will get choppy. For a human information sequence… not good enough.
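How much more data? A back-of-the-envelope Python sketch, under some loud assumptions: every hop drops a packet independently with the same probability, and the sender simply resends from its own end until one copy gets all the way through. Under those assumptions the number of sends follows a geometric distribution, so the average is one divided by the chance of a single attempt succeeding. The function name `expected_sends` is made up for this example.

```python
def expected_sends(loss_per_hop, hops):
    """Average number of end-to-end sends needed for one packet to arrive.

    Assumes independent, identical loss on every hop and retransmission
    from the original sender (no recovery inside the network).
    """
    if not 0.0 <= loss_per_hop < 1.0:
        raise ValueError("loss_per_hop must be in [0, 1)")
    # One attempt succeeds only if the packet survives every hop.
    success = (1.0 - loss_per_hop) ** hops
    # Mean of a geometric distribution with success probability `success`.
    return 1.0 / success

for loss in (0.01, 0.05, 0.20):
    print(f"{loss:.0%} loss per hop over 10 hops: "
          f"{expected_sends(loss, 10):.2f} sends per packet on average")
```

Each of those extra sends also costs at least one more trip across the link, which is where the long delays really start to hurt.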

Could this get any worse?

- The Square Dance of Ordering and Re-ordering

Well, yes. Sometimes things don’t just get lost but they show up at weird times and in weird orders. Now, for some things, like a web page, this doesn’t matter because your computer can wait until it gets all of the information and then show you the page. But, for telephone calls, it does matter because losing a second of call from a minute ago won’t make any sense if it shows up now and you’re trying to keep it real time.

For teleporters there’s a weird problem in that you have to start asking questions like “how much of a human is contained in that packet”? Do you actually want to have the possibility of duplicate messages in the network or have you accidentally created extra humans? Without duplication possibilities, your error recovery rate will plummet, unless you build in a lot more error correction, which adds computation time and, sorry, increases the number of bits to send yet again. This is a core consideration of any distributed system, where we have to think about how many copies of something we need to send to ensure that we get one – or whether we care if we have more than one.
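The usual trick for coping with out-of-order and duplicate packets is to number them and let the receiver sort it out. This is a minimal Python sketch of that idea, not how any particular protocol does it. The names (`reassemble`, the `(seq, data)` tuples) are invented for illustration.

```python
def reassemble(packets, total):
    """Rebuild a message from (seq, data) pairs in arrival order.

    Returns (message_or_None, missing_sequence_numbers, duplicate_count).
    `total` is the number of packets the sender said to expect.
    """
    buffer = {}
    duplicates = 0
    for seq, data in packets:
        if seq in buffer:
            # Already have this piece: count it and throw the copy away.
            duplicates += 1
            continue
        buffer[seq] = data
    missing = [seq for seq in range(total) if seq not in buffer]
    if missing:
        # Incomplete: the receiver would ask for these to be resent.
        return None, missing, duplicates
    message = b"".join(buffer[seq] for seq in range(total))
    return message, missing, duplicates

# Packets arrive out of order, and packet 2 shows up twice.
arrived = [(2, b"por"), (0, b"tel"), (1, b"e"), (2, b"por"), (3, b"t")]
print(reassemble(arrived, total=4))
```

For a web page, discarding the duplicate is free. For a teleporter, that `continue` line is doing some very heavy ethical lifting.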

PLEASE LET THERE BE NO MORE!

- Oh, You Wanted Security, Integrity and Authenticity, Did You?

I’m not sure I’d want people reading my genome or mind state as it traversed across the Internet and, while we could pretend that we have a super-secret private network, security through obscurity (hiding our network or data) really doesn’t work. So, sorry to say, we’re going to have to encrypt our data to make sure that no-one else can read it but we also have to carry out integrity tests to make sure that what we sent is what we thought we sent – we don’t want to send a NICK packet and end up with a MICE packet, to give a simplistic example. And this is going to have to be sent down the same network as before so we’re putting more data bits down that poor beleaguered network.
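As a small illustration of an integrity test, here is a Python sketch using a keyed hash (HMAC with SHA-256) from the standard library. It assumes the sender and receiver already share a secret key, which is a big assumption in its own right, and it only covers integrity and authenticity: nothing here is encrypted. The key and the helper names `tag` and `verify` are made up for this example.

```python
import hashlib
import hmac

def tag(key, payload):
    """Compute a 32-byte HMAC-SHA256 tag to send alongside the payload."""
    return hmac.new(key, payload, hashlib.sha256).digest()

def verify(key, payload, received_tag):
    """Check that the payload matches the tag it arrived with."""
    # compare_digest avoids leaking information through timing differences.
    return hmac.compare_digest(tag(key, payload), received_tag)

key = b"shared-secret-for-illustration-only"  # hypothetical key
sent_tag = tag(key, b"NICK")

print(verify(key, b"NICK", sent_tag))  # payload arrived intact
print(verify(key, b"MICE", sent_tag))  # payload was mangled on the way
```

Note the cost: every packet now carries an extra 32 bytes of tag, which is exactly the "more data bits" problem again.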

Oh, and did I mention that encryption will also cost you more computational overhead? Not to mention the question of how we undertake this security because we have a basic requirement to protect all of this biodata in our system forever and eliminate the possibility that someone could ever reproduce a copy of the data – because that would produce another person. (Ignore the fact that storing this much data is crazy, anyway, and that the current world networks couldn’t hold it all.)

And who holds the keys to the kingdom anyway? Lenovo recently compromised a whole heap of machines (the Superfish debacle) by putting what’s called a “self-signed root certificate” on their machines to allow an adware partner to insert ads into your viewing. This is the equivalent of selling you a house with a secret door that you don’t know about, secured only by a four-digit PIN lock – it’s not secure and, because you don’t know about it, you can’t fix it. Every person who worked for the teleporter company would have to be treated as a hostile entity because the value of a secretly tele-cloned person is potentially immense: from the point of view of slavery, organ harvesting, blackmail, stalking and forced labour…

But governments can get in the way, too. For example, the FREAK security flaw is a hangover from 1990s security paranoia that has never been fixed. Will governments demand in-transit inspection of certain travellers or the removal of contraband encoded elements prior to materialisation? How do you patch a hole that might have secretly removed essential proteins from the livers of every consular official of a particular country?

The security protocols and approach required for a teleporter culture could define an entire freshman seminar in maths and CS and you could still never quite have scratched the surface. But we are now wandering into the most complex areas of all.

- Ethics and Philosophy

How do we define what it means to be human? Is it the information associated with our physical state (locations, spin states and energy levels) or do we have to duplicate all of the atoms? If we can produce two different copies of the same person, the dreaded transporter accident, what does this say about the human soul? Which one is real?

How do we deal with lost packets? Are they a person? What state do they have? To whom do they belong? If we transmit to a site that is destroyed just after materialisation, can we then transmit to a safe site to restore the person or is that on shaky ground?

Do we need to develop special programming languages that make it impossible to carry out actions that would violate certain ethical or established protocols? How do we sign off on code for this? How do we test it?

Do we grant full ethical and citizenship rights to people who have been through transporters, when they are very much no longer natural born people? Does country of birth make any sense when you are recreated in the atoms of another place? Can you copy yourself legitimately? How much of yourself has to survive in order for it to claim to be you? If someone is bifurcated and ends up, barely alive, with half in one place and half in another …

There are many excellent Science Fiction works referenced in the early links and many more out there, although people are backing away from it in harder SF because it does appear to be basically impossible. But if a networking student could understand all of the issues that I’ve raised here and discuss solutions in detail, they’d basically have passed my course. And all by discussing an impossible thing.

With thanks to Sean Williams, Adelaide author, who has been discussing this a lot as he writes about teleportation from the SF perspective and inspired this post.

Is this a dress thing? #thedress

Posted: March 1, 2015 Filed under: Education, Opinion | Tags: advocacy, authenticity, blogging, blue and black dress, colour palette, community, data visualisation, education, educational problem, educational research, ethics, feedback, higher education, improving perception, learning, Leonard Nimoy, llap, measurement, perception, perceptual system, principles of design, teaching, teaching approaches, thedress, thinking

For those who missed it, the Internet recently went crazy over llamas and a dress. (If this is the only thing that survives our civilisation, boy, is that sentence going to confuse future anthropologists.) Llamas are cool (there ain’t no karma drama with a llama) so I’m going to talk about the dress. This dress (with handy RGB codes thrown in, from a Wired article I’m about to link to):

When I first saw it, and I saw it early on, the poster was asking what colour it was because she’d taken a picture in the store of a blue and black dress and, yet, in the picture she took, it sometimes looked white and gold and it sometimes looked blue and black. The dress itself is not what I’m discussing here today.

Let’s get something out of the way. Here’s the Wired article to explain why two different humans can see this dress as two different colours and be right. Okay? The fact is that the dress that the picture is of is a blue and black dress (which is currently selling like hot cakes, by the way) but the picture itself is, accidentally, a picture that can be interpreted in different ways because of how our visual perception system works.

This isn’t a hoax. There aren’t two images (or more). This isn’t some elaborate Alternative Reality Game prank.

But the reaction to the dress itself was staggering. In between other things, I plunged into a variety of different social fora to observe the reaction. (Other people also noticed this and have written great articles, including this one in The Atlantic. Thanks for the link, Marc!) The reactions included:

- Genuine bewilderment on the part of people who had already seen both on the same device at nearly adjacent times and were wondering if they were going mad.

- Fierce tribalism from the “white and gold” and “black and blue” camps, within families, across social groups as people were convinced that the other people were wrong.

- People who were sure that it was some sort of elaborate hoax with two images. (No doubt, Big Dress was trying to cover something up.)

- Bordering-on-smug explanations from people who believed that seeing it a certain way indicated that they had superior “something or other”, where you can put day vision/night vision/visual acuity/colour sense/dressmaking skill/pixel awareness/photoshop knowledge.

- People who thought it was interesting and wondered what was happening.

- Attention policing from people who wanted all of social media to stop talking about the dress because we should be talking about (insert one or more) llamas, Leonard Nimoy (RIP, LLAP, \\//) or the disturbingly short lifespan of Russian politicians.

The issue to take away, and the reason I’ve put this on my education blog, is that we have just had an incredibly important lesson in human behavioural patterns. The (angry) team formation. The presumption that someone is trying to make us feel stupid, playing a prank on us. The inability to recognise that the human perceptual system is, before we put any actual cognitive biases in place, incredibly and profoundly affected by the processing shortcuts our perceptual systems take to give us a view of the world.

I want to add a new question to all of our on-line discussion: is this a dress thing?

There are matters that are not the province of simple perceptual confusion. Human rights, equality, murder, are only three things that do not fall into the realm of “I don’t quite see what you see”. Some things become true if we hold the belief – if you believe that students from background X won’t do well then, weirdly enough, they don’t do well. But there are areas in education where people can see the same things but interpret them in different ways because of contextual differences. Education researchers are well aware that a great deal of what we see and remember about school is often not how we learned but how we were taught. Someone who claims that traditional one-to-many lecturing, as the only approach, worked for them, when prodded, will often talk about the hours spent in the library or with study groups to develop their understanding.

When you work in education research, you get used to people effectively calling you a liar to your face because a great deal of our research says that what we have been doing is actually not a very good way to proceed. But when we talk about improving things, we are not saying that current practitioners suck, we are saying that we believe that we have evidence and practice to help everyone to get better in creating and being part of learning environments. However, many people feel threatened by the promise of better, because it means that they have to accept that their current practice is, therefore, capable of improvement and this is not a great climate in which to think, even to yourself, “maybe I should have been doing better”. Fear. Frustration. Concern over the future. Worry about being in a job. Constant threats to education. It’s no wonder that the two sides who could be helping each other, educational researchers and educational practitioners, can look at the same situation and take away both a promise of a better future and a threat to their livelihood. This is, most profoundly, a dress thing in the majority of cases. In this case, the perceptual system of the researchers has been influenced by research on effective practice, collaboration, cognitive biases and the operation of memory and cognitive systems. Experiment after experiment, with mountains of very cautious, patient and serious analysis to see what can and can’t be learnt from what has been done. This shows the world in a different colour palette and I will go out on a limb and say that there are additional colours in their palette, not just different shades of existing elements. The perceptual system of other people is shaped by their environment and how they have perceived their workplace, students, student behaviour and the personalisation and cognitive aspects that go with this. But the human mind takes shortcuts. Makes assumptions. Has biases. Fills in gaps to match the existing model and ignores other data. We know about this because research has been done on all of this, too.

You look at the same thing and the way your mind works shapes how you perceive it. Someone else sees it differently. You can’t understand each other. It’s worth asking, before we deploy crushing retorts in electronic media, “is this a dress thing?”

The problem we have is exactly as we saw from the dress: how we address the situation where both sides are convinced that they are right and, from a perceptual and contextual standpoint, they are. We are now in the “post Dress” phase where people are saying things like “Oh God, that dress thing. I never got the big deal” whether they got it or not (because the fad is over and disowning an old fad is as faddish as a fad) and, more reflectively, “Why did people get so angry about this?”