2025 ACM Global Computing Education Conference in Botswana, 21-22 October – Another Working Group!

Posted: May 8, 2025 Filed under: Education | Tags: botswana, community, comped, education, higher education, research, working groups

Well, well, a third post in the year? Who made me research active? (I have not yet really been made research rigorous, despite some valiant attempts.) I’m very excited to announce that we’ve got a working group advertised for the 2025 ACM Global Computing Education Conference in Botswana and we’d love to get more people involved. The main conference runs Oct 23-25, Working Groups run Oct 21-22, and the Doctoral Consortium is on Oct 22.

You can join A/Prof Claudia Szabo, me, Dr. Munienge Mbodila, and Prof Judy Sheard as we work to assess the impact of Generative AI, analyse its effects on higher education institutions, and identify the challenges, with a focus on the Global South.

Brief motivation

This ACM working group, fitting within the broader remit of an ACM Task Force, aims to investigate the ethical and societal impacts of Generative AI tools on higher computing education in the Global South, and to provide guidelines for the ethical incorporation of AI into teaching and assessment in CS1, CS2 and CS3. These guidelines will consider the desired learning outcomes at each level, student learning behaviour, validity of assessment and academic integrity, and local ethical frameworks. The working group will conduct a landscape analysis of Global South ethical questions related to the use of Generative AI tools in higher education contexts, identifying promising principles, challenges, and ways to implement Generative AI in ethical and principled ways. It builds on the ITiCSE working group with a similar name and is developed under the guidance of two of that working group’s leaders, Alison Clear and Tony Clear, while focusing the lens on the Global South and specifically on CS1-3 perspectives within this space.

Get Involved!

If you want to read more or apply, please follow the link to the WG page and fill out your application before the end of May 9, anywhere around the world! There are excellent travel grants available to support this so check those out, too!

ITiCSE 2025: Working Group 1 – exciting news!

Posted: February 14, 2025 Filed under: Education | Tags: computer science education research, education, games, higher education, ITiCSE, learning, play, research, teaching, technology, thinking

Two posts in the same year? Something must be up… and it is! After Dr Rebecca Vivian and I successfully presented our work at Koli, both as a DC tool and as an award-winning poster/demo, I looked into taking it to a working group, and Dr Miranda Parker agreed to co-lead it with me, as Rebecca is currently on leave. Miranda and I have been digging into all aspects of this in the middle of our day jobs, and it’s been a lot of fun to work on! You think you’ve got difficult collaborators? Miranda has to listen to me pontificate about ontologies, paradigms, and philosophies!

It’s really important to recognise Rebecca’s ongoing connection with this project: it’s still very much Rebecca’s work that got us here, and she will continue to be a significant part of it. We’re just making sure we have co-leaders who aren’t on leave to make it work. It’s really exciting that our Working Group has reached the advertisement stage!

You can see all of the WG proposals here, and sign up (maybe to ours if you like what you read here) here. We’re happy to answer questions and it’s going to be an amazing combination of serious play, serious research, and great fun.

Here’s the ad as a cut and paste!

WG1 – Paradigms, Methods, and Outcomes, Oh My!: Refining and Evolving a Research Knowledge Development Activity for Computer Science Education

Leaders:

- Nick Falkner, nickolas.falkner@adelaide.edu.au

- Miranda Parker, miranda.parker@uncc.edu

Motivation:

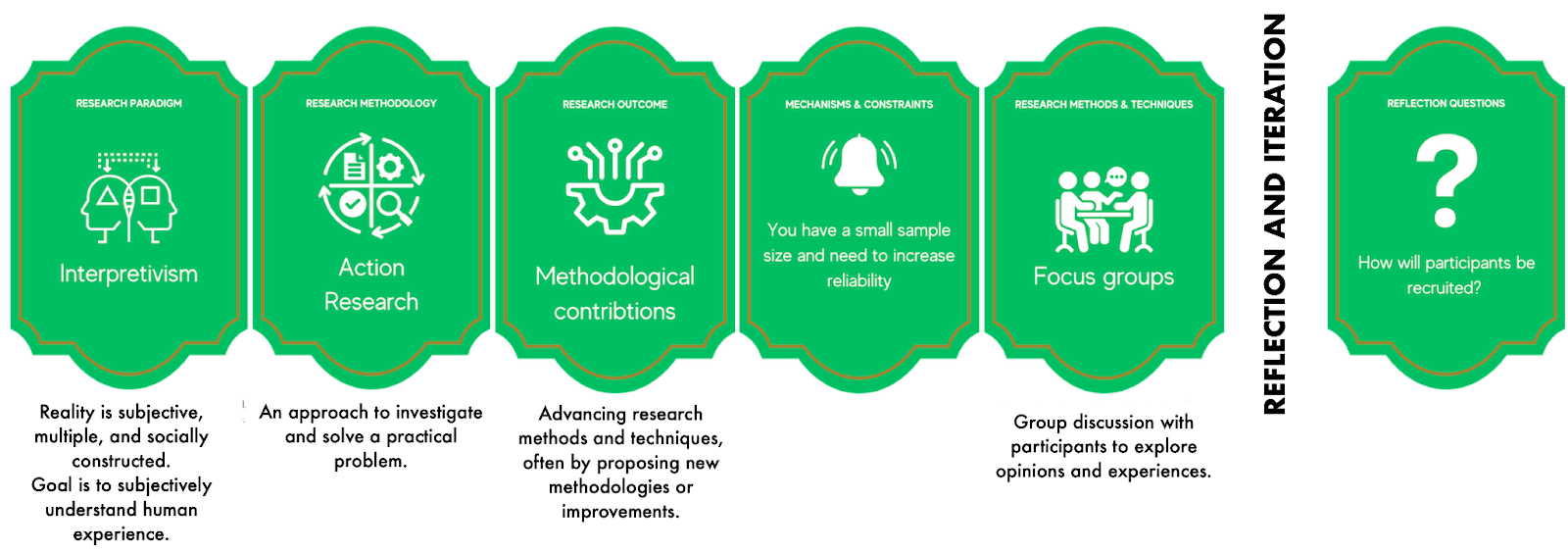

Computer Science Education Research (CSER) combines the frequently quantitative approaches of computer science, engineering, and mathematics with the often more qualitative techniques seen in psychology, sociology, behavioural science, and education. It can be challenging to select appropriate research methods effectively and efficiently.

Inspired by the use of card-based techniques in the classroom, the Research Alternatives Exercise (RAE) is a pack of 105 cards introducing a wide range of possible research approaches. RAE offers alternatives to a participant’s current research plans through new, randomly drawn lenses, leading to the sketch of a new research design. The participant views their own design through the lens of the randomly drawn card, examining how well the card fits, informs, or improves what they have done.

The initial version of the card deck and examples of play won best paper/demo at Koli Calling 2024 and an example “run” is shown below:

Goals:

- review and modify the existing deck through collaboration in the WG,

- develop a version of the deck that can be shared and used widely across the CSER community, and

- develop a concise support glossary for the cards.

Methodology:

The current deck will be shared with participants to support targeted literature review, research, and consultation to:

- refine the terminology used for categories, which are currently paradigms, methodologies, outcomes, and methods,

- refine the components within categories,

- review the existing rules for suitability,

- develop the first draft of the support glossary, and

- develop different decks and play approaches for specific purposes.

Following kickoff at the end of March, we will work on Items 1 and 3, aiming for completion by the start of May. When categories are finalized, we will undertake Item 2, with members working in small groups to review each category. Findings will be presented to the whole group by the beginning of June, for further discussion and collaboration. Each sub-group will be responsible for the glossary elements of their contribution, to be completed and reviewed by the start of the in-person WG time. Each working group member will be asked to share the deck with colleagues to gather feedback.

Member Selection:

We seek at least 8-10 individuals to share the required work manageably.

We are looking for participants with at least one of:

- Experience with a wide variety of research methodologies,

- Experience in supervising graduate students,

- Interest and knowledge in using game-based and facilitated techniques, or

- Experience with research skills development.

We actively invite applications from disciplines beyond computing for diversity in research skills development experience. We seek a diversity of experience, background, and culture, to ensure that the feedback encompasses the full range of CSER community experience. We also welcome student applications.

Successful applicants will:

- Attend fortnightly 60-90 minute online progress meetings, held from mid-late March to the end of June,

- Register for ITiCSE 2025,

- Physically attend the full duration of the working group, and

- Make significant contributions during the pre- and post-ITiCSE Working Group activities (3-4 hours a week).

Updated previous post: @WalidahImarisha #worldcon #sasquan

Posted: August 24, 2015 Filed under: Education, Opinion | Tags: advocacy, authenticity, blogging, collaboration, community, curriculum, design, education, educational research, equity, fantasy, higher education, research, sasquan, science fiction, worldcon

Walidah Imarisha very generously continued the discussion of my last piece with me on Twitter, and I have updated that piece to include her thoughts and to provide vital additional discussion. As always, don’t read me talking about things when you can read the words of the people who are out there fixing, changing the narrative, fighting and winning.

Thank you, Walidah!

The Only Way Forward is With No Names @iamajanibrown @WalidahImarisha #afrofuturism #worldcon #sasquan

Posted: August 23, 2015 Filed under: Education | Tags: advocacy, AfroFuturism, Anonymous review, authenticity, blogging, collaboration, community, curriculum, design, education, educational research, equity, fantasy, higher education, research, sasquan, science fiction, SF&F, worldcon

Edit: Walidah Imarisha and I had a discussion on Twitter after I released this piece, and I wanted to add her thoughts and part of our discussion. I’ve added it to the end so that you’ll have context, but I mention it here because her thoughts are the ones that you must read before you leave this piece. Never listen to me when you can be listening to the people who are living this and fighting it.

I’m currently at the World Science Fiction Convention in Spokane, Washington state. As always, my focus is education and (no surprise to long-term readers) equity. I’ve had the opportunity to attend some amazing panels. One was on the experience of women in art, publishing and game production, and on female characters in video games. Others discussed issues such as non-white presence in fiction (#AfroFuturism with Professor Ajani Brown), the changes between the Marvel Universe in film and comic form, and how we can use Science Fiction & Fantasy in the classroom to address social issues without having to directly engage the (often depressing) news sources. Both of the latter panels were excellent. In the Marvel one, with Tom Smith, Annalee Flower Horne, Cassandra Rose Clarke, and Professor Brown, there was a lot of discussion both of the new Afro-American characters in movies and TV (Deathlok, Storm and Falcon) and of how much they had changed from the comics.

I’m going to discuss what I saw and lead towards my point: that all assessment of work for its publishing potential should, where it is possible and sensible, be carried out blind, without knowledge of who wrote it.

I’ve written on this before, both here (where I argue that current publishing may not be doing what we want for the long-term benefit of the community and the publishers themselves) and here, where we identify that systematic biases against people who are not western men are rampant and apparently almost inescapable as long as we can see a female name. Very recently, this Jezebel article identified that changing the author’s name on a manuscript from female to male not only increased the response rate and reduced the waiting time, it changed the type of feedback given. The woman’s characters were “feisty”, the man’s weren’t. Same characters. It doesn’t matter if you think you’re being sexist or not, and it doesn’t even matter (from the PNAS study in the second link) if you’re a man or a woman: the presence of a female name changes the level of respect attached to a work and also the level of reward or appreciation offered in an assessment process. There are similar works that clearly identify that this problem is even worse for People of Colour. (Look up Intersectionality if you don’t know what I’m talking about.) I’m not saying that all of these people are trying to discriminate, but the evidence we have says that the social conditioning that leads to sexism is powerful and dominating.

Now let’s get back to the panels. The first panel was “Female Characters in Video Games”, with Andrea Stewart, Maurine Starkey, Annalee Flower Horne, Lauren Roy and Tanglwyst de Holloway. While discussing the growing market for female characters, the panel identified the ongoing problems and discrimination against women in the industry. Women make up 22% of professionals in the field, which sounds awful until you realise that this figure was 11% in 2009. However, Maurine had had her artwork described as “great” when someone thought it was a man’s, and as “oh, drawn like a woman” when its true owner was revealed. And this is someone being explicit. The message of the panel was very positive: things were getting better. However, it was obvious that knowing someone was a woman changed how people valued their work or even how their activities were described. “Casual gaming” is often a term that describes what women do; if women take up a gaming platform (and they are a huge portion of the market) then it often gets labelled “casual gaming”.

So, point 1: assessing work at a professional level is apparently hard to do objectively when we know the creator’s gender. Moving on.

The first panel on Friday dealt with AfroFuturism, which looks at the long-standing philosophical and artistic expression of alternative realities relating to people of African descent. This can be traced from the Egyptian origins of mystic and astrological architecture and religions, through the tribal dances and mask ceremonies of other parts of Africa, to the P-Funk mothership and science-fiction works published in the middle of vinyl albums. There are strong notions of carving out or refining identity in order to break oppressive narratives and re-establish agency. AfroFuturism looks into creating new futures and narratives, also allowing for reinvention to escape the past, which is a powerful tool for liberation. People can be put into boxes and they want to break out to liberate themselves and, too often, if we know that someone can be put into a box then we have a nasty tendency (implicit cognitive bias) to jam them back in. No wonder AfroFuturism is seen as a powerful force: it is an assault on the whole mean, racist narrative that does things like call groups of white people “protesters” or “concerned citizens”, and groups of black people “rioters”.

(If you follow me on Twitter, you’ve seen a fair bit of this. If you’re not following me on Twitter, @nickfalkner is the way to go.)

So point 2, if we know someone’s race, then we are more likely to enforce a narrative that is stereotypical and oppressive when we are outside of their culture. Writers inside the culture can write to liberate and to redefine identity and this probably means we need to see more of this.

I want to focus on the final panel, “Saving the World through Science Fiction: SF in the Classroom”, with Ben Cartwright, Ajani Brown (again!), Walidah Imarisha and Charlotte Lewis Brown. There are many issues facing our students on a day-to-day basis and it can be very hard to engage with some of them because it is confronting to have to address your own biases when you talk about the real world. But you can talk about racism with aliens, xenophobia with a planetary invasion, the horrors of war with apocalyptic fiction… and it’s not the nightly news. People can confront their biases without confronting them. That’s a very powerful technique for changing the world. It’s awesome.

Point 3, then, is that narratives are important and, with careful framing, we can discuss very complicated things and get away from the sheer weight of biases and reframe a discussion to talk about difficult things, without having to resort to violence or conflict. This reinforces Point 2, that we need more stories from other viewpoints to allow us to think about important issues.

We are a narrative and a mythic species: storytelling allows us to explain our universe. Storytelling defines our universe, whether it’s religion, notions of family or sense of state.

What I take from all of these panels is that many of the stories that we want to be reading, that are necessary for the healing and strengthening of our society, should be coming from groups who are traditionally not proportionally represented: women, People of Colour, Women of Colour, basically anyone who isn’t recognised as a white man in the Western Tradition. This isn’t to say that everything has to be one form but, instead, that we should be putting systems in place to get the best stories from as wide a range as possible, in order to let SF&F educate, change and grow the world. This doesn’t even touch on the Matthew Effect, where we are more likely to positively value a work if we have an existing positive relationship with the author, even if said work is not actually very good.

And this is why, given all of the evidence that cognitive biases change the way people judge work based on the author’s name, the approach most likely to improve the range of stories that we end up publishing is to judge as many works as we can without knowing who wrote them. If we wanted to take it further, we could even ask people to briefly explain why they did or didn’t like a work. The feedback on the Jezebel author’s manuscript makes it clear that, with comments like these, we can identify a bias in play. “It’s not for us” and similar responses are not transparent enough for us to see whether the system is working. (Apologies to the hard-working editors out there, I know this is a big demand. Anonymity is a great start. 🙂 )

Now, for some books and works, you have to know who wrote them; my textbook, for example, depends upon my academic credentials and my published work, hence my identity is part of the validity of academic work. But for short fiction, for books? Perhaps it’s time to look at all of the evidence, to look at all of the efforts to widen the range of voices we hear, and to consider a commitment to anonymous review so that SF&F will be a powerful force for thought and change in the decades to come.

Thank you to all of the amazing panellists. You made everyone think and sent out powerful and positive messages. Thank you, so much!

Edit: As mentioned above, Walidah and I had a discussion that extended from this on Twitter. Walidah’s point was about changing the system so that we no longer have to hide identity to eliminate bias, and I totally agree with this. Our goal has to be to create a space where bias no longer exists, where the assumption that the hierarchical dominance is white, cis, straight and male is no longer the default. Also, while SF&F is a great tool, it does not replace having the necessary and actual conversations about oppression. Our goal should never be to erase people of colour and replace them with aliens and dwarves just because white people don’t want to talk about race. While narrative engineering can work, many people do not transfer the knowledge from analogy to reality, and this is why these authentic discussions of real situations must also exist. When we sit purely in analogy, we risk reinforcing inequality if we don’t tie it back down to Earth.

I am still trying to attack a biased system to widen the narrative to allow more space for other voices but, as Walidah notes, this is catering to the privileged, rather than empowering the oppressed to speak their stories. And, of course, talking about oppression leads those on top of the hierarchy to assume you are oppressed. Walidah mentioned Katharine Burdekin and Swastika Night as part of this. Our goal must be to remove bias. What I spoke about above is one way, but it is very much born of the privileged, and we cannot lose sight of the necessity of empowerment and a constant commitment to ensuring the visibility of other voices and hearing the stories of the oppressed from them, not passed through white academics like me.

Seriously, if you can read me OR someone else who has a more authentic connection? Please read that someone else.

Walidah’s recent work includes, with adrienne maree brown, editing the book of 20 short stories I have winging its way to me as we speak, “Octavia’s Brood: Science Fiction Stories from Social Justice Movements” and I am so grateful that she took the time to respond to this post and help me (I hope) to make it stronger.

Think. Create. Code. Wow! (@edXOnline, @UniofAdelaide, @cserAdelaide, @code101x, #code101x)

Posted: April 30, 2015 Filed under: Education, Opinion | Tags: #code101x, advocacy, authenticity, blogging, community, cser digital technologies, curriculum, digital technologies, education, educational problem, educational research, ethics, higher education, Hugh Davis, learning, MOOC, moocs, on-line learning, research, Southampton, student perspective, teaching, teaching approaches, thinking, tools, University of Southampton

Things are really exciting here because, after the success of our F-6 on-line course to support teachers of digital technologies, the Computer Science Education Research group are launching their first massive open on-line course (MOOC) through AdelaideX, the partnership between the University of Adelaide and EdX. (We’re also about to launch our new 7-8 course for teachers, so watch this space!)

Our EdX course is called “Think. Create. Code.” and it’s open right now for Week 0, although the first week of real content doesn’t go live until the 30th. If you’re not already connected with us, you can also follow us on Facebook (code101x) or Twitter (@code101x), or search for the hashtag #code101x. (Yes, we like to be consistent.)

I am slightly stunned to report that, less than 24 hours before the first content starts to roll out, we have 17,531 students enrolled across 172 countries. Not only that, but when we look at the gender breakdown, we have somewhere between 34-42% women (not everyone chooses to declare a gender). For an area that struggles with female participation, this is great news.

I’ll save the visualisation data for another post, so let’s quickly talk about the MOOC itself. We’re taking a 6-week approach, where students focus on developing artwork and animation using the Processing language; it requires no prior knowledge and runs inside a browser. The interface that has been developed by the local Adelaide team (thank you for all of your hard work!) is outstanding and it’s really easy to make things happen.

I love this! One of the biggest obstacles to coding is having to wait until you see what happens and this can lead to frustration and bad habits. In Processing you can have a circle on the screen in a matter of seconds and you can start playing with colour in the next second. There’s a lot going on behind the screen to make it this easy but the student doesn’t need to know it and can get down to learning. Excellent!

I went to a great talk at CSEDU last year, presented by Hugh Davis from Southampton, where Hugh raised some great issues about how MOOCs compared to traditional approaches. I’m pleased to say that our demography is far more widespread than what was reported there. Although the US dominates, we have large representations from India, Asia, Europe and South America, with a lot of interest from Africa. We do have a lot of students with prior degrees but we also have a lot of students who are at school or who aren’t at University yet. It looks like the demography of our programming course is much closer to the democratic promise of free on-line education but we’ll have to see how that all translates into participation and future study.

While this is an amazing start, the whole team is thinking of this as part of a project that will be going on for years, if not decades.

When it came to our teaching approach, we spent a lot of time talking (and learning from other people and our previous attempts) about the pedagogy of this course: what was our methodology going to be, how would we implement this and how would we make it the best fit for this approach? Hugh raised questions about the requirement for pedagogical innovation and we think we’ve addressed this here through careful customisation and construction (we are working within a well-defined platform so that has a great deal of influence and assistance).

We’ve already got support roles allocated to staff and students will see us on the course, in the forums, and helping out. One of the reasons that we tried to look into the future for student numbers was to work out how we would support students at this scale!

One of the most important things for us to remember is that completion may not mean anything in the on-line format. Someone comes on, gets an answer to the most pressing question that is holding them back from coding, and then leaves in the first week? That’s great. That’s success! How we measure that, and turn it into traditional numbers that match what we do face-to-face, is going to be something we deal with as we get more information.

The whole team is raring to go and the launch point is so close. We’re looking forward to working with thousands of students, all over the world, for the next six weeks.

Sound interesting? Come and join us!

5 Things: Scientists

Posted: September 17, 2014 Filed under: Education, Opinion | Tags: advocacy, authenticity, Biological scientists, blogging, community, education, einstein, five things, Google image search, learninge, newton, research, science, scientists, stereotype, stereotype threat, teaching, thinking, white coats

Another 5-pointer, inspired by a post I read about the stereotypes of scientists. (I know there are just as many about other professions but scientist is one of my current ones.)

- We’re not all “bushy-haired” confused old white dudes.

It’s amazing that pictures of 19th Century scientists and Einstein have had such an influence on how people portray scientists. This link shows you how academics (researchers in general but a lot of scientists are in here) are shown to children. I wouldn’t have as much of a problem with this if it wasn’t reinforcing a really negative stereotype about the potential uselessness of science (Professors who are not connected to the real world and who do foolish things) and the demography (it’s almost all men and white ones at that) which are more than likely having a significant impact on how kids feel about going into science.

It’s getting better, as we can see from a Google image search for scientists, which shows a very obvious “odd man out”, but that image search actually throws up our next problem. Can you see what it is?

- We don’t all wear white coats!

So we may have accepted that there is demographic diversity in science (but it still has to make it through to children’s books), but that whole white coat thing is reinforced way too frequently. Those white coats are not a uniform, they’re protective clothing. When I was a winemaker, I wore heavy duty dark-coloured cotton clothing for work because I was expecting to get sprayed with wine, cleaning products and water on a regular basis. (Winemaking is like training an alcoholic elephant with a mean sense of humour.) When I was in the lab, if I was handling certain chemicals, I threw on a white coat as part of my protective gear but also to stop them getting on my clothes, because they would permanently stain or bleach them. Now I’m a computer scientist, I’ve hung up my white coat.

Biological scientists, scientists who work with chemicals or pharmaceuticals – any scientists who work in labs – will wear white coats. Everyone else (and there’s a lot of them) tend not to. Think of it like surgical scrubs – if your GP showed up wearing them in her office then you’d think “what?” and you’d be right.

- Science can be a job, a profession, a calling and a hobby – but this varies from person to person.

There’s the perception of scientist as a job so all-consuming that it robs scientists of the ability to interact with ‘normal’ people, hence stereotypes like the absent-minded Professor or the inhuman, toxic personality of the Cold Scientific Genius. Let’s tear that apart a bit because the vast majority of people in science are just not like that.

Some jobs can only be done when you are at work. You do the work, in the work environment, then you go home and you do something else. Some jobs can be taken home. The amount of job-related work that you do outside your required working time, including overtime, is usually an indicator of how interesting you find it. I didn’t have the facilities to make wine at home but I read a lot about it and tasted a lot of wine as part of my training and my job. (See how much cooler it sounds to say that you are ‘tasting wine’ rather than ‘I drink a lot’?) Some mechanics leave work and relax. Some work on stock cars. It doesn’t have to be any particular kind of job because people all have different interests and different hobbies, which will affect how they separate work and leisure, or blend them.

Some scientists leave work and don’t do any thinking on things after hours. Some can think on things but not do anything because they don’t have the facilities at home. (The Large Hadron Collider cost close to USD 7 Billion, so no-one has one in their shed.) Some can think and do work at home, including Mathematicians, Computer Scientists, Engineers, Physicists, Chemists (to an extent) and others who will no doubt show up angrily in the comments. Yes, when I’m consumed with a problem, I’m thinking hard and I’m away with the pixies, but that’s because, as a Computer Scientist, I can build an entire universe to work with on my laptop and then test out interesting theories and approaches. But I have many other hobbies and, as anyone who has worked with me on art knows, I can go just as deeply down the rabbit hole when selecting typefaces or colours.

Everyone can appear absent-minded when they’re thinking about something deeply. Scientists are generally employed to think deeply about things but it’s rare that they stay in that state permanently. There are, of course, some exceptions which leads me to…

- Not every scientist is some sort of genius.

Sorry, scientific community, but we all know it’s true. You have to be well-prepared, dedicated and relatively mentally agile to get a PhD but you don’t have to be crazy smart. I raise this because, all too often, I see people backing away from science and scientific books because “they wouldn’t understand it” or “they’re not smart enough for it”. Richard Feynman, an actual genius and great physicist, used to say that if he couldn’t explain something to college freshmen then the scientific community didn’t understand it well enough. Think about that: he’s basically saying that he expects to be able to explain every well-understood scientific principle to kids fresh out of school.

The genius stereotype is not just a problem because it prevents people coming into the field but because it puts so much demand on people already in the field. You could probably name three physicists, at a push, and you’d be talking about some of the ground-shaking members of the field. Involved in the work leading up to those discoveries, and beyond, are hundreds of thousands of scientists, going about their jobs, doing things that are valuable, interesting and useful, but perhaps not earth-shattering. Do you expect every soldier to be a general? Every bank clerk to become the general manager? Not every scientist will visibly change the world, although many (if not most) will make contributions that build together to change the world.

Sir Isaac Newton, another famous physicist, referred to the words of Bernard of Chartres when he famously wrote:

“If I have seen further it is by standing on the sholders [sic] of Giants”

making the point even more clearly by referring to a previous person’s great statement to then make it himself! But there’s one thing about standing on the shoulders of giants…

- There’s often a lot of wrong to get to right.

Science is evidence-based, which means that it’s what you observe occurring that validates your theories and allows you to develop further ideas about how things work. The problem is that you start from a position of not knowing much, make some suggestions, see if they work, find out where they don’t and then fix up your ideas. This has one difficult side-effect for non-scientists in that scientists can very rarely state certainty (because there may be something that they just haven’t seen yet) and they can’t prove a negative, as you just can’t say something won’t happen because it hasn’t happened yet. (Absence of evidence is not evidence of absence.) This can be perceived as weakness but it’s one of the great strengths of science. We work with evidence that contradicts our theories to develop our theories and extend our understanding. Some things happen rarely and under only very specific circumstances. The Large Hadron Collider was built to find evidence to confirm a theory and, because the correct tool was built, physicists now better understand how our universe works. This is a Good Thing as the last thing we want to do is void the warranty through incorrect usage. The more complicated the problem, the more likely it is that it will take some time to get it right. We’re very certain about gravity, in most practical senses, and we’re also very confident about evolution. And climate change, for that matter, which will no doubt get me some hate in the comments, but the scientific consensus is settled. It’s happening. Can we say absolutely for certain? No, because we’re scientists. Again, strength, not weakness.

When someone gets it wrong deliberately, and that sadly does happen occasionally, we take it very seriously because that whole “standing on shoulders of giants” is so key to our approach. A disingenuous scientist, like Andrew Wakefield and his shamefully bad and manipulated study on vaccination that has caused so much damage, will take a while to be detected and then we have to deal with the repercussions. The good news is that most of the time we find these people and limit their impact. The bad news is that this can be spun in many ways, especially by compromised scientists, and humans can be swayed by argument rather than fact quite easily.

The takeaway from this is that you should regard our admitting that we need to review a model in the same light as your plane being delayed because of a technical issue. You’d rather we fixed it, immediately and openly, than tried to fly on something we knew might fail.

Dr Falkner Goes to Canberra Day 2, “Q and A”, (#smp2014 #AdelEd @foreignoffice)

Posted: March 18, 2014 Filed under: Education | Tags: Chief Scientific Advisor, collaboration, education, EU, european union, foreign office, higher education, research, science, Science Meets Parliament, smp2014

The last formal event was a question and answer session with Professor Robin Grimes, the Chief Scientific Advisor for the UK Foreign and Commonwealth Office (@foreignoffice). I’ll recover from Question Time and talk about it later. The talk appears to have a secondary title of “The Role of the CSA Network, CSAs in SAGE, the CSA in the FCO & SIN”. Professor Grimes started by talking about the longstanding research collaboration between the UK and AUS. Apparently, it’s a unique relationship (in the positive sense), according to William Hague. Once again, we come back to explaining things to non-scientists or other scientists.

There are apparently a number of Chief Scientists who belong to the CSA Network, SAGE (the Scientific Advisory Group for Emergencies) and the Science and Innovation Network (SIN). (It’s all a bit Quatermass, really.) The speaker then showed a picture that he referred to as a rogues’ gallery. We then saw a patchwork-quilt diagram of the UK Government Science Advisory Structure, which basically says that they work through permanent secretaries, ministers and other offices – imagine a representation of the wars in the Netherlands as interpreted by Mondrian in pastoral shades and you have this diagram. There is also another complex diagram that shows that laboratories are many and advisors scale.

Did you know that there is a UK National Risk Register? Well, there is, and there’s a diagram (with blue blobs and type I can’t read from the back of the room) to talk about it. (Someone did ask why they couldn’t read it and the speaker joked that it was restricted.) More seriously, things are rated on their relative likelihood and relative impact.

The UK CSAs and FCO CS are all about communication, mostly by acronym apparently. (I kid.) Also, Stanley Baldwin’s wife could rock a hat. More seriously, fracking is an example of poor communication. Scientific concerns (methane release, seismic events and loss of aquifer integrity) are not meeting the community concerns of general opposition to oil and gas and the NIMBY approach. The speaker also mentioned the L’Aquila incident, where scientists were convicted of a crime for making an incorrect estimation of the likelihood of a seismic event. What does this mean for scientific advice generally? (Hint: don’t give scientific advice in Italy.) Scientists should feel free to express their views and understanding concerning risks, and their mitigation and management, to the government. If actions discourage scientists from coming forward, then that’s highly undesirable. (The UK is a common-law jurisdiction, so the first legal case there will be really, really interesting in this regard.)

What is the role of the CSA in emergencies? This is where SAGE comes in. They are “responsible for coordinating and peer reviewing, as far as possible, scientific and technical advice to inform decision-making”. This is chaired by the GCSA, who reports to COBRA (seriously! It’s Cabinet Office Briefing Room A) and includes CSAs, sector experts and independent scientists. So swine flu, volcanic ash cloud, Fukushima and ash dieback – put up the SAGE signal!

What’s happened with SAGE intervention? Better relationships with science diplomacy. Also, when the media goes well, there is a lot of good news to be had.

The Foreign Office gets science-based advice relating to security, prosperity and consular matters – the three priorities of the Foreign Office. It seems that everyone has more science than us. There are networks for Science Networks, Science Evidence and Scientific Leadership, but the Foreign Office is a science-using rather than a science-producing department. There is no dedicated local R&D, no cadre of scientists and engineers, and no departmental science advisory committee.

The Science and Innovation Network (SIN) has two parent departments, the Foreign Office (FCO) and the Department for Business, Innovation and Skills (BIS, hoho). And we saw a slide with a lot of acronyms. This is the equivalent of our system having the Department of Industry and DFAT as parents. SIN has 90 people in 28 countries and territories, across 46 cities. There’s even one here (where here is Melbourne and Canberra). (So we support UK scientists coming out to do cool science here. Which is good. If only we had a Minister for Science, eh?) Apparently they produce newsletters and all sorts of tasty things.

They even talk to the EU (relatively often) and travel there quite frequently in an attempt to make the relationships work through the EU and bilaterally. There aren’t as many Science and Innovation officers in the EU as they can deal directly with the EU. There are also apparently a lot of student opportunities (sound of ears pricking up) but it’s for UK students coming to us. There are also opportunities for UK-origin scientists to either work back in the UK or for them to bring out UK academics that they know. (Paging Martin White!)

There is a Newton Fund (being developed at the moment), a science-based aid program for countries that are eligible for official development assistance (ODA) and this could be a bi-lateral UK-AUS collaboration.

Well, that’s it for the formal program. There’s going to be some wrap-up and then drinks with Adam Bandt, Deputy Leader of the Australian Greens. Hope you’ve enjoyed this!

When Does Failing Turn You Into a Failure?

Posted: December 17, 2012 Filed under: Education, Opinion | Tags: advocacy, authenticity, blogging, ci2012, community, conventicle, curriculum, design, education, educational research, ethics, feedback, Generation Why, grand challenge, higher education, icer2012, in the student's head, learning, principles of design, reflection, research, resources, student perspective, teaching, teaching approaches, thinking, tools, workload

The threat of failure is very different from the threat of being a failure. At the Creative Innovations conference I was just at, one of the strongest messages was that we learn more from failure than we do from success, and that failure is inevitable if you are actually trying to be innovative. The message from CI was that if you learn from your failures, and your failure is the genuine result of something that didn’t work (rather than the result of sitting around and watching it burn), then failure is just something that happens, and any other culture makes us overly cautious and risk-averse. As most of us know, however, we are more strongly encouraged to cover up our failures than to celebrate them – and in certain circumstances we are frequently better off not trying than failing.

At the recent Adelaide Conventicle, which I promise to write up very, very soon, Dr Raymond Lister presented an excellent talk on applying Neo-Piagetian concepts and framing to the challenges students face in learning programming. This is a great talk (which I’ve had the good fortune to see twice, and it’s a mark of the work that I enjoyed it as much the second time) because it allows us to talk about failure to comprehend, or failure to put into practice, in terms of a lack of the underlying mechanism required to comprehend – at this point in the student’s development. As part of the steps of development, we would expect students to have these head-scratching moments where they are currently incapable of making any progress, but framing it within developmental stages allows us to talk about moving students to the next stage, getting them out of this current failure mode and into something where they will achieve more. Once again, failure in this case is inevitable for most people until we and they manage to achieve the level of conceptual understanding where we can build and develop. More importantly, if we track how they fail, then we start to get an insight into which developmental stage they’re at.

One thing that struck me about Raymond’s talk was that he starts off talking about “what ruined Raymond” and discussing the dire outcomes promised to him if he watched too much television, as they were promised to me for playing too many games, and as they are to our children for whatever high-tech diversion is the current ‘finger wagging’ harbinger of doom. In this case, ruination is quite clearly the threat of becoming a failure. However, this puts us in a strange position, because if failure is almost inevitable but highly valuable if managed properly and understood, what is it about being a failure that is so terrible? It’s like threatening someone that they’ll become too enthusiastic and unrestrained in their innovation!

I am, quelle surprise, playing with words here because to be a failure is to be classed as someone for whom success is no longer an option. If we were being precise, then we would class someone as a perpetual failure or, more simply, unsuccessful. This is, quite often, the point at which it is acceptable to give up on someone – after all, goes the reasoning, we’re just pouring good money after bad, wasting our time, possibly even moving the deck chairs on the Titanic, and all those other expressions that allow us to draw that good old categorical line between us and others and put our failures into the “Hey, I was trying something new” basket and their failures into the “Well, he’s just so dumb he’d try something like that” basket. The only problem with this is that I’m really not sure that a lifetime of failure is a guaranteed predictor of future failure. Likely? Yeah, probably. So likely we can gamble someone’s life on it? No, I don’t believe so.

When I was failing courses in my first degree, it took me a surprisingly long time to work out how to fix it, most of which was down to the fact that (a) I had no idea how to study but (b) no-one around me was vaguely interested in the fact that I was failing. I was well on my way to becoming a perpetual failure, someone who had no chance of holding down a job let alone having a career, and it was a kind and fortuitous intervention that helped me. Now, with a degree of experience and knowledge, I can look back into my own patterns and see pretty much what was wrong with me – although, boy, would I have been a difficult cuss to work with. However, failing, which I have done since then and I will (no doubt) do again, has not appeared to have turned me into a failure. I have more failings than I care to count but my wife still loves me, my friends are happy to be seen with me and no-one sticks threats on my door at work so these are obviously in the manageable range. However, managing failure has been a challenging thing for me and I was pondering this recently – how people deal with being told that they’re wrong is very important to how they deal with failing to achieve something.

I’m reading a rather interesting, challenging and confronting article on (and I cannot believe there’s a phrase for this) rage murders in American schools and workplaces. It features Mark Ames, the author of “Going Postal” (2005), and claims that these horrifying acts are, effectively, failed revolts. Ames seems to believe that everything stems from Ronald Reagan (and I offer no opinion either way, I hasten to add) but he identifies repeated humiliation, bullying and inhumane conditions as taking ordinary people, who would not usually have committed such actions, and turning them into monstrous killing machines. Ames’ thesis is that what leads to such a dire outcome is not a rise in psychopathy but a rebellion against spirit-breaking treatment and the metaphorical enslavement of much of the working and middle class. If the dominant fable of life is that success is all, failure is bad, and that you are entitled to success, then it should be, as Ames says in the article, exactly those people who are most invested in these cultural fables who are the most likely to break when the lies become untenable. In the language that I used earlier, this is the most awful way to handle the failure of the fabric of your world – a cold and rational journey that looks like madness but is far worse for being a pre-meditated attempt to destroy the things that lied to you. However, this is only one type of person who commits these acts. The Monash University gunman, for example, was obviously delusional and, while he carried out a rational set of steps to eliminate his main rival, his thinking as to why this needed to happen makes very little sense. The truth is, as always, difficult and muddy, and my first impression is that Ames may be oversimplifying in order to advance a relatively narrow and politicised view.
But his language strikes me: the notion of the “repeated humiliation, bullying and inhumane conditions”, which appears to be a common language among the older, workplace-focused, and otherwise apparently sane humans who carry out such terrible acts.

One of the complaints made against the radio network at the heart of the recent Royal Hoax, 2DayFM, is that they are serial humiliators of human beings and show no regard for the general well-being of the people involved in their pranks – humiliation, inhumanity and bullying. Sound familiar? Here I am, as an educator, knowing that failure is going to happen for my students and working out how to bring them up into success and achievement when, on one hand, I have a possible set of triggers where beating people down leads to apparent madness and, on the other, at least part of our entertainment culture appears to delight in finding the lowest bar and crawling through the filth underneath it. Is telling someone that they’re a failure, and rubbing it in for public enjoyment, of any vague benefit to anyone, or is it really, as I firmly believe, the best way to start someone down a genuinely dark path to ruination and resentment?

Returning to my point at the start of this (rather long) piece, I have met Raymond several times and he doesn’t appear even vaguely ruined to me, despite all of the radio, television and Neo-Piagetian contextual framing he employs. The message from Raymond and CI paints failure as something to be monitored and something that is often just a part of life – a stepping stone to future success – but this is most definitely not the message that generally comes down from our society and, for some people, it’s becoming increasingly obvious that their inability to handle the crushing burden of permanent classification as a failure is something that can have catastrophic results. I think we need to get better at genuinely accepting failure as part of trying, and to really, seriously, try to lose the classification of people as failures just because they haven’t yet succeeded at some arbitrary thing that we’ve defined to be important.

Taught for a Result or Developing a Passion

Posted: December 13, 2012 Filed under: Education | Tags: advocacy, authenticity, blogging, Bloom, community, curriculum, design, education, educational problem, educational research, ethics, feedback, Generation Why, grand challenge, higher education, learning, measurement, MIKE, PIRLS, PISA, principles of design, reflection, research, resources, student perspective, teaching, teaching approaches, thinking, tools, universal principles of design, workload

According to a story on the website of the Australian national broadcaster, the ABC, Australian school children are now ranked 27th out of 48 countries in reading, according to the Progress in International Reading Literacy Study (PIRLS), and a quarter of Australia’s year 4 students had failed to meet the minimum standard defined for reading at their age. As expected, the Australian government has said “something must be done” and the Australian Federal Opposition has said “you did the wrong thing”. Ho hum. Reading the document itself is fascinating because our fourth graders apparently struggle once we move into the area of interpretation and integration of ideas and information, but do quite well on simple inference. There is a lot of scope for thought about how we are teaching, given that we appear to have a reasonably Bloom-like breakdown on the data, but I’ll leave that to the (other) professionals. Another international test, the Programme for International Student Assessment (PISA), which is applied to 15-year-olds and measures reading, mathematics and science, is one that we rank relatively highly in. (And, for the record, we’re in the top 10 on the PISA rankings after a Year 4 ranking of 27th. Either something has gone dramatically wrong in the last 7 years of Australian education, or Year 4 results on PIRLS don’t have as much influence on the PISA as we might have expected.) We don’t yet have the latest PISA results but we expect them out soon.

What is of greatest interest to me from the linked article on the ABC is the Oslo University professor, Svein Sjoberg, who points out that comparing educational systems around the globe is potentially too difficult to be meaningful – which is a refreshingly honest assessment in these performance-ridden and leaderboard-focused days. As he says:

“I think that is a trap. The PISA test does not address the curricular test or the syllabus that is set in each country.”

Like all of these tests, PIRLS and PISA measure a student’s ability to perform on a particular test and, regrettably, we’re all pretty much aware, or should be by now, that using a test like this will give you the results that you built the test to give you. But one thing that really struck me from his analysis of the PISA was that the countries who perform better on the PISA Science ranking generally had a lower measure of interest in science. Professor Sjoberg noted that this might be because the students had been encouraged to become result-focused rather than encouraging them to develop a passion.

If Professor Sjoberg is right, then this is not just a tragedy, it’s an educational catastrophe – we have now started optimising our students to do well in tests but be less likely to go and pursue the subjects in which they can get these ‘good’ marks. If this nasty little correlation holds, then we will have an educational system that dominates in the performance of science in the classroom, but turns out fewer actual scientists – our assessment is no longer aligned to our desired outcomes. Of course, it is important to remember that the vast majority of these rankings are relative rather than absolute. We are not saying that one group is competent or incompetent, we are saying that one group can perform better or worse on a given test.

Like anything, to excel at a particular task, you need to focus on it, practise it, and (most likely) prioritise it above something else. What Professor Sjoberg’s analysis might indicate, and I realise that I am making some pretty wild conjecture on shaky evidence, is that certain schools have focused the effort on test taking, rather than actual science. (I know, I know, shock, horror) Science is not always going to fit into neat multiple choice questions or simple automatically marked answers to questions. Science is one of the areas where the viva comes into its own because we wish to explore someone’s answer to determine exactly how much they understand. The questions in PISA theoretically fall into roughly the same categories (MCQ, short answer) as the PIRLS so we would expect to see similar problems in dealing with these questions, if students were actually having a fundamental problem with the questions. But, despite this, the questions in PISA are never going to be capable of gauging the depth of scientific knowledge, the passion for science or the degree to which a student already thinks within the discipline. A bigger problem is the one which always dogs standardised testing of any sort, and that is the risk that answering the question correctly and getting the question right may actually be two different things.

Years ago, I looked at the examination for a large company’s offering in a certain area (I have no wish to get sued, so I’m being deliberately vague), and it became rapidly apparent that on occasion the company’s answer was not the same as the technically correct answer. The best way to prepare for the test was not to study the established base of the discipline but to read the corporate tracts and practise the skills on the approved training platforms, which often involved a non-trivial fee for training attendance. This was tangential to my role and I was neither of a sufficiently impressionable age nor bothered enough by it for it to affect me. Time was a factor and incorrect answers cost you marks – so I sat down and learned the ‘right way’ so that I could achieve the correct results in the right time and then go on to do the work using the actual knowledge in my head.

However, let us imagine someone who is 14 or 15 and, on doing the practice tests for ‘test X’, discovers that what is important is hitting precisely the right answer in the shortest time – thinking about the problem in depth is not really on the table for a two-hour exam, unless it’s highly constrained and students are very well prepared. How does this hypothetical student retain respect for teachers who talk about what science is, the purity of mathematics, or the importance of scholarship, when the correct optimising behaviour is to rote-learn the right answers, or the safe and acceptable answers, and reproduce those on demand? (Looking at some of the tables in the PISA document, we see that the best-performing nations in the top band of mathematical thinking are those with amazing educational systems – the desired range – and those who reputedly place great value on high power-distance classrooms with large volumes of memorisation and received wisdom – which is probably not the desired range.)

Professor Sjoberg makes an excellent point, which is that working out what needs fixing, and what is good, about the Australian education system is not going to be achieved by looking at single-figure representations of our international rankings, especially when the rankings contradict each other on occasion! Not all countries are the same, pedagogically, in terms of their educational processes or their power distances, and adjacency of rank is no guarantee that two educational systems are the same (Finland, next to Shanghai-China, for instance). What is needed is reflection upon what we think constitutes a good education, followed by meaningful local measures that allow us to work out how we are doing with our educational system. If we get the educational system right then, if we keep a bleary eye on the tests we use, we should then test well. Optimising for the tests takes the effort off the education and puts it all onto the implementation of the test – if that is the case, then no wonder people are less interested in a career of learning the right phrase for a short answer or the correct multiple-choice answer.

AAEE 2012 – Yes, Another Conference

Posted: December 5, 2012 Filed under: Education | Tags: aaee2012, advocacy, ci2012, conventicle, education, educational research, feedback, Generation Why, higher education, in the student's head, learning, reflection, research, student perspective, teaching, teaching approaches, thinking, tools, universal principles of design

In between writing up the conventicle (which I’m not doing yet), the CI Conference (which I’m doing slowly) and sleep (infrequent), I’m attending the Australasian Association for Engineering Education 2012 conference. Today, I presented a paper on e-Enhancing existing courses and, through a co-author, another paper on authentic teaching tool creation experiences.

My first paper gave me a chance to look at the Google analytics and tracking data for the on-line material I created in 2009. Since then, there have been:

- 11,118 page views

- 2.99 pages viewed/visit

- 1,721 unique visitors

- 3,715 visits overall
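As a quick sanity check on how those figures hang together, here is a small Python sketch. It assumes that “pages viewed/visit” is simply total page views divided by total visits, which is how the analytics ratio is usually defined; the visits-per-visitor figure is an illustrative derived number, not one the analytics reported.

```python
# Figures as listed above, from the Google Analytics summary of the 2009 material.
page_views = 11_118
visits = 3_715
unique_visitors = 1_721

# Assumption: pages per visit = total page views / total visits.
pages_per_visit = page_views / visits

# Derived for illustration only (not an analytics metric from the post):
# the average number of visits made by each unique visitor.
visits_per_visitor = visits / unique_visitors

print(f"pages/visit: {pages_per_visit:.2f}")        # 2.99, matching the reported figure
print(f"visits/visitor: {visits_per_visitor:.2f}")  # 2.16
```

Note that visits per visitor is a different measure from the share of viewers who come back, so it neither confirms nor contradicts the return figure mentioned below.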

The other thing that is interesting is that roughly 60% of the viewers return to view the podcasts again. The theme of my talk was “Is E-Enhancement Worth It” and I had the pleasure of pointing out that I felt it was because, as I was presenting, I was simultaneously being streamed giving my thoughts on computer networks to students in Singapore and (strangely enough) Germany. As I said in the talk and in the following discussion, the podcasts are far from perfect and, to increase their longevity, I need to make them shorter and more aligned to a single concept.

Why?

Because while the way I present concepts may change, because of sequencing and scaffolding changes, the way that I present an individual concept is more likely to remain the same over time. My next step is to make up a series of conceptual podcasts that are maybe 3-5 minutes in duration. Then the challenge is how to assemble these – I have ideas but not enough time.

One of the ideas raised today is that we are seeing the rise of the digital native, a new type of human acclimatised to a short gratification loop, multi-tasking, and a non-linear mode of learning. I must be honest and say that everything I’ve read on the multi-tasking aspect, at least, leads me to believe that this new generation don’t multi-task any better than anyone else did. If they do two things, then they do them more slowly and don’t achieve the same depth: there’s no shortage of research work on this, and given the limits of working memory and cognition this makes a great deal of sense. Please note, I’m not saying that Homo Multiplexor can’t emerge, it’s just that I have not yet seen any strong scientific evidence to back up the anecdotes. I’m perfectly willing to believe that default searching activities have changed (storing ways of searching rather than the information) because that is a logical way to reduce cognitive load, but I am yet to see strong evidence that my students can do two things at once well and without any loss of time. Either working memory has completely changed, which we should be able to test, or we risk confusing the appearance of doing two things at once with actually doing two things at once.

This is one of those situations that, as one of my colleagues observed, leaves us in that difficult position of being told, with great certainty, about a given student (often someone’s child) who can achieve great things while simultaneously watching TV and playing WoW. Again, I do not rule out the possibility of a significant change in humanity (we’re good at it) but I have often seen that familiar tight smile and the noncommittal nod as someone doesn’t quite acknowledge that your child is somehow the spearhead of a new parallelised human genus.

It’s difficult sometimes to express ideas like this. Compare this to the numbers I cited above. Everyone who reads this will look at those numbers and, while they will think many things, they are unlikely to think “I don’t believe that”. Yet I know that there are people who have read this and immediately snorted (or the equivalent) because they frankly disbelieve me on the multi-tasking, with no more or less hard evidence than that supporting the numbers. I’m actually expecting some comments on this one because the notion of the increasing ability of young people to multitask is so entrenched. If there is a definitive set of work supporting this, then I welcome it. The only problem is that all I can find supports the original work on working memory and associated concepts – there are only so many things you can focus on and beyond that you might be able to function but not at much depth. (There are exceptions, of course, but the 0.1% of society do not define the rule.)

The numbers are pasted straight out of my Google analytics for the learning materials I put up – yet you have no more reason to believe them than if I said “83% of internet statistics are made up”, which is a made-up statistic. (If it is true, it is accidentally true.) We see again one of the great challenges in education: numbers are convincing, evidence that contradicts anecdote is often seen as wrong, and finding evidence in the first place can be hard.

One more day of conference tomorrow! I can only wonder what we’ll be exposed to.