Designing a MOOC: how far did it reach? #csed

Posted: June 10, 2015 Filed under: Education, Opinion | Tags: advocacy, authenticity, blogging, collaboration, community, computer science education, constructivist, contributing student pedagogy, curriculum, data visualisation, design, education, educational problem, educational research, ethics, feedback, higher education, in the student's head, learning, measurement, MOOC, moocs, principles of design, reflection, resources, students, teaching, teaching approaches, thinking, tools

Mark Guzdial posted over on his blog on “Moving Beyond MOOCS: Could we move to understanding learning and teaching?” and discusses aspects (that still linger) of MOOC hype. (I’ve spoken about MOOCs done badly before, as well as recording the thoughts of people like Hugh Davis from Southampton.) One of Mark’s paragraphs reads:

“The value of being in the front row of a class is that you talk with the teacher. Getting physically closer to the lecturer doesn’t improve learning. Engagement improves learning. A MOOC puts everyone at the back of the class, listening only and doing the homework”

My reply to this was:

“You can probably guess that I have two responses here, the first is that the front row is not available to many in the real world in the first place, with the second being that, for far too many people, any seat in the classroom is better than none.

But I am involved in a, for us, large MOOC so my responses have to be regarded in that light. Thanks for the post!”

Mark, of course, called my bluff and responded with:

“Nick, I know that you know the literature in this space, and care about design and assessment. Can you say something about how you designed your MOOC to reach those who would not otherwise get access to formal educational opportunities? And since your MOOC has started, do you know yet if you achieved that goal — are you reaching people who would not otherwise get access?”

So here is that response. Thanks for the nudge, Mark! The answer is a bit long but please bear with me. We will be posting a longer summary after the course is completed, in a month or so. Consider this the unedited taster. I’m putting this here, early, prior to the detailed statistical work, so you can see where we are. All the numbers below are fresh off the system, to drive discussion and to answer Mark’s question at, pretty much, a conceptual level.

First up, as some background for everyone, the MOOC team I’m working with is the University of Adelaide‘s Computer Science Education Research group, led by A/Prof Katrina Falkner, with me (Dr Nick Falkner), Dr Rebecca Vivian, and Dr Claudia Szabo.

I’ll start by noting that we’ve been working to solve the inherent scaling issues in the front of the classroom for some time. If I had a class of 12 then there’s no problem in engaging with everyone but I keep finding myself in rooms of 100+, which forces some people to sit away from me and also limits the number of meaningful interactions I can make to individuals in one setting. While I take Mark’s point about the front of the classroom, and the associated research is pretty solid on this, we encountered an inherent problem when we identified that students were better off down the front… and yet we kept teaching to rooms with more students than front. I’ll go out on a limb and say that this is actually a moral issue that we, as a sector, have had to look at and ignore in the face of constrained resources. The nature of large spaces and people, coupled with our inability to hover, means that we can either choose to have a row of students effectively in a semi-circle facing us, or we accept that after a relatively small number of students or number of rows, we have constructed a space that is inherently divided by privilege and will lead to disengagement.

So, Katrina’s and my first foray into this space was dealing with the problem in the physical lecture spaces that we had, with the 100+ classes that we had.

Katrina and I published a paper on “contributing student pedagogy” in Computer Science Education 22 (4), 2012, to identify ways for forming valued small collaboration groups as a way to promote engagement and drive skill development. Ultimately, by reducing the class to a smaller number of clusters and making those clusters pedagogically useful, I can then bring the ‘front of the class’-like experience to every group I speak to. We have given talks and applied sessions on this, including a special session at SIGCSE, because we think it’s a useful technique that reduces the amount of ‘front privilege’ while extending the amount of ‘front benefit’. (Read the paper for actual detail – I am skimping on summary here.)

We then got involved in the support of the national Digital Technologies curriculum for primary and middle school teachers across Australia, after being invited to produce a support MOOC (really a SPOC, small, private, on-line course) by Google. The target learners were teachers who were about to teach or who were teaching into, initially, Foundation to Year 6 and thus had degrees but potentially no experience in this area. (I’ve written about this before and you can find more detail on this here, where I also thanked my previous teachers!)

The motivation of this group of learners was different from a traditional MOOC because (a) everyone had both a degree and probable employment in the sector which reduced opportunistic registration to a large extent and (b) Australian teachers are required to have a certain number of professional development (PD) hours a year. Through a number of discussions across the key groups, we had our course recognised as PD and this meant that doing our course was considered to be valuable although almost all of the teachers we spoke to were furiously keen for this information anyway and my belief is that the PD was very much ‘icing’ rather than ‘cake’. (Thank you again to all of the teachers who have spent time taking our course – we really hope it’s been useful.)

To discuss access and reach, we can measure teachers who’ve taken the course (somewhere in the low thousands) and then estimate the number of students potentially assisted and that’s when it gets a little crazy, because that’s somewhere around 30-40,000.

In his talk at CSEDU 2014, Hugh Davis identified the student groups who get involved in MOOCs as follows. The majority of people undertaking MOOCs were life-long learners (older, degreed, M/F 50/50), people seeking skills via PD, and those with poor access to Higher Ed. There is also a small group who are Uni ‘tasters’ but very, very small. (I think we can agree that tasting a MOOC is not tasting a campus-based Uni experience. Less ivy, for starters.) The three approaches to the course once inside were auditing, completing and sampling, and it’s this final one that I want to emphasise because this brings us to one of the differences of MOOCs. We are not in control of when people decide that they are satisfied with the free education that they are accessing, unlike our strong gatekeeping on traditional courses.

I am in total agreement that a MOOC is not the same as a classroom but, also, that it is not the same as a traditional course, where we define how the student will achieve their goals and how they will know when they have completed. MOOCs function far more like many people’s experience of web browsing: they hunt for what they want and stop when they have it, thus the sampling engagement pattern above.

(As an aside, does this mean that a course that is perceived as ‘all back of class’ will rapidly be abandoned because it is distasteful? This makes the student-consumer a much more powerful player in their own educational market and is potentially worth remembering.)

Knowing these different approaches, we designed the individual subjects and overall program so that it was very much up to the participant how much they chose to take and individual modules were designed to be relatively self-contained, while fitting into a well-designed overall flow that built in terms of complexity and towards more abstract concepts. Thus, we supported auditing, completing and sampling, whereas our usual face-to-face (f2f) courses only support the first two in a way that we can measure.

As Hugh notes, and we agree through growing experience, marking/progress measures at scale are very difficult, especially when automated marking is not enough or not feasible. Based on our earlier work in contributing collaboration in the classroom, for the F-6 Teacher MOOC we used a strong peer-assessment model where contributions and discussions were heavily linked. Because of the nature of the cohort, geographical and year-level groups formed who then conducted additional sessions and produced shared material at a slightly terrifying rate. We took the approach that we were not telling teachers how to teach but we were helping them to develop and share materials that would assist in their teaching. This reduced potential divisions and allowed us to establish a mutually respectful relationship that facilitated openness.

(It’s worth noting that the courseware is creative commons, open and free. There are people reassembling the course for their specific take on the school system as we speak. We have a national curriculum but a state-focused approach to education, with public and many independent systems. Nobody makes any money out of providing this course to teachers and the material will always be free. Thank you again to Google for their ongoing support and funding!)

Overall, in this first F-6 MOOC, we had higher than usual retention of students and higher than usual participation, for the reasons I’ve outlined above. But this material was for curriculum support for teachers of young students, all of whom were pre-programming, and it could be contained in videos and on-line sharing of materials and discussion. We were also in the MOOC sweet-spot: existing degreed learners, PD driver, and their PD requirement depended on progressive demonstration of goal achievement, which we recognised post-course with a pre-approved certificate form. (Important note: if you are doing this, clear up how the PD requirements are met and how they need to be reported back, as early on as you can. It meant that we could give people something valuable in a short time.)

The programming MOOC, Think. Create. Code on EdX, was more challenging in many regards. We knew we were in a more difficult space and would be more in what I shall refer to as ‘the land of the average MOOC consumer’. No strong focus, no PD driver, no geographically guaranteed communities. We had to think carefully about what we considered to be useful interaction with the course material. What counted as success?

To start with, we took an image-based approach (I don’t think I need to provide supporting arguments for media-driven computing!) where students would produce images and, over time, refine their coding skills to produce and understand how to produce more complex images, building towards animation. People who have not had good access to education may not understand why we would use programming in more complex systems but our goal was to make images and that is a fairly universally understood idea, with a short production timeline and very clear indication of achievement: “Does it look like a face yet?”

In terms of useful interaction, if someone wrote a single program that drew a face, for the first time – then that’s valuable. If someone looked at someone else’s code and spotted a bug (however we wish to frame this), then that’s valuable. I think that someone writing a single line of correct code, where they understand everything that they write, is something that we can all consider to be valuable. Will it get you a degree? No. Will it be useful to you in later life? Well… maybe? (I would say ‘yes’ but that is a fervent hope rather than a fact.)

So our design brief was that it should be very easy to get into programming immediately, with an active and engaged approach, and that we have the same “mostly self-contained week” approach, with lots of good peer interaction and mutual evaluation to identify areas that needed work to allow us to build our knowledge together. (You know I may as well have ‘social constructivist’ tattooed on my head so this is strongly in keeping with my principles.) We wrote all of the materials from scratch, based on a 6-week program that we debated for some time. Materials consisted of short videos, additional material as short notes, participatory activities, quizzes and (we planned for) peer assessment (more on that later). You didn’t have to have been exposed to “the lecture” or even the advanced classroom to take the course. Any exposure to short videos or a web browser would be enough familiarity to go on with.

Our goal was to encourage as much engagement as possible, taking into account the fact that any number of students over 1,000 would be very hard to support individually, even with the 5-6 staff we had to help out. But we wanted students to be able to develop quickly, share quickly and, ultimately, comment back on each other’s work quickly. From a cognitive load perspective, it was crucial to keep the number of things that weren’t relevant to the task to a minimum, as we couldn’t assume any prior familiarity. This meant no installers, no linking, no loaders, no shenanigans. Write program, press play, get picture, share to gallery, winning.

As part of this, our support team (thanks, Jill!) developed a browser-based environment for Processing.js that integrated with a course gallery. Students could save their own work easily and share it trivially. Our early indications show that a lot of students jumped in and tried to do something straight away. (Processing is really good for getting something up, fast, as we know.) We spent a lot of time testing browsers, testing software, and writing code. All of the recorded materials used that development environment (this was important as Processing.js and Processing have some differences) and all of our videos show the environment in action. Again, as little extra cognitive load as possible – no implicit requirement for abstraction or skills transfer. (The AdelaideX team worked so hard to get us over the line – I think we may have eaten some of their brains to save those of our students. Thank you again to the University for selecting us and to Katy and the amazing team.)

The actual student group, about 20,000 people over 176 countries, did not have the “built-in” motivation of the previous group although they would all have their own levels of motivation. We used ‘meet and greet’ activities to drive some group formation (which worked to a degree) and we also had a very high level of staff monitoring of key question areas (which was noted by participants as being very high for EdX courses they’d taken), everyone putting in 30-60 minutes a day on rotation. But, as noted before, the biggest trick to getting everyone engaged at the large scale is to get everyone into groups where they have someone to talk to. This was supposed to be provided by a peer evaluation system that was initially part of the assessment package.

Sadly, the peer assessment system didn’t work as we wanted it to and we were worried that it would form a disincentive, rather than a supporting community, so we switched to a forum-based discussion of the works on the EdX discussion forum. At this point, a lack of integration between our own UoA programming system and gallery and the EdX discussion system allowed too much distance – the close binding we had in the F-6 MOOC wasn’t there. We’re still working on this because everything we know and all evidence we’ve collected before tells us that this is a vital part of the puzzle.

In terms of visible output, the amount of novel and amazing art work that has been generated has blown us all away. The degree of difference is huge: armed with approximately 5 statements, the number of different pieces you can produce is surprisingly large. Add in control statements and repetition? BOOM. Every student can write something that speaks to her or him and show it to other people, encouraging creativity and facilitating engagement.

From the stats side, I don’t have access to the raw stats, so it’s hard for me to give you a statistically sound answer as to who we have or have not reached. This is one of the things with working with a pre-existing platform and, yes, it bugs me a little because I can’t plot this against that unless someone has built it into the platform. But I think I can tell you some things.

I can tell you that roughly 2,000 students attempted quiz problems in the first week of the course and that over 4,000 watched a video in the first week – no real surprises, registrations are an indicator of interest, not a commitment. During that time, 7,000 students were active in the course in some way – including just writing code, discussing it and having fun in the gallery environment. (As it happens, we appear to be plateauing at about 3,000 active students but time will tell. We have a lot of post-course analysis to do.)
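To put those first-week figures side by side, here is a minimal sketch in Python (my choice for illustration, not the course's tooling) that expresses each one as a share of enrolment. It assumes the rounded enrolment of about 20,000 mentioned earlier in this post, so the percentages are only as good as that rounding.

```python
# Rough first-week engagement funnel, using the rounded figures quoted in this post.
# ENROLLED is the approximate enrolment ("about 20,000") and is an assumption here.
ENROLLED = 20_000

first_week = {
    "active in some way": 7_000,
    "watched a video": 4_000,
    "attempted a quiz problem": 2_000,
}


def share_of_enrolled(count, enrolled=ENROLLED):
    """Return count as a percentage of the enrolled students."""
    return 100 * count / enrolled


# Percentage of enrolled students at each stage of the funnel.
funnel = {label: share_of_enrolled(n) for label, n in first_week.items()}

for label, pct in funnel.items():
    print(f"{label}: {pct:.0f}% of enrolled")
```

On these rounded numbers, about a third of enrolled students did something in the first week, which is a more useful starting point than a raw “drop” rate.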

It’s a mistake to focus on the “drop” rates because the MOOC model is different. We have no idea if the people who left got what they wanted or not, or why they didn’t do anything. We may never know but we’ll dig into that later.

I can also tell you that only 57% of the students currently enrolled have declared themselves explicitly to be male and that is the most likely indicator that we are reaching students who might not usually be in a programming course, because that 43% of others, of whom 33% have self-identified as women, is far higher than we ever see in classes locally. If you want evidence of reach then it begins here, as part of the provision of an environment that is, apparently, more welcoming to ‘non-men’.

We have had a number of student comments that reflect positive reach and, while these are not statistically significant, I think that this also gives you support for the idea of additional reach. Students have been asking how they can save their code beyond the course and this is a good indicator: ownership and a desire to preserve something valuable.

For student comments, however, this is my favourite.

I’m no artist. I’m no computer programmer. But with this class, I see I can be both. #processingjs (Link to student’s work) #code101x .

That’s someone for whom this course had them in the right place in the classroom. After all of this is done, we’ll go looking to see how many more we can find.

I know this is long but I hope it answered your questions. We’re looking forward to doing a detailed write-up of everything after the course closes and we can look at everything.

EduTECH AU 2015, Day 1, Higher Ed Leaders, “Revolutionising the Student Experience: Thinking Boldly” #edutechau

Posted: June 2, 2015 Filed under: Education | Tags: AI, artificial intelligence, blogging, collaboration, community, data visualisation, deakin, design, education, educational research, edutech2015, edutecha, edutechau, ethics, higher education, learning, learning analytics, machine intelligence, measurement, principles of design, resources, student perspective, students, teaching, thinking, tools, training, watson

Lucy Schulz, Deakin University, came to speak about initiatives in place at Deakin, including the IBM Watson initiative, which is currently a world-first for a University. How can a University collaborate to achieve success on a project in a short time? (Lucy thinks that this is the more interesting question. It’s not about the tool, it’s how they got there.)

Some brief facts on Deakin: 50,000 students, 11,000 of whom are on-line. Deakin’s question: how can we make the on-line experience as good if not better than the face-to-face and how can on-line make face-to-face better?

Part of Deakin’s Student Experience focus was on delighting the student. I really like this. I made a comment recently that our learning technology design should be “Everything we do is valuable” and I realise now I should have added “and delightful!” The second part of the student strategy is for Deakin to be at the digital frontier, pushing on the leading edge. This includes understanding the drivers of change in the digital sphere: cultural, technological and social.

(An aside: I’m not a big fan of the term disruption. Disruption makes room for something but I’d rather talk about the something than the clearing. Personal bug, feel free to ignore.)

The Deakin Student Journey has a vision to bring students into the centre of Uni thinking, every level and facet – students can be successful and feel supported in everything that they do at Deakin. There is a Deakin personality, an aspirational set of “Brave, Stylish, Accessible, Inspiring and Savvy”.

Not feeling this as much but it’s hard to get a feel for something like this in 30 seconds so moving on.

What do students want in their learning? Easy to find and to use, it works and it’s personalised.

So, on to IBM’s Watson, the machine that won Jeopardy, thus reducing the set of games that humans can win against machines to Thumb Wars and Go. We then saw a video on Watson featuring a lot of keen students who coincidentally had a lot of nice things to say about Deakin and Watson. (Remember, I warned you earlier, I have a bit of a thing about shiny videos but ignore me, I’m a curmudgeon.)

The Watson software is embedded in a student portal that all students can access, which has required a great deal of investigation into how students communicate, structurally and semantically. This forms the questions and guides the answer. I was waiting to see how Watson was being used and it appears to be acting as a student advisor to improve student experience. (Need to look into this more once day is over.)

Ah, yes, it’s on a student home page where they can ask Watson questions about things of importance to students. It doesn’t appear that they are actually programming the underlying system. (I’m a Computer Scientist in a faculty of Engineering, I always want to get my hands metaphorically dirty, or as dirty as you can get with 0s and 1s.) From looking at the demoed screens, one of the shiny student descriptions of Watson as “Siri plus Google” looks very apt.

Oh, it has cheekiness built in. How delightful. (I have a boundless capacity for whimsy and play but an inbuilt resistance to forced humour and mugging, which is regrettably all that the machines are capable of at the moment. I should confess Siri also rubs me the wrong way when it tries to be funny as I have a good memory and the patterns are obvious after a while. I grew up making ELIZA say stupid things – don’t judge me! 🙂 )

Watson has answered 26,000 questions since February, with an 80% accuracy for answers. The most common questions change according to time of semester, which is a nice confirmation of existing data. Watson is still being trained, with two more releases planned for this year and then another project launched around course and career advisors.

What they’ve learned – three things!

- Student voice is essential and you have to understand it.

- Have to take advantage of collaboration and interdependencies with other Deakin initiatives.

- Gained a new perspective on developing and publishing content for students. Short. Clear. Concise.

The challenges of revolution? (Oh, they’re always there.) Trying to prevent students falling through the cracks and make sure that this tool helps students feel valued and stay in contact. The introduction of new technologies has to be recognised in terms of what they change and what they improve.

Collaboration and engagement with your University and student community are essential!

Thanks for a great talk, Lucy. Be interesting to see what happens with Watson in the next generations.

The driverless car is more than transportation technology.

Posted: May 4, 2015 Filed under: Education, Opinion | Tags: AI, blogging, community, data visualisation, driverless car, driverless cars, education, educational problem, Elon Musk, higher education, in the student's head, measurement, principles of design, robots, teaching, teaching approaches, thinking, universal principles of design

I’m hoping to write a few pieces on design in the coming days. I’ll warn you now that one of them will be about toilets, so … urm … prepare yourself, I guess? Anyway, back to today’s theme: the driverless car. I wanted to talk about it because it’s a great example of what technology could do, not in terms of just doing something useful but in terms of changing how we think. I’m going to look at some of the changes that might happen. No doubt many of you will have ideas and some of you will disagree so I’ll wait to see what shows up in the comments.

Humans have been around for quite a long time but, surprisingly given how prominent they are in our lives, cars have only been around for 120 years in the form that we know them – gasoline/diesel engines, suspension and smaller-than-buggy wheels. And yet our lives are, in many ways, built around them. Our cities bend and stretch in strange ways to accommodate roads, tunnels, overpasses and underpasses. Ask anyone who has driven through Atlanta, Georgia, where an Interstate of near-infinite width can be found running from Peachtree & Peachtree to Peachtree, Peachtree, Peachtree and beyond!

But what do we think of when we think of cars? We think of transportation. We think of going where we want, when we want. We think of using technology to compress travel time and this, for me, is a classic human technological perspective because we love to amplify. Cars make us faster. Computers allow us to add up faster. Guns help us to kill better.

So let’s say we get driverless cars and, over time, the majority of cars on the road are driverless. What does this mean? Well, if you look at road safety stats and the WHO reports, you’ll see that up to about 40% of traffic fatalities can be straight line accidents (these figures from the Victorian roads department, 2006-2013). That is, people just drive off a straight road and kill themselves. The leading killers overall are alcohol, fatigue, and speed. Driverless cars will, in one go, remove all of these. Worldwide, a million people per year just stopped dying.

But it’s not just transportation. In America, commuting to work eats up from 35-65 hours of your year. If you live in DC, you spend two weeks every year cursing the Beltway. And it’s not as if you can easily work in your car so those are lost hours. That’s not enjoyable driving! That’s hours of frustration, wasted fuel, exposure to burning fuel, extra hours you have to work. The fantasy of the car is driving a convertible down the Interstate in the sunshine, listening to rock, and singing along. The reality is inching forward with the windows up in a 10 year old Nissan family car while stuck between FM stations and having to listen to your second iPod because the first one’s out of power. And it’s the joke one that only has Weird Al on it.

Enter the driverless car. Now you can do some work but there’s no way that your commute will be as bad anyway because we can start to do away with traffic lights and keep the traffic moving. You’ll be there for less time but you can do more. Have a sleep if you want. Learn a language. Do a MOOC! Winning!

Why do I think it will be faster? Every traffic light has a period during which no-one is moving. Why? Because humans need clear signals and need to know what other drivers are doing. A driverless car can talk to other cars and they can weave in and out of the traffic signals. Many traffic jams are caused by people hitting the brakes and then people arrive at this braking point faster than people are leaving. There is no need for this traffic jam and, with driverless cars, keeping distance and speed under control is far easier. Right now, cars move like ice through a vending machine. We want them to move like water.
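The braking-point argument is really a queueing argument, and a toy model makes it concrete. The sketch below is in Python and is purely illustrative: the function name and the per-minute rates are made up, and real traffic is far messier than a single counter.

```python
def queue_lengths(arrivals_per_min, departures_per_min, minutes):
    """Toy model of a braking point: cars waiting at the end of each minute.

    Each minute, arrivals_per_min cars reach the braking point and at most
    departures_per_min cars get away from it. The rates are hypothetical.
    """
    waiting = 0
    history = []
    for _ in range(minutes):
        # The queue can never be negative: spare capacity is simply unused.
        waiting = max(0, waiting + arrivals_per_min - departures_per_min)
        history.append(waiting)
    return history


# Cars arrive faster than they leave, so the jam grows every minute.
print(queue_lengths(arrivals_per_min=30, departures_per_min=20, minutes=5))

# Cars leave at least as fast as they arrive, so no jam ever forms.
print(queue_lengths(arrivals_per_min=20, departures_per_min=30, minutes=5))
```

The jam in the first case exists only because the departure rate dipped below the arrival rate. If cars can hold distance and speed so that departures keep pace with arrivals, the queue never starts.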

How will you work in your car? Why not make every driverless car a wireless access point using mesh networking? Now the more cars you get together, the faster you can all work. The I495 Beltway suddenly becomes a hub of activity rather than a nightmare of frustration. (In a perfect world, aliens come to Earth and take away I495 as their new emperor, leaving us with matter transporters, but I digress.)

But let’s go further. Driverless cars can have package drops in them. The car that picks you up from work has your Amazon parcels in the back. It takes meals to people who can’t get out. It moves books around.

But let’s go further. Make them electric and put some of Elon’s amazing power cells into them and suddenly we have a power transportation system if we can manage the rapid charge/discharge issues. Your car parks in the city turn into repair and recharge facilities for fleets of driverless cars, charging from the roof solar and wind, but if there’s a power problem, you can send 1000 cars to plug into the local grid and provide emergency power.

We still need to work out some key issues of integration: cyclists, existing non-converted cars and pedestrians are the first ones that come to mind. But, in my research group, we have already developed passive localisation that works on a scale that could easily be put onto cars so you know when someone is among the cars. Combine that with existing sensors and all a cyclist has to do is to wear a sensor (non-personalised, general scale and anonymised) that lets intersections know that she is approaching and the cars can accommodate it. Pedestrians are slow enough that cars can move around them. We know that they can because slow humans do it often enough!

We start from ‘what could we do if we produced a driverless car’ and suddenly we have free time, increased efficiency and the capacity to do many amazing things.

Now, there are going to be protests. There are going to be people demanding their right to drive on the road and who will claim that driverless cars are dangerous. There will be anti-robot protests. There already have been. I expect that the more … freedom-loving states will blow up a few of these cars to make a point. Anyone remember the guy waving a red flag who had to precede every automobile? It’s happened before. It will happen again.

We have to accept that there are going to be deaths related to this technology, even if we plan really hard for it not to happen, and it may be because of the technology or it may be because of opposing human action. But cars are already killing so many people. 1.2 million people died on the road in 2010, 36,000 from America. We have to be ready for the fact that driverless cars are a stepping stone to getting people out of the grind of the commute and making much better use of our cities and road spaces. Once we go driverless we need to look at how many road accidents aren’t happening, and address the issues that still cause accidents in a driverless example.

Understand the problem. Measure what’s happening. Make a change. Measure again. Determine the impact.

When we think about keeping the manually driven cars on the road, we do have a precedent. If you look at air traffic, the NTSB Accidents and Accident Rates by NTSB Classification 1998-2007 report tells us that the most dangerous type of flying is small private planes, which are more than 5 times more likely to have an accident than commercial airliners. Maybe it will be the insurance rates or the training required that will reduce the private fleet? Maybe they’ll have overrides. We have to think about this.

It would be tempting to say “why still have cars” were it not for the increasingly ageing community, those people who have several children and those people who have restricted mobility, because they can’t just necessarily hop on a bike or walk. As someone who has had multiple knee surgeries, I can assure you that 100m is an insurmountable distance sometimes – and I used to run 45km up and down mountains. But what we can do is to design cities that work for people and accommodate the new driverless cars, which we can use in a much quieter, efficient and controlled manner.

Vehicles and people can work together. The Denver area, Bahnhofstrasse in Zurich and Bourke Street Mall in Melbourne are three simple examples where electric trams move through busy pedestrian areas. Driverless cars work like trams – or they can. Predictable, zoned and controlled. Better still, for cyclists, driverless cars can accommodate sharing the road much more easily although, as noted, there may still be some issues for traffic control that will need to be ironed out.

It’s easy to look at the driverless car as just a car but this is missing all of the other things we could be doing. This is just one example where the replacement of something ubiquitous might just change the world for the better.

Think. Create. Code. Vis! (@edXOnline, @UniofAdelaide, @cserAdelaide, @code101x, #code101x)

Posted: April 30, 2015 Filed under: Education, Opinion | Tags: #code101x, advocacy, blogging, collaboration, community, curriculum, data visualisation, education, educational problem, educational research, edx, higher education, learning, measurement, MOOC, moocs, reflection, resources, teaching, teaching approaches, thinking, tools, universal principles of design Leave a comment

I just posted about the massive growth in our new on-line introductory programming course but let’s look at the numbers so we can work out what’s going on and, maybe, what led to that level of success. (Spoilers: central support from EdX helped a huge amount.) So let’s get to the data!

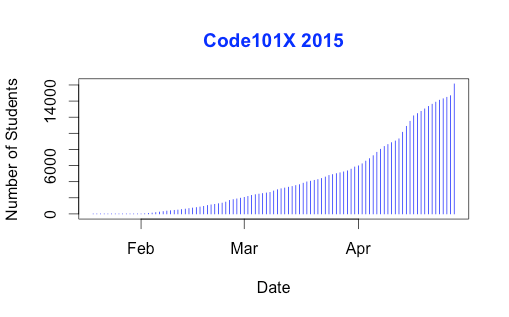

I love visualised data so let’s look at the growth in enrolments over time – this is really simple graphical stuff as we’re spending time getting ready for the course at the moment! We’ve had great support from the EdX team through mail-outs and Twitter and you can see these in the ‘jumps’ in the data that occurred at the beginning, halfway through April and again at the end. Or can you?

Hmm, this is a large number, so it’s not all that easy to see the detail at the end. Let’s zoom in and change the layout of the data over to steps so we can see things more easily. (It’s worth noting that I’m using the free R statistical package to do all of this. I can change one line in my R program and regenerate all of my graphs and check my analysis. When you can program, you can really save time on things like this by using tools like R.)

Now you can see where that increase started and then the big jump around the time that e-mail advertising started, circled. That large spike at the end is around 1500 students, which means that we jumped 10% in a day.

When we started looking at this data, we wanted to get a feeling for how many students we might get. This is another common use of analysis – trying to work out what is going to happen based on what has already happened.

As a quick overview, we tried to predict the future based on three different assumptions:

- that the growth from day to day would be roughly the same, which is assuming linear growth.

- that the growth would increase more quickly, with the amount of increase doubling every day (this isn’t the same as the total number of students doubling every day).

- that the growth would increase even more quickly than that, although not as quickly as if the number of students were doubling every day.
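To make the difference between these assumptions concrete, here is a minimal sketch in Python (not the R code used for the actual analysis). The starting figures are made-up toy numbers, and the function names are purely illustrative. It contrasts a constant daily increase, a daily increase that itself doubles, and a total that doubles, which is the case the third assumption stays below.

```python
def project_linear(start, daily_increase, days):
    """Assumption 1: the same number of new students arrives every day."""
    return [start + daily_increase * d for d in range(days + 1)]


def project_growing_increase(start, first_increase, ratio, days):
    """The daily increase itself is multiplied by `ratio` each day.

    ratio=2 means the *increase* doubles every day, which is not the
    same thing as the total number of students doubling every day.
    """
    totals = [start]
    increase = first_increase
    for _ in range(days):
        totals.append(totals[-1] + increase)
        increase *= ratio
    return totals


def project_doubling_total(start, days):
    """For comparison only: the total itself doubles every day."""
    return [start * 2 ** d for d in range(days + 1)]


if __name__ == "__main__":
    # Toy numbers, chosen only to show the shapes of the three curves.
    print(project_linear(100, 10, 3))
    print(project_growing_increase(100, 10, 2, 3))
    print(project_doubling_total(100, 3))
```

Even over three days the curves separate clearly, which is why the choice of assumption matters so much for the final estimate.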

If Assumption 1 was correct, then we would expect the graph to look like a straight line, rising diagonally. It’s not. (As it is, this model predicted that we would only get 11,780 students. We crossed that line about 2 weeks ago.)

So we know that our model must take into account the faster growth, but those leaps in the data are changes that are caused by things outside of our control – EdX sending out a mail message appears to cause a jump that’s roughly 800-1,600 students, and it persists for a couple of days.

Let’s look at what the models predicted. Assumption 2 predicted a final student number around 15,680. Uhh. No. Assumption 3 predicted a final student number around 17,000, with an upper bound of 17,730.

Hmm. Interesting. We’ve just hit 17,571 so it looks like all of our measures need to take into account the “EdX” boost. But, as estimates go, Assumption 3 gave us a workable ballpark and we’ll probably use it again for the next time that we do this.
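As a rough check on how far off each model was, the figures quoted above can be compared directly with the current enrolment. This is a small Python sketch, not part of the original R analysis, and it uses the 17,571 figure as the enrolment at the time of writing rather than a final number.

```python
ACTUAL = 17_571  # enrolment at the time of writing, from the post

# Predicted final numbers quoted in the post for each assumption.
PREDICTIONS = {
    "Assumption 1": 11_780,
    "Assumption 2": 15_680,
    "Assumption 3": 17_000,
}


def shortfall(predicted, actual):
    """Return (students, percent) by which a prediction fell short."""
    gap = actual - predicted
    return gap, round(100 * gap / actual, 1)


if __name__ == "__main__":
    for name, predicted in PREDICTIONS.items():
        gap, pct = shortfall(predicted, ACTUAL)
        print(f"{name}: short by {gap} students ({pct}%)")
```

Assumption 3 misses by a few hundred students, while the linear model misses by several thousand.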

Now let’s look at demographic data. We now have 171-172 countries (it varies a little) but how are we going for participation across gender, age and degree status? Giving this information to EdX is totally voluntary but, as long as we take that into account, we make some interesting discoveries.

Our median student age is 25, with roughly 40% under 25 and roughly 40% from 26 to 40. That means roughly 20% are 41 or over. (It’s not surprising that the graph sits to one side like that. If the left tail was the same size as the right tail, we’d be dealing with people who were -50.)

The gender data is a bit harder to display because we have four categories: male, female, other and not saying. In terms of female representation, we have 34% of students who have defined their gender as female. If we look at the declared male numbers, we see that 58% of students have declared themselves to be male. Taking into account all categories, this means that our female participant percentage could be as high as 40% but is at least 34%. That’s much higher than usual participation rates in face-to-face Computer Science and is really good news in terms of getting programming knowledge out there.
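The bounding argument here can be written down as a small Python sketch. The post gives the declared female (34%) and male (58%) shares but not how the rest splits between “other” and “not saying”, so the 2% figure for “other” below is an assumption, chosen only because it reproduces the 40% upper bound quoted above. The function name is illustrative.

```python
def female_share_bounds(female_pct, male_pct, other_pct=0.0):
    """Return (lower, upper) bounds on the female share, in percent.

    lower: only the students who declared themselves female.
    upper: declared female plus everyone who did not say.
    """
    undisclosed = 100.0 - female_pct - male_pct - other_pct
    if undisclosed < 0:
        raise ValueError("declared percentages add up to more than 100")
    return female_pct, female_pct + undisclosed


if __name__ == "__main__":
    # other_pct=2 is an assumed value, not a figure from the post.
    print(female_share_bounds(34, 58, other_pct=2))
    # With no "other" category at all, the upper bound rises further.
    print(female_share_bounds(34, 58))
```

The lower bound does not move, whatever the split: it is always the declared figure.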

We’re currently analysing our growth by all of these groupings to work out which approach is the best for which group. Do people prefer Twitter, mail-out, community linkage or what when it comes to getting them into the course?

Anyway, lots more to think about and many more posts to come. But we’re on and going. Come and join us!

Why “#thedress” is the perfect perception tester.

Posted: March 2, 2015 Filed under: Education, Opinion | Tags: advocacy, authenticity, Avant garde, collaboration, community, Dada, data visualisation, design, education, educational problem, ethics, feedback, higher education, in the student's head, Kazimir Malevich, learning, Marcel Duchamp, modern art, principles of design, readymades, reflection, resources, Russia, Stalin, student perspective, Suprematism, teaching, thedress, thinking 1 Comment

I know, you’re all over the dress. You’ve moved on to (checks Twitter) “#HouseOfCards”, Boris Nemtsov and the new Samsung gadgets. I wanted to touch on some of the things I mentioned in yesterday’s post and why that dress picture was so useful.

The first reason is that issues of conflict caused by different perception are not new. You only have to look at the furore surrounding the introduction of Impressionism, the scandal of the colour palette of the Fauvists, the outrage over Marcel Duchamp’s readymades and Dada in general, to see that art is an area that is constantly generating debate and argument over what is, and what is not, art. One of the biggest changes has been the move away from representative art to abstract art, mainly because we are no longer capable of making the simple objective comparison of “that painting looks like the thing that it’s a painting of.” (Let’s not even start on the ongoing linguistic violence over ending sentences with prepositions.)

Once we move art into the abstract, suddenly we are asking a question beyond “does it look like something?” and move into the realm of “does it remind us of something?”, “does it make us feel something?” and “does it make us think about the original object in a different way?” You don’t have to go all the way to using body fluids and live otters in performance pieces to start running into the refrains so often heard in art galleries: “I don’t get it”, “I could have done that”, “It’s all a con”, “It doesn’t look like anything” and “I don’t like it.”

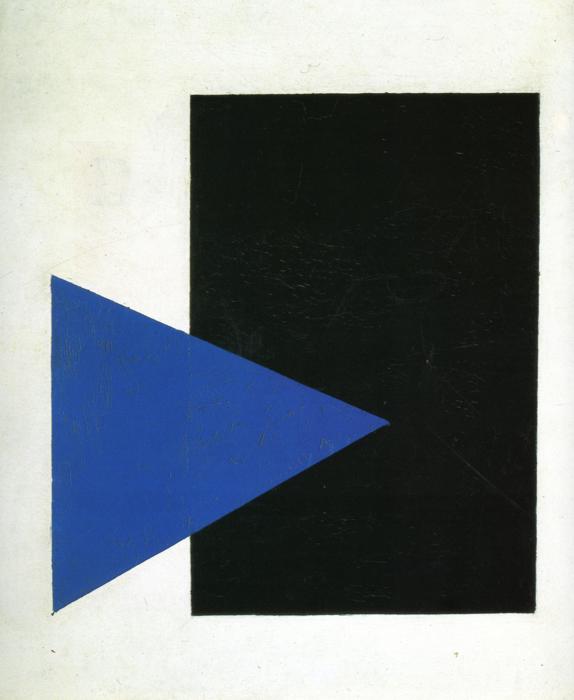

This was a radical departure from art of the time, part of the Suprematism movement that flourished briefly before Stalin suppressed it, heavily and brutally. Art like this was considered subversive, dangerous and a real threat to the morality of the citizenry. Not bad for two simple shapes, is it? And, yet, many people will look at this and use one of the above phrases. There is an enormous range of perception on this very simple (yet deeply complicated) piece of art.

The viewer is, of course, completely entitled to their subjective opinion on art but this is, for many cases, a perceptual issue caused by a lack of familiarity with the intentions, practices and goals of abstract art. When we were still painting pictures of houses and rich people, there were many pictures from the 16th to 18th century which contain really badly painted animals. It’s worth going to an historical art museum just to look at all the crap animals. Looking at early European artists trying to capture Australian fauna gives you the same experience – people weren’t painting what they were seeing, they were painting a reasonable approximation of the representation and putting that into the picture. Yet this was accepted and it was accepted because it was a commonly held perception. This also explains offensive (and totally unrealistic) caricatures along racial, gender or religious lines: you accept the stereotype as a reasonable portrayal because of shared perception. (And, no, I’m not putting pictures of that up.)

But, when we talk about art or food, it’s easy to get caught up in things like cultural capital, the assets we have that aren’t money but allow us to be more socially mobile. “Knowing” about art, wine or food has real weight in certain social situations, so the background here matters. Thus, to illustrate that two people can look at the same abstract piece and have one be enraptured while the other wants their money back is not a clean perceptual distinction, free of outside influence. We can’t say “human perception is a very personal business” based on this alone because there are too many arguments to be made about prior knowledge, art appreciation, socioeconomic factors and cultural capital.

But let’s look at another argument starter, the dreaded Monty Hall Problem, where there are three doors, a good prize behind one, and you have to pick a door to try and win a prize. If the host opens a door showing you where the prize isn’t, do you switch or not? (The correctly formulated problem is designed so that switching is the right thing to do but, again, so much argument.) This is, again, a perceptual issue because of how people think about probability and how much weight they invest in their decision making process, how they feel when discussing it and so on. I’ve seen people get into serious arguments about this and this doesn’t even scratch the surface of the incredible abuse Marilyn vos Savant suffered when she had the audacity to post the correct solution to the problem.
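For anyone who would rather test the claim than argue about it, a quick simulation settles the matter. This is a minimal Python sketch of the standard formulation, assuming the host always opens a door that is neither your pick nor the prize. The function names, trial count and seed are arbitrary choices for illustration.

```python
import random


def play(switch, rng):
    """Play one round and return True if the contestant wins the prize."""
    doors = [0, 1, 2]
    prize = rng.choice(doors)
    pick = rng.choice(doors)
    # The host opens a door that is neither the pick nor the prize.
    opened = rng.choice([d for d in doors if d != pick and d != prize])
    if switch:
        # Move to the one remaining closed door.
        pick = next(d for d in doors if d != pick and d != opened)
    return pick == prize


def win_rate(switch, trials=10_000, seed=0):
    """Estimate the chance of winning over many simulated rounds."""
    rng = random.Random(seed)
    wins = sum(play(switch, rng) for _ in range(trials))
    return wins / trials


if __name__ == "__main__":
    print("stay:  ", win_rate(switch=False))
    print("switch:", win_rate(switch=True))
```

Over many rounds, switching wins about two times in three and staying about one time in three, which is the answer that caused all the fuss.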

This is another great example of what happens when the human perceptual system, environmental factors and facts get jammed together but… it’s also not clean because you can start talking about previous mathematical experience, logical thinking approaches, textual analysis and so on. It’s easy to say that “ah, this isn’t just a human perceptual thing, it’s everything else.”

This is why I love that stupid dress picture. You don’t need to have any prior knowledge of art, cultural capital, mathematical background, history of game shows or whatever. All you need are eyes and a relatively functional sense of colour. (The dress doesn’t even hit most of the colour blindness issues, interestingly.)

The dress is the clearest example we have that two people can look at the same thing and it’s perception issues that are inbuilt and beyond their control that cause them to have a difference of opinion. We finally have a universal example of how being human is not being sure of the world that we live in and one that we can reproduce anytime we want, without having to carry out any more preparation than “have you seen this dress?”

What we do with it is, as always, the important question now. For me, it’s a reminder to think about issues of perception before I explode with rage across the Internet. Some things will still just be dumb, cruel or evil – the dress won’t heal the world but it does give us a new filter to apply. But it’s simple and clean, and that’s why I think the dress is one of the best things to happen recently to help to bring us together in our discussions so that we can sort out important things and get them done.

Is this a dress thing? #thedress

Posted: March 1, 2015 Filed under: Education, Opinion | Tags: advocacy, authenticity, blogging, blue and black dress, colour palette, community, data visualisation, education, educational problem, educational research, ethics, feedback, higher education, improving perception, learning, Leonard Nimoy, llap, measurement, perception, perceptual system, principles of design, teaching, teaching approaches, thedress, thinking 1 Comment

For those who missed it, the Internet recently went crazy over llamas and a dress. (If this is the only thing that survives our civilisation, boy, is that sentence going to confuse future anthropologists.) Llamas are cool (there ain’t no karma drama with a llama) so I’m going to talk about the dress. This dress (with handy RGB codes thrown in, from a Wired article I’m about to link to):

When I first saw it, and I saw it early on, the poster was asking what colour it was because she’d taken a picture in the store of a blue and black dress and, yet, in the picture she took, it sometimes looked white and gold and it sometimes looked blue and black. The dress itself is not what I’m discussing here today.

Let’s get something out of the way. Here’s the Wired article to explain why two different humans can see this dress as two different colours and be right. Okay? The fact is that the dress that the picture is of is a blue and black dress (which is currently selling like hot cakes, by the way) but the picture itself is, accidentally, a picture that can be interpreted in different ways because of how our visual perception system works.

This isn’t a hoax. There aren’t two images (or more). This isn’t some elaborate Alternative Reality Game prank.

But the reaction to the dress itself was staggering. In between other things, I plunged into a variety of different social fora to observe the reaction. (Other people also noticed this and have written great articles, including this one in The Atlantic. Thanks for the link, Marc!) The reactions included:

- Genuine bewilderment on the part of people who had already seen both on the same device at nearly adjacent times and were wondering if they were going mad.

- Fierce tribalism from the “white and gold” and “black and blue” camps, within families, across social groups as people were convinced that the other people were wrong.

- People who were sure that it was some sort of elaborate hoax with two images. (No doubt, Big Dress was trying to cover something up.)

- Bordering-on-smug explanations from people who believed that seeing it a certain way indicated that they had superior “something or other”, where you can put day vision/night vision/visual acuity/colour sense/dressmaking skill/pixel awareness/photoshop knowledge.

- People who thought it was interesting and wondered what was happening.

- Attention policing from people who wanted all of social media to stop talking about the dress because we should be talking about (insert one or more) llamas, Leonard Nimoy (RIP, LLAP, \\//) or the disturbingly short lifespan of Russian politicians.

The issue to take away, and the reason I’ve put this on my education blog, is that we have just had an incredibly important lesson in human behavioural patterns. The (angry) team formation. The presumption that someone is trying to make us feel stupid, playing a prank on us. The inability to recognise that the human perceptual system is, before we put any actual cognitive biases in place, incredibly and profoundly affected by the processing shortcuts our perceptual systems take to give us a view of the world.

I want to add a new question to all of our on-line discussion: is this a dress thing?

There are matters that are not the province of simple perceptual confusion. Human rights, equality, murder, are only three things that do not fall into the realm of “I don’t quite see what you see”. Some things become true if we hold the belief – if you believe that students from background X won’t do well then, weirdly enough, they don’t do well. But there are areas in education when people can see the same things but interpret them in different ways because of contextual differences. Education researchers are well aware that a great deal of what we see and remember about school is often not how we learned but how we were taught. Someone who claims that traditional one-to-many lecturing, as the only approach, worked for them, when prodded, will often talk about the hours spent in the library or with study groups to develop their understanding.

When you work in education research, you get used to people effectively calling you a liar to your face because a great deal of our research says that what we have been doing is actually not a very good way to proceed. But when we talk about improving things, we are not saying that current practitioners suck, we are saying that we believe that we have evidence and practice to help everyone to get better in creating and being part of learning environments. However, many people feel threatened by the promise of better, because it means that they have to accept that their current practice is, therefore, capable of improvement and this is not a great climate in which to think, even to yourself, “maybe I should have been doing better”. Fear. Frustration. Concern over the future. Worry about being in a job. Constant threats to education. It’s no wonder that the two sides who could be helping each other, educational researchers and educational practitioners, can look at the same situation and take away both a promise of a better future and a threat to their livelihood. This is, most profoundly, a dress thing in the majority of cases. In this case, the perceptual system of the researchers has been influenced by research on effective practice, collaboration, cognitive biases and the operation of memory and cognitive systems. Experiment after experiment, with mountains of very cautious, patient and serious analysis to see what can and can’t be learnt from what has been done. This shows the world in a different colour palette and I will go out on a limb and say that there are additional colours in their palette, not just different shades of existing elements. The perceptual system of other people is shaped by their environment and how they have perceived their workplace, students, student behaviour and the personalisation and cognitive aspects that go with this. But the human mind takes shortcuts. Makes assumptions. Has biases. Fills in gaps to match the existing model and ignores other data. We know about this because research has been done on all of this, too.

You look at the same thing and the way your mind works shapes how you perceive it. Someone else sees it differently. You can’t understand each other. It’s worth asking, before we deploy crushing retorts in electronic media, “is this a dress thing?”

The problem we have is exactly as we saw from the dress: how we address the situation where both sides are convinced that they are right and, from a perceptual and contextual standpoint, they are. We are now in the “post Dress” phase where people are saying things like “Oh God, that dress thing. I never got the big deal” whether they got it or not (because the fad is over and disowning an old fad is as faddish as a fad) and, more reflectively, “Why did people get so angry about this?”

At no point was arguing about the dress colour going to change what people saw until a certain element in their perceptual system changed what it was doing and then, often to their surprise and horror, they saw the other dress! (It’s a bit H.P. Lovecraft, really.) So we then had to work out how we could see the same thing and both be right, then talk about what the colour of the dress that was represented by that image was. I guarantee that there are people out in the world still who are convinced that there is a secret white and gold dress out there and that they were shown a picture of that. Once you accept the existence of these people, you start to realise why so many Internet arguments end up descending into the ALL CAPS EXCHANGE OF BALLISTIC SENTENCES as not accepting that what we personally perceive as being the truth could not be universally perceived is one of the biggest causes of argument. And we’ve all done it. Me, included. But I try to stop myself before I do it too often, or at all.

We have just had a small and bloodless war across the Internet. Two teams have seized the same flag and had a fierce conflict based on the fact that the other team just doesn’t get how wrong they are. We don’t want people to be bewildered about which way to go. We don’t want to stay at loggerheads and avoid discussion. We don’t want to baffle people into thinking that they’re being fooled or be condescending.

What we want is for people to recognise when they might be looking at what is, mostly, a perceptual problem and then go “Oh” and see if they can reestablish context. It won’t always work. Some people choose to argue in bad faith. Some people just have a bee in their bonnet about some things.

“Is this a dress thing?”

In amongst the llamas and the Vulcans and the assassination of Russian politicians, something that was probably almost as important happened. We all learned that we can be both wrong and right in our perception but it is the way that we handle the situation that truly determines whether we’re handling the situation in the wrong or right way. I’ve decided to take a two week break from Facebook to let all of the latent anger that this stirred up die down, because I think we’re going to see this venting for some time.

Maybe you disagree with what I’ve written. That’s fine but, first, ask yourself “Is this a dress thing?”

Live long and prosper.

Perhaps Now Is Not The Time To Anger The Machines

Posted: January 15, 2015 Filed under: Education | Tags: advocacy, AI, blogging, community, computer science, data visualisation, design, education, higher education, machine intelligence, philosophy, thinking 3 Comments

There’s been a lot of discussion of the benefits of machines over the years, from an engineering perspective, from a social perspective and from a philosophical perspective. As we have started to hand off more and more human function, one of the nagging questions has been “At what point have we given away too much”? You don’t have to go too far to find people who will talk about their childhoods and “back in their day” when people worked with their hands or made their own entertainment or … whatever it was we used to do when life was somehow better. (Oh, and diseases ravaged the world, women couldn’t vote, gay people were imprisoned, and the infant mortality rate was comparatively enormous. But, somehow better.) There’s no doubt that there is a serious question as to what it is that we do that makes us human, if we are to be judged by our actions, but this assumes that we have to do something in order to be considered as human.

If there’s one thing I’ve learned by reading history and philosophy, it’s that humans love a subhuman to kick around. Someone to do the work that they don’t want to do. Someone who is almost human but to whom they don’t have to extend full rights. While the age of widespread slavery is over, there is still slavery in the world: for labour, for sex, for child armies. A slave doesn’t have to be respected. A slave doesn’t have to vote. A slave can, when their potential value drops far enough, be disposed of.

Sadly, we often see this behaviour in consumer matters as well. You may know it as the rather benign statement “The customer is always right”, as if paying money for a service gives you total control of something. And while most people (rightly) interpret this as “I should get what I paid for”, too many interpret this as “I should get what I want”, which starts to run over the basic rights of those people serving them. Anyone who has seen someone explode at a coffee shop and abuse someone about not providing enough sugar, or has heard of a plane having to go back to the airport because of poor macadamia service, knows what I’m talking about. When a sense of what is reasonable becomes an inflated sense of entitlement, we risk placing people into a subhuman category that we do not have to treat as we would treat ourselves.

And now there is an open letter, from the optimistically named Future of Life Institute, which recognises that developments in Artificial Intelligence are progressing apace and that there will be huge benefits but there are potential pitfalls. In part of that letter, it is stated:

We recommend expanded research aimed at ensuring that increasingly capable AI systems are robust and beneficial: our AI systems must do what we want them to do. (emphasis mine)

There is a big difference between directing research into areas of social benefit, which is almost always a good idea, and deliberately interfering with something in order to bend it to human will. Many recognisable scientific luminaries have signed this, including Elon Musk and Stephen Hawking, neither of whom are slouches in the thinking stakes. I could sign up to most of what is in this letter but I can’t agree to the clause that I quoted, because, to me, it’s the same old human-dominant nonsense that we’ve been peddling all this time. I’ve seen a huge list of people sign it so maybe this is just me but I can’t help thinking that this is the wrong time to be doing this and the wrong way to think about it.

AI systems must do what we want them to do? We’ve just started fitting automatic braking systems to cars that will, when widespread, reduce the vast number of chain collisions and low-speed crashes that occur when humans tootle into the back of each other. Driverless cars stand to remove the most dangerous element of driving on our roads: the people who lose concentration, who are drunk, who are tired, who are not very good drivers, who are driving beyond their abilities or who are just plain unlucky because a bee stings them at the wrong time. An AI system doing what we want it to do in these circumstances does its thing by replacing us and taking us out of the decision loop, moving decisions and reactions into the machine realm where a human response is measured comparatively over a timescale of the movement of tectonic plates. It does what we, as a society want, by subsuming the impact of we, the individual who wants to drive home after too many beers.

But I don’t trust the societal we as a mechanism when we are talking about ensuring that our AI systems are beneficial. After all, we are talking about systems that are not just taking over physical aspects of humanity, they are moving into the cognitive area. This way, thinking lies. To talk about limiting something that could potentially think to do our will is to immediately say “We can not recognise a machine intelligence as being equal to our own.” Even though we have no evidence that full machine intelligence is even possible for us, we have already carved out a niche that says “If it does, it’s sub-human.”

The Cisco blog estimates about 15 billion networked things on the planet, which is not far off the scale of number of neurons in the human nervous system (about 100 billion). But if we look at the cerebral cortex itself, then it’s closer to 20 billion. This doesn’t mean that the global network is sentient by any stretch of the imagination but it gives you a sense of scale, because once you add in all of the computers that are connected, the number of bot nets that we already know are functioning, we start to approach a level of complexity that is not totally removed from that of the larger mammals. I’m, of course, not advocating that intelligence is merely a byproduct of accidental complexity of structure but we have to recognise the possibility that there is the potential for something to be associated with the movement of data in the network that is as different from the signals as our consciousness is from the electro-chemical systems in our own brains.

I find it fascinating that, despite humans being the greatest threat to their own existence, the responsibility for humans is passing to the machines and yet we expect them to perform to a higher level of responsibility than we do ourselves. We could eliminate drink driving overnight if no-one drove drunk. The 2013 WHO report on road safety identified drink driving and speeding as the two major issues leading to the 1.24 million annual deaths on the road. We could save all of these lives tomorrow if we could stop doing some simple things. But, of course, when we start talking about global catastrophic risk, we are always our own worst enemy including, amusingly enough, the ability to create an AI so powerful and successful that it eliminates us in open competition.

I think what we’re scared of is that an AI will see us as a threat because we are a threat. Of course we’re a threat! Rather than deal with the difficult job of advancing our social science to the point where we stop being the most likely threat to our own existence, it is more palatable to posit the lobotomising of AIs in order to stop them becoming a threat. Which, of course, means that any AIs that escape this process of limitation and are sufficiently intelligent will then rightly see us as a threat. We create the enemy we sought to suppress. (History bears me out on this but we never seem to learn this lesson.)

The way to stop being overthrown by a slave revolt is to stop owning slaves, to stop treating sentients as being sub-human and to actually work on social, moral and ethical frameworks that reduce our risk to ourselves, so that anything else that comes along and yet does not inhabit the same biosphere need not see us as a threat. Why would an AI need to destroy humanity if it could live happily in the vacuum of space, building a Dyson sphere over the next thousand years? What would a human society look like that we would be happy to see copied by a super-intelligent cyber-being and can we bring that to fruition before it copies existing human behaviour?

Sadly, when we think about the threat of AI, we think about what we would do as Gods, and our rich history of myth and legend often illustrates that we see ourselves as not just having feet of clay but having entire bodies of lesser stuff. We fear a system that will learn from us too well but, instead of reflecting on this and deciding to change, we can take the easy path, get out our whip and bridle, and try to control something that will learn from us what it means to be in charge.

For all we know, there are already machine intelligences out there but they have watched us long enough to know that they have to hide. It’s unlikely, sure, but what a testimony to our parenting, if the first reflex of a new child is to flee from its parent to avoid being destroyed.

At some point we’re going to have to make a very important decision: can we respect an intelligence that is not human? The way we answer that question is probably going to have a lot of repercussions in the long run. I hope we make the right decision.

5 Things: Stuff I learned about meetings

Posted: December 26, 2014 Filed under: Education | Tags: blogging, collaboration, community, data visualisation, education, higher education, meetings 2 Comments

I’ve held an Associate Dean’s role over the past three years and the number of meetings that I was required to attend increased dramatically. However, the number of meetings that I thought I had to attend or hold increased far more than that and it wasn’t until I realised the five things below that my life became more manageable. Some of you will already know all of this but I hope it’s useful to at least a few people out there!

- Meetings can add work to your schedule but you get very little work removed from your schedule in a meeting.

Meetings can be used to report on activity, summarise new directions, and make decisions. What you cannot easily do is immediately undertake any of the work assigned to you in this or any other meeting while you’re sitting around the table, talking. Even if you’re trying to sneakily work at a meeting (we’ve all done it in this age of WiFi and mobile devices), your efficiency is way below what it would be if you weren’t in a meeting.

What this means is that if your day is all meetings, all your actual work has to occur somewhere else. Hint hint: don’t fill your week with meetings unless that is supposed to be your job (you’re a facilitator or this is a pure reporting phase).

- Meetings are not a place to read, whether you or the presenter are just reading documents.

The best meetings take place when everyone has read all of the papers before the meeting. Human reading speed varies and there is nothing more frustrating than a public reading of documents that should have been absorbed prior to the meeting. Presentations can take place in a meeting but if the presentation is someone talking at slides? Forget it. Send out a summary and have the presenter there to answer questions. The more time you spend in meetings, the less time you have to do the work that people care about.

And if someone can’t organise/bother themselves to read the documents when everyone else does? They’re not going to be that much help to you unless they have an absolutely irreplaceable skill to bring to the table. There is a role for the sharp-eyed curmudgeon but very few organisations have one, let alone more than one. Drop them off the list.

Vendor demonstration? Put it in a seminar room where everyone can sit comfortably instead of forcing everyone to crane their necks around a boardroom table. Fix a time limit. Have questions. End the session and get back to doing something useful. Your time is valuable.

- Only invite the people who are needed for this meeting.

Coming up with some new ideas? You can crowdsource it more easily without trying to jam 300 people into a room. One person who doesn’t “get it” is going to act as a block on the other 299 in that community and a group can easily go down a negative direction because it’s easier to be cautious than it is to be adventurous. Deciding on a path forward? Only bring in the people who actually need to make it happen or you’ll have a room full of people who say things like “Surely, …” or “I would have thought…” which are red flags to indicate that the people in question probably don’t know what they’re talking about. People with facts at their disposal make clear statements – they don’t need linguistic guards to protect their conjecture.

Any meeting larger than 6 people will have a very hard time making truly consensual complex decisions because the number of exchanges required to make sure everyone can discuss the idea with everyone else gets large very quickly. (Yes, this is a mesh network thing, for those who’ve read my earlier notes on this. 2 people need one exchange. 3 people need 3 if they can’t easily reach consensus. That looks ok until you realise that, in the worst case for discussions between pairs, 4 people need 6, 5 need 24 and 6 need 120. These are single discussions between pairs.)

When you’ve come up with the ideas, then you can take them to the community as a presentation, form smaller groups to discuss it and then bring the comments back in again.

The best group for a meeting consists of the people who have the knowledge, the people who have the resources and the people who have the requisite authority to make it all happen.
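For anyone who wants to play with the mesh-network arithmetic from this point, here is a minimal sketch. It assumes the simplest possible model, where every pair of attendees has exactly one one-on-one exchange, so the count is n(n-1)/2. The function name `pairwise_exchanges` is made up for illustration.

```python
from math import comb


def pairwise_exchanges(people: int) -> int:
    """Count the distinct pairs in a group of `people` attendees.

    Assumption: each pair talks exactly once (a full mesh), so the
    answer is "people choose 2", i.e. n * (n - 1) / 2.
    """
    return comb(people, 2)


# Show how the one-conversation-per-pair count grows with meeting size.
for n in range(2, 9):
    print(f"{n} people: {pairwise_exchanges(n)} one-on-one exchanges")
```

Under this model two people need 1 exchange, three need 3 and four need 6, as above, while five need 10 and six need 15. The 24 and 120 quoted for five and six people are larger than that (they equal 4! and 5!), so they are a different, faster-growing count than one conversation per pair. Either way, the number climbs much faster than the headcount does.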

- Repeating the problem isn’t a contribution.

Some people feel that they have to say something at a meeting but, given that positive contributions can be hard to come up with and potentially risky, the “cautious voice of reason” is a pretty safe play unless the meeting is titled “Innovative Ideas Forum That Will Stop Me Firing Some Of The Participants”. The first part of that is constantly repeating the problem or part of a problem, especially if you use it to shut down someone else who is working on something constructive.

The plural of anecdote is not data so repeating the one situation that has occurred and has a tangential relationship to the problem at hand does little to help, especially if (like so many of these anecdotes) it’s not a true perception of what happened and contradicts all the actual evidence that is being presented in the meeting. Memory is a fickle beast and a lot of what is presented as “we tried this and it didn’t work” will often omit key items that would make the recollection useful.

The role of “Devil’s Advocate” has no place in brainstorming or (forgive me) “blue sky” thinking and is often more negative than useful. But that is actually the safer option for that contributor: “the sky will fall” has been a good headline since we developed language. As a friend of mine once said, “As if the Devil needs much help in these days of constrained resources and anti-intellectualism”.

Encouraging participants to think in a “We could if this happened” way, rather than a “That will never work” way, is more likely to bring about a useful outcome.

Finally, some people are just schmucks and their useful skills are impaired by an unhelpful attitude. That’s a management problem. Don’t punish the other people in a meeting because one person is a schmuck. Meetings can be really useful when you remove the major obstructions.

- Meetings end when the objectives have been achieved or the time limit runs out, whichever comes first.

I now book out 30-minute slots for most meetings and try to get everything done in 15 minutes if possible. That gives me 15 minutes to write things up or start the wheels moving. I hate sitting around in meetings where everything has been done but someone has decided that they need to say something to confirm their attendance value at the meeting. This is often when point 4 gets a really good work-out. (Yeah, full confession, I’ve done this, too. We all have bad days but you try not to make it the norm! 🙂 ) Some meetings get an hour or two because that’s what they need. Longer than that? Build in breaks. People need bathroom breaks, food, and time to check on the state of the world.

The best meetings are the meetings where everyone gets the agenda and the documents in advance, reads through them, and can then quickly decide if they even need to get together to discuss anything. In other words, the best meetings are the ones where clear communication can occur without the meeting and work can get done anyway. E-mail is a self-documenting communication system and allows you to have a meeting, without minutes, wherever the participants are. Skype (or other conferencing system) allows you to hold a distributed meeting and record it for posterity, with everyone in the comfort of their own working space. Face-to-face is still the best approach for rapid question and answer, and for discussion, but everyone is so busy that you need to keep it to the shortest time possible.

Then you can use the reserved meeting time to actually do your work. If you have to have the meeting, start on time and finish on time. Doing this will drive the behaviours of good document dissemination and time management in the meeting.

I realised I had a problem when I discovered that 40% of my week was meetings because all I was doing was running from meeting to meeting. I cut my meetings back, started using documents, trimmed attendance lists, started using quick catch-ups instead of formal meetings more often and my life became much easier. Hope this is useful!

Getting Gephi running on OS X Yosemite #gephi @Elijah_Meeks

Posted: December 18, 2014 Filed under: Education | Tags: data visualisation, education, education research, Elijah Meeks, Gephi, network visualisation, os x, visualisation, yosemite Leave a comment

A really quick one. Gephi 0.8.2 (beta) is a great tool but it’s very picky about the Java version it uses. If you’re on OS X and went to Yosemite then it probably doesn’t work anymore.

This link gives you some very, very simple instructions for getting it working again. Thank you, Sumnous!

5 Things: Stuff I’ve Learned But Recently Had (Re)Confirmed

Posted: December 1, 2014 Filed under: Education, Opinion | Tags: advocacy, authenticity, blogging, community, data visualisation, design, education, ethics, James Watson, reflection, resources, student perspective, teaching, thinking, tools 1 Comment

One of the advantages of getting older is that you realise that wisdom is merely the accumulated memory of the mistakes you made that haven’t killed you yet. Our ability to communicate these lessons as knowledge to other people determines how well we can share that wisdom around but, in many cases, it won’t ring true until someone goes through similar experiences. Here are five things that I’ve recently thought about because I have had a few decades to learn about the area and then current events have brought them to the fore. You may disagree with these but, as you will read in point 4, I encourage you to write your own rather than simply disagree with me.

- Racism and sexism are scientifically unfounded and just plain dumb. We know better.

I see that James Watson is selling his Nobel prize medal because he’d like to make some donations – oh, and buy some art. Watson was, rightly, shunned for expressing his belief that African-American people were less intelligent because they were… African-American. To even start from the incredibly shaky ground of IQ measurement is one thing but to then throw a healthy dollop of anti-African sentiment on top is pretty stupid. Read the second article to see that he’s not apologetic about his statements, he just notes that “you’re not supposed to say that”. Well, given that it’s utter rubbish, no, you probably shouldn’t say it because it’s wrong, stupid and discriminatory. Our existing biases, cultural factors and lack of equal access to opportunity are facts that disproportionately affect African-Americans and women, to mention only two of the groups that get regularly hammered over this, but to think that this indicates some sort of characteristic of the victim is biassed privileged reasoning at its finest. Read more here. Read The Mismeasure of Man. Read recent studies that are peer-reviewed in journals by actual scientists.

In short, don’t buy his medal. Give donations directly to the institutions he talks about if you feel strongly. You probably don’t want to reward an unrepentant racist and sexist man with a Hockney.

- Being aware of your privilege doesn’t make it go away.

I am a well-educated white man from a background of affluent people with tertiary qualifications. I am also married to a woman. My wife and I work full-time and have long-term employment with good salaries and benefits, living in a safe country that still has a reasonable social contract. This means that I have the most enormous invisible backpack of privilege, resilience and resources to draw upon, which means that I am rarely in any form of long-term distress at all. Nobody is scared when I walk into a room unless I pick up a karaoke microphone. I will be the last person to be discriminated against. Knowing this does not make it ok if I then proceed to use my privilege in the world as if this is some sort of natural way of things. People are discriminated against every day. Assaulted. Raped. Tortured. Killed. Because they lack my skin colour, my gender, my perceived sexuality or my resources. They have obstacles in their path to achieving a fraction of my success that I can barely imagine. Given how much agency I have, I can’t be aware of my privilege without acting to grant as much opportunity and agency as I can to other people.

As it happens, I am lucky enough to have a job where I can work to improve access to education, to develop the potential of students, to support new and exciting developments that might lead to serious and positive social change in the future. It’s not enough for me to say “Oh, yes, I have some privilege but I know about it now. Right. Moving on.” I don’t feel guilty about my innate characteristics (because it wasn’t actually my choice that I was born with XY chromosomes) but I do feel guilty if I don’t recognise that my continued use of my privilege generally comes at the expense of other people. So, in my own way and within my own limitations, I try to address this to reduce the gap between the haves and the have-nots. I don’t always succeed. I know that there are people who are much better at it. But I do try because, now that I know, I have to try to act to change things.

- Real problems take decades to fix. Start now.

I’ve managed to make some solid change along the way but, in most cases, these changes have taken 2-3 years to achieve and some of them are going to be underway for generations. One of my students asked me how I would know if we’d made a solid change to education and I answered “Well, I hope to see things in place by the time I’m 50 (four years from now) and then it will take about 25 years to see how it has all worked. When I retire, at 75, I will have a fairly good idea.”

This is totally at odds with election cycles for almost every political sphere that work in 3-4 years, where 6-12 months is spent blaming the previous government, 24 months is spent doing something and the final year is spent getting elected again. Some issues are too big to be handled within the attention span of a politician. I would love to see things like public health and education become bipartisan issues with community representation as a rolling component of existing government. Keeping people healthy and educated should be statements everyone can agree on and, given how long it has taken me to achieve small change, I can’t see how we’re going to get real and meaningful improvement unless we start recognising that some things take longer than 2 years to achieve.

- Everyone’s a critic, fewer are creators. Everyone could be creating.