ASWEC 2014 – Now with Education Track

Posted: March 12, 2014 Filed under: Education | Tags: advocacy, ASWEC, blogging, community, curriculum, education, educational problem, educational research, higher education, learning, measurement, principles of design, resources, software engineering, teaching, teaching approaches, thinking

The Australasian Software Engineering Conference has been around for 23 years and, while there have been previous efforts to add more focus on education, this year we’re very pleased to have a full day on Education on Wednesday, the 9th of April. (Full disclosure: I’m the Chair of the program committee for the Education track, so this is self-advertising of a sort.) The speakers include a number of exciting software engineering education researchers and practitioners, including Dr Claudia Szabo, who recently won the SIGCSE Best Paper Award for a paper on software engineering and student projects.

Here’s the invitation from the conference chair, Professor Alan Fekete. Please pass this on as far as you can!

- Keynote by a leader of SE research, Prof Gail Murphy (UBC, Canada) on Getting to Flow in Software Development.

- Keynote by Alan Noble (Google) on Innovation at Google.

- Sessions on Testing, Software Ecosystems, Requirements, Architecture, Tools, etc, with speakers from around Australia and overseas, from universities and industry, that bring a wide range of perspectives on software development.

- An entire day (Wed April 9) focused on SE Education, including a keynote by Jean-Michel Lemieux (Atlassian) on Teaching Gap: Where’s the Product Gene?

You want thinkers. Let us produce them.

Posted: February 2, 2014 Filed under: Education, Opinion | Tags: advocacy, authenticity, community, design, education, educational problem, ethics, feedback, higher education, in the student's head, learning, measurement, reflection, student, student perspective, teaching, teaching approaches, universal principles of design, work/life balance

I was at a conference recently where the room (about 1000 people from across the business and educational world) were asked what they would like to say to everyone in the room, if they had a few minutes. I thought about this a lot because, at the time, I had half an idea but it wasn’t in a form that would work on that day. A few weeks later, in a group of 100 or so, I was asked a similar question and I managed to come up with something coherent. What follows here is a more extended version of what I said, with relevant context.

If I could say anything to the parents and future employers of my students, it would be to STOP LOOKING AT GRADES as some meaningful predictor of the future ability of the student. While measures of true competency are useful, the current fine-grained but mostly arbitrary measurements of students, with rabid competitiveness and the artificial divisions between grade bands, do not fulfil this purpose. When an employer demands a GPA of X, there is no guaranteed true measure of depth of understanding, quality of learning or anything real that you can use, except for conformity and an ability to colour inside the lines. Yes, there will be exceptional people with a GPA of X, but there will also be people whose true abilities languished as they focused their energies on achieving that false grail. The best person for your job may be the person who got slightly (or much) lower marks because they were out doing additional tasks that made them the best person.

Please. I waste a lot of my time giving marks when I could be giving far more useful feedback, in an environment where that feedback could be accepted and actual positive change could take place. Instead, if I hand back a 74 with comments, I’ll get arguments about the extra mark to get to 75 rather than discussions of the comments – but don’t blame the student for that attitude. We have created a world in which that kind of behaviour is both encouraged and sensible. It’s because people keep demanding As and Cs to somehow grade and separate people that we still use them. I couldn’t switch my degree over to “Competent/Not Yet Competent” tomorrow because, being frank, we’re not MIT or Stanford and people would assume that all of my students had just scraped by – because that’s how we’re all trained.

If you’re an employer, then I realise that this is very demanding but please, wherever you can, look at the person, and ask the industry bodies that feed back to education to focus on ensuring that we develop competent, thinking individuals who can practise in your profession, without forcing them to become grade-haggling bean counters who would cut a group member’s throat for an A.

If you’re a parent, then I would like to ask you to think about joining that group of parents who don’t ask what happened to that extra 1% when a student brings home a 74 or 84. I’m not going to tell you how to raise your children, it’s none of my business, but I can tell you, from my professional and personal perspective, that it probably won’t achieve what you want. Is your student enjoying the course, getting decent marks and showing a passion and understanding? That’s pretty good and, hopefully, if the educators, the parents and the employers all get it right, then that student can become a happy and fulfilled human being.

Do we want thinkers? Then we have to develop the learning environments in which we have the freedom and capability to let them think. But this means that the nonsense that there is any real difference between a mark of 84 and a mark of 85 has to stop, and we need to think about how we develop and recognise true measures of competence and suitability that go beyond a GPA, a percentage or a single letter grade.

You cannot contain the whole of a person in a single number. You shouldn’t write the future of a student on such a flimsy structure.

“Hi, my name is Nick and I specialise in failure.”

Posted: June 10, 2013 Filed under: Education, Opinion | Tags: advocacy, collaboration, community, curriculum, design, education, educational research, ethics, failure, Generation Why, higher education, in the student’s head, learning, measurement, reflection, resources, student perspective, survivorship, teaching, teaching approaches, thinking, tools

I recently read an article on survivorship bias on the “You Are Not So Smart” website, via Metafilter. While the whole story addressed the World War II Statistical Research Group, it focused on the insight contributed by Abraham Wald, a statistician. Allied bomber losses in World War II were large, very large, and any chance of reducing them was incredibly valuable. The question was “How could the US improve their chances of bringing their bombers back intact?” Bombers landing back after missions were full of holes, but armour just can’t be strapped willy-nilly on to a plane without it becoming land-locked. (There’s a reason that birds are so light!) The answer, initially, was obvious: survey the fleet, find the places with the most holes, and armour them. Put armour on the colander sections and, voila, increased survival rate.

No, said Wald. That wouldn’t help.

Wald’s logic is both simple and convincing. If a plane was coming back with those holes in place, then the holes in the skin were not leading to catastrophic failure – they couldn’t have been if the planes were returning! The survivors were not showing the damage that would have led to them becoming lost aircraft. Wald used the already collected information on the damage patterns to work out how much damage each component could take and the likelihood of that damage occurring during a bombing run, based on the kind of forces the plane encountered.
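A toy simulation makes Wald’s reasoning concrete. This is a minimal sketch under loudly stated assumptions, not Wald’s actual analysis: the four zones, the rule that any engine hit always downs the plane, and the function name `fly_missions` are all hypothetical, chosen only to show how surveying the survivors hides the fatal damage.

```python
import random

# Hypothetical bomber zones. The rule that an engine hit is always fatal
# is an illustrative assumption, not Wald's data.
ZONES = ["fuselage", "wings", "tail", "engine"]
FATAL_ZONES = {"engine"}


def fly_missions(n_planes, hits_per_plane, seed=0):
    """Tally hits across the whole fleet and on the planes that return."""
    rng = random.Random(seed)  # seeded so the run is reproducible
    all_hits = {zone: 0 for zone in ZONES}
    returned_hits = {zone: 0 for zone in ZONES}
    survivors = 0
    for _ in range(n_planes):
        # Each hit lands in a zone chosen uniformly at random.
        hits = [rng.choice(ZONES) for _ in range(hits_per_plane)]
        for zone in hits:
            all_hits[zone] += 1
        # A plane only comes home if none of its hits were fatal,
        # so only its holes are visible to the post-mission survey.
        if not FATAL_ZONES.intersection(hits):
            survivors += 1
            for zone in hits:
                returned_hits[zone] += 1
    return survivors, all_hits, returned_hits


if __name__ == "__main__":
    survivors, all_hits, returned_hits = fly_missions(1000, 3)
    print(f"{survivors} of 1000 planes returned")
    for zone in ZONES:
        print(f"{zone:>8}: {all_hits[zone]:4d} hits in total, "
              f"{returned_hits[zone]:4d} seen on returning planes")
```

Counting holes only on the returning planes makes the engine look like the safest part of the aircraft, because every plane hit there never made it back to be surveyed.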

It’s worth reading the entire article because it’s a simple and powerful idea: attributing magical properties to the steps taken by people who have become ultra-successful is not going to be as useful as looking at what took people off the pathway to success. If you’ve read Steve Jobs’ biography then you’re aware that he had a number of interesting traits, only some of which may have led to him becoming as successful as he did. Of course, if you’ve been reading widely, you’ll also be aware of the importance of Paul Jobs, Steve Wozniak, Robert Noyce, Bill Gates, Jony Ive, John Lasseter and, of course, his wife, Laurene Powell Jobs. So the “only eating fruit” thing, the “reality distortion field” thing and the “not showering” thing (some of which he changed, some he didn’t): which of these are the important things? Jobs, like many successful people, failed at some of his endeavours, but never in a way that completely wiped him out. Obviously. When he’s not succeeding, he’s interesting, because we can look at the steps that took him down and say “Oh, don’t do that”, assuming that it’s something that can be changed or avoided. When he’s succeeding, so many other factors get in the way (what has happened to you so far, who your friends are, and how many resources you get to play with) that it’s hard to give good advice on what to do.

I have been studying failure for some time: first in myself, and now in my students. I look for the decisions, or behaviours, that lead to students struggling in their academic achievement or, in some cases, falling away completely. Students who come to me with a high level of cultural, financial and social resources are far less likely to struggle because, even when faced with a set-back, they rarely hit the point where they can’t bounce back (although, sadly, it does happen, just far less often). When they do fall over, it is for the same reasons as my less-advantaged students, who simply fall over in far greater numbers because they have less resilience to set-backs. By studying failure, the lessons learned and the things to be avoided, I can help all of my students, regardless of their starting level. If I were studying the top 5% of students, especially those who had never received a mark less than A+, I would be surprised if I could learn much that I could take and usefully apply to those in the C- bracket. The reverse, however? There’s gold to be mined there.

By studying the borderlines and by looking for patterns in the swirling dust left by those departing, I hope that I can find things which reduce failure everywhere – because every time someone fails, we run the risk of not getting them back simply because failure is disheartening. Better yet, I hope to get something that is immediately usable, defensible and successful. Probably rather a big ask for a protracted study of failure!

Why You Won’t Finish This Post

Posted: June 10, 2013 Filed under: Education, Opinion | Tags: advocacy, authenticity, blogging, community, design, education, educational problem, Generation Why, higher education, in the student’s head, learning, measurement, principles of design, reflection, resources, student perspective, teaching, teaching approaches, universal principles of design

A friend of mine on Facebook posted a link to a Slate article entitled “You Won’t Finish This Article: Why people online don’t read to the end” and it’s told me everything that I’ve been doing wrong with this blog for about the last 410 hours. Now, this doesn’t even take into account that, by linking to something potentially more interesting on a well-known site, I’ve now buried the bottom of this blog post altogether because a number of you will follow the link and, despite me asking it to appear in a new window, you will never come back to this article. (This has quite obvious implications for the teaching materials we put up, so it’s well worth a look.)

Now, on the off-chance that you did come back (hi!), we have to assume that you didn’t read all of the linked article (if you read any of it at all) because 28% of you ‘bounced’ immediately and didn’t read much of that page at all – you certainly didn’t scroll. Almost none of you read to the bottom. What is amusing, however, is that a number of you will have either Liked or forwarded a link to one or both of these pages without ever having stepped through or scrolled once, simply because the concept at the start looks cool. According to the Slate analysis, I’ve lost over half my readers by now. Of course, this does assume the Slate layout, where an image breaks things up and forces people to scroll through. So here’s an image that will discourage almost everyone from continuing. However, it is a pretty picture:

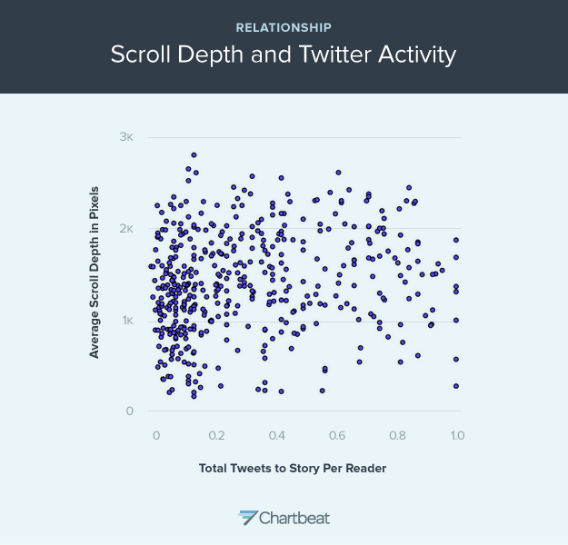

This graph shows the relationship between scroll depth and tweets (from Slate, courtesy of Chartbeat).

What it says is that there is not an enormously strong correlation between depth of reading and frequency of tweet. So, the amount that a story is read doesn’t really tell you how much people will want to (or actually) share it. Overall, the Slate article makes it fairly clear that unless I manage to make my point in the first paragraph, I have little chance of being read any further – but if I make that first paragraph (or first images) appealing enough, any number of people will like and share it.

Of course, if people read down this far (thanks!) then they will know that I secretly start advocating the most horrible things known to humanity so, when someone finally follows their link and miraculously reads down this far, survives the Slate link out, and doesn’t end up mired in the picture swamp above, they will discover…

Oh, who am I kidding. I’ll just come back and fill this in later.

(Having stolen a time machine, I can now point out that this is yet another illustration of why we need to be thoughtful about what our students are going to do in response to on-line and hyperlinked materials rather than what we would like them to do. Any system that requires a better human, or a human to act in a way that goes against all the evidence we have of their behaviour, requires modification.)

Time to Work and Time to Play

Posted: May 19, 2013 Filed under: Education, Opinion | Tags: advocacy, education, educational problem, educational research, feedback, Generation Why, higher education, in the student’s head, learning, measurement, principles of design, reflection, resources, student perspective, teaching, teaching approaches, thinking, tools, work/life balance, workload

I do a lot of grounded theory research into student behaviour patterns. It’s a bit Indiana Jones in a rather dry way: hear a rumour of a giant cache of data, hack your way through impenetrable obfuscation and poor data alignment to find the jewel at the centre, hack your way out and try to get it to the community before you get killed by snakes, thrown into a propeller or eaten. (Perhaps the analogy isn’t perfect but how recently have you been through a research quality exercise?) Our students are all pretty similar, from the metrics I have, and I’ve gone on at length about this in other posts: hyperbolic time-discounting and so on. Embarrassingly recently, however, I was introduced to the notion of instrumentality, the capability to see that achieving a task now will reduce the difficulty in completing a goal later. If we can’t see how important this is to getting students to do something, maybe it’s time to have a good sit-down and a think! Husman et al. identify three associated but distinguishable aspects of a student’s appreciation of a task: how much they rate its value, their intrinsic level of motivation, and their appreciation of the instrumentality. From this study, we have a basis for the confusing and often paradoxical presentation of a student who is intelligent and highly motivated – but just not for the task we’ve given them, despite apparently and genuinely being aware of the value of the task.
Without the ability to link this task to future goal success, the exponential approach of the deadline horizon can cause a student to artificially inflate the value of something of less final worth, because the actually important goal is out of sight. But rob a student of motivation and we have to make everything high-stakes and fix it firmly in the present or the almost immediate future, often resorting to extrinsic motivating factors (bribes/threats) to impose value. This may be why everyone who uses a punishment/reward system achieves compliance but then has to keep using this mechanism to keep values artificially high. Have we stumbled across an Economy of Pedagogy? I hope not, because I can barely understand basic economics. But we can start to illustrate why the student has to be intrinsically connected to the task and the goal framework – without it, it’s carrot/stick time and, once we do that, it’s always carrot/stick time.

Like almost every teacher I know, I find that all of my students are experts at something, but mining that expertise can be tricky. What quickly becomes apparent, as McGonigal reflects in “Reality Is Broken”, is that people will put far more effort into an activity that they see as play than one which they see as work. I, for example, have taken up linocut printing and, for no good reason at all, have invested days into a painstaking activity where it can take four hours to achieve even a simple outcome of reasonable quality – and it will be years before I’m good at it. Yet the time I spend at the printing studio on Saturdays is joyful, recharging and, above all, playful. If I consumed 6 hours marking assignments, writing a single number out of 10 and restricting my comments to good/bad/try harder, then I would feel spent and I would dread starting, putting it off as long as possible. Making prints, I consumed about 6 hours of effort to scan, photoshop, trim, print, reverse, apply over carbon paper, trace, cut out of lino and then manually press-print about four pieces of paper – and I felt like a new man. No real surprises here. In both cases, I am highly motivated. One task has great value to my students and me because it provides useful feedback. The artistic task has value to me because I am exploring new forms of art and artistic thinking, which I find rewarding.

But what of the instrumentality? In the case of the marking, it has to be done so that students get the feedback at a time when they can use it and, given we have a follow-up activity of the same type for more marks, they need to get it sooner rather than later. If I leave it all until the end of the semester, it makes my students’ lives harder and mine, too, because I can’t do everything at once and every single ‘when is it coming?’ query consumes more time. In the case of the art, I have no deadline but I do have a goal: a triptych to put on the wall in August. Every print I make makes this final production easier. The production of the lino master? Intricate, close work using sharp objects, and it can take hours to get a good result. It should be dull and repetitive but it’s not. Ask me to cut out 10 of the same thing, or very, very similar things, however, and I think it would become dull very quickly. So, even something that I really enjoy becomes mundane when we mess with the task enough or get to the point, in this case, where we start to say “Well, why can’t a machine do this?” Rephrasing this, we get the instrumentality focus back again: “What do I gain in the future from doing this ten times if I will only do this ten times once?” And this is a valid question for our students, too. Why should they write “Hello, World”? It has most definitely and definitively been written. It’s passed on. It is novel no more. Bereft of novelty, it rests on its laurels. We force students to write it, yet nothing about this particular phrase, which we ‘owe’ to Brian Kernighan, introduces anyone to anything that could not be introduced, with a modicum of added creativity, by saying in the manual “Please type a sentence into this point in the program and it will display it back to you.” It is an ex-program.

I love lecturing. I love giving tutorials. I will happily provide feedback in pracs. Why don’t I like marking? It’s easy to say “Well, it’s dull and repetitive” but, if I wouldn’t ask a student to undertake a task like that, why am I doing it? Look, I’m not claiming that all marking is like this but, certainly, the manual marking of particular aspects of software does tend to be dull.

Unless, of course, you start enjoying it, and we can do that if we have enough freedom and flexibility to explore playful aspects. When I marked a big group of student assignments recently, I tried to write something new for each student and, while this doesn’t always succeed for small artefacts with limited variability, I did manage to compliment a student on their Spanish variable names, provide personalised feedback to some students who had excelled and, generally, turn a 10-mark program into a place where I thought about each student personally and then (more often than not) said something unique. Yes, sometimes the same errors cropped up and copy/paste is handy – but by engaging with the task and thinking about how much my future interactions with the students would be helped by a little investment now, I found that, while the task was still a slog, I came out of it quite pleased with the overall achievement. The task became more enjoyable because I had more flexibility, but I was also required to be there as part of the process: I was necessary. It became possible to be (professionally and carefully) playful – which is often how I approach teaching.

Any of you who are required to use standardised tests with manual marking already know how desperately dull the grading is: a grinding, rubric-bound, tick/flick scenario that does nothing except consume work. It’s valuable because it’s required and money is money. Motivating? No. Any instrumentality? No, unless giving the test raises the students to the point where you get improved circumstances (personal/school) or you reduce the amount of testing required for some reason. It is, sadly, just as dull for your students to sit these tests in this scenario, because they will know how they are marked, and the tests are not going to trigger any of Husman’s three distinguishable but associated variables.

I am never saying that everything has to be fun or easy, because I doubt many areas would be able to convey enough knowledge under these strictures, but providing tasks that have room to encourage motivation, develop a personal sense of task value, and allow students to play, potentially bringing in some of their own natural enthusiasm for other areas or channelling it here, solves two-thirds of the problem in getting students involved. Intentionally grounding learning in play and carefully designing materials to make this work can make things better. It also makes it easier for staff. Right now, as we handle the assignment work of the course I’m currently teaching, other discussions on the student forums include the History of Computing, Hofstede’s Cultural Dimensions, the significance of certain questions in the practical, and complexity theory, and we have only just stopped the spontaneous student comparison of performance at a simple genetic algorithms practical. My students are exploring; they are playing in the space of the discipline and, by doing so, are moving more deeply into a knowledge of taxonomy and lexicon within this space. I am moving from Lion Tamer to Ringmaster, which is the logical step to take, as what I want is citizens who participate because they can see value, have some level of motivation and are forming their instrumentality. If learning and exploration are fun now, then going further may lead to fun later – the future fun goal is enhanced by achieving tasks now. I’m not sure if this is necessarily the correct first demonstration of instrumentality, but it is a useful one!

However, this requires time. The staff member needs time to construct and moderate such an environment, especially if you’re encouraging playful exploration of areas on public discussion forums, and the students must have enough time to think about things, make plans and then try again if they don’t pick it all up on the first go. We know that creativity can be impaired when strict, tight deadlines are enforced the wrong way, and such deadlines reduce the time available for exploration and play, for students and staff alike.

Playing is serious business and our lives are better when we do more of it – the first enabling act of good play is scheduling that first play date and seeing how it goes. I’ve certainly found it to be helpful, to me and to my students.

SIGCSE 2013: The Revolution Will Be Televised, Perspectives on MOOC Education

Posted: March 17, 2013 Filed under: Education, Opinion | Tags: advocacy, community, education, educational research, ethics, Generation Why, higher education, learning, measurement, moocs, sigcse, teaching, teaching approaches, tools

Long time between posts, I realise, but I got really, really unwell in Colorado and am still recovering from it. I attended a lot of interesting sessions at SIGCSE 2013, and hopefully gave at least one of them, but the first I wanted to comment on was a panel with Mehran Sahami, Nick Parlante, Fred Martin and Mark Guzdial, entitled “The Revolution Will Be Televised, Perspectives on MOOC Education”. This is, obviously, a very open area for debate and the panelists provided a range of views and a lot of information.

Mehran started by reminding the audience that we’ve had on-line and correspondence courses for some time, with MIT’s OpenCourseWare (OCW) streaming video from the 1990s and Stanford Engineering Everywhere (SEE) starting in 2008. The SEE lectures were interesting because viewership follows a power law relationship: the final lecture has only 5-10% of the views of the first lecture. These video lectures were being used well beyond Stanford, augmenting AP courses in the US and providing entire lecture series in other countries. The videos also increased engagement, and the requests that came in weren’t just about the course but were more general – having a face and a name on the screen gave people someone to interact with. From Mehran’s perspective, the challenges were certification and credit, increasing the richness of automated evaluation, validated peer evaluation, and personalisation (or, as he put it, in reality, mass customisation).

Nick Parlante spoke next, as an unashamed optimist for MOOCs, who holds the opinion that all the best world-changing inventions are cheap, like the printing press, Arabic numerals and high quality digital music. These great ideas spread and change the world. However, he did state that he considered artisanal and MOOC education to be very different: artisanal education is bespoke, high quality and high cost, whereas MOOCs are interesting for their massive scale and, while they could never replace artisanal education, they could provide education to those who could not get access to it.

It was at this point that I started to twitch, because I have heard and seen this argument before – the notion that a MOOC is better than nothing if you can’t get artisanal. The subtext that I, fairly or not, hear at this point is the implicit statement that we will never be able to give high quality education to everybody. By having a MOOC, we no longer have to say “you will not be educated”; we can say “you will receive some form of education”. What I rarely hear at this point is a well-structured and quantified argument on exactly how much quality slippage we’re tolerating here – how educational is the alternative education?

Nick also raised the well-known problems of cheating (which is already rampant in MOOCs, even before large-scale fee paying has been introduced) and credentialling. His section of the talk was long on optimism and positivity but rather light on statistics, completion rates and the kind of evidence that we’re all waiting to see. Nick was quite optimistic about our future employment prospects, but I suspect he was speaking on behalf of those of us in “high-end” old-school schools.

I had a lot of issues with what Nick said, but a fair bit of it stemmed from his examples: the printing press and digital music. The printing press is an amazing piece of technology for replicating a written text and, as replication and distribution go, there’s no doubt that it changed the world – but does it guarantee quality? No. The top 10 books sold in 2012 were either Twilight-derived sadomasochism (Fifty Shades of Unnecessary) or related to The Hunger Games. The most work the printing presses were doing in 2012 was not for Thoreau, Atwood, Byatt, Dickens, Borges or even Cormac McCarthy. No, the amazing distribution mechanism was turning out copy after copy of what could, generously, be called popular fiction. But even that’s not my point. Even if the printing presses turned out only “the great writers”, it would be no guarantee of an increase in the ability of the reading populace to write quality works, because reading and writing are different things. You don’t have to read much into constructivism to realise how much difference it makes when someone puts things together for themselves, actively, rather than passively sitting through a non-interactive presentation. Some of us can learn purely from books but, obviously, not all of us and, more importantly, most of us don’t find it trivial. So, not only does the printing press not guarantee that everything that gets printed is good; even where something good does get printed, it does not intrinsically demonstrate how you can take the goodness and then apply it to your own works. (Why else would there be books on how to write?) If we could do that, reliably and spontaneously, then a library of great writers would be all you needed to replace every English writing course and editor in the world. A similar argument exists for the digital reproduction of music. Yes, it’s cheap and, yes, it’s easy.
However, listening to music does not teach you how to write music or perform on a given instrument, unless you happen to be one of the few people who can pick up music and instrumentation with little guidance. There are so few of the latter that we call them prodigies – it’s not a stable model for even the majority of our gifted students, let alone the main body.

Fred Martin spoke next and reminded us all that weaker learners just don’t do well in the less-scaffolded MOOC environment. He had used a MOOC in a flipped classroom, with small class sizes, supervision and lots of individual discussion. As part of this blended experience, it worked. Fred really wanted some honest figures on who was starting and completing MOOCs and was really keen that, if we were to do this, we strive for the same quality, rather than accepting that MOOCs weren’t as good and that it was ok to offer this second-tier solution to certain groups.

Mark Guzdial then rounded out the panel and stressed the role of MOOCs as part of a diverse set of resources, but noted that, if we were going to use them that way, then we had to measure and report on how things had gone. MOOC results, right now, are interesting but fundamentally anecdotal and unverified. Therefore, it is too soon to jump into MOOCs because we don’t yet know if they will work. Mark also noted that MOOCs are not supporting diversity yet and, from any number of sources, we know that many-to-one (the MOOC model) is just not as good as 1-to-1. We’re really not clear if and how MOOCs are working, given how many of the people who do complete them are already degree holders and, even then, actual participation in on-line discussion is so low that these experienced learners aren’t even talking to each other very much.

It was an interesting discussion and conducted with a great deal of mutual respect and humour, but I couldn’t agree more with Fred and Mark – we haven’t measured things enough and, despite Nick’s optimism, there are too many unanswered questions to leap in, especially if we’re going to make hard-to-reverse changes to staffing and infrastructure. It takes 20 years to train a Professor and, if you have one that can teach, they can be expensive and hard to maintain (with tongue firmly lodged in cheek, here). Getting rid of one because we have a promising new technology that is untested may save us money in the short term but, if we haven’t validated the educational value or confirmed that we have set up the right level of quality, a few years from now we might discover that we got rid of the wrong people at the wrong time. What happens then? I can turn off a MOOC with a few keystrokes but I can’t bring back all of my seasoned teachers in less than years, if not decades.

I’m with Mark – the resource promise of MOOCs is enormous and they are part of our future. Are they actually full educational resources or courses yet? Will they be able to bring education to people that is a first-tier, high quality experience or are we trapped in the same old educational class divisions with a new name for an old separation? I think it’s too soon to tell but I’m watching all of the new studies with a great deal of interest. I, too, am an optimist but let’s call me a cautious one!

Grace.

Posted: January 29, 2013 Filed under: Education | Tags: advocacy, authenticity, blogging, community, education, educational problem, higher education, in the student's head, measurement, principles of design, reflection, student perspective, teaching, teaching approaches, thinking, work/life balance

A friend sent me a link to this excellent piece on the importance of grace, both in how you appreciate yourself and in your role as a teacher. Thank you, A! Here is the link:

The Lesson of Grace in Teaching

“…to hear from my own professor, whom I really love and admire, at a time when I felt ashamed of my intelligence and thus unworthy of his friendship, that I wasn’t just a student in a seat, not just a letter grade or a number on my transcript, but a valuable person who he wants to know on a personal level, was perhaps the most incredible moment of my college career.”

Thanks for the exam – now I can’t help you.

Posted: December 31, 2012 Filed under: Education | Tags: advocacy, authenticity, blogging, community, curriculum, design, education, educational problem, ethics, feedback, Generation Why, grand challenge, higher education, in the student's head, learning, measurement, principles of design, reflection, resources, student perspective, teaching, teaching approaches, thinking, time banking, tools, universal principles of design, vygotsky, workload

I have just finished marking a pile of examinations from a course that I co-taught recently. I haven’t finalised the marks but, overall, I’m not unhappy with the majority of the results. Interestingly, and not overly surprisingly, one of the best-answered sections of the exam was based on a challenging essay question I had set as an assignment. The question spans many aspects of the course and requires students to think about their answer and link their knowledge together, which most did very well. As I said, not a surprise, but a good reinforcement that you don’t have to drill students in what to say in the exam, and that covering the requisite knowledge and practising the right skills is often helpful.

However, I don’t much like marking exams. It doesn’t come down to the time involved, the generally dull nature of the task or the repetitive strain injury from wielding a red pen in anger; it comes down to the fact that, most of the time, I am marking the student’s work at a time when I can no longer help him or her. Like most exams at my Uni, this was the terminal examination for the course, worth a substantial amount of the final marks, and was taken some weeks after teaching finished. What this means is that any problem areas I identify for a given student cannot now be corrected, unless the student chooses to read my notes on the exam paper or come to see me. (Given that this campus is international, that’s trickier but not impossible thanks to the Wonders of Skypenology.) It took me a long time to work out exactly why I didn’t like marking but, when I did, the answer was obvious.

I was frustrated that I couldn’t actually do my job at one of the most important points: when lack of comprehension is clearly identified. If I ask someone a question in the classroom, on-line or wherever, and they give me an answer that’s not quite right, or right off base, then we can talk about it and I can correct the misunderstanding. My job, after all, is not actually passing or failing students – it’s about knowledge, the conveyance, construction and quality management thereof. My frustration during exam marking increases with every incomplete or incorrect answer I read, which illustrates that there is a section of the course that someone didn’t get. I get up in the morning with the clear intention of being helpful towards students and, when it really matters, all I can do is mark up bits of paper in red ink.

Quickly, Jones! Construct a valid knowledge framework! You’re in a group environment! Vygotsky, man, Vygotsky!

A student who, despite my sweeping, and seeping, liquid red ink of doom, manages to get a passing grade of 50 will not do the course again – yet this mark pretty clearly indicates that roughly half of the comprehension or participation required was not carried out to the required standard. Miraculously, it doesn’t matter which half of the course the student ‘gets’; they are still deemed to have attained the knowledge. (An interesting point to ponder, especially when you consider that my colleagues in Medicine define a Pass at a much higher level and in far more complicated ways than a numerical 50%, to my eternal peace of mind when I visit a doctor!) Yet their exam will still probably have caused me at least some gnashing of teeth because of points missed, pointless restatement of the question text, obscure song lyrics, apologies for lack of preparation and the occasional actual fact that has peregrinated from the place where it could have attained marks to a place where it will be left out in the desert to die, bereft of the life-giving context that would save it from such an awful fate.

Should we move the exams earlier and then use the results to choose the focus areas for further assessment, so that we can target the greatest improvement and develop knowledge in the areas of most need? Should we abandon exams entirely and move to a continuous-assessment, competency-based system, where there are skills and knowledge that must be demonstrated correctly and are practised until this is achieved? We are suffering, as so many people have observed before, from overloading a system that ultimately seeks to identify whether students have reached the knowledge levels needed to meet the course objectives with the additional requirement to grade and classify them into neatly discretised performance boxes. Should we separate competency and performance completely? I have sketchy ideas as to how this might work but none that survive under the blow-torches of GPA requirements and resource constraints.

Obviously, continuous assessment (practicals, reports, quizzes and so on) throughout the semester provides a very valuable way to identify problems, but this requires good, and thorough, course design and an awareness that this is your intent. Are we premature in treating the exam as a closing-off line on the course? Should we treat it the same way that we treat any assignment: you get feedback, a mark and then more work to follow up? If we threw resourcing to the wind, could we have a 1-2 week intensive pre-semester program that specifically addressed those issues that students failed to grasp on their first pass? Congratulations, you got 80%, but that means that there’s 20% of the course that we need to clarify? (Those who got 100% I’ll pay to come back and tutor, because I like to keep cohorts together and I doubt I’ll need to do that very often.)

There are no easy answers here and shooting down these suggestions is very much in the fish/barrel plane, I realise, but it is a very deeply felt form of frustration that I am seeing the most work that any student is likely to put in, yet I cannot now fix the problems that I see. All I can do is mark it in red ink with an annotation that the vast majority will never see (unless they receive a grade of 44, 49, 64, 74 or 84, each of which sits one mark below a grade threshold for us).

Ah well, I hope to have more time in 2013 so maybe I can mull on this some more and come up with something that is better but still workable.

Thinking about teaching spaces: if you’re a lecturer, shouldn’t you be lecturing?

Posted: December 30, 2012 Filed under: Education | Tags: blogging, collaboration, community, curriculum, design, education, educational problem, feedback, Generation Why, higher education, in the student's head, learning, measurement, principles of design, reflection, resources, student perspective, teaching, teaching approaches, thinking, tools, universal principles of design, vygotsky

I was reading a comment on a philosophical post the other day and someone wrote this rather snarky line:

He is a philosopher in the same way that (celebrity historian) is a historian – he’s somehow got the job description and uses it to repeat the prejudices of his paymasters, flattering them into thinking that what they believe isn’t, somehow, ludicrous. (Grangousier, Metafilter article 123174)

Rather harsh words in many respects, and the anonymising of the (celebrity historian)’s name is my alteration, not the commenter’s, as I feel that his comments are mildly unfair. However, the point is interesting as a reflection upon the importance of job titles in our society, especially when it comes to the weighted authority of your words. From January the 1st, I will be a senior lecturer at an Australian University and that is perceived differently depending on where I am. If I am in the US, I translate this title into their system, namely as a tenured Associate Professor, because that’s the equivalent of what I am – the term ‘lecturer’ doesn’t translate cleanly without causing problems, even before we deal with the fact that more lecturers in Australia have PhDs, whereas many lecturers in the US do not. But this post isn’t about how people necessarily see our job descriptions; it’s very much about how we use them.

In many respects, the title ‘lecturer’ is rather confusing because it appears, like builder, nurse or pilot, to contain the verb of one’s practice. One of the big changes in education has been the steady acceptance of constructivism, where learners have an active role in the construction of knowledge and we are, in many ways, facilitating learning to a greater extent than we are teaching. This does not mean that teachers shouldn’t teach, because ‘teaching’ is a far more generic term than the binding of lecturers to lecturing, but it does challenge the mental image that pops up when we think about teaching.

If I asked you to visualise a classroom situation, what would you think of? What facilities are there? Where are the students? Where is the teacher? What resources are around the room, on the desks, on the walls? How big is it?

Take a minute to do just this and make some brief notes as to what was in there. Then come back here.

It’s okay, I’ll still be here!

Vitamin Ed: Can It Be Extracted?

Posted: December 22, 2012 Filed under: Education | Tags: advocacy, blogging, community, curriculum, design, education, educational problem, educational research, ethics, Generation Why, higher education, in the student's head, learning, measurement, principles of design, reflection, resources, student perspective, teaching, teaching approaches, thinking, tools, vygotsky, workload

There are a couple of ways to enjoy a healthy, balanced diet. The first is to actually eat a healthy, balanced diet made up from fresh produce across a range of sources, which requires you to prepare and cook foods, often changing how you eat depending on the season to maximise the benefit. The second is to eat whatever you dang well like and then use an array of supplements, vitamins, treatments and snake oil to try and beat your diet of monster burgers and gorilla dogs into something that will not kill you in 20 years. If you’ve ever bothered to look on the side of those supplements, vitamins, minerals or whatever that most people have in their ‘medicine’ cabinets, you might see statements like “does not substitute for a balanced diet” or similar disclaimers. There is, of course, a reason for that. While we can be fairly certain about a range of deficiency disorders in humans, and we can prevent these problems with selective replacement, many other conditions are not as clear cut – if you eat a range of produce which contains the things that we know we need, you’re probably also getting a slew of things that we need but that don’t make themselves as prominent.

In terms of our diet, while the debate rages about precisely which diet humans should be eating, we can have a fairly good stab at a sound basis, from a dietician’s perspective, built out of actual food. Recreating that from raw sugars, protein, vitamin and mineral supplements is technically possible but (a) much harder to manage and (b) nowhere near as satisfying as eating the real food, in most cases. Let’s not forget that very few of us in the western world are so distant from our food that we regard it purely as fuel, with no regard for its presentation, flavour or appeal. In fact, most of us could muster a grimace at the thought of someone telling us to eat something because it was good for us or for some real or imagined medical benefit. In terms of human nutrition, we have the known components that we have to eat (sugars, proteins, fats…) and we can identify specific vitamins and minerals that we need to balance to enjoy good health, yet there is no shortage of additional supplements that we also take out of concern for our health, many of which have little or no demonstrated benefit, and still we take them.

There’s been a lot of work done in trying to establish an evidence base for medical supplements and far more of the supplements fail than pass this test. Willow bark, an old remedy for pain relief, has been found to have a reliable effect because it has a chemical basis for working – evidence demonstrated that and now we have aspirin. Homeopathic memory water? There’s no reliable evidence for this working. Does this mean it won’t work? Well, here we get into the placebo effect and this is where things get really complicated because we now have the notion that we have a set of replacements that will work for our diet or health because they contain useful chemicals, and a set of solutions that work because we believe in them.

When we look at education where it’s successful, we see a lot of techniques being mixed together in a ‘natural’ diet of knowledge construction and learning. Face-to-face teaching and teamwork sit side-by-side with formative and summative assessment, as part of discussions or ongoing dialogues, whether physical or on-line. Exactly which parts of these constitute the “balanced” educational diet? We already know that a lecture, by itself, is not a complete educational experience, in the same way that a stand-alone multiple-choice test will not make you a scholar. There is a great deal of work being done to establish an evidence base for exactly which bits work but, as MIT said in the OCW release, these components do not make up a course. In dietary terms, it might be raw fuel but is it a desirable meal? Not yet, most likely.

Now let’s get into the placebo side of the equation, where students may react positively to something just because it’s a change, not because it’s necessarily a good change. We can control for these effects, if we’re cautious, and we can do it with the full knowledge of the students, but I’m very wary of any dependency upon the placebo effect, especially when it’s prefaced with “and the students loved it”. Sorry, students, but I don’t only (or even predominantly) care whether you loved it; I care whether you performed significantly better, attended more, engaged more, retained the information for longer or could achieve more, and all of these things can only be measured when we take the trouble to establish baselines, construct experiments, measure things, analyse with care and then think about the outcomes.

My major concern about the whole MOOC discussion is not whether MOOCs are good or bad, it’s more to do with:

- What does everyone mean when they say MOOC? (Because there’s variation in what people identify as the components)

- Are we building a balanced diet or are we constructing a sustenance program with carefully balanced supplements that might miss something we don’t yet value?

- Have we extracted the essential Vitamin Ed from the ‘real’ experience?

- Can we synthesise Vitamin Ed outside of the ‘real’ educational experience?

I’ve been searching for a terminological distinction that allows me to separate ‘real’/’conventional’ learning experiences from ‘virtual’/’new generation’/’MOOC’ experiences, and none of those distinctions are satisfying – one says “Restaurant meal” and the other says “Army ration pack” to me, emphasising the separation. Worse, my fear is that a lot of people don’t regard MOOCs as ever really having Vitamin Ed inside, as the MIT President clearly believed back in 2001.

I suspect that my search for Vitamin Ed starts from a flawed basis because, if we take the term literally, it assumes a single silver bullet, so let me spread the concept out a bit and label Vitamin Ed as the essential educational components that define a good learning and teaching experience. Calling it Vitamin Ed gives me a flag to wave and an analogue to use, to explain why we should be seeking a balanced diet for all of our students, rather than a banquet for one and dog food for the other.