Education is not defined by a building

Posted: January 24, 2016 Filed under: Education, Opinion | Tags: advocacy, aesthetics, art, authenticity, beauty, design, dewey, education, educational problem, educational research, ethics, good, higher education, in the student's head, learning, reflection, resources, teaching, teaching approaches, thinking, truth

I drew up a picture to show how many people appear to think about art. Now this is not to say that this is my thinking on art, but you only have to go to galleries for a while to quickly pick up the sotto voce (oh, and loud) discussions about what constitutes art. Once we move beyond representative art (art that looks like real things), it can become harder for people to identify what they consider to be art.

This is crude and bordering on satirical. Read the text before you have a go at me over privilege and cultural capital.

I drew up this diagram in response to reading early passages from Dewey’s “Art as Experience”:

“An instructive history of modern art could be written in terms of the formation of the distinctively modern institutions of museum and exhibition gallery. (p8)

[…]

The growth of capitalism has been a powerful influence in the development of the museum as the proper home for works of art, and in the promotion of the idea that they are apart from the common life. (p8)

[…]

Why is there repulsion when the high achievements of fine art are brought into connection with common life, the life that we share with all living creatures?” (p20)

Dewey’s thinking is that we have moved from a time when art was deeply integrated into everyday life to a point where we have corralled “worthy” art into buildings called art galleries and museums, generally in response to nationalistic or capitalistic drivers, in order to construct an artefact that indicates how cultured and awesome we are. But, by doing this, we force a definition that something is art if it’s the kind of thing you’d see in an art gallery. We take art out of life, making valuable relics of old oil jars and assigning insane values to collections of oil on canvas that please the eye, and by doing so we demand that ‘high art’ cannot be part of most people’s lives.

But the gallery container is not enough to define art. We know that many people resist modernism (and post-modernism) almost reflexively, whether it’s abstract, neo-primitivist, pop, or simply that the viewer doesn’t feel convinced that they are seeing art. Thus, in the diagram above, real art is found in galleries but there are many things found in galleries that are not art. To steal an often overheard quote: “my kids could do that”. (I’m very interested in the work of both Rothko and Malevich so I hear this a lot.)

But let’s resist the urge to condemn people because, after we’ve wrapped art up in a bow and placed it on a pedestal, their natural interpretation of what they perceive, combined with what they already know, can lead them to a conclusion that someone must be playing a joke on them. Aesthetic sensibilities are inherently subjective and evolve over time, in response to exposure, development of depth of knowledge, and opportunity. The more we accumulate of these guiding experiences, the more likely we are to develop the cultural capital that would allow us to stand in any art gallery in the world and perceive the art, mediated by our own rich experiences.

Cultural capital is a term used to describe the assets we have, other than money in its many forms, that can still contribute to social mobility and the perception of class. I wrote a long piece on it and perception here, if you’re interested. Dewey, working in the 1930s, was reacting to the institutionalisation of art and was able to observe people who were attempting to build a cultural reputation, through the purchase of ‘art that is recognised as art’, as part of their attempts to construct a new class identity. Too often, when people who are grounded in art history and knowledge look at people who can’t recognise ‘art that is accepted as art by artists’, there is an aspect of sneering, which is both unpleasant and counter-productive. However, such unpleasantness is easily matched by those people who stand firm in artistic ignorance and, rather than quietly ignoring things that they don’t like, demand that it cannot be art and loudly deride what they see in order to challenge everyone around them to accept the art of an earlier time as the only art that there is.

Neither of these approaches is productive. Neither supports the aesthetics of real discussion, nor is either honest in intent beyond a judgmental and dismissive approach. Not beautiful. Not true. Doesn’t achieve anything useful. Not good.

If this argument seems familiar, we can easily apply it to education because we have, for the most part, defined many things in terms of the institutions in which we find them. Everyone else who stands up and talks at people over PowerPoint slides for forty minutes is probably giving a presentation. Magically, when I do it in a lecture theatre at a University, I’m giving a lecture and now it has amazing educational powers! I once gave one of my lectures as a presentation and it was, to my amusement, labelled as a presentation without any suggestion of still being a lecture. When I am a famous professor, my lectures will probably start to transform into keynotes and masterclasses.

I would be recognised as an educator, despite having no teaching qualifications, primarily because I give presentations inside the designated educational box that is a University. The converse of this is that “university education” cannot be given outside of a University, which leaves every newcomer to tertiary education, whether face-to-face or on-line, with a definitional crisis that cannot be resolved in their favour. We already know that home-schooling, while highly variable in quality and intention, and a necessity in some places where the existing educational options are lacking, is often not taken seriously by the establishment. Even if the person teaching is a qualified teacher and the curriculum taught is an approved one, the words “home schooling” construct tension with our assumption that schooling must take place in boxes labelled as schools.

What is art? We need a better definition than “things I find in art galleries that I recognise as art” because there is far too much assumption in there, too much infrastructure required and there is not enough honesty about what art is. Some of the works of art we admire today were considered to be crimes against conventional art in their day! Let me put this in context. I am an artist and I have, with 1% of the talent, sold as many works as Van Gogh did in his lifetime (one). Van Gogh’s work was simply rubbish to most people who looked at it then.

And yet now he is a genius.

What is education? We need a better definition than “things that happen in schools and universities that fit my pre-conceptions of what education should look like.” We need to know so that we can recognise, learn, develop and improve education wherever we find it. The world population will peak at around 10 billion people. We will not have schools for all of them. We don’t have schools for everyone now. We may never have the infrastructure we need for this and we’re going to need a better definition if we want to bring real, valuable and useful education to everyone. We define in order to clarify, to guide, and to tell us what we need to do next.

First year course evaluation scheme

Posted: January 22, 2016 Filed under: Education, Opinion | Tags: advocacy, aesthetics, assessment, authenticity, beauty, design, education, educational problem, ethics, higher education, learning, reflection, resources, student perspective, teaching, teaching approaches, time management

In my earlier post, I wrote:

Even where we are using mechanical or scripted human [evaluators], the hand of the designer is still firmly on the tiller and it is that control that allows us to take a less active role in direct evaluation, while still achieving our goals.

and I said I’d discuss how we could scale up the evaluation scheme to a large first year class. Finally (and thank you for your patience), here it is.

The first thing we need to acknowledge is that most first-year/freshman classes are neither overly complex nor heavily abstract. We know that we want to work concrete to abstract, simple to complex, as we build knowledge, taking into account how students learn, their developmental stages and the mechanics of human cognition. We want to focus on difficult concepts that students struggle with, to ensure that they really understand something before we go on.

In many courses and disciplines, the skills and knowledge we wish to impart are fundamental and transformative, but really quite straight-forward to evaluate. What this means, based on what I’ve already laid out, is that my role as a designer is going to be crucial in identifying how we teach and evaluate the learning of concepts, but the assessment or evaluation probably doesn’t require my depth of expert knowledge.

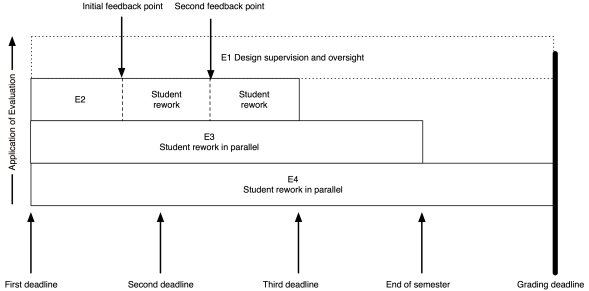

The model I put up previously now looks like this:

My role (as the notional E1) has moved entirely to design and oversight, which includes developing the E3 and E4 tests and training the next tier down, if they aren’t me.

As an example, I’ve put in two feedback points, suitable for some sort of worked output in response to an assignment. Remember that the E2 evaluation is scripted (or based on rubrics) yet provides human nuance and insight, with personalised feedback. That initial feedback point could be peer-based evaluation, group discussion and demonstration, or whatever you like. The key here is that the evaluation clearly indicates to the student how they are travelling; it’s never just “8/10 Good”. If this is a first year course then we can capture much of the required feedback with trained casuals and the underlying automated systems, or by training our students on exemplars to be able to evaluate each other’s work, at least to a degree.

The same pattern as before lies underneath: meaningful timing with real implications. To get access to human evaluation, that work has to go in by a certain date, so that everyone involved has enough time to perform the task. Let’s say the first feedback is a peer-assessment. Students can be trained on exemplars, with immediate feedback through many on-line and electronic systems, and then look at each other’s submissions. But, at time X, they know exactly how much work they have to do and are not delayed because another student handed up late. After this pass, they rework and perhaps the next point is a trained casual tutor, looking over the work again to see how well they’ve handled the evaluation.
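To make the “at time X” point concrete, here is a minimal sketch of how peer reviews might be allocated once the deadline passes. It assumes (my assumption, not part of the scheme described above) that only submissions in by the deadline enter the pool and that each student reviews a fixed number of peers; `assign_peer_reviews` and `reviews_each` are hypothetical names used for illustration only.

```python
from typing import Dict, List


def assign_peer_reviews(on_time: List[str], reviews_each: int) -> Dict[str, List[str]]:
    """Hypothetical round-robin allocation of peer reviews at the deadline.

    Only work submitted by time X is in `on_time`, so every reviewer's load
    is fixed at the deadline and nobody waits on late submissions.
    """
    n = len(on_time)
    if reviews_each >= n:
        raise ValueError("need more on-time submissions than reviews per student")
    allocation: Dict[str, List[str]] = {}
    for i, reviewer in enumerate(on_time):
        # Offsets 1..reviews_each skip offset 0, the reviewer's own work,
        # and wrap around the list so every submission is reviewed equally often.
        allocation[reviewer] = [on_time[(i + k) % n] for k in range(1, reviews_each + 1)]
    return allocation


# With four on-time students and two reviews each,
# "ana" reviews ["ben", "cai"] and "dee" reviews ["ana", "ben"].
print(assign_peer_reviews(["ana", "ben", "cai", "dee"], 2))
```

The round-robin offset is just one simple choice: it guarantees nobody reviews their own work and that every submission receives exactly `reviews_each` reviews, which is what lets everyone know their workload the moment the deadline passes.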

There could be more rework and review points. There could be fewer. The key here is that any submission deadline is only required because I need to allocate enough people to the task and keep the number of tasks to allocate, per person, at a sensible threshold.
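The staffing arithmetic behind that threshold is simple enough to sketch. This is a minimal illustration, assuming a hypothetical cap on tasks per evaluator (the cap of 30 below is an invented number, not one from the scheme) and borrowing the 400-student class size mentioned in an earlier post; `evaluators_needed` and `split_workload` are hypothetical helper names.

```python
from typing import List, Sequence, TypeVar

T = TypeVar("T")


def evaluators_needed(submissions: int, max_tasks_per_evaluator: int) -> int:
    """Smallest number of evaluators such that nobody exceeds the per-person cap."""
    if max_tasks_per_evaluator <= 0:
        raise ValueError("cap must be positive")
    # Integer ceiling division: ceil(submissions / cap) without floating point.
    return -(-submissions // max_tasks_per_evaluator)


def split_workload(submission_ids: Sequence[T], max_tasks: int) -> List[List[T]]:
    """Deal submissions out round-robin so evaluator loads differ by at most one."""
    n_eval = evaluators_needed(len(submission_ids), max_tasks)
    return [list(submission_ids[i::n_eval]) for i in range(n_eval)]


# 400 submissions with a hypothetical cap of 30 each needs 14 evaluators,
# each marking 28 or 29 pieces of work.
groups = split_workload(list(range(400)), 30)
print(len(groups), max(len(g) for g in groups))
```

Read the other way round, the same sum tells you what has to give when the people are not there: with a fixed number of evaluators, either the cap rises (overload) or the number of evaluations falls.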

Beautiful evaluation is symmetrically beautiful. I don’t overload the students or lie to them about the necessity of deadlines but, at the same time, I don’t overload my human evaluators by forcing them to do things when they don’t have enough time to do it properly.

As for them, so for us.

Throughout this process, the E1 (supervising evaluator) is seeing all of the information on what’s happening and can choose to intervene. At this scale, if E1 was also involved in evaluation, intervention would likely be last-minute and only in dire emergency. Early intervention depends upon early identification of problems and sufficient resources to be able to act. Your best agent of intervention is probably the person who has the whole vision of the course, assisted by other human evaluators. This scheme gives the designer the freedom to have that vision and allows you to plan for how many other people you need to help you.

In terms of peer assessment, we know that we can build student communities and that students can appreciate each other’s value in a way that enhances their perceptions of the course and keeps them around for longer. This can be part of our design. For example, we can ask the E2 evaluators to carry out simple community-focused activities in classes as part of the overall learning preparation and, once students are talking, get them used to the idea of discussing ideas rather than having dualist confrontations. This then leads into support for peer evaluation, with the likelihood of better results.

Some of you will be saying “But this is unrealistic, I’ll never get those resources.” Then, in all likelihood, you are going to have to sacrifice something: number of evaluations, depth of feedback, overall design or speed of intervention.

You are a finite resource. Killing you with work is not beautiful. I’m writing all of this to speak to everyone in the community, to get them thinking about the ugliness of overwork, the evil nature of demanding someone have no other life, the inherent deceit in pretending that this is, in any way, a good system.

We start by changing our minds, then we change the world.

The hand of an expert is visible in design

Posted: January 20, 2016 Filed under: Education | Tags: advocacy, aesthetics, arts and crafts, authenticity, beauty, community, design, dewey, education, educational problem, educational research, ethics, good, higher education, jeffrey petts, learning, morris, reflection, resources, student perspective, teaching, teaching approaches, thinking, time, time management, tools, truth

In yesterday’s post, I laid out an evaluation scheme that allocated the work of evaluation based on the way that we tend to teach and the availability, and expertise, of those who will be evaluating the work. My “top” (arbitrary word) tier of evaluators, the E1s, were the teaching staff who had the subject matter expertise and the pedagogical knowledge to create all of the other evaluation materials. Despite the production of all of these materials and designs already being time-consuming, in many cases we push all evaluation to this person as well. Teachers around the world know exactly what I’m talking about here.

Our problem is time. We move through it, tick after tick, in one direction and we can neither go backwards nor decrease the number of seconds it takes to perform what has to take a minute. If we ask educators to undertake good learning design, have engaging and interesting assignments, work on assessment levels well up in the taxonomies and we then ask them to spend day after day standing in front of a class and add marking on top?

Forget it. We know that we are going to sacrifice the number of tasks, the quality of the tasks or our own quality of life. (I’ve written a lot about time before, you can search my blog for time or read this, which is a good summary.) If our design was good, then sacrificing the number of tasks or their quality is going to compromise our design. If we stop getting sleep or seeing our families, our work is going to suffer and now our design is compromised by our inability to perform to our actual level of expertise!

If Henry Ford refused to work his assembly line workers beyond 40 hours because of the increased costs of mistakes in what were simple, mechanical tasks, why do we keep insisting that complex, delicate, fragile and overwhelmingly cognitive activities benefit from us being tired, caffeine-propped, short-tempered zombies?

We’re not being honest. And thus we are not meeting our requirement for truth. A design that gets mangled for operational reasons without good redesign won’t achieve our outcomes, so that’s not good. But what of beauty?

William Morris: Snakeshead Textile

What are the aesthetics of good work? In Petts’ essay on the Arts and Crafts movement, he speaks of William Morris, Dewey and Marx (it’s a delightful essay) and ties the notion of good work to work that is authentic, where such work has aesthetic consequences (unsurprisingly given that we were aiming for beauty), and that good (beautiful) work can be the result of human design if not directly the human hand. Petts makes an interesting statement, which I’m not sure Morris would let pass un-challenged. (But, of course, I like it.)

It is not only the work of the human hand that is visible in art but of human design. In beautiful machine-made objects we still can see the work of the “abstract artist”: such an individual controls his labor and tools as much as the handicraftsman beloved of Ruskin.

Jeffrey Petts, Good Work and Aesthetic Education: William Morris, the Arts and Crafts Movement, and Beyond, The Journal of Aesthetic Education, Vol. 42, No. 1 (Spring, 2008), page 36

Petts notes that it is interesting that Dewey’s own reflection on art does not acknowledge Morris, especially when the Arts and Crafts’ focus on authenticity, necessary work and a dedication to vision seems to be a very suitable framework. As well, the Arts and Crafts movement focused on the rejection of the industrial and a return to traditional crafting techniques, including social reform, which should have resonated deeply with Dewey and his peers in the Pragmatists. However, Morris’ contribution as a Pragmatist aesthetic philosopher does not seem to be recognised and, to me, this speaks volumes of the unnecessary separation between cloister and loom, when theory can live in the pragmatic world and forms of practice can be well integrated into the notional abstract. (Through an Arts and Crafts lens, I would argue that there are large differences between industrialised education and the provision, support and development of education using the advantages of technology, but that is, very much, another long series of posts, involving both David Bowie and Gary Numan.)

But here is beauty. The educational designer who carries out good design and manages to hold on to enough of her time resources to execute the design well is more aesthetically pleasing in terms of any notion of creative good works. By going through a development process to stage evaluations, based on our assessment and learning environment plans, we have created “made objects” that reflect our intention and, if authentic, then they must be beautiful.

We now have a strong motivating factor to consider both the often over-looked design role of the educator as well as the (easier to perceive) roles of evaluation and intervention.

I’ve revisited the diagram from yesterday’s post to show the different roles during the execution of the course. Now you can clearly see that the course lecturer maintains involvement and, from our discussion above, is still actively contributing to the overall beauty of the course and, we would hope, its success as a learning activity. What I haven’t shown is the role of the E1 as designer prior to the course itself, but that’s another post.

Even where we are using mechanical or scripted human markers, the hand of the designer is still firmly on the tiller and it is that control that allows us to take a less active role in direct evaluation, while still achieving our goals.

Do I need to personally look at each of the many works all of my first years produce? In our biggest years, we had over 400 students! It is beyond the scale of one person and, much as I’d love to have 40 expert academics for that course, a surplus of E1 teaching staff is unlikely anytime soon. However, if I design the course correctly and I continue to monitor and evaluate the course, then the monster of scale that I have can be defeated, if I can make a successful argument that the E2 to E4 marker tiers are going to provide the levels of feedback, encouragement and detailed evaluation that are required at these large-scale years.

Tomorrow, we look at the details of this as it applies to a first-year programming course in the Processing language, using a media computation approach.

Aside: My New York Story

Posted: January 15, 2016 Filed under: Education, Opinion | Tags: authenticity, blogging, cavafy, education, ithaka, New york, reflection, thinking

I’m a story-teller. It infuses my work. I share the stories and realities of my life in order to explain points that I think other people could appreciate. Today, I’m telling you my New York story. It’s not rags-to-riches and I’m only triumphing over my own stupidity rather than terrible obstacles. I fight to give opportunity to others but my own tales are infused with my own levels of privilege. If that bothers you, probably best to stop reading now.

Like many New York stories, this one is all about how I never lived in New York. Losing both Bowie and Alan Rickman in a few days has made me think about that city again. This is the story of how I loved a city but we never ended up together.

Oh, Oh! New York!

Even in the towns of the home counties in the UK, the adults spoke about New York as if it were Olympus, Shangri-La and a wild west town all rolled into one. You could be incredibly cool in Hampshire just by having been to America. If you had been to New York, and survived, you were some kind of god. They had different music, different cars, different money. In a Britain collapsing under the 1970s, America was a golden place.

I grew up in the 70s and was fortunate enough to hear Bowie hit the UK scene hard, to see early Doctor Who, to be mentally invigorated by very demanding progressive UK kids’ TV, to hear a Police album when they were just starting out and then, even more fortunately, we left the home counties and, because my Mum was amazingly brave and strong, we made it across the sea to Australia, where a better life awaited us.

I shrugged off Britain in weeks but New York never left me.

I continued to grow up in one of the Australian state capitals (Australian population is mostly concentrated into cities on the ocean) and it was nice, but it was no New York. I’m not sure I’ve told anyone this but, in my head, I was always going to America. That’s what success was defined as when I was a boy: you were a traveller, you did interesting things and that meant America. When every other Brit was going to the Costa del Sol and baking their skin, the interesting people were pale, thin and had walked around in magical places like Central Park, Times Square and along Broadway. They knew about music and understood what the Tarkus artwork meant on that ELP album. They even knew what “prog-rock” meant. They were art, life and wonder.

When I finished University, I started to think seriously about America. I had visited by then, and that is a story in itself, and finally seen New York. Mid-winter. Just after Christmas. Quiet and grey, on the cusp of 1995 and 1996. It did not quite amaze but it did not disappoint, even though I was walking around with a bung knee after slipping in unfamiliar snow. I began to think about how I could get there.

But one thing became clear as I thought about it. I didn’t know how to get to the New York that I wanted to be part of. I was creative but I wasn’t an amazing artist or musician and the New York I wanted to be part of was the bubbling, creative, amazing community of the 1970s. I was a middling rhythm guitarist, a karaoke-tolerable singer, an abstract artist (I still can’t draw very well), an enthusiastic poet, but, mostly, I was a computer guy. I could go and get a job but all I would be doing would be living in (or near, most likely) New York and never becoming part of my vision of NYC. Tech support in Shangri-La was not what I wanted.

I had kept an idea of where I wanted to go in my head but I had never turned that into an intention to actually go there. A goal with no plans had turned into a life without much direction. (I was lucky enough to fall in love and start a real journey and adventure locally, just as I realised that I had never set my cap for New York, but that’s another story.)

There’s a poem I love, Ithaka by C. P. Cavafy, and it talks of setting out on a magnificent journey, full of adventures and monsters, in search of the island of Ithaka as part of a glorious ancient Greek adventure. But the most important part of the poem, for me, is the end:

Ithaka gave you the marvellous journey.

Without her you would not have set out.

She has nothing left to give you now.

And if you find her poor, Ithaka won’t have fooled you.

Wise as you will have become, so full of experience,

you will have understood by then what these Ithakas mean.

As Cavafy and all of the Greek authors of myth before him note, the journey is the thing. When you arrive at your goal, if you have thought about it for long enough, then you may find it was not as good as you thought it would be. Memory may have tricked you, things may have changed. But if it caused you to start a wonderful journey, then it was worthwhile. No, not just worthwhile, it was magical. It was transcendence and enlightenment.

But, by not seeing New York as a goal and leaving it as a dream, I never set myself upon the journey and thus I deprived myself of both the adventure and the chance of achieving my dream one day.

Let’s be realistic, I was never going to live in the 1970s New York that I idolised, unless I found a time machine, but if I had actually made some career and life choices that would have seen me head to America in the 90s, I still would have made it there. It would have seen me experiencing a New York that, while not the one I thought of, would have been a capstone to an amazing journey. But, because I didn’t align myself to realise my New York dream, it didn’t happen.

My long-time love affair with New York was a fantasy and we’re both lucky that we never moved it beyond that point. I would have ended up being bitter and resentful; New York didn’t need another tech support person pretending to be an artist. You need more than attraction and the frisson of distance to have a relationship.

This year, I have reassessed my goals and dreams. I am deciding which of these will define my journey and give my life structure for the next decade or two. Where can I find new wisdom? Where can I find the experiences that will take me to new and amazing places, physically or mentally? I have been successful in a number of things but I really need to focus on the goal to make sure that I get the most out of the journey.

I’m not the same person I was back in the 90s. A lot of thinking has happened, a lot of growing has happened, a lot of love has happened. I’m more comfortable with the softer definition of myself as a communicator, an educator, an artist and even a philosopher. This small journey was triggered by the realisation that I had never chosen what to do or where to go. There’s a natural pause at this stage and it’s time to set a new heading. Where do I go from here?

The point of my New York Story is a simple one: assuming that nothing else gets in the way, you’re unlikely to get somewhere unless you actually set out for it. We often mistake what we’re doing with what we want to do, the necessary aims of our work with our real goals in life. We do something today because we did it yesterday and that means we’ll do it again tomorrow. Perhaps we should only do it tomorrow if it’s the best thing to do.

There are many things in my life that I don’t want (and don’t need) to change. But I look at Cavafy’s poem and I can smell the sea winds, hear the sails fill, and the helm is asking me where to go next.

Teaching for (current) Humans

Posted: January 13, 2016 Filed under: Education, Opinion | Tags: advocacy, authenticity, briana morrison, community, design, education, educational research, edx, ethics, higher education, in the student's head, lauren margulieux, learning, mark guzdial, moocs, on-line learning, principles of design, reflection, resources, student perspective, subgoals, teaching, teaching approaches, technology, thinking, tools, video

Leonardo’s experiments in human-octopus engineering never received appropriate recognition.

I was recently at a conference-like event where someone stood up and talked about video lectures. And these lectures were about 40 minutes long.

Over several million viewing sessions, edX have clearly shown that watchable video length tops out at just over 6 minutes. And that’s the same for certificate-earning students and the people who have enrolled for fun. For a 9-minute video, students are watching for fewer than 6 minutes. For a 40-minute video, it’s 3-4 minutes.

I raised this point to the speaker because I like the idea that, if we do on-line it should be good on-line, and I got a response that was basically “Yes, I know that but I think the students should be watching these anyway.” Um. Six minutes is the limit but, hey, students, sit there for this time anyway.

We have never been able to unobtrusively measure certain student activities as well as we can today. I admit that it’s hard to measure actual attention by looking at video activity time but it’s also hard to measure activity by watching students in a lecture theatre. When we add clickers to measure lecture activity, we change the activity and, unsurprisingly, clicker-based assessment of lecture attentiveness gives us different numbers to observation of note-taking. We can monitor video activity by watching what the student actually does and pausing/stopping a video is a very clear signal of “I’m done”. The fact that students are less likely to watch as far on longer videos is a pretty interesting one because it implies that students will hold on for a while if the end is in sight.

In a lecture, we think students fade after about 15-20 minutes but, because of physical implications, peer pressure, politeness and inertia, we don’t know how many students have silently switched off before that because very few will just get up and leave. That 6 minute figure may be the true measure of how long a human will remain engaged in this kind of task when there is no active component and we are asking them to process or retain complex cognitive content. (Speculation, here, as I’m still reading into one of these areas but you see where I’m going.) We know that cognitive load is a complicated thing and that identifying subgoals of learning makes a difference in cognitive load (Morrison, Margulieux, Guzdial) but, in so many cases, this isn’t what is happening in those long videos, they’re just someone talking with loose scaffolding. Having designed courses with short videos I can tell you that it forces you, as the designer and teacher, to focus on exactly what you want to say and it really helps in making your points, clearly. Implicit sub-goal labelling, anyone? (I can hear Briana and Mark warming up their keyboards!)

If you want to make your videos 40 minutes long, I can’t stop you. But I can tell you that everything I know tells me that you have set your materials up for another hominid species because you’re not providing something that’s likely to be effective for current humans.

At least they’re being honest

Posted: January 13, 2016 Filed under: Education, Opinion | Tags: advocacy, assessment, authenticity, community, competency-based assessment, education, educational research, ethics, higher education, learning, reflection, student perspective, teaching, teaching approaches, thinking, time banking, time management, tools

I was inspired to write this by a comment about using late penalties but dealing slightly differently with students when they owned up to being late. I have used late penalties extensively (it’s school policy) and so I have a lot of experience with the many ways students try to get around them.

Like everyone, I have had students who have tried to use honesty where every other possible way of getting the assignment in on time (starting early, working on it before the day before, miraculous good luck) has failed. Sometimes students are puzzled that “Oh, I was doing another assignment from another lecturer” isn’t a good enough excuse. (Genuine reasons for interrupted work, medical or compassionate, are different and I’m talking about the ambit extension or ‘dog ate my homework’ level of bargaining.)

My reasoning is simple. In education, owning up to something that you did, knowing that it would have punitive consequences of some sort, should not immediately cause things to become magically better. Plea bargaining (and this is an interesting article on why that's not a good idea anywhere) is you agreeing to your guilt in order to reduce your sentence. But this is, once again, horse-trading knowledge on the market. Suddenly, we don't just have a temporal currency, we have a conformal currency, where getting a better deal involves finding the 'kindest judge' among the group who will give you the 'lightest sentence'. Students optimise their behaviour to what works or, if they're lucky, they have a behaviour set that's enough to get them to a degree without changing much. The second group are mostly not who we're talking about, and I don't want to encourage the first group to become bargain-hunting mark-hagglers.

I believe that 'finding Mr Nice Lecturer' behaviour is why some students feel free to tell me that they thought someone else's course was more important than mine: I'm a pretty nice person and have a good rapport with my students, while many of my colleagues can be seen (fairly or not) as less approachable or less open.

We are not doing ourselves or our students any favours. At the very least, we risk accusations of unfairness if we extend benefits to one group who are bold enough to speak to us (and we know that impostor syndrome and lack of confidence are rife in under-represented groups). At worst, we turn our students into cynical mark shoppers, looking for the easiest touch and planning their work strategy based on what they think they can get away with instead of focusing back on the learning. The message is important and the message must be clearly communicated so that students try to do the work for when it’s required. (And I note that this may or may not coincide with any deadlines.)

We wouldn’t give credit to someone who wrote ‘True’ and then said ‘Oh, but I really meant False’. The work is important or it is not. The deadline is important or it is not. Consequences, in a learning sense, do not have to mean punishments and we do not need to construct a Star Chamber in our offices.

Yes, I do feel strongly about this. I completely understand why people do this and I have also done this before. But after thinking about it at length, I changed my practice so that being honest about something that shouldn’t have happened was appreciated but it didn’t change what occurred unless there was a specific procedural difference in handling. I am not a judge. I am not a jury. I want to change the system so that not only do I not have to be but I’m not tempted to be.

A good goal

Posted: January 12, 2016 Filed under: Education, Opinion | Tags: beauty, blogging, education, higher education, obama, president obama, reflection, teaching, thinking

I set out to make this year an amazing year. A beautiful year for education. But it's already challenging in some ways and I knew it was going to be.

You know what it feels like, sometimes.

What a great time to find this quote from President Obama. It's one that I think everyone in education should try to remember and apply to what we're doing because, every time we have new students in our classes, we get one chance to start well with them and we will never get that chance again.

“I want us to be able when we walk out this door to say we couldn’t think of anything else that we didn’t try to do—that we didn’t shy away from a challenge because it was hard. That we weren’t timid, or got tired, or somehow were thinking about the next thing because there is no next thing. This is it. Never in our lives again will we have the chance to do as much good as we do right now. I want to make sure that we maximize it.”

State of the Union Address, President Barack Obama, 2016.

The Illusion of a Number

Posted: January 10, 2016 Filed under: Education, Opinion | Tags: authenticity, beauty, curve grading, design, education, educational problem, educational research, ethics, grading, higher education, in the student's head, learning, rapaport, reflection, resources, teaching, teaching approaches, thinking, tools, wittgenstein

Rabbit? Duck? Paging Wittgenstein!

I hope you’ve had a chance to read William Rapaport’s paper, which I referred to yesterday. He proposed a great, simple alternative to traditional grading that reduces confusion about what is signalled by ‘grade-type’ feedback, as well as making things easier for students and teachers. Being me, after saying how much I liked it, I then finished by saying “… but I think that there are problems.” His approach was that we could break all grading down into: did nothing, wrong answer, some way to go, pretty much there. And that, I think, is much better than a lot of the nonsense that we pretend we hand out as marks. But, yes, I have some problems.

I note that Rapaport’s exceedingly clear and honest account of what he is doing includes this statement. “Still, there are some subjective calls to make, and you might very well disagree with the way that I have made them.” Therefore, I have license to accept the value of the overall scholarship and the frame of the approach, without having to accept all of the implementation details given in the paper. Onwards!

I think my biggest concern with the approach given is not in how it works for individual assessment elements. In that area, I think it shines, as it makes clear what has been achieved. A marker can quickly place the work into one of four boxes if there are clear guidelines as to what has to be achieved, without having to worry about one or two percentage points here or there. Because the grade bands are so distinct, as Rapaport notes, it is very hard for the student to make the 'I only need one more point' argument that so clearly indicates a focus on the grade rather than the learning. (I note that such emphasis is often what we have trained students for; there is no pejorative intention here.) I agree this is consistent, fair and time-saving (after Walvoord and Anderson), and it avoids curve grading, which I loathe with a passion.

However, my problems start when we combine a number of these triaged grades into a cumulative mark for an assignment or into a final letter grade showing progress in the course. Sections 4.3 and 4.4 of the paper detail the implementation of assignments that have triage-graded sub-tasks. Now, instead of receiving a "some way to go" for an assignment, we can start getting different scores for sub-tasks. Let's look at an example from the paper (note 12) that describes programming projects in CS.

- Problem definition 0,1,2,3

- Top-down design 0,1,2,3

- Documented code

  - Code 0,1,2,3

  - Documentation 0,1,2,3

- Annotated output

  - Output 0,1,2,3

  - Annotations 0,1,2,3

Total possible points = 18
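To make the aggregation concrete, here is a minimal Python sketch that assumes the simplest reading of the rubric above: each of the six scored sub-tasks is an unweighted 0-3 triage score and the assignment mark is their sum. The identifiers and the two students' scores are hypothetical, chosen only to show that complementary work can reach the same total.

```python
# Scored sub-tasks from the example rubric; each is triage-graded 0-3.
SUBTASKS = [
    "problem_definition",
    "top_down_design",
    "code",
    "documentation",
    "output",
    "annotations",
]
MAX_PER_SUBTASK = 3
MAX_TOTAL = MAX_PER_SUBTASK * len(SUBTASKS)  # 18


def total(scores: dict[str, int]) -> int:
    """Unweighted sum of triage scores; a missing sub-task counts as 0."""
    return sum(scores.get(task, 0) for task in SUBTASKS)


# Hypothetical students who are "pretty much there" on complementary halves.
student_a = {"problem_definition": 3, "top_down_design": 3, "code": 3}
student_b = {"documentation": 3, "output": 3, "annotations": 3}

print(total(student_a), total(student_b), MAX_TOTAL)  # 9 9 18
print(set(student_a) & set(student_b))  # set() -- no sub-task in common
```

Note that student B collects 9 points for output and annotations without submitting any code, which is exactly the kind of case the questions that follow are about.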

Remember my hypothetical situation from yesterday? I provided an example of two students who managed to score enough marks to pass by knowing the complement of each other's course knowledge. Looking at the above example, it appears possible (although not easy) for this situation to occur, with both students receiving 9/18 but for different aspects. But I have some more pressing questions:

- Should it be possible for a student to receive full marks for output, if there is no definition, design or code presented?

- Can a student receive full marks for everything else if they have no design?

The first question indicates what we already know about task dependencies: if we want to build them into numerical grading, we have to be pedantically specific and provide rules on top of the aggregation mathematics. But, more subtly, by aggregating these measures, we no longer have an 'accurately triaged' grade to indicate whether the assignment as a whole is acceptable. An assignment with no definition, design or code can hardly be considered a valid submission, yet good output, documentation and annotations (with no code) still earn points; full marks on those three alone add up to 9 of the 18 available. Simple aggregation will not give us the right result!
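One way to read "rules on top of the aggregation mathematics" is as a prerequisite gate: a sub-task earns credit only if the sub-tasks it depends on earned something. The dependency map below is a hypothetical example, not something specified in Rapaport's paper, and the sketch assumes the same unweighted 0-3 sub-task scores as the rubric.

```python
# Sub-tasks in dependency order (prerequisites always come first).
SUBTASKS = [
    "problem_definition",
    "top_down_design",
    "code",
    "documentation",
    "output",
    "annotations",
]

# Hypothetical dependency rules: each sub-task lists the sub-tasks that must
# have earned at least 1 point before it can earn any credit itself.
DEPENDS_ON = {
    "top_down_design": ["problem_definition"],
    "code": ["top_down_design"],
    "documentation": ["code"],
    "output": ["code"],
    "annotations": ["output"],
}


def gated_total(scores: dict[str, int]) -> int:
    """Sum triage scores, zeroing any sub-task whose prerequisites scored 0.

    Prerequisites are checked against already-gated scores, so a missing
    early step (e.g. no design) cascades to everything that depends on it.
    """
    effective: dict[str, int] = {}
    for task in SUBTASKS:
        prereqs_met = all(effective.get(p, 0) > 0 for p in DEPENDS_ON.get(task, []))
        effective[task] = scores.get(task, 0) if prereqs_met else 0
    return sum(effective.values())


no_code = {"documentation": 3, "output": 3, "annotations": 3}
no_design = {task: 3 for task in SUBTASKS if task != "top_down_design"}

print(gated_total(no_code))    # 0
print(gated_total(no_design))  # 3 (only the problem definition survives)
```

Even this small rule set shows the cost: every dependency has to be written down explicitly, and a single missing step wipes out marks for work that may still reflect real, if disconnected, effort.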

The second question is more for those of us who teach programming and it's a question we all should ask. If a student can get a decent grade for an assignment without submitting a design, then what message are we sending? We are, implicitly, saying that although we talk a lot about design, it's not something you have to do in order to be successful. Rapaport does go on to talk about weightings and how we can emphasise these issues, but we are still faced with an ugly reality: unless we weight our key aspects to be 50-60% of the final aggregate, students will be able to side-step them and still perform to a passing standard. Every assignment should be doing something useful, modelling the correct approaches, demonstrating correct techniques. How do we capture that?

Now, let me step back and say that I have no problem with identifying the sub-tasks and clearly indicating the level of performance using triage grading, but I disagree with using it for marks. For feedback it is absolutely invaluable: triage grading on sub-tasks will quickly tell you where the majority of students are having trouble. That then points you to an area that is more challenging than you thought, or one that your students were not prepared for, for some reason. (If every student in the class is struggling with something, the problem is more likely to lie with the teacher.) However, I see three major problems with sub-task aggregation and, thus, with final grade aggregation from assignments.

The first problem is that I think this is the wrong kind of scale to try and aggregate in this way. As Rapaport notes, agreement on clear, linear intervals in grading is never going to be achieved and is, very likely, not even possible. Recall that there are four fundamental types of scale: nominal, ordinal, interval and ratio. The scales in use for triage grading are not interval scales (the intervals aren’t predictable or equidistant) and thus we cannot expect to average them and get sensible results. What we have here are, to my eye, ordinal scales, with no objective distance but a clear ranking of best to worst. The clearest indicator of this is the construction of a B grade for final grading, where no such concept exists in the triage marks for assessing assignment quality. We have created a “some way to go but sometimes nearly perfect” that shouldn’t really exist. Think of it like runners: you win one race and you come third in another. You never actually came second in any race so averaging it makes no sense.
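The ordinal-scale point can be checked directly. On an ordinal scale, any order-preserving relabelling of the categories is equally valid, so a meaningful comparison should not change when we relabel. The sketch below codes the four triage categories as numbers in two such ways (the specific codes and the two students are hypothetical) and compares the students' means.

```python
from statistics import mean

# Triage categories, worst to best. Only the order carries information.
TRIAGE = ["did nothing", "wrong answer", "some way to go", "pretty much there"]

# Two hypothetical, equally order-preserving numeric codings.
EQUAL_STEPS = [0, 1, 2, 3]
STRETCHED = [0, 1, 2, 10]  # same ranking, different (arbitrary) spacing


def mean_score(grades: list[str], coding: list[int]) -> float:
    """Average a student's grades after mapping each category to a number."""
    return mean(coding[TRIAGE.index(g)] for g in grades)


student_x = ["pretty much there", "did nothing"]
student_y = ["some way to go", "some way to go"]

for name, coding in [("equal steps", EQUAL_STEPS), ("stretched", STRETCHED)]:
    x, y = mean_score(student_x, coding), mean_score(student_y, coding)
    print(name, x, y, "X ahead" if x > y else "Y ahead")
```

With equal steps, Y comes out ahead; stretching only the top step puts X ahead, although neither student's grades changed. The ranking of the averages depends on an arbitrary choice of spacing, which is the runners problem in numeric form.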

The second problem is that aggregation masks the beauty of triage in terms of identifying if a task has been performed to the pre-determined level. In an ideal world, every area of knowledge that a student is exposed to should be an important contributor to their learning journey. We may have multiple assignments in one area but our assessment mechanism should provide clear opportunities to demonstrate that knowledge. Thus, their achievement of sufficient assignment work to demonstrate their competency in every relevant area of knowledge should be a necessary condition for graduating. When we take triage grading back to an assignment level, we can then look at our assignments grouped by knowledge area and quickly see if a student has some way to go or has achieved the goal. This is not anywhere near as clear when we start aggregating the marks because of the mathematical issues already raised.
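Here is a minimal sketch of that per-area view, under two assumptions that are not from the paper: each assignment is tagged with a single knowledge area, and an area counts as achieved once any assignment in it is graded "pretty much there" (3 on a 0-3 triage scale). The area names are hypothetical. Rather than summing, it reports the areas a student has not yet demonstrated.

```python
PRETTY_MUCH_THERE = 3  # top triage band on a 0-3 scale


def unmet_areas(results: list[tuple[str, int]], required: list[str]) -> list[str]:
    """Return required knowledge areas where no assignment reached the top band.

    results: (knowledge_area, triage_grade) pairs, one per assessed assignment.
    Areas with no assessed work at all are reported as unmet.
    """
    best = {area: 0 for area in required}
    for area, grade in results:
        if area in best:
            best[area] = max(best[area], grade)
    return sorted(area for area, grade in best.items() if grade < PRETTY_MUCH_THERE)


required = ["recursion", "design", "testing"]
results = [("recursion", 3), ("design", 2), ("design", 3)]

print(unmet_areas(results, required))  # ['testing']
```

Unlike a summed mark, this cannot be rescued by strong work elsewhere: the student still has work to do in testing, no matter how well the other areas went.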

Finally, the reduction of triage to mathematical approximation reduces our ability to specify which areas of an assessment are really valuable. While weighting is a reasonable approximation to this, it is very hard to justify a mathematical formula that needs more and more 'fudge factors' (a term Rapaport uses) to make up for the fact that the aggregation is just a little too fragile.

To summarise, I really like the thrust of this paper. I think what is proposed is far better, even with all of the problems raised above, at giving a reasonable, fair and predictable grade to students. But I think that the clash with existing grading traditions and the implicit requirement to turn everything back into one number is causing problems that have to be addressed. These problems mean that this solution is not, yet, beautiful. But let’s see where we can go.

Tomorrow, I’ll suggest an even more cut-down version of grading and then work on an even trickier problem: late penalties and how they affect grades.

Assessment is (often) neither good nor true.

Posted: January 9, 2016 Filed under: Education, Opinion | Tags: advocacy, aesthetics, beauty, community, design, education, ethics, higher education, in the student's head, kohn, principles of design, rapaport, reflection, student perspective, teaching, teaching approaches, thinking, tools, universal principles of design, work/life balance, workload

If you've been reading my blog over the past years, you'll know that I have a lot of time for thinking about assessment systems that encourage and develop students, with an emphasis on intrinsic motivation. I'm strongly influenced by the work of Alfie Kohn, unsurprisingly given I've already shown my hand on Foucault! But there are many other writers who are… reassessing assessment: why we do it, why we think we are doing it, how we do it, what actually happens and what we achieve.

In my framing, I want assessment to be like all other aspects of education: aesthetically satisfying, leading to good outcomes and being clear about what it is and what it is not. Beautiful. Good. True. There are some better and worse assessment approaches out there and there are many papers discussing this. One of these that I have found really useful is Rapaport's paper on a simplified assessment process for consistent, fair and efficient grading. Although I disagree with some aspects, I consider it to be both good, as it is designed to clearly address a certain problem to achieve good outcomes, and true, because it is very honest about providing guidance to the student as to how well they have met the challenge. It is also highly illustrative and honest in representing the struggle of the author in dealing with the collision of novel and traditional assessment systems. However, further discussion of Rapaport is for the near future. Let me start by demonstrating how broken things often are in assessment, by taking you through a hypothetical situation.

Thought Experiment 1

Two students, A and B, are taking the same course. There are a number of assignments in the course and two exams. A and B, by sheer luck, end up doing no overlapping work. They complete different assignments from each other, half each, and achieve the same (cumulative bare pass) marks. They then manage to score bare pass marks in both exams, but A answers only the even questions and B only the odd. (And, yes, there are an even number of questions.) Because of the way the assessment was constructed, they have managed to avoid any common answers in the same area of course knowledge. Yet both end up scoring 50%, a passing grade in the Australian system.
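The thought experiment is easy to reproduce. The sketch below assumes a hypothetical course with ten assignments and two ten-question exams, made-up weights of 40/30/30, and full marks on every item a student attempts; A takes the even-numbered items and B the odd-numbered ones. Exact fractions avoid floating-point noise.

```python
from fractions import Fraction

# Hypothetical course structure: items numbered 1-10 in each component.
ITEMS = {
    "assignments": set(range(1, 11)),
    "exam1": set(range(1, 11)),
    "exam2": set(range(1, 11)),
}
# Illustrative weights (percent); with a 50/50 split in every component,
# any weights that sum to 100 give the same overall result.
WEIGHTS = {"assignments": 40, "exam1": 30, "exam2": 30}


def pick(items: set[int], parity: int) -> set[int]:
    """Select the items whose number has the given parity (0 = even, 1 = odd)."""
    return {i for i in items if i % 2 == parity}


# A attempts only even-numbered items, B only odd-numbered ones.
student_a = {part: pick(items, 0) for part, items in ITEMS.items()}
student_b = {part: pick(items, 1) for part, items in ITEMS.items()}


def overall(attempted: dict[str, set[int]]) -> Fraction:
    """Weighted percentage, assuming every attempted item earns full marks."""
    return sum(
        (Fraction(WEIGHTS[part] * len(attempted[part]), len(ITEMS[part])) for part in ITEMS),
        Fraction(0),
    )


overlap = {part: student_a[part] & student_b[part] for part in ITEMS}

print(overall(student_a), overall(student_b))  # 50 50
print(all(not shared for shared in overlap.values()))  # True
```

Both students land on exactly 50% and share no attempted item, so the final number says nothing about which half of the course either of them knows.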

Which of these students has the correct half of the knowledge?

I had planned to build up to Rapaport but, if you're reading the blog comments, he's already been mentioned, so I'll summarise his 2011 paper before I get to my main point. In 2011, William J. Rapaport, SUNY Buffalo, published a paper entitled "A Triage Theory of Grading: The Good, The Bad and the Middling" in Teaching Philosophy. This paper summarised a number of thoughtful and important authors, among them Perry, Wolff, and Kohn. Rapaport starts by asking why we grade, moving through Wolff's taxonomic classification of assessment into criticism, evaluation, and ranking. Students are trained, by our world and our education systems, to treat grades as a measure of progress and, in many ways, a proxy for knowledge. But this brings us into conflict with Perry's developmental stages, where students start with a deep need for authority and the safety of a single right answer. It is only when students are capable of understanding that there are, in many cases, multiple right answers that we can expect them to understand that grades can have multiple meanings. As Rapaport notes, grades are inherently dual: a representative symbol attached to a quality measure and then, in his words, "ethical and aesthetic values are attached" (emphasis mine). In other words, a B is a measure of progress (not quite there) that also has a value of being … second-tier if an A is our measure of excellence. A is not A, as it must be contextualised. Sorry, Ayn.

When we start to examine why we are grading, Kohn tells us that the carrot and stick is never as effective as the motivation that someone has intrinsically. So we look to Wolff: are we critiquing for feedback, are we evaluating learning, or are we providing handy value measures for sorting our product for some consumer or market? Returning to my thought experiment above, we cannot provide feedback on assignments that students don't do, our evaluation of learning says that both students are acceptable despite holding complementary knowledge, and our students cannot be told apart by their graded rank, despite the fact that they have nothing in common!

Yes, it’s an artificial example but, without attention to the design of our courses and in particular the design of our assessment, it is entirely possible to achieve this result to some degree. This is where I wish to refer to Rapaport as an example of thoughtful design, with a clear assessment goal in mind. To step away from measures that provide an (effectively) arbitrary distinction, Rapaport proposes a tiered system for grading that simplifies the overall system with an emphasis on identifying whether a piece of assessment work is demonstrating clear knowledge, a partial solution, an incorrect solution or no work at all.

This, for me, is an example of assessment that is pretty close to true. The difference between a 74 and a 75 is, in most cases, not very defensible (after Haladyna) unless you are applying some kind of 'quality gate' that really reduces a percentage scale to, at most, 13 different outcomes. Rapaport's argument is that we can reduce this further and that this will reduce grade clawing, identify clear levels of achievement and reduce the marking load on the assessor. That last point is important. A system that buries the marker under load is not sustainable. It cannot be beautiful.

There are issues in taking this approach and turning it back into the grades that our institutions generally require. Rapaport is very open about the difficulties that he has turning his triage system into an acceptable letter grade and it’s worth reading the paper to see that discussion alone, because it quite clearly shows what

Rapaport’s scheme clearly defines which of Wolff’s criteria he wishes his assessment to achieve. The scheme, for individual assessments, is no good for ranking (although we can fashion a ranking from it) but it is good to identify weak areas of knowledge (as transmitted or received) for evaluation of progress and also for providing elementary critique. It says what it is and it pretty much does it. It sets out to achieve a clear goal.

The paper ends with a summary of the key points of Haladyna’s 1999 book “A Complete Guide to Student Grading”, which brings all of this together.

Haladyna says that "Before we assign a grade to any students, we need:

- an idea about what a grade means,

- an understanding of the purposes of grading,

- a set of personal beliefs and proven principles that we will use in teaching and grading,

- a set of criteria on which the grade is based, and, finally,

- a grading method, which is a set of procedures that we consistently follow in arriving at each student's grade." (Haladyna 1999: ix)

There is no doubt that Rapaport's scheme meets all of these criteria and yet, for me, we have not yet gone far enough in search of the most beautiful, most good and most true extent to which we can take this idea. Is point 3, which could be summarised as aesthetics, not enough for me? Apparently not.

Tomorrow I will return to Rapaport to discuss those aspects I disagree with and, later on, discuss both an even more trimmed-down model and some more controversial aspects.