Why “#thedress” is the perfect perception tester.

Posted: March 2, 2015 Filed under: Education, Opinion | Tags: advocacy, authenticity, Avant garde, collaboration, community, Dada, data visualisation, design, education, educational problem, ethics, feedback, higher education, in the student's head, Kazimir Malevich, learning, Marcel Duchamp, modern art, principles of design, readymades, reflection, resources, Russia, Stalin, student perspective, Suprematism, teaching, thedress, thinking

I know, you’re all over the dress. You’ve moved on to (checks Twitter) “#HouseOfCards”, Boris Nemtsov and the new Samsung gadgets. I wanted to touch on some of the things I mentioned in yesterday’s post and why that dress picture was so useful.

The first reason is that issues of conflict caused by different perception are not new. You only have to look at the furore surrounding the introduction of Impressionism, the scandal of the colour palette of the Fauvists, the outrage over Marcel Duchamp’s readymades and Dada in general, to see that art is an area that is constantly generating debate and argument over what is, and what is not, art. One of the biggest changes has been the move away from representational art to abstract art, mainly because we are no longer capable of making the simple objective comparison of “that painting looks like the thing that it’s a painting of.” (Let’s not even start on the ongoing linguistic violence over ending sentences with prepositions.)

Once we move art into the abstract, suddenly we are asking a question beyond “does it look like something?” and move into the realm of “does it remind us of something?”, “does it make us feel something?” and “does it make us think about the original object in a different way?” You don’t have to go all the way to using body fluids and live otters in performance pieces to start running into the refrains so often heard in art galleries: “I don’t get it”, “I could have done that”, “It’s all a con”, “It doesn’t look like anything” and “I don’t like it.”

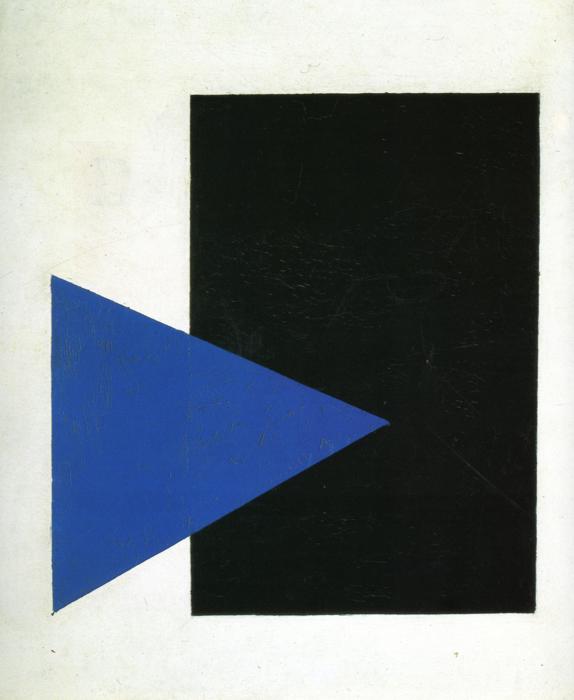

This was a radical departure from art of the time, part of the Suprematism movement that flourished briefly before Stalin suppressed it, heavily and brutally. Art like this was considered subversive, dangerous and a real threat to the morality of the citizenry. Not bad for two simple shapes, is it? And, yet, many people will look at this and use one of the above phrases. There is an enormous range of perception on this very simple (yet deeply complicated) piece of art.

The viewer is, of course, completely entitled to their subjective opinion on art but this is, in many cases, a perceptual issue caused by a lack of familiarity with the intentions, practices and goals of abstract art. When we were still painting pictures of houses and rich people, there were many pictures from the 16th to 18th century which contain really badly painted animals. It’s worth going to an historical art museum just to look at all the crap animals. Looking at early European artists trying to capture Australian fauna gives you the same experience – people weren’t painting what they were seeing, they were painting a reasonable approximation of the representation and putting that into the picture. Yet this was accepted and it was accepted because it was a commonly held perception. This also explains offensive (and totally unrealistic) caricatures along racial, gender or religious lines: you accept the stereotype as a reasonable portrayal because of shared perception. (And, no, I’m not putting pictures of that up.)

But, when we talk about art or food, it’s easy to get caught up in things like cultural capital, the assets we have that aren’t money but allow us to be more socially mobile. “Knowing” about art, wine or food has real weight in certain social situations, so the background here matters. Thus, the fact that two people can look at the same abstract piece and have one be enraptured while the other wants their money back is not a clean perceptual distinction, free of outside influence. We can’t say “human perception is a very personal business” based on this alone because there are too many arguments to be made about prior knowledge, art appreciation, socioeconomic factors and cultural capital.

But let’s look at another argument starter, the dreaded Monty Hall Problem, where there are three doors, a good prize behind one, and you have to pick a door to try and win a prize. If the host opens a door showing you where the prize isn’t, do you switch or not? (The correctly formulated problem is designed so that switching is the right thing to do but, again, so much argument.) This is, again, a perceptual issue because of how people think about probability and how much weight they invest in their decision making process, how they feel when discussing it and so on. I’ve seen people get into serious arguments about this and this doesn’t even scratch the surface of the incredible abuse Marilyn vos Savant suffered when she had the audacity to post the correct solution to the problem.
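If you would rather not take the switching argument on trust, a simulation settles it faster than a shouting match. What follows is a minimal sketch in Python, assuming the standard formulation (the host knows where the prize is and always opens a losing door that you didn’t pick). The function names `play` and `win_rate` are made up for illustration.

```python
import random


def play(switch: bool, rng: random.Random) -> bool:
    """Play one round; return True if the contestant wins the prize."""
    doors = [0, 1, 2]
    prize = rng.choice(doors)
    pick = rng.choice(doors)
    # Assumption: the host always opens a door that is neither the
    # contestant's pick nor the prize door.
    opened = rng.choice([d for d in doors if d != pick and d != prize])
    if switch:
        # Move to the one remaining closed door.
        pick = next(d for d in doors if d != pick and d != opened)
    return pick == prize


def win_rate(switch: bool, trials: int = 100_000, seed: int = 0) -> float:
    """Estimate the fraction of rounds won with the given strategy."""
    rng = random.Random(seed)
    wins = sum(play(switch, rng) for _ in range(trials))
    return wins / trials


if __name__ == "__main__":
    print("switch:", win_rate(True))
    print("stick: ", win_rate(False))
```

Under these assumptions, switching wins about two-thirds of the time and sticking wins about one-third. Change the host’s behaviour (say, let the host open a door at random) and the numbers change, which is part of why the arguments never end.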

This is another great example of what happens when the human perceptual system, environmental factors and facts get jammed together but… it’s also not clean because you can start talking about previous mathematical experience, logical thinking approaches, textual analysis and so on. It’s easy to say that “ah, this isn’t just a human perceptual thing, it’s everything else.”

This is why I love that stupid dress picture. You don’t need to have any prior knowledge of art, cultural capital, mathematical background, history of game shows or whatever. All you need are eyes and a relatively functional sense of colour. (The dress doesn’t even hit most of the colour blindness issues, interestingly.)

The dress is the clearest example we have that two people can look at the same thing and it’s perception issues that are inbuilt and beyond their control that cause them to have a difference of opinion. We finally have a universal example of how being human is not being sure of the world that we live in and one that we can reproduce anytime we want, without having to carry out any more preparation than “have you seen this dress?”

What we do with it is, as always, the important question now. For me, it’s a reminder to think about issues of perception before I explode with rage across the Internet. Some things will still just be dumb, cruel or evil – the dress won’t heal the world but it does give us a new filter to apply. But it’s simple and clean, and that’s why I think the dress is one of the best things to happen recently to help to bring us together in our discussions so that we can sort out important things and get them done.

Is this a dress thing? #thedress

Posted: March 1, 2015 Filed under: Education, Opinion | Tags: advocacy, authenticity, blogging, blue and black dress, colour palette, community, data visualisation, education, educational problem, educational research, ethics, feedback, higher education, improving perception, learning, Leonard Nimoy, llap, measurement, perception, perceptual system, principles of design, teaching, teaching approaches, thedress, thinking

For those who missed it, the Internet recently went crazy over llamas and a dress. (If this is the only thing that survives our civilisation, boy, is that sentence going to confuse future anthropologists.) Llamas are cool (there ain’t no karma drama with a llama) so I’m going to talk about the dress. This dress (with handy RGB codes thrown in, from a Wired article I’m about to link to):

When I first saw it, and I saw it early on, the poster was asking what colour it was because she’d taken a picture in the store of a blue and black dress and, yet, in the picture she took, it sometimes looked white and gold and it sometimes looked blue and black. The dress itself is not what I’m discussing here today.

Let’s get something out of the way. Here’s the Wired article to explain why two different humans can see this dress as two different colours and be right. Okay? The fact is that the dress that the picture is of is a blue and black dress (which is currently selling like hot cakes, by the way) but the picture itself is, accidentally, a picture that can be interpreted in different ways because of how our visual perception system works.

This isn’t a hoax. There aren’t two images (or more). This isn’t some elaborate Alternative Reality Game prank.

But the reaction to the dress itself was staggering. In between other things, I plunged into a variety of different social fora to observe the reaction. (Other people also noticed this and have written great articles, including this one in The Atlantic. Thanks for the link, Marc!) The reactions included:

- Genuine bewilderment on the part of people who had already seen both on the same device at nearly adjacent times and were wondering if they were going mad.

- Fierce tribalism from the “white and gold” and “black and blue” camps, within families, across social groups as people were convinced that the other people were wrong.

- People who were sure that it was some sort of elaborate hoax with two images. (No doubt, Big Dress was trying to cover something up.)

- Bordering-on-smug explanations from people who believed that seeing it a certain way indicated that they had superior “something or other”, where you can put day vision/night vision/visual acuity/colour sense/dressmaking skill/pixel awareness/photoshop knowledge.

- People who thought it was interesting and wondered what was happening.

- Attention policing from people who wanted all of social media to stop talking about the dress because we should be talking about (insert one or more) llamas, Leonard Nimoy (RIP, LLAP, \\//) or the disturbingly short lifespan of Russian politicians.

The issue to take away, and the reason I’ve put this on my education blog, is that we have just had an incredibly important lesson in human behavioural patterns. The (angry) team formation. The presumption that someone is trying to make us feel stupid, playing a prank on us. The inability to recognise that the human perceptual system is, before we put any actual cognitive biases in place, incredibly and profoundly affected by the processing shortcuts our perceptual systems take to give us a view of the world.

I want to add a new question to all of our on-line discussion: is this a dress thing?

There are matters that are not the province of simple perceptual confusion. Human rights, equality, murder, are only three things that do not fall into the realm of “I don’t quite see what you see”. Some things become true if we hold the belief – if you believe that students from background X won’t do well then, weirdly enough, they don’t do well. But there are areas in education when people can see the same things but interpret them in different ways because of contextual differences. Education researchers are well aware that a great deal of what we see and remember about school is often not how we learned but how we were taught. Someone who claims that traditional one-to-many lecturing, as the only approach, worked for them, when prodded, will often talk about the hours spent in the library or with study groups to develop their understanding.

When you work in education research, you get used to people effectively calling you a liar to your face because a great deal of our research says that what we have been doing is actually not a very good way to proceed. But when we talk about improving things, we are not saying that current practitioners suck, we are saying that we believe that we have evidence and practice to help everyone to get better in creating and being part of learning environments. However, many people feel threatened by the promise of better, because it means that they have to accept that their current practice is, therefore, capable of improvement and this is not a great climate in which to think, even to yourself, “maybe I should have been doing better”. Fear. Frustration. Concern over the future. Worry about being in a job. Constant threats to education. It’s no wonder that the two sides who could be helping each other, educational researchers and educational practitioners, can look at the same situation and take away both a promise of a better future and a threat to their livelihood. This is, most profoundly, a dress thing in the majority of cases. In this case, the perceptual system of the researchers has been influenced by research on effective practice, collaboration, cognitive biases and the operation of memory and cognitive systems. Experiment after experiment, with mountains of very cautious, patient and serious analysis to see what can and can’t be learnt from what has been done. This shows the world in a different colour palette and I will go out on a limb and say that there are additional colours in their palette, not just different shades of existing elements. The perceptual system of other people is shaped by their environment and how they have perceived their workplace, students, student behaviour and the personalisation and cognitive aspects that go with this. But the human mind takes shortcuts. Makes assumptions. Has biases. Fills in gaps to match the existing model and ignores other data. We know about this because research has been done on all of this, too.

You look at the same thing and the way your mind works shapes how you perceive it. Someone else sees it differently. You can’t understand each other. It’s worth asking, before we deploy crushing retorts in electronic media, “is this a dress thing?”

The problem we have is exactly as we saw from the dress: how we address the situation where both sides are convinced that they are right and, from a perceptual and contextual standpoint, they are. We are now in the “post Dress” phase where people are saying things like “Oh God, that dress thing. I never got the big deal” whether they got it or not (because the fad is over and disowning an old fad is as faddish as a fad) and, more reflectively, “Why did people get so angry about this?”

At no point was arguing about the dress colour going to change what people saw until a certain element in their perceptual system changed what it was doing and then, often to their surprise and horror, they saw the other dress! (It’s a bit H.P. Lovecraft, really.) So we then had to work out how we could see the same thing and both be right, then talk about what the colour of the dress that was represented by that image was. I guarantee that there are people out in the world still who are convinced that there is a secret white and gold dress out there and that they were shown a picture of that. Once you accept the existence of these people, you start to realise why so many Internet arguments end up descending into the ALL CAPS EXCHANGE OF BALLISTIC SENTENCES: failing to accept that what we personally perceive as being the truth might not be universally perceived is one of the biggest causes of argument. And we’ve all done it. Me, included. But I try to stop myself before I do it too often, or at all.

We have just had a small and bloodless war across the Internet. Two teams have seized the same flag and had a fierce conflict based on the fact that the other team just doesn’t get how wrong they are. We don’t want people to be bewildered about which way to go. We don’t want to stay at loggerheads and avoid discussion. We don’t want to baffle people into thinking that they’re being fooled or be condescending.

What we want is for people to recognise when they might be looking at what is, mostly, a perceptual problem and then go “Oh” and see if they can reestablish context. It won’t always work. Some people choose to argue in bad faith. Some people just have a bee in their bonnet about some things.

“Is this a dress thing?”

In amongst the llamas and the Vulcans and the assassination of Russian politicians, something that was probably almost as important happened. We all learned that we can be both wrong and right in our perception but it is the way that we handle the situation that truly determines whether we’re handling the situation in the wrong or right way. I’ve decided to take a two week break from Facebook to let all of the latent anger that this stirred up die down, because I think we’re going to see this venting for some time.

Maybe you disagree with what I’ve written. That’s fine but, first, ask yourself “Is this a dress thing?”

Live long and prosper.

We don’t need no… oh, wait. Yes, we do. (@pwc_AU)

Posted: February 11, 2015 Filed under: Education, Opinion | Tags: advocacy, Australia, community, education, educational research, ethics, feedback, G20, higher education, learning, measurement, pricewaterhousecoopers, pwc, reflection, resources, science and technology, thinking, tools

The most important thing about having a good idea is not the idea itself, it’s doing something with it. In the case of sharing knowledge, you have to get good at communication or the best ideas in the world are going to be ignored. (Before anyone says anything, please go and review the advertising industry which was worth an estimated 14 billion pounds in 2013 in the UK alone. The way that you communicate ideas matters and has value.)

Knowledge doesn’t leap unaided into most people’s heads. That’s why we have teachers and educational institutions. There are auto-didacts in the world and most people can pull themselves up by their bootstraps to some extent but you still have to learn how to read and the more expertise you can develop under guidance, the faster you’ll be able to develop your expertise later on (because of how your brain works in terms of handling cognitive load in the presence of developed knowledge.)

When I talk about the value of making a commitment to education, I often take it down to two things: ongoing investment and excellent infrastructure. You can’t make bricks without clay and clay doesn’t turn into bricks by itself. But I’m in the education machine – I’m a member of the faculty of a pretty traditional University. I would say that, wouldn’t I?

That’s why it’s so good to see reports coming out of industry sources to confirm that, yes, education is important because it’s one of the many ways to drive an economy and maintain a country’s international standing. Many people don’t really care if University staff are having to play the banjo on darkened street corners to make ends meet (unless the banjo is too loud or out of tune) but they do care about things like collapsing investments and being kicked out of the G20 to be replaced by nations that, until recently, we’ve been able to list as developing.

PricewaterhouseCoopers (PwC) have recently published a report where they warn that over-dependence on mining and lack of investment in science and technology are going to put Australia in a position where it will no longer be one of the world’s 20 largest economies but will be relegated, replaced by Vietnam and Nigeria. In fact, the outlook is bleaker than that, moving Australia back beyond Bangladesh and Iran, countries that are currently receiving international support. This is no slur on the countries that are developing rapidly, improving conditions for their citizens and heading up. But it is an interesting reflection on what happens to a developed country when it stops trying to do anything new and gets left behind. Of course, science and technology (STEM) does not leap fully formed from the ground so this, in turn, means that we’re going to have to make sure that our educational system is sufficiently strong, well-developed and funded to be able to produce the graduates who can then develop the science and technology.

We in the educational community and surrounds have been saying this for years. You can’t have an innovative science and technology culture without strong educational support and you can’t have a culture of innovation without investment and infrastructure. But, as I said in a recent tweet, you don’t have to listen to me bang on about “social contracts”, “general benefit”, “universal equity” and “human rights” to think that investing in education is a good idea. PwC is a multi-national company that’s the second largest professional services company in the world, with annual revenues around $34 billion. And that’s in hard American dollars, which are valuable again compared to the OzD. PwC are serious money people and they think that Australia is running a high risk if we don’t start looking at serious alternatives to mining and get our science and technology engines well-lubricated and running. And running quickly.

The first thing we have to do is to stop cutting investment in education. It takes years to train a good educator and it takes even longer to train a good researcher at University on top of that. When we cut funding to Universities, we slow our hiring, which stops refreshment, and we tend to offer redundancies to expensive people, like professors. Academic staff are not interchangeable cogs. After 12 years of school, they undertake somewhere along the lines of 8-10 years of study to become academics and then they really get useful about 10 years after that through practice and the accumulation of experience. A Professor is probably 30 years of post-school investment, especially if they have industry experience. A good teacher is 15+. And yet these expensive staff are often targeted by redundancies because we’re torn between the need to have enough warm bodies to put in front of students and the need to cut costs. So, not only do we need to stop cutting, we need to start spending and then commit to that spending for long enough to make a difference – say 25 years.

The next thing, really at the same time, we need to do is to foster a strong innovation culture in Australia by providing incentives and sound bases for research and development. This is (despite what happened last night in Parliament) not the time to be cutting back, especially when we are subsidising exactly those industries that are not going to keep us economically strong in the future.

But we have to value education. We have to value teachers. We have to make it easier for people to make a living while having a life and teaching. We have to make education a priority and accept the fact that every dollar spent in education is returned to us in so many different ways, but it’s just not easy to write it down on a balance sheet. PwC have made it clear: science and technology are our future. This means that good, solid educational systems from the start of primary to tertiary and beyond are now one of the highest priorities we can have or our country is going to sink backwards. The sheep’s back we’ve been standing on for so long will crush us when it rolls over and dies in a mining pit.

I have many great ethical and social arguments for why we need to have the best education system we can have and how investment is to the benefit of every Australian. PwC have just provided a good financial argument for those among us who don’t always see past a 12 month profit and loss sheet.

Always remember, the buggy whip manufacturers are the last people to tell you not to invest in buggy whips.

I Am Self-righteous, You Are Loud, She is Ignored

Posted: February 9, 2015 Filed under: Education | Tags: advocacy, authenticity, blogging, collaboration, community, discussion, education, educational problem, educational research, ethics, feedback, higher education, in the student's head, Lani Guinier, learning, reflection, scientific theory, student perspective, students, teaching, tools

If we’ve learned anything from recent Internet debates that have become almost Lovecraftian in the way that a single word uttered in the wrong place can cause an outbreak of chaos, it is that the establishment of a mutually acceptable tone is the only sensible way to manage any conversation that is conducted outside of body-language cues. Or, in short, we need to work out how to stop people screaming at each other when they’re safely behind their keyboards or (worse) anonymity.

As a scientist, I’m very familiar with the approach that says that all ideas can be questioned and it is only by ferocious interrogation of reality, ideas, theory and perception that we can arrive at a sound basis for moving forward.

But, as a human, I’m aware that conducting ourselves as if everyone is made of uncaring steel is, to put it mildly, a very poor way to educate and it’s a lousy way to arrive at complex consensus. In fact, while we claim such an approach is inherently meritocratic, as good ideas must flourish under such rigour, it’s more likely that we will only hear ideas from people who can endure the system, regardless of whether those people have the best ideas. A recent book, “The Tyranny of the Meritocracy” by Lani Guinier, looks at how supposedly meritocratic systems in education are really measures of privilege levels prior to going into education and that education is more about cultivating merit, rather than scoring a measure of merit that is actually something else.

This isn’t to say that face-to-face arguments are isolated from the effects that are caused by antagonists competing to see who can keep making their point for the longest time. If one person doesn’t wish to concede the argument but the other can’t see any point in making progress, it is more likely for the (for want of a better term) stubborn party to claim that they have won because they have reached a point where the other person is “giving up”. But this illustrates the key flaw that underlies many arguments – that one “wins” or “loses”.

In scientific argument, in theory, we all get together in large rooms, put on our discussion togas and have at ignorance until we force it into knowledge. In reality, what happens is someone gets up and presents and the overall impression of competency is formed by:

- The gender, age, rank, race and linguistic grasp of the speaker

- Their status in the community

- How familiar the audience are with the work

- How attentive the audience are and whether they’re all working on grants or e-mail

- How much they have invested in the speaker being right or wrong

- Objective scientific assessment

We know about the first one because we keep doing studies that tell us that women cannot be assessed fairly by the majority of people, even in blind trials where all that changes on a CV is the name. We know that status has a terrible influence on how we perceive people. Dunning-Kruger (for all of its faults) and novelty effects influence how critical we can be. We can go through all of these and we come back to the fact that our pure discussion is tainted by the rituals and traditions of presentation, with our vaunted scientific objectivity coming in after we’ve stripped off everything else.

It is still there, don’t get me wrong, but you stand a much better chance of getting a full critical hearing with a prepared, specialist audience who have come together with a clear intention to attempt to find out what is going on, rather than an intention to destroy what is being presented. There is always going to be something wrong or unknown but, if you address the theory rather than the person, you’ll get somewhere.

I often refer to this as the difference between scientists and lawyers. If we’re trying to build a better science then we’re always trying to improve understanding through genuine discovery. Defence lawyers are trying to sow doubt in the mind of judges and juries, invalidating evidence for reasons that are nothing to do with the strength of the evidence, and preventing wider causal linkages from forming that would be to the detriment of their client. (Simplistic, I know.)

Any scientific theory must be able to stand up to scientific enquiry because that’s how it works. But the moment we turn such a process into an inquisition where the process becomes one that the person has to endure then we are no longer assessing the strength of the science – we are seeing if we can shout someone into giving up.

As I wrote in the title, when we are self-righteous, whether legitimately or not, we will be happy to yell from the rooftops. If someone else is doing it with us then we might think they are loud but how can someone else’s voice be heard if we have defined all exchange in terms of this exhausting primal scream? If that person comes from a traditionally under-represented or under-privileged group then they may have no way at all to break in.

The mutual establishment of tone is essential if we are to hear all of the voices who are able to contribute to the improvement and development of ideas and, right now, we are downright terrible at it. For all we know, the cure for cancer has been ignored because it had the audacity to show up in the mind of a shy, female, junior researcher in a traditionally hierarchical lab that will let her have her own ideas investigated when she gets to be a professor.

Or it would have occurred to someone had she received education but she’s stuck in the fields and won’t ever get more than a grade 5 education. That’s not a meritocracy.

One of the reasons I think that we’re so bad at establishing tone and seeing past the illusion of meritocracy is the reason that we’ve always been bad at handling bullying: we are more likely to see a spill-over reaction from the target than the initial action except in the most obvious cases of physical bullying. Human language and body-assisted communication are subtle and words are more than words. Let’s look at this sentence:

“I’m sure he’s doing the best he can.”

You can adjust this sentence to be incredibly praising, condescending, downright insulting, dismissive and indifferent without touching the content of the sentence. But, written like this, it is robbed of tone and context. If someone has been “needled” with statements like this for months, then a sudden outburst is increasingly likely, especially in stressful situations. This is the point at which someone says “But I only said … ” If our workplaces are innately rife with inter-privilege tension and high stress due to the collapse of the middle class – no wonder people blow up!

We have the same problem in the on-line community from an approach called Sea-Lioning, where persistent questioning is deployed in a way that, with each question isolated, appears innocuous but, as a whole, forms a bullying technique to undermine and intimidate the original writer. Now some of this is because there are people who honestly cannot tell what a mutually respectful tone looks like and really want to know the answer. But, if you look at the cartoon I linked to, you can easily see how this can be abused and, in particular, how it can be used to shut down people who are expressing ideas in new space. We also don’t get the warning signs of tone. Worse still, we often can’t or don’t walk away because we maintain a connection that the other person can jump on anytime they want to. (The best thing you can do sometimes on Facebook is to stop notifications because you stop getting tapped on the shoulder by people trying to get up your nose. It is like a drink of cool water on a hot day, sometimes. I do, however, realise that this is easier to say than do.)

When students communicate over our on-line forums, we do keep an eye on them for behaviour that is disrespectful or downright rude so that we can step in and moderate the forum, but we don’t require moderation before comment. Again, we have the notion that all ideas can be questioned, because SCIENCE, but the moment we realise that some questions can be asked not to advance the debate but to undermine and intimidate, we have to look very carefully at the overall context and how we construct useful discussion, without being incredibly prescriptive about what form discussion takes.

I recently stepped in to a discussion about some PhD research that was being carried out at my University because it became apparent that someone was acting in, if not bad faith, an aggressive manner that was not actually achieving any useful discussion. When questions were answered, the answers were dismissed, the argument recast and, to be blunt, a lot of random stuff was injected to discredit the researcher (for no good reason). When I stepped in to point out that this was off track, my points were side-stepped, a new argument came up and then I realised that I was dealing with a most amphibious mammal.

The reason I bring this up is that when I commented on the post, I immediately got positive feedback from a number of people on the forum who had been uncomfortable with what had been going on but didn’t know what to do about it. This is the worst thing about people who set a negative tone and hold it down: we end up with social conventions of politeness stopping other people from commenting or saying anything because it’s possible that the argument is being made in good faith. This is precisely the trap a bad faith actor wants to lock people into and, yet, it’s also the thing that keeps most discussions civil.

Thanks, Internet trolls. You’re really helping to make the world a better place.

These days my first action is to step in and ask people to clarify things, in the most non-confrontational way I can muster because asking people “What do you mean” can be incredibly hostile by itself! This quickly establishes people who aren’t willing to engage properly because they’ll start wriggling and the Sea-Lion effect kicks in – accusations of rudeness, unwillingness to debate – which is really, when it comes down to it:

I WANT TO TALK AT YOU LIKE THIS HOW DARE YOU NOT LET ME DO IT!

This isn’t the open approach to science. This is thuggery. This is privilege. This is the same old rubbish that is currently destroying the world because we can’t seem to be able to work together without getting caught up in these stupid games. I dream of a better world where people can say any combination of “I use Mac/PC/Java/Python” without being insulted but I am, after all, an Idealist.

The summary? The merit of your argument is not determined by how loudly you shout and how many other people you silence.

I expect my students to engage with each other in good faith on the forums, be respectful and think about how their actions affect other people. I’m really beginning to wonder if that’s the best preparation for a world where a toxic on-line debate can break over into the real world, where SWAT team attacks and document revelation demonstrate what happens when people get too carried away in on-line forums.

We’re stopping people from being heard when they have something to say and that’s wrong, especially when it’s done maliciously by people who are demanding to say something and then say nothing. We should be better at this by now.

In Praise of the Beautiful Machines

Posted: February 1, 2015 Filed under: Education | Tags: advocacy, AI, artificial intelligence, authenticity, beautiful machine, beautiful machines, Bill Gates, blogging, community, design, education, educational problem, ethics, feedback, Google, higher education, in the student's head, Karlheinz Stockhausen, learning, measurement, Philippa Foot, self-driving car, teaching approaches, thinking, thinking machines, tools Leave a comment

I posted recently about the increasingly negative reaction to the “sentient machines” that might arise in the future. Discussion continues, of course, because we love a drama. Bill Gates can’t understand why more people aren’t worried about the machine future.

…AI could grow too strong for people to control.

Scientists attending the recent AI conference (AAAI15) think that the fears are unfounded.

“The thing I would say is AI will empower us not exterminate us… It could set AI back if people took what some are saying literally and seriously.” Oren Etzioni, CEO of the Allen Institute for AI.

If you’ve read my previous post then you’ll know that I fall into the second camp. I think that we don’t have to be scared of the rise of the intelligent AI but the people at AAAI15 are some of the best in the field so it’s nice that they also think that we’re worrying about something that is far, far off in the future. I like to discuss these sorts of things in ethics classes because my students have a very different attitude to these things than I do – twenty-five years is a large separation – and I value their perspective on things that will most likely happen during their stewardship.

I asked my students about the ethical scenario proposed by Philippa Foot, “The Trolley Problem”. To summarise, a runaway trolley is coming down the tracks and you have to decide whether to be passive and let five people die or be active and kill one person to save five. I put it to my students in terms of self-driving cars where you are in one car by yourself and there is another car with five people in it. Driving along a bridge, a truck jackknifes in front of you and your car has to decide whether to drive ahead and kill you or move to the side and drive the car containing five people off the cliff, saving you. (Other people have thought about this in the context of Google’s self-driving cars. What should the cars do?)

One of my students asked me why the car she was in wouldn’t just put on the brakes. I answered that it was too close and the road was slippery. Her answer was excellent:

Why wouldn’t a self-driving car have adjusted for the conditions and slowed down?

Of course! The trolley problem is predicated upon the condition that the trolley is running away and we have to make a decision where only two results can come out but there is no “runaway” scenario for any sensible model of a self-driving car, any more than planes flip upside down for no reason. Yes, the self-driving car may end up in a catastrophic situation due to something totally unexpected but the everyday events of “driving too fast in the wet” and “chain collision” are not issues that will affect the self-driving car.

But we’re just talking about vaguely smart cars, because the super-intelligent machine is some time away from us. What is more likely to happen soon is what has been happening since we developed machines: the ongoing integration of machines into human life to make things easier. Does this mean changes? Well, yes, most likely. Does this mean the annihilation of everything that we value? No, really not. Let me put this in context.

As I write this, I am listening to two compositions by Karlheinz Stockhausen, playing simultaneously but offset, “Kontakte” and “Telemusik”, works that combine musical instruments, electronic sounds, and tape recordings. I like both of them but I prefer to listen to the (intentionally sterile) Telemusik by starting Kontakte first for 2:49 and then kicking off Telemusik, blending the two and finishing on the longer Kontakte. These works, which are highly non-traditional and use sound in very different ways to traditional orchestral arrangement, may sound quite strange, especially to an audience familiar with popular music, yet they were written in 1959 and 1966 respectively. These innovative works are now in their middle-age. They are unusual works, certainly, and a number of you will peer at your speakers once they start playing but… did their production lead to the rejection of the popular, classic, rock or folk music output of the 1960s? No.

We now have a lot of electronic music, synthesisers, samplers, software-driven music software, but we still have musicians. It’s hard to measure the numbers (this link is very good) but electronic systems have allowed us to greatly increase the number of composers although we seem to be seeing a slow drop in the number of musicians. In many ways, the electronic revolution has allowed more people to perform because your band can be (for some purposes) a band in a box. Jazz is a different beast, of course, as is classical, due to the level of training and study required. Jazz improvisation is a hard problem (you can find papers on it from 2009 onwards and now buy a so-so jazz improviser for your iPad) and hard problems with high variability are not easy to solve, even computationally.

So the increased portability of music via electronic means has an impact in some areas such as percussion, pop, rock, and electronic (duh) but it doesn’t replace the things where humans shine and, right now, a trained listener is going to know the difference.

I have some of these gadgets in my own (tiny) studio and they’re beautiful. They’re not as good as having the London Symphony Orchestra in your back room but they let me create, compose and put together pleasant sounding things. A small collection of beautiful machines makes my life better by helping me to create.

Now think about growing older. About losing strength, balance, and muscular control. About trying to get out of bed five times before you succeed or losing your continence and having to deal with that on top of everything else.

Now think about a beautiful machine that is relatively smart. It is tuned to wrap itself gently around your limbs and body to support you, to help you keep muscle tone safely, to stop you from falling over, to be able to walk at full speed, to take you home when you’re lost and with a few controlling aspects to allow you to say when and where you go to the bathroom.

Isn’t that machine helping you to be yourself, rather than trapping you in the decaying organic machine that served you well until your telomerase ran out?

Think about quiet roads with 5% of the current traffic, where self-driving cars move from point to point and charge themselves in between journeys, where you can sit and read or work as you travel to and from the places you want to go, where there are no traffic lights most of the time because there is just a neat dance between aware vehicles, where bad weather conditions mean everyone slows down or even deliberately links up with shock absorbent bumper systems to ensure maximum road holding.

Which of these scenarios stops you being human? Do any of them stop you thinking? Some of you will still want to drive and I suppose that there could be roads set aside for people who insisted upon maintaining their cars but be prepared to pay for the additional insurance costs and public risk. From this article, and the enclosed U Texas report, if only 10% of the cars on the road were autonomous, reduced injuries and reclaimed time and fuel would save $37 billion a year. At 90%, it’s almost $450 billion a year. The World Food Programme estimates that $3.2 billion would feed the 66,000,000 hungry school-aged children in the world. A 90% autonomous vehicle rate in the US alone could probably feed the world. And that’s a side benefit. We’re talking about a massive reduction in accidents due to human error because (ta-dahh) no human control.
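For anyone who wants to check the scale of those figures, here is a minimal sketch of the arithmetic. It uses only the numbers quoted above; the constant and function names are made up for illustration, and "almost $450 billion" is rounded to exactly 450, so treat the results as rough.

```python
# Annual figures quoted in the post, in US dollars.
SAVINGS_AT_10_PERCENT = 37e9     # saving with 10% autonomous cars
SAVINGS_AT_90_PERCENT = 450e9    # "almost $450 billion", rounded here (assumption)
SCHOOL_FEEDING_COST = 3.2e9      # World Food Programme estimate quoted above
HUNGRY_CHILDREN = 66_000_000     # hungry school-aged children quoted above


def coverage_ratio(savings, cost):
    """How many times over the savings would pay for the cost."""
    return savings / cost


def cost_per_child(cost, children):
    """Implied annual cost of feeding one child."""
    return cost / children


ratio_10 = coverage_ratio(SAVINGS_AT_10_PERCENT, SCHOOL_FEEDING_COST)
ratio_90 = coverage_ratio(SAVINGS_AT_90_PERCENT, SCHOOL_FEEDING_COST)
per_child = cost_per_child(SCHOOL_FEEDING_COST, HUNGRY_CHILDREN)

print(f"10% autonomous: covers the programme about {ratio_10:.1f} times")
print(f"90% autonomous: covers the programme about {ratio_90:.1f} times")
print(f"Implied cost per child per year: about ${per_child:.2f}")
```

On these numbers, even the 10% scenario would pay for the school feeding estimate several times over. This only compares the two quoted totals; it says nothing about the cost of feeding everyone who is hungry.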

Most of us don’t actually drive our cars. They spend 5% of their time on the road, during which time we are stuck behind other people, breathing fumes and unable to do anything else. What we think about as the pleasurable experience of driving is not the majority experience for most drivers. It’s ripe for automation and, almost every way you slice it, it’s better for the individual and for society as a whole.

But we are always scared of the unknown. There’s a reason that the demons of myth used to live in caves and under ground and come out at night. We hate the dark because we can’t see what’s going on. But increased machine autonomy, towards machine intelligence, doesn’t have to mean that we create monsters that want to destroy us. The far more likely outcome is a group of beautiful machines that make it easier and better for us to enjoy our lives and to have more time to be human.

We are not competing for food – machines don’t eat. We are not competing for space – machines are far more concentrated than we are. We are not even competing for energy – machines can operate in more hostile ranges than we can and are far more suited for direct hook-up to solar and wind power, with no intermediate feeding stage.

We don’t have to be in opposition unless we build machines that are as scared of the unknown as we are. We don’t have to be scared of something that might be as smart as we are.

If we can get it right, we stand to benefit greatly from the rise of the beautiful machine. But we’re not going to do that by starting from a basis of fear. That’s why I told you about that student. She’d realised that our older way of thinking about something was based on a fear of losing control when, if we handed over control properly, we would be able to achieve something very, very valuable.

Rules: As For Them, So For Us

Posted: November 17, 2014 Filed under: Education, Opinion | Tags: advocacy, authenticity, collaboration, community, Dog Eat Dog, education, educational problem, educational research, ethics, feedback, games, Generation Why, higher education, learning, measurement, student, student perspective, students, teaching, teaching approaches, time banking 1 Comment

In a previous post, I mentioned a game called “Dog Eat Dog” where players role-play the conflict between Colonist and Native Occupiers, through playing out scenarios that both sides seek to control, with the result being the production of a new rule that encapsulates the ‘lesson’ of the scenario. I then presented education as being a good fit for this model but noted that many of the rules that students have to obey are behavioural rather than knowledge-focussed. A student who is ‘playing through’ education will probably accumulate a list of rules like this (not in any particular order):

1. Always be on time for class

2. Always present your own work

3. Be knowledgable

4. Prepare for each activity

5. Participate in class

6. Submit your work on time

But, as noted in Dog Eat Dog, the nasty truth of colonisation is that the Colonists are always superior to the Colonised. So, rule 0 is actually: Students are inferior to Teachers. Now, that’s a big claim to make – that the underlying notion in education is one of inferiority. In the Dog Eat Dog framing, the superiority manifests as dominance in decision making and the ability to intrude into every situation. We’ll come back to this.

If we tease apart the rules for students then there are some obvious omissions that we would like to see such as “be innovative” or “be creative”, except that these rules are very hard to apply as pre-requisites for progress. We have enough potential difficulty with the measurement of professional skills, without trying to assess if one thing is a creative approach while another is just missing the point or deliberate obfuscation. It’s understandable that five of the rules presented are those that we can easily control with extrinsic motivational factors – 1, 2, 4, 5, and 6 are generally presented as important because of things like mandatory attendance, plagiarism rules and lateness penalties. 3, the only truly cognitive element on the list, is a much harder thing to demand and, unsurprisingly, this is why it’s sometimes easier to seek well-behaved students than it is to seek knowledgable, less-controlled students, because it’s so much harder to see that we’ve had a positive impact. So, let us accept that this list is naturally difficult to select and somewhat artificial, but it is a reasonable model for what people expect of a ‘good’ student.

Let me ask you some questions before we proceed.

- A student is always late for class. Could there be a reasonable excuse for this and, if so, does your system allow for it?

- Students occasionally present summary presentations from other authors, including slides prepared by scholarly authors. How do you interpret that?

- Students sometimes show up for classes and are obviously out of their depth. What do you do? Should they go away and come back later when they’re ready? Do they just need to try harder?

- Students don’t do the pre-reading and try to cram it in just before a session. Is this kind of “just in time” acceptable?

- Students sometimes sit up the back, checking their e-mail, and don’t really want to get involved. Is that ok? What if they do it every time?

- Students are doing a lot of things and often want to shift around deadlines or get you to take into account their work from other courses or outside jobs. Do you allow this? How often? Is there a penalty?

As you can see, I’ve taken each of the original ‘good student’ points and asked you to think about it. Now, let us accept that there are ultimate administrative deadlines (I’ve already talked about this a lot in time banking) and we can accept that students are aware of these and are not planning to put all their work off until next century.

Now, let’s look at this as it applies to teaching staff. I think we can all agree that a staff member who meets that list is going to achieve a lot of their teaching goals. I’m going to reframe the questions in terms of staff.

- You have to drop your kids off every morning at day care. This means that you show up at your 9am lecture 5 minutes late every day because you physically can’t get there any faster and your partner can’t do it because he/she is working shift work. How do you explain this to your students?

- You are teaching a course from a textbook which has slides prepared already. Is it ok to take these slides and use them without any major modification?

- You’ve been asked to cover another teacher’s courses for two weeks due to their illness. You have a basis in the area but you haven’t had to do anything detailed for it in over 10 years and you’ll also have to give feedback on the final stages of a lengthy assignment. How do you prepare for this and what, if anything, do you tell the class to brief them on your own lack of expertise?

- The staff meeting is coming around and the Head of School wants feedback on a major proposal and discussion at that meeting. You’ve been flat out and haven’t had a chance to look at it, so you skim it on the way to the meeting and take it with you to read in the preliminaries. Given the importance of the proposal, do you think this is a useful approach?

- It’s the same staff meeting and Doctor X is going on (again) about radical pedagogy and Situationist philosophy. You quickly catch up on some important work e-mails and make some meetings for later in the week, while you have a second.

- You’ve got three research papers due, a government grant application and your Head of School needs your workload bid for the next calendar year. The grant deadline is fixed and you’ve already been late for three things for the Head of School. Do you drop one (or more) of the papers or do you write to the convenors to see if you can arrange an extension to the deadline?

Is this artificial? Well, of course, because I’m trying to make a point. Beyond being pedantic on this because you know what I’m saying, if you answered one way for the staff member and another way for the student then you have given the staff member more power in the same situation than the student. Just because we can all sympathise with the staff member (Doctor X sounds horribly familiar, doesn’t he?) doesn’t mean that the student’s reasons, when explored and contextualised, are not equally valid.

If we are prepared to listen to our students and give their thoughts, reasoning and lives as much weight and value as our own, then rule 0 is most likely not in play at the moment – you don’t think your students are inferior to you. If you thought that the staff member was being perfectly reasonable and yet you couldn’t see why a student should be extended the same privileges, even where I’ve asked you to consider the circumstances where it could be, then it’s possible that the superiority issue is one that has become well-established at your institution.

Ultimately, if this small list is a set of goals, then we should be a reasonable exemplar for our students. Recently, due to illness, I’ve gone from being very reliable in these areas, to being less reliable on things like the level of preparation I used to do and timeliness. I have looked at what I’ve had to do and renegotiated my deadlines, apologising and explaining where I need to. As a result, things are getting done and, as far as I know, most people are happy with what I’m doing. (That’s acceptable but they used to be very happy. I have a way to go.) I still have a couple of things to fix, which I haven’t forgotten about, but I’ve had to carry out some triage. I’m honest about this because, that way, I encourage my students to be honest with me. I do what I can, within sound pedagogical framing and our administrative requirements, and my students know that. It makes them think more, become more autonomous and be ready to go out and practice at a higher level, sooner.

This list is quite deliberately constructed but I hope that, within this framework, I’ve made my point: we have to be honest if we are seeing ourselves as superior and, in my opinion, we should work more as equals with each other.

Data: Harder to Anonymise Yourself Than You Might Think

Posted: October 18, 2014 Filed under: Education, Opinion | Tags: Black Static, blogging, community, curriculum, data, data visualisation, education, feedback, higher education, Interzone, measurement, reflection, submission, submission system, teaching, teaching approaches, thinking, universal principles of design Leave a comment

There’s a lot of discussion around a government’s use of metadata at the moment, where instead of looking at the details of your personal data, government surveillance is limited to looking at the data associated with your personal data. In the world of phone calls, instead of taping the actual call, they can see the number you dialled, the call time and its duration, for example. CBS have done a fairly high-level (weekend-suitable) coverage of a Stanford study that quickly revealed a lot more about participants than they would have thought possible from just phone numbers and call times.
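To make the phone example concrete, here is a minimal sketch of how little code it takes to summarise call metadata. Everything in it is an assumption for illustration: the record layout (number dialled, start time, duration in seconds), the "late night" window of 10pm to 6am, and the numbers themselves, which are invented and have nothing to do with the Stanford study.

```python
from collections import defaultdict
from datetime import datetime


def summarise_calls(records):
    """Group call metadata by number dialled.

    Each record is (number, start_time, duration_seconds). No call
    content is needed to build this summary.
    """
    summary = defaultdict(lambda: {"calls": 0, "seconds": 0, "late_night": 0})
    for number, started, seconds in records:
        entry = summary[number]
        entry["calls"] += 1
        entry["seconds"] += seconds
        # Hypothetical definition of "late night": 10pm up to 6am.
        if started.hour >= 22 or started.hour < 6:
            entry["late_night"] += 1
    return dict(summary)


# Invented example records, not real data.
records = [
    ("555-0101", datetime(2014, 10, 13, 23, 5), 1800),
    ("555-0101", datetime(2014, 10, 14, 23, 10), 2400),
    ("555-0199", datetime(2014, 10, 15, 9, 30), 120),
]

for number, stats in summarise_calls(records).items():
    print(number, stats)
```

Two long late-night calls to one number and a two-minute daytime call to another already suggest quite different relationships, and that is before anyone has listened to a word.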

But how much can you tell about a person or an organisation without knowing the details? I’d like to show you a brief, but interesting, example. I write fiction and I’ve recently signed up to “The Submission Grinder”, which allows you to track your own submissions and, by crowdsourcing everyone’s successes and failures, to also track how certain markets are performing in terms of acceptance, rejection and overall timeliness.

Now, I have access to no-one else’s data but my own (which is all of 5 data points) but I’ll show you how assembling these anonymous data results together allows me to have a fairly good stab at determining organisational structure and, in one case, a serious organisational transformation.

Let’s start by looking at a fairly quick turnover semi-pro magazine, Black Static. It’s a short fiction market with horror theming. Here’s their crowd-sourced submission graph for response times, where rejections are red and acceptances are green. (Sorry, Damien.)

Black Static has a web submission system and, as you can see, most rejections happen in the first 2-3 weeks. There is then a period where further work goes on. (It’s very important to note that this is a sample generated by those people who are using Submission Grinder, which is a subset of all people submitting to Black Static.) What this looks like, given that it is unlikely that anyone could read a lot of 4,000-7,000 word manuscripts in detail at a time, is that the editor is skimming the electronic slush pile to determine if it’s worth going to other readers. After this initial 2 week culling, what we are seeing is the result of further reading so we’d probably guess that the readers’ reviews are being handled as they come in, with some indication that this is done roughly weekly – maybe as a weekend job? It’s hard to say because there’s not much data beyond 21 days so we’re guessing.

Let’s look at Black Static’s sister SF magazine, Interzone, now semi-pro but still very highly regarded.

Lots more data here! Again, there appears to be a fairly fast initial cut-off mechanism from skimming the web submission slush pile. (And I can back this up with actual data as Interzone rejected one of my stories in 24 hours.) Then there appears to be a two week period where some thinking or reading takes place and then there’s a second round of culling, which may be an editorial meeting or a fast reader assignment. Finally we see two more fortnightly culls as the readers bring back their reviews. I think there’s enough data here to indicate that Interzone’s editorial group consider materials most often every fortnight. Also the acceptances generated by positive reviews appear to be the same quantity as those from the editors – although there’s so little data here we’re really grabbing at tempting looking straws.

Now let’s look at two pro markets, starting with the Magazine of Fantasy & Science Fiction.

This doesn’t have the same initial culling process that the other two had, although it appears that there is a period of 7-14 days when a lot of work has been reviewed and then rejected – we don’t see as much work rejected again until the 35 day mark, when it looks like all reader reviews are back. Notably, there is a large gap between the initial bunch of acceptances (editor says ‘yes’) and then acceptances supported by reviewers. I’m speculating now but I wonder if what we’re seeing between that first and second group of acceptances are reviewers who write back in and say “Don’t bother” quickly, rather than assembling personalised feedback for something that could be salvaged. Either way, the message here is simple. If you survive the first four weeks in the F&SF system, then you are much less likely to be rejected and, with any luck, this may translate (worst case) into personal suggestions for improvement.

F&SF has a postal submission system, which makes it far more likely that the underlying work is going to be batched in some way, as responses have to go out via mail and doing this in a more organised fashion makes sense. This may explain why there is such a high level of response overall for the first 35 days, as you can’t easily click a button to send a response electronically and there’re a finite number of envelopes any one person wants to prepare on any given day. (I have no idea how right I am but this is what I’m limited to by only observing the metadata.)

Tor.com has a very interesting graph, which I’ll show below.

Tor.com pays very well and has an on-line submission system via e-mail. As a result, it is positively besieged with submissions and their editorial team recently shut down new submissions for two months while they cleared backlog. What interested me in this data was the fact that the 150 day spike was roughly twice as high as the 90 and 120. Hmm – 90, 120, 150 as dominant spikes. Does that sound like a monthly editors’ meeting to anyone else? By looking at the recency graph (which shows activity relative to today) we can see that there has been an amazing flurry of activity at Tor.com in the past month. Tor.com has a five person editorial team (from their website) with reading and support from two people (plus occasional others). It’s hard for five people to reach consensus without discussion so that monthly cycle looks about right. But it will take time for 7 people to read all of that workload, which explains the relative silence until 3 months have elapsed.
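The "spikes at regular intervals" reasoning can be sketched in a few lines. This is only an illustration under stated assumptions: the response times below are invented to mimic the shape described above (clusters at 90, 120 and 150 days, with the last one twice as tall), not taken from Submission Grinder, and the bin width and spike threshold are arbitrary choices.

```python
from collections import Counter


def find_spikes(response_days, bin_width=10, min_ratio=2.0):
    """Return the start day of each bin that is unusually busy.

    A bin counts as a spike if it holds at least min_ratio times the
    mean count across all non-empty bins.
    """
    bins = Counter((day // bin_width) * bin_width for day in response_days)
    if not bins:
        return []
    mean = sum(bins.values()) / len(bins)
    return sorted(start for start, count in bins.items()
                  if count >= min_ratio * mean)


def spike_gaps(spikes):
    """Days between consecutive spikes; equal gaps hint at a regular cycle."""
    return [later - earlier for earlier, later in zip(spikes, spikes[1:])]


# Invented sample: a background trickle plus three clusters.
sample = ([5, 17, 33, 48, 61, 74, 102, 113, 134, 141]
          + [90] * 6 + [120] * 6 + [150] * 12)

spikes = find_spikes(sample)
print("Spikes at days:", spikes)
print("Gaps between spikes:", spike_gaps(spikes))
```

Evenly spaced gaps of about 30 days are what you would expect from a monthly decision cycle, although with a sample this small any such reading is a guess rather than a finding.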

What about that spike at 150? It could be the end of the initial decisions and the start of the “worth another look” pile so let’s see if their web page sheds any light on it. Aha!

Have you read my story? We reply to everything we’ve finished evaluating, so if you haven’t heard from us, the answer is “probably not.” At this point the vast majority of stories greater than four months old are in our second-look pile, and we respond to almost everything within seven months.

I also wonder if we are seeing previous data where it was taking longer to get decisions made – whether we are seeing two different time management strategies of Tor.com at the same time, being the 90+120 version as well as the 150 version. Looking at the website again:

Response times have improved quite a bit with the expansion of our first reader team (emphasis mine), and we now respond to the vast majority of stories within three months. But all of the stories they like must then be read by the senior editorial staff, who are all full-time editors with a lot on our plates.

So, yes, the size of Tor.com’s slush pile and the number of editors that must agree basically mean that people are putting time aside to make these decisions, now aiming at 90 days, with a bit of spillover. It looks like we are seeing two regimes at once.

All of this information is completely anonymous in terms of the stories, the authors and any actual submission or acceptance patterns that could relate data together. But, by looking at this metadata on the actual submissions, we can now start to get an understanding of the internal operations of an organisation, which in some cases we can then verify with publicly held information.

Now think about all the people you’ve phoned, the length of time that you called them and what could be inferred about your personal organisation from those facts alone. Have a good night’s sleep!

5 Things: Blogging

Posted: October 14, 2014 Filed under: Education, Opinion | Tags: advocacy, authenticity, blogging, community, education, ethics, feedback, higher education, students, teaching, teaching approaches, thinking 2 Comments

I’ve written a lot of words here, over a few years, and I’ve learned some small things about blogging. There are some important things you need to know before you start.

- The World is Full of Dead Blogs. There are countless blogs that start with one or two posts and then stop, pretty much forever. This isn’t a huge problem, beyond holding down usernames that other people might want to use later (grr), but it doesn’t help people if they’re trying to actually read your blog sometime in the future. If you blog, then decide you don’t want to blog, consider cleaning up after yourself because it will make it easier for people to find your stuff when you actually want that to happen. If you’re not prepared to answer comments but you still want your blog to stand, switch off comments or put up a note saying that you don’t read comments from here. There’s a world of difference between a static blog and a dead blog. Don’t advertise your blog until you’ve got a routine of some sort going, just so you know if you’re going to do it or not.

A great example of an “I’m still alive” from http://insocialwetrust.wordpress.com/2012/09/13/a-link-to-a-link-to-some-more-links/

- Regular Blogging is Hard. It takes effort and planning to pump out posts on a schedule. I managed every day for a year and it damn near killed me. Even if you’re planning once a week/month, make sure that you have a number of posts written up before you start and try to always keep a couple up your sleeve. It is far easier to mix up your feeds to keep your audience connected to you by, say, tweeting small things regularly and writing longer pieces that advertise into your Twitter feed semi-regularly. That way, when people see your name, they realise that you’re still alive and might read what you wrote. If you can, let people know roughly how often you’ll be writing and they can work that into their minds around what you’re writing.

- Write What You Want To Write But Try To Be Thematic. It’s easy to get cynical about things like how many people are following you and try to write what other people would like to read. Have some sort of purpose (maybe 2-3 different themes tops) so that what you write feels authentic to you, fits into your interests and is on a small range of topics so that people reading know what to expect. I stretch the rubber band on education a lot but it still mostly fits. I have a different blog for other things, which is far less regularly updated and is for completely different things that I also want to write. Writing as somebody else or on something that you don’t really know about almost always stands out. Share your passion in your own way.

- Be Ready For Criticism. At some stage, someone is not going to like what you write, unless you are writing stuff that is so lacking in content or that is so well-known that no-one can argue with it. If you express an opinion, someone is probably going to disagree with you and if they do that rudely then it is going to sting. Most people who are reading you, and yes you can see the number of readers, will read you and, if they say nothing, have no strong feelings or probably agree with you to some extent. People are more likely to comment if they have strong disagreement and many of the strong disagreers in the on-line community are card-carrying schmucks. Some of them are genuinely trying to help but there are any number of agendas being pushed where people are committed (or paid) to jump on on-line fora and smash into people holding discussions. Some people are just rude bozos who like making other people feel bad. Hooray.

SADLY, YOU MUST HAVE A STRATEGY PREPARED FOR THIS. I don’t condone this, I’m working to change it and I think we have a long way to go in our on-line social structures. However, right now, it’s going to happen. Whether this means that you will take steps if people cross lines and become genuinely abusive, or whether you have other strategies, think about what will happen if someone decides to have a go at you because of something you wrote. The principles of freedom of speech start to fall apart when you realise that some people use their freedom to remove that of other people – which is logically nonsensical. If someone is shouting you down in your own space, they are not respecting your freedom of speech, they are not listening to you and they are trying to win through bullying. John Stuart Mill would leap up from the grave and kick them in the face because his idea of Freedom of Speech was very generous but was based on a notion of airing bad ideas in order to replace them with good. If he had been exposed to the Internet, I suspect he would have been a gibbering wreck in two days.

Most of you will know the amount of trolling that the owner of this site has endured for (perfectly reasonably) pointing out that women are badly represented and served by most video games.

Remember: someone else who feels strongly can always start their own blog to air their views. You do not owe idiots space on your comments just so they can abuse people who agree with you or spout nonsense when they have no intention at all of changing their own minds. You going mad trying to be fair is completely unreasonable when this is the aim of the Internet Troll.

- Keep It Short and Use Pictures. This is the rule I have the most trouble with. I now try to limit myself to 1,000 words but this is, really, far too long. Twitter works because it can be scanned at speed. FB works for longer things that you are bringing in from elsewhere but falls apart at the long form. However, long blogs get ranty quickly and you are probably making the same point more than once. Pick a size and try to stick to it so your readers will know roughly what they are committing to. Pictures are also easy to look at and I like them because they throw in humour and colour, which break up the words.

There’s a lot more to say but I’m more than out of words! Hope this helped.

The Fragile Student Relationship (working from #Unstuck #by Julie Felner @felner)

Posted: September 18, 2014 Filed under: Education, Opinion | Tags: advocacy, authenticity, community, curriculum, education, educational problem, educational research, feedback, felner, gratitude, higher education, in the student's head, julie felner, learning, reflection, resources, student perspective, students, teaching, teaching approaches, thinking, tools, unstuck

I was referred some time ago to a great site called “Unstuck”, which has some accompanying iPad software, that helps you to think about how to move past those stuck moments in your life and career to get things going. They recently posted an interesting item on “How to work like a human” and I thought that a lot of what they talked about had direct relevance to how we treat students and how we work with them to achieve things. The article is by Julie Felner and I strongly suggest that you read it, but here are my thoughts on her headings, as they apply to education and students.

Ultimately, if we all work together like human beings, we’re going to get on better than if we treat our students as answer machines and they treat us as certification machines. Here’s what optimising for one thing, mechanistically, can get you:

But if we’re going to be human, we need to be connected. Here are some signs that you’re not really connected to your students.

- Anything that’s not work you treat with a one word response. A student comes to see you and you don’t have time to talk about anything but assignment X or project Y. I realise time is scarce but, if we’re trying to build people, we have to talk to people, like people.

- You’re impatient when they take time to learn or adjust. Oh yeah, we’ve all done this. How can they not pick it up immediately? What’s wrong with them? Don’t they know I’m busy?

- Sleep and food are for the weak – and don’t get sick. There are no human-centred reasons for not getting something done. I’m scheduling all of these activities back-to-back for two months. If you want it, you’ll work for it.

- We never ask how the students are doing. By which I mean, asking genuinely and eking out a genuine response, if some prodding is required. Not intrusively but out of genuine interest. How are they doing with this course?

- We shut them down. Here’s the criticism. No, I don’t care about the response. No, that’s it. We’re done. End of discussion. There are times when we do have to draw a discussion to an end but there’s a big difference between closing off something that’s going nowhere and delivering everything as if no discussion is possible.

Here is my take on Julie’s suggestions for how we can be more human at work, which works for the Higher Ed community just as well.

- Treat every relationship as one that matters. The squeaky wheels and the high achievers get a lot of our time but all of our students are actually entitled to have the same level of relationship with us. Is it easy to get that balance? No. Is it a worthwhile goal? Yes.

- Generously and regularly express your gratitude. When students do something well, we should let them know as soon as possible. I regularly thank my students for good attendance, handing things in on time, making good contributions and doing the prep work. Yes, they should be doing it but let’s not get into how many things that should be done aren’t done. I believe in this strongly and it’s one of the easiest things to start doing straight away.

- Don’t be too rigid about your interactions. We all have time issues but maybe you can see students and talk to them when you pass them in the corridor, if both of you have time. If someone’s been trying to see you, can you grab them from a work area or make a few minutes before or after a lecture? Can you talk with them over lunch if you’re both really pressed for time? It’s one thing to have consulting hours but it’s another to make yourself totally unavailable outside of that time. When students are seeking help, it’s when they need help the most. Always convenient? No. Always impossible to manage? No. Probably useful? Yes.

- Don’t pretend to be perfect. Firstly, students generally know when you’re lying to them and especially when you’re fudging your answers. Don’t know the answer? Let them know, look it up and respond when you do. Don’t know much about the course itself? Well, finding out before you start teaching is a really good idea because otherwise you’re going to be saying “I don’t know a lot” and there’s a big, big gap between showing your humanity and obviously not caring about your teaching. Fix problems when they arise and don’t try to make it appear that it wasn’t a problem. Be as honest as you can about that in your particular circumstances (some teaching environments have more disciplinary implications than others and I do get that).

- Make fewer assumptions about your students and ask more questions. The demographics of our student body have shifted. More of my students are in part-time or full-time work. More are older. More are married. Not all of them have gone through a particular elective path. Not every previous course contains the same materials it did 10 years ago. Every time a colleague starts a sentence with “I would have thought” or “Surely”, they are (almost always) projecting their assumptions on to the student body, rather than asking “Have you”, “Did you” or “Do you know”?

Julie made the final point that sometimes we can’t get things done to the deadline. In her words:

You sometimes have to sacrifice a deadline in order to preserve something far more important — a relationship, a person’s well-being, the quality of the work

I completely agree because deadlines are a tool but, particularly in academia, the deadline is actually rarely as important as people. If our goal is to provide a good learning environment, working our students to zombie status because “that’s what happened to us” is bordering on a cycle of abuse, rather than a commitment to quality of education.

We all want to be human with our students because that’s how we’re most likely to get them to engage with us as humans too! I liked this article and I hope you enjoyed my take on it. Thank you, Julie Felner!

When Does Collaborative Work Fall Into This Trap?

Posted: September 11, 2014 Filed under: Education | Tags: advocacy, blogging, collaboration, community, crowdsourcing, curriculum, education, educational problem, educational research, ethics, feedback, Generation Why, higher education, in the student's head, interested parties, learning, principles of design, student perspective, students, teaching, teaching approaches, thinking, universal principles of design, University of Southampton, Victor Naroditskiy

A recent study has shown that crowdsourcing activities are prone to bringing out the competitors’ worst competitive instincts.

“[T]he openness makes crowdsourcing solutions vulnerable to malicious behaviour of other interested parties,” said one of the study’s authors, Victor Naroditskiy from the University of Southampton, in a release on the study. “Malicious behaviour can take many forms, ranging from sabotaging problem progress to submitting misinformation. This comes to the front in crowdsourcing contests where a single winner takes the prize.” (emphasis mine)

You can read more about it here but it’s not a pretty story. Looks like a pretty good reason to be very careful about how we construct competitive challenges in the classroom!