Another semester, more lessons learned (mostly by me).

Posted: June 16, 2013 Filed under: Education, Opinion | Tags: advocacy, authenticity, collaboration, community, curriculum, design, education, educational problem, educational research, ethics, feedback, Generation Why, higher education, in the student's head, learning, plagiarism, principles of design, reflection, resources, student perspective, teaching, teaching approaches, thinking, tools, universal principles of design

I’ve just finished the lecturing component for my first year course on programming, algorithms and data structures. As always, the learning has been mutual. I’ve got some longer posts to write on this at some time in the future but the biggest change for this year was dropping the written examination component down and bringing in supervised practical examinations in programming and code reading. This has given us some interesting results that we look forward to going through, once all of the exams are done and the marks are locked down sometime in late July.

Whenever I put in practical examinations, we encounter the strange phenomenon of students who can mysteriously write code in very short periods of time in a practical situation very similar to the practical examination, but suddenly lose the ability to write good code when they are isolated from the Internet, e-Mail and other people’s code repositories. This is, thank goodness, not a large group (seriously, it’s shrinking the more I put prac exams in) but it does illustrate why we do it. If someone has a genuine problem with exam pressure, and it does occur, then of course we set things up so that they have more time and a different environment, as we support all of our students with special circumstances. But to be fair to everyone, and because this can be confronting, we pitch the problems at a level where early achievement is possible and they are also usually simpler versions of the types of programs that have already been set as assignment work. I’m not trying to trip people up, here, I’m trying to develop the understanding that it’s not the marks for their programming assignments that are important, it’s the development of the skills.

I need those people who have not done their own work to realise that it probably didn’t lead to a good level of understanding or the ability to apply the skill as you would in the workforce. However, I need to do so in a way that isn’t unfair, so there’s a lot of careful learning design that goes in, even to the selection of how much each component is worth. The reminder that you should be doing your own work is not high stakes – 5-10% of the final mark at most – and builds up to a larger practical examination component, worth 30%, that comes after a total of nine practical programming assignments and a previous prac exam. This year, I’m happy with the marks design because it takes fairly consistent failure to drop a student to the point where they are no longer eligible for redemption through additional work. The scope for achievement is across knowledge of course materials (on-line quizzes, in-class scratchy card quizzes and the written exam), programming with reference materials (programming assignments over 12 weeks), programming under more restricted conditions (the prac exams) and even group formation and open problem handling (with a team-based report on the use of queues in the real world). To pass, a student needs to do enough in all of these. To excel, they have to have a good broad grasp of theoretical and practical. This is what I’ve been heading towards for this first-year course, a course that I am confident turns out students who are programmers and have enough knowledge of core computer science. Yes, students can (and will) fail – but only if they really don’t do enough in more than one of the target areas and then don’t focus on that to improve their results. I will fail anyone who doesn’t meet the standard but I have no wish to do any more of that than I need to. If people can come up to standard in the time and resource constraints we have, then they should pass. 
The trick is holding the standard at the right level while you bring up the people – and that takes a lot of help from my colleagues, my mentors and from me constantly learning from my students and being open to changing the learning design until we get it right.

Of course, there is always room for improvement, which means that the course goes back up on blocks while I analyse it. Again. Is this the best way to teach this course? Well, of course, what we will do now is to look at results across the course. We’ll track Prac Exam performance across all practicals, across the two different types of quizzes, across the reports and across the final written exam. We’ll go back into detail on the written answers to the code reading question to see if there’s a match for articulation and comprehension. We’ll assess the quality of response to the exam, as well as the final marked outcome, to tie this back to developmental level, if possible. We’ll look at previous results, entry points, pre-University marks…

And then we’ll teach it again!

“Hi, my name is Nick and I specialise in failure.”

Posted: June 10, 2013 Filed under: Education, Opinion | Tags: advocacy, collaboration, community, curriculum, design, education, educational research, ethics, failure, Generation Why, higher education, in the student's head, learning, measurement, reflection, resources, student perspective, survivorship, teaching, teaching approaches, thinking, tools

I recently read an article on survivorship bias in the “You Are Not So Smart” website, via Metafilter. While the whole story addressed the World War II Statistical Research Group, it focused on the insight contributed by Abraham Wald, a statistician. The World War II Allied bomber losses were large, very large, and any chance of reducing this loss was incredibly valuable. The question was “How could the US improve their chances of bringing their bombers back intact?” Bombers landing back after missions were full of holes but armour just can’t be strapped willy-nilly on to a plane without it becoming land-locked. (There’s a reason that birds are so light!) The answer, initially, was obvious – find the place where the most holes were, by surveying the fleet, and patch them. Put armour on the colander sections and, voila, increased survival rate.

No, said Wald. That wouldn’t help.

Wald’s logic is both simple and convincing. If a plane was coming back with those holes in place, then the holes in the skin were not leading to catastrophic failure – they couldn’t have been if the planes were returning! The survivors were not showing the damage that would have led to them becoming lost aircraft. Wald used the already collected information on the damage patterns to work out how much damage could be taken on each component and the likelihood of this occurring during a bombing run, based on what kind of forces it encountered.
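A little arithmetic makes the trap concrete. The sketch below is only an illustration under loud assumptions: the section names, hit shares and loss probabilities are invented for the example (they are not Wald's data, and this is not his actual method), and each plane is assumed to take a single hit. It compares where the holes show up on returning planes with where a hit is actually most dangerous.

```python
# Hypothetical numbers, invented for illustration only (not Wald's data).
# hit_share: fraction of all hits that land on each section.
# loss_prob: assumed chance that a single hit there brings the plane down.
SECTIONS = {
    "engine":   {"hit_share": 0.25, "loss_prob": 0.60},
    "cockpit":  {"hit_share": 0.25, "loss_prob": 0.40},
    "fuselage": {"hit_share": 0.25, "loss_prob": 0.10},
    "wings":    {"hit_share": 0.25, "loss_prob": 0.05},
}


def observed_hole_share(sections):
    """Share of the holes counted on returning planes, per section.

    A hole is only counted if the plane survived it, so each section's
    weight is hit_share * (1 - loss_prob), renormalised to sum to 1.
    """
    surviving = {
        name: s["hit_share"] * (1.0 - s["loss_prob"])
        for name, s in sections.items()
    }
    total = sum(surviving.values())
    return {name: weight / total for name, weight in surviving.items()}


def most_holes(sections):
    """Section a naive survey of the returning fleet would armour first."""
    shares = observed_hole_share(sections)
    return max(shares, key=shares.get)


def most_dangerous(sections):
    """Section where a single hit is most likely to be fatal."""
    return max(sections, key=lambda name: sections[name]["loss_prob"])


if __name__ == "__main__":
    for name, share in observed_hole_share(SECTIONS).items():
        print(f"{name:9s} {share:.0%} of holes seen on survivors")
    print("Naive choice for armour:", most_holes(SECTIONS))
    print("Most dangerous section: ", most_dangerous(SECTIONS))
```

With these made-up numbers, hits are spread evenly over the plane, yet the wings show the most holes on the survivors and the engine shows the fewest, even though an engine hit is the most dangerous. Armouring the places with the most holes would protect the section that needs it least.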

It’s worth reading the entire article because it’s a simple and powerful idea – attributing magical properties to the set of steps taken by people who have become ultra-successful is not going to be as useful as looking at what happened to take people out of the pathway to success. If you’ve read Steve Jobs’ biography then you’re aware that he had a number of interesting traits, only some of which may have led to him becoming as successful as he did. Of course, if you’ve been reading a lot, you’ll be aware of the importance of Paul Jobs, Steve Wozniak, Robert Noyce, Bill Gates, Jony Ive, John Lasseter, and, of course, his wife, Laurene Powell Jobs. So the whole “only eating fruit” thing, the “reality distortion field” thing and “not showering” thing (some of which he changed, some he didn’t) – which of these are the important things? Jobs, like many successful people, failed at some of his endeavours, but never in a way that completely wiped him out. Obviously. Now, when he’s not succeeding, he’s interesting, because we can look at the steps that took him down and say “Oh, don’t do that”, assuming that it’s something that can be changed or avoided. When he’s succeeding, there are so many other things getting in the way that depend upon what’s happened to you so far, who your friends are, and how many resources you get to play with, it’s hard to be able to give good advice on what to do.

I have been studying failure for some time. Firstly in myself, and now in my students. I look for those decisions, or behaviours, that lead to students struggling in their academic achievement, or to falling away completely in some cases. The majority of the students who come to me with a high level of cultural, financial and social resources are far less likely to struggle because, even when faced with a set-back, they rarely hit the point where they can’t bounce back – although, sadly, it does happen but in far fewer numbers. When they do fall over, it is for the same reasons as my less-advantaged students, who just do so in far greater numbers because they have less resilience to the set-backs. By studying failure, and the lessons learned and the things to be avoided, I can help all of my students and this does not depend upon their starting level. If I were studying the top 5% of students, especially those who had never received a mark less than A+, I would be surprised if I could learn much that I could take and usefully apply to those in the C- bracket. The reverse, however? There’s gold to be mined there.

By studying the borderlines and by looking for patterns in the swirling dust left by those departing, I hope that I can find things which reduce failure everywhere – because every time someone fails, we run the risk of not getting them back simply because failure is disheartening. Better yet, I hope to get something that is immediately usable, defensible and successful. Probably rather a big ask for a protracted study of failure!

Why You Won’t Finish This Post

Posted: June 10, 2013 Filed under: Education, Opinion | Tags: advocacy, authenticity, blogging, community, design, education, educational problem, Generation Why, higher education, in the student's head, learning, measurement, principles of design, reflection, resources, student perspective, teaching, teaching approaches, universal principles of design

A friend of mine on Facebook posted a link to a Slate article entitled “You Won’t Finish This Article: Why people online don’t read to the end” and it’s told me everything that I’ve been doing wrong with this blog for about the last 410 hours. Now, this doesn’t even take into account that, by linking to something potentially more interesting on a well-known site, I’ve now buried the bottom of this blog post altogether because a number of you will follow the link and, despite me asking it to appear in a new window, you will never come back to this article. (This has quite obvious implications for the teaching materials we put up, so it’s well worth a look.)

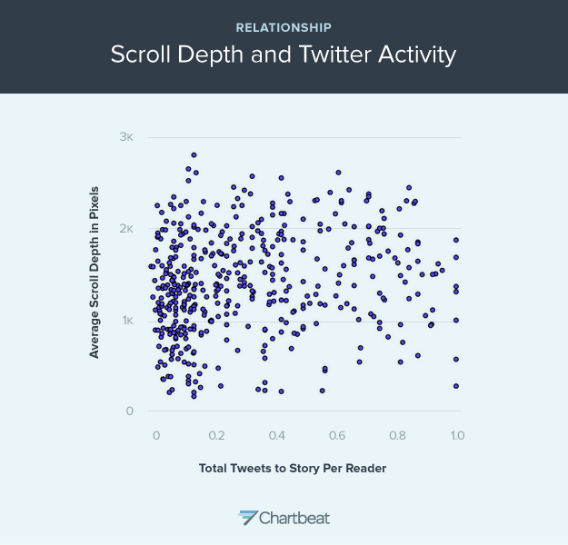

Now, on the off-chance that you did come back (hi!), we have to assume that you didn’t read all of the linked article (if you read any at all) because 28% of you ‘bounced’ immediately and didn’t actually read much at all of that page – you certainly didn’t scroll. Almost none of you read to the bottom. What is, however, amusing is that a number of you will have either Liked or forwarded a link to one or both of these pages – never having stepped through or scrolled once, but because the concept at the start looks cool. Of course, according the Slate analysis, I’ve lost over half my readers by now. Of course, this does assume the Slate layout, where an image breaks things up and forces people to scroll through. So here’s an image that will discourage almost everyone from continuing. However, it is a pretty picture:

This graph shows the relationship between scroll depth and Tweet (From Slate and courtesy of Chartbeat)

What it says is that there is not an enormously strong correlation between depth of reading and frequency of tweet. So, the amount that a story is read doesn’t really tell you how much people will want to (or actually) share it. Overall, the Slate article makes it fairly clear that unless I manage to make my point in the first paragraph, I have little chance of being read any further – but if I make that first paragraph (or first images) appealing enough, any number of people will like and share it.

Of course, if people read down this far (thanks!) then they will know that I secretly start advocating the most horrible things known to humanity so, when someone finally follows their link and miraculously reads down this far, survives the Slate link out, and doesn’t end up mired in the picture swamp above, they will discover…

Oh, who am I kidding. I’ll just come back and fill this in later.

(Having stolen a time machine, I can now point out that this is yet another illustration of why we need to be thoughtful about what our students are going to do in response to on-line and hyperlinked materials rather than what we would like them to do. Any system that requires a better human, or a human to act in a way that goes against all the evidence we have of their behaviour, requires modification.)

Note to Self

Posted: May 19, 2013 Filed under: Education, Opinion | Tags: authenticity, community, education, educational problem, ethics, higher education, in the student's head, learning, marcus aurelius, meditations, reflection, resources, teaching, teaching approaches, thinking

I’ve mentioned the “Meditations” of the Emperor Marcus Aurelius before – I’ve been writing this blog for over 450 hours, I’m not sure there’s anything I haven’t mentioned except my feelings on the season finale of Doctor Who, Series 7. (Eh.) Marcus Aurelius, philosopher, statesman, Roman, and Emperor wrote twelve “books” which were apparently never meant to be published. These are the private musings, notes to self, of a thoughtful man, written stoically and Stoically. When he lectures anyone, he lectures himself. He even poses questions to parts of himself: his soul, most notably.

There is much to admire in the simplicity and purpose of Marcus Aurelius’ thoughts. They are brief, because Emperors are busy people, especially when earning titles such as Germanicus (which usually involves squashing a nation state or two). They are direct, because he is talking to himself and he needs to be honest. He repeats himself for emphasis and to indicate importance, not out of forgetfulness.

Best, he writes for himself, for clarity, for the now and without thinking of a future audience.

There is a great deal to think about in this, because if you have read “Meditations”, you will know that every page contains a gem and some pages have jewels cascading from them. Yet these are the private thoughts of a person recording ways to improve himself and to keep himself in check – while he managed the Roman empire.

When I talk about improvement, I’m always trying to improve myself. When I find fault, I’ve usually found it in myself first. Yet, what a lot of words I write! Perhaps it is time to reinvestigate brevity, directness and a generosity towards the self that translates well into a kindness to strangers who might stumble upon this. The last thing I’d want to do is to stop people finding what zircons there are because the preamble is too demanding or the journey to the point too long.

Once again, I give my thanks to the writings of someone who died over 1800 years ago and gave me so much to think about. Vale, Marcus Aurelius.

Time to Work and Time to Play

Posted: May 19, 2013 Filed under: Education, Opinion | Tags: advocacy, education, educational problem, educational research, feedback, Generation Why, higher education, in the student's head, learning, measurement, principles of design, reflection, resources, student perspective, teaching, teaching approaches, thinking, tools, work/life balance, workload

I do a lot of grounded theory research into student behaviour patterns. It’s a bit Indiana Jones in a rather dry way: hear a rumour of a giant cache of data, hack your way through impenetrable obfuscation and poor data alignment to find the jewel at the centre, hack your way out and try to get it to the community before you get killed by snakes, thrown into a propellor or eaten. (Perhaps the analogy isn’t perfect but how recently have you been through a research quality exercise?) Our students are all pretty similar, from the metrics I have, and I’ve gone on at length about this in other posts: hyperbolic time-discounting and so on. Embarrassingly recently, however, I was introduced to the notion of instrumentality, the capability to see that achieving a task now will reduce the difficulty in completing a goal later. If we can’t see how important this is to getting students to do something, maybe it’s time to have a good sit-down and a think! Husman et al identify three associated but distinguishable aspects to a student’s appreciation of a task: how much they rate its value, their intrinsic level of motivation, and their appreciation of the instrumentality. From this study, we have a basis for the confusing and often paradoxical presentation of a student who is intelligent and highly motivated – but just not for the task we’ve given them, despite apparently and genuinely being aware of the value of the task.
Without the ability to link this task to future goal success, the exponential approach of the deadline horizon can cause a student to artificially inflate the value of something of less final worth, because the actual important goal is out of sight. But rob a student of motivation and we have to put everything into a high-stakes framework, heavily fixed in the almost immediate future and the present, often resorting to extrinsic motivating factors (bribes/threats) to impose value. This may be why everyone who uses a punishment/reward situation achieves compliance but then has to keep using this mechanism to keep values artificially high. Have we stumbled across an Economy of Pedagogy? I hope not, because I can barely understand basic economics. But we can start to illustrate why the student has to be intrinsically connected to the task and the goal framework – without it, it’s carrot/stick time and, once we do that, it’s always carrot/stick time.

Like almost every teacher I know, I find that all of my students are experts at something, but mining that can be tricky. What quickly becomes apparent, as McGonigal reflected on in “Reality is Broken”, is that people will put far more effort into an activity that they see as play than one which they see as work. I, for example, have taken up linocut printing and, for no good reason at all, have invested days into a painstaking activity where it can take four hours to achieve even a simple outcome of reasonable quality – and it will be years before I’m good at it. Yet the time I spend at the printing studio on Saturdays is joyful, recharging and, above all, playful. If I consumed 6 hours marking assignments, writing a single number out of 10 and restricting my comments to good/bad/try harder, then I would feel spent and I would dread starting, putting it off as long as possible. Making prints, I consumed about 6 hours of effort to scan, photoshop, trim, print, reverse, apply over carbon paper, trace, cut out of lino and then print, both manually and by press, about four pieces of paper – and I felt like a new man. No real surprises here. In both cases, I am highly motivated. One task has great value to my students and me because it provides useful feedback. The artistic task has value to me because I am exploring new forms of art and artistic thinking, which I find rewarding.

But what of the instrumentality? In the case of the marking, it has to be done in time for students to get the feedback when they can still use it and, given we have a follow-up activity of the same type for more marks, they need to get that sooner rather than later. If I leave it all until the end of the semester, it makes my students’ lives harder and mine, too, because I can’t do everything at once and every single ‘when is it coming’ query consumes more time. In the case of the art, I have no deadline but I do have a goal – a triptych work to put on the wall in August. Every print I make makes this final production easier. The production of the lino master? Intricate, close work using sharp objects and it can take hours to get a good result. It should be dull and repetitive but it’s not – but ask me to cut out 10 of the same thing or very, very similar things and I think it would be, very quickly. So, even something that I really enjoy becomes mundane when we mess with the task enough or get to the point, in this case, where we start to say “Well, why can’t a machine do this?” Rephrasing this, we get the instrumentality focus back again: “What do I gain in the future from doing this ten times if I will only do this ten times once?” And this is a valid question for our students, too. Why should they write “Hello, World” – it has most definitely and definitively been written. It’s passed on. It is novel no more. Bereft of novelty, it rests on its laurels. If we didn’t force students to write it, there is no way that this particular phrase, which we ‘owe’ to Brian Kernighan, is introducing anyone to anything that could not have a modicum of creativity added to it by saying in the manual “Please type a sentence into this point in the program and it will display it back to you.” It is an ex-program.

I love lecturing. I love giving tutorials. I will happily provide feedback in pracs. Why don’t I like marking? It’s easy to say “Well, it’s dull and repetitive” but, if I wouldn’t ask a student to undertake a task like that, why am I doing it? Look, I’m not saying that all marking is like this but, certainly, the manual marking of particular aspects of software does tend to be dull.

Unless, of course, you start enjoying it and we can do that if we have enough freedom and flexibility to explore playful aspects. When I marked a big group of student assignments recently, I tried to write something new for each student and, while this doesn’t always succeed for small artefacts with limited variability, I did manage to compliment a student on their Spanish variable names, provide personalised feedback to some students who had excelled and, generally, turn a 10 mark program into a place where I thought about each student personally and then (more often than not) said something unique. Yes, sometimes the same errors cropped up and the copy/paste is handy – but by engaging with the task and thinking about how much my future interactions with the students would be helped with a little investment now, the task was still a slog, but I came out of it quite pleased with the overall achievement. The task became more enjoyable because I had more flexibility but I also was required to be there to be part of the process, I was necessary. It became possible to be (professionally and carefully) playful – which is often how I approach teaching.

Any of you who are required to use standardised tests with manual marking: you already know how desperately dull the grading is and it is a grindingly dull, rubric-bound, tick/flick scenario that does nothing except consume work. It’s valuable because it’s required and money is money. Motivating? No. Any instrumentality? No, unless giving the test raises the students to the point where you get improved circumstances (personal/school) or you reduce the amount of testing required for some reason. It is, sadly, as dull for your students to undertake them, in this scenario, because they will know how it’s marked and it is not going to trigger any of Husman’s three distinguished but associated variables.

I am never saying that everything has to be fun or easy, because I doubt many areas would be able to convey enough knowledge under these strictures, but providing tasks that have room to encourage motivation, develop a personal sense of task value, and that allow students to play, potentially bringing in some of their own natural enthusiasm for other areas or channeling it here, solves two thirds of the problem in getting students involved. Intentionally grounding learning in play and carefully designing materials to make this work can make things better. It also makes it easier for staff. Right now, as we handle the assignment work of the course I’m currently teaching, other discussions on the student forums include the History of Computing, Hofstede’s Cultural Dimensions, the significance of certain questions in the practical, complexity theory and we have only just stopped the spontaneous student comparison of performance at a simple genetic algorithms practical. My students are exploring, they are playing in the space of the discipline and, by doing so, are moving more deeply into a knowledge of taxonomy and lexicon within this space. I am moving from Lion Tamer to Ringmaster, which is the logical step to take as what I want is citizens who are participating because they can see value, have some level of motivation and are forming their instrumentality. If learning and exploration is fun now, then going further in this may lead to fun later – the future fun goal is enhanced by achieving tasks now. I’m not sure if this is necessarily the correct first demonstration of instrumentality, but it is a useful one!

However, it requires time on both sides: the staff member must be able to construct and moderate such an environment, especially if you’re encouraging playful exploration of areas on public discussion forums, and the student must have enough time to be able to think about things, make plans and then to try again if they don’t pick it all up on the first go. Under strict and tight deadlines, we know that creativity can be impaired when we enforce the deadlines the wrong way, and we reduce the possibility of time for exploration and play – for students and staff.

Playing is serious business and our lives are better when we do more of it – the first enabling act of good play is scheduling that first play date and seeing how it goes. I’ve certainly found it to be helpful, to me and to my students.

The Blame Game: Things were done, mistakes were made.

Posted: May 2, 2013 Filed under: Education, Opinion | Tags: authenticity, community, education, ethics, Generation Why, higher education, in the student's head, learning, reflection, resources, student perspective, teaching, teaching approaches, work/life balance, workload

Note: This is a re-post of something that I put up on a student discussion forum as part of one of my first-year teaching courses. I write a number of longer posts to the students to discuss some of the things that are not strictly Computer Science but can be good to know. One of my colleagues asked me to put it up in a place where he could refer to it even after the original forum was closed, so here it is.

The Irish Central Bank recently released a 10 Euro coin with a quote from James Joyce on it. Regrettably, they got the quote wrong by inserting a ‘that’ which was not in the original quote. While this is hardly newsworthy usually, I want to draw your attention to the way that the bank handled this error.

According to the bank, the coin was “an artistic representation of the author and text and not intended as a literal representation”. In fact, “the text on the Joyce coin does not correspond to the precise text as it appears in Ulysses” and “the error is regretted”.

The error is regretted? By whom? This is a delightful example of the passive voice, frequently used because people wish to avoid associating the problem with themselves. Before this coin hit the mint, people could see the graphic design and the mistake would have been there. Was the error with the original brief, the designer, the people who should have been proofing? (The actual ‘apology’ is even worse as it says “While the error is regretted” and then goes on to try and weasel out.)

Look, the blame game is seductive because people love to allocate blame and, frankly, blame assignation is not very productive because it doesn’t fix the existing problem and, worse, it rarely fixes the future problem. However, the error (in this case) did not leap into the printing presses at the mint due to run-away nanotechnology – in this case, the producing organisation (the bank) should have said “Argh, sorry. We made a mistake.” and then gone on with the offers of refunds – but more importantly, having accepted that it was their error, they would have the mental gears engaged to make changes to stop it happening again. Right now, the bank is trying to wriggle out of a mistake, which might fool people inside the bank into thinking that this is how you deal with errors – through “after the fact” passive apology, rather than taking responsibility and doing some proper proof-reading!

Years ago, I worked with a guy whose motto was “Don’t tell me that you knew it wasn’t going to work. Tell me when you think that and tell me how we’re going to fix it.” Don’t just play the blame and “I told you so” game, be active and try to fix things!

But let’s bring this closer to home. Running late for a lecture? What happened? Was the traffic really bad – or did you not allow enough time to get there, having expected really good traffic? “The traffic was awful” is a great excuse occasionally but all the time? “I didn’t allow enough time for the traffic.” What does this mean? Allow more time! Be active! Take control (if you can). If you’re on a dire bus route, then you may have to think about other ways to deal with it – perhaps you just can’t allow enough time for the awful traffic. In that case, what do you need to do in order to get the lecture content? What do you need to let the lecturer know so that we can help you?

See the difference? If “the traffic is awful” then we have no solutions because a million cars and the Adelaide City traffic computers are beyond your control. If “I have a problem with time” then it is easier to start thinking about ways to fix this that involve you.

When you think to yourself “the assignment wasn’t completed on time”, who was actually responsible for that? Note, I’m not talking about assigning blame – I’m talking about taking responsibility. If you didn’t finish the assignment on time because you didn’t start early enough, then you have started the mental processes that lead to a potential conclusion of “Oh, I should start working on things a bit earlier.” Were you sick? Should you have organised a med cert or spoken to the lecturer?

Responsibility doesn’t have to be a burden but it does give you a reason to exercise your agency, your capacity to act and to make change in the world. If all of your problems are in the passive voice, then you end up with “assignments are handed in late”, “the money ran out” and “mistakes were made” rather than “I didn’t start early enough or put enough time in or I was horribly ill and thought I could just push through”, “I spent all of my money too quickly” and “I made a mistake”.

Obviously, a false declaration of responsibility, where you have no intention of changing, is just as bad as weasel words in the passive voice. Saying “I made a mistake” achieves nothing unless you try and change what you’re doing to stop it happening again.

When you feel that you are responsible for something, you are more likely to devote time and effort to it. The way that you describe the things in your life can help to remind you of what you are responsible for and where you can take charge and try to bring about a positive change. Language is powerful – it can really help to focus the mind on what you need to do to get the best out of everything. Use it!

(Edit: This is now in the comments but after the original post, I linked to an article on one set of steps students could use to write a real apology. You can find it here. Thanks for the nudge, Liz!)

Tell us we’re dreaming.

Posted: April 21, 2013 Filed under: Education, Opinion | Tags: advocacy, authenticity, blogging, community, education, Generation Why, higher education, reflection, resources, thinking, work/life balance, workload 2 Comments

I recently read an opinion piece in the Australian national newspaper, the conveniently named “The Australian”, on funding school reform. The piece, entitled “School Reform must be funded” and sub-titled “But maybe we need fewer academics thinking up ways to spend our taxes”, written by Cassandra Wilkinson, identified the coming cuts to higher education as a consequence of the apparent impossibility of paying for school reforms in any other way. No-one sensible is arguing that the school funding can come out of thin air (I make explicit reference to realities such as this in my previous post) but it does appear that Cassandra is attempting to place the blame for this sorry state of affairs squarely on the shoulders of the academics (“the growing influence of the university sector on early childhood and school education is partly responsible for the now necessary cuts to higher education.”, from the article).

The argument appears to be that it is the professionalisation of teaching, and the intervention of education academics in favour of early educational investment, potentially at the expense of the family unit’s role in child rearing, that has convinced governments that money must be spent here – therefore, it is our fault that our argument is leading to money coming out of our pockets. I cannot think of a more amazing piece of victim blaming, recently, but then again I generally don’t read the opinion section of The Australian!

Now, you may immediately say “You must be quoting her out of context”, so here is another extract from this rather short opinion piece:

“In addition to the public costs being generated by education academics, we have public health academics driving an expensive “preventative health” agenda that includes mental health checks for kids and public advertising about the calorie content of pizza; safety academics driving up the cost of road building and tripling the price of trampolines, which now come with fencing and crash mats; and sustainability academics driving up the cost of housing.”

Not only are people concerned about education driving up the cost of education but we have increased all other prices through our short-sighted adherence to preventative health, safety and sustainability! I keep thinking that Wilkinson, who has some quite excellent social project credentials if I have researched the correct Cassandra Wilkinson, must be making a satirical comment here but either my humour is failing (entirely possible), she has been edited (entirely possible) or she is completely serious and we in higher education have brought doom upon our heads by dint of doing our job. The piece finishes with:

“It may well be that the real efficiency savings will derive from a university sector employing slightly fewer academics to dream up new ways for governments to spend taxpayers money.”

and whether this is intended to be satire or not, this statement does raise my hackles.

Right now, most of the academics I know are trying to dream up ways to meet our obligations to our students in terms of a high-quality, useful and valuable education under existing restrictions. The only tax spending we’re trying to do is on the things that we can barely afford to do on the monies we get. I’m assuming that Cassandra is being satirical but is just not very good at it – or is assuming the role of her namesake, in that no-one will actually take her seriously, which is a shame as the approach that she seems to be supporting is not just saying that the only place this money can come from is higher ed, but that we should shut up because of how much we’re costing decent, family-centered Australians. If only I had that many column inches in a large-scale distribution paper to put my case that, maybe, people should stop talking about what they think we’re doing, or their fuzzy memories of old Uni days and bad movies, and come down and see what we’re doing now. Shadow me for a month. Bring running shoes. But, hey, maybe I’m just lazy, soft and dreamy. How would I know?

The rich dream of luxuries, the poor dream of staples. We are dreaming of having enough to do our jobs adequately and these are not the dreams of rich people.

Maybe I’m just too tired right now to see her humour in all of this. I seriously hope that I’ve just got the wrong end of the stick, because if this is what the social progressives are saying, then we may as well close up shop now.

SIGCSE 2013: Special Session on Designing and Supporting Collaborative Learning Activities

Posted: March 31, 2013 Filed under: Education | Tags: authenticity, community, curriculum, education, educational problem, educational research, feedback, higher education, in the student's head, learning, principles of design, reflection, resources, sigcse, student perspective, teaching, teaching approaches, thinking, tools, universal principles of design Leave a comment

Katrina and I delivered a special session on collaborative learning activities, focused on undergraduates because that’s our area of expertise. You can read the outline document here. We worked together on the underlying classroom activities and have both implemented these techniques but, in this session, Katrina did most of the presenting and I presented the collaborative assessment task examples, with some facilitation.

The trick here is, of course, to find examples that are both effective as teaching tools and are effective as examples. The approach I chose to take was to remind everyone in the room of what the most important aspects were to making this work with students and I did this by deliberately starting with a bad example. This can be a difficult road to walk because, when presenting a bad example, you need to convince everyone that your choice was deliberate and that you actually didn’t just stuff things up.

My approach was fairly simple. Break people into groups, based on where they were currently sitting, and then I immediately went into the question, which had been tailored for the crowd and for my purposes:

“I want you to talk about the 10 things that you’re going to do in the next 5 years to make progress in your career and improve your job performance.”

And why not? Everyone in the room was interested in education and, most likely, had a job at a time when it’s highly competitive and hard to find or retain work – so everyone has probably thought about this. It’s a fair question for this crowd.

Well, it would be, if it wasn’t so anxiety inducing. Katrina and I both observed a sea of frozen faces as we asked a question that put a large number of participants on the spot. And the reason I did this was to remind everyone that anxiety impairs genuine participation and willingness to engage. There were a large number of frozen grins with darting eyes, some nervous mumbles and a whole lot of purposeless noise, with the few people who were actually primed to answer that question starting to lead off.

I then stopped the discussion immediately. “What was wrong with that?” I asked the group.

Well, where do we start? Firstly, it’s an individual activity, not a collaborative activity – there’s no incentive or requirement for discussion, groupwork or anything like that. Secondly, while we might expect people to be able to answer this, it is a highly charged and personal area, and you may not feel comfortable discussing your five year plan with people that you don’t know. Thirdly, some people know that they should be able to answer this (or at least some supervisors will expect that they can) but they have no real answer and their anxiety will not only limit their participation but it will probably stop them from listening at all while they sweat their turn. Finally, there is no point to this activity – why are we doing this? What are we producing? What is the end point?

My approach to collaborative activity is pretty simple and you can read any amount of Perry, Dickinson, Hamer et al (and now us as well) to look at relevant areas and Contributing Student Pedagogy, where students have a reason to collaborate and we manage their developmental maturity and their roles in the activity to get them really engaged. Everyone can have difficulties with authority and recognising whether someone is making enough contribution to a discussion to be worth their time – this is not limited to students. People, therefore, have to believe that the group they are in is of some benefit to them.

So we stepped back. I asked everyone to introduce themselves, where they came from and give a fact about their current home that people might not know. Simple task, everyone can do it and the purpose was to tell your group something interesting about your home – clear purpose, as well. This activity launched immediately and was going so well that, when I tried to move it on because the sound levels were dropping (generally a good sign that we’re reaching a transition), some groups asked if they could keep going as they weren’t quite finished. (Monitoring groups spread over a large space can be tricky but, where the activity is working, people will happily let you know when they need more time.) I was able to completely stop the first activity and nobody wanted me to continue. The second one, where people felt that they could participate and wanted to say something, needed to keep going.

Having now put some faces to names, we then moved to a simple exercise of sharing an interesting teaching approach that you’d tried recently or seen at the conference. It’s important to note the different comfort levels we can accommodate with this – we are sharing knowledge but we give participants the opportunity to share something of themselves or something that interests them, without the burden of ownership. Everyone had already discovered that everyone in the group had some areas of knowledge, albeit small, that taught them something new. We had started to build a group where participants valued each other’s contribution.

I carried out some roaming facilitation where I said very little, unless it was needed. I sat down with some groups, said ‘hi’ and then just sat back while they talked. I occasionally gave some nodded or attentive feedback to people who looked like they wanted to speak and this often cued them into the discussion. Facilitation doesn’t have to be intrusive and I’m a much bigger fan of inclusiveness, where everyone gets a turn but we do it through non-verbal encouragement (where that’s possible, different techniques are required in a mixed-ability group) to stay out of the main corridor of communication and reduce confrontation. However, by setting up the requirement that everyone share and by providing a task that everyone could participate in, my need to prod was greatly reduced and the groups mostly ran themselves, with the roles shifting around as different people made different points.

We covered a lot of the underlying theory in the talk itself, to discuss why people have difficulty accepting other views, to clarify why role management is a critical part of giving people a reason to get involved and something to do in the conversation. The notion that a valid discursive role is that of the supporter, to reinforce ideas from the proposer, allows someone to develop their confidence and critically assess the idea, without the burden of having to provide a complex criticism straight away.

At the end, I asked for a show of hands. Who had met someone new? Everyone. Who had found out something they didn’t know about other places? Everyone. Who had learned about a new teaching technique that they hadn’t known before? Everyone.

My one regret is that we didn’t do this sooner because the conversation was obviously continuing for some groups and our session was, sadly, on the last day. I don’t pretend to be the best at this but I can assure you that any capability I have in this kind of activity comes from understanding the theory, putting it into practice, trying it, trying it again, and reflecting on what did and didn’t work.

I sometimes come out of a lecture or a collaborative activity and I’m really not happy. It didn’t gel or I didn’t quite get the group going as I wanted it to – but this is where you have to be gentle on yourself because, if you’re planning to succeed and reflecting on the problems, then steady improvement is completely possible and you can get more comfortable with passing your room control over to the groups, while you move to the facilitation role. The more you do it, the more you realise that training your students in role fluidity also assists them in understanding when you have to be in control of the room. I regularly pass control back and forward and it took me a long time to really feel that I wasn’t losing my grip. It’s a practice thing.

It was a lot of fun to give the session and we spent some time crafting the ‘bad example’, but let me summarise what the good activities should really look like. They must be collaborative, inclusive, achievable and obviously beneficial. Like all good guidelines there are times and places where you would change this set of characteristics, but you have to know your group well to know what challenges they can tolerate. If your students are more mature, then you push out into open-ended tasks which are far harder to make progress in – but this would be completely inappropriate for first years. Even in later years, being able to make some progress is more likely to keep the group going than a brick wall that stops you at step 1. But, let’s face it, your students need to know that working in that group is not only not to their detriment, but it’s beneficial. And the more you do this, the better their groupwork and collaboration will get – and that’s a big overall positive for the graduates of the future.

To everyone who attended the session, thank you for the generosity and enthusiasm of your participation. I’ll be catching up on my business cards over the next few weeks. If I promised you an e-mail, it will be coming shortly.

Expressiveness and Ambiguity: Learning to Program Can Be Unnecessarily Hard

Posted: January 23, 2013 Filed under: Education, Opinion | Tags: advocacy, collaboration, curriculum, design, education, educational problem, feedback, Generation Why, higher education, in the student's head, learning, principles of design, reflection, resources, student perspective, teaching, teaching approaches, thinking, tools Leave a comment

One of the most important things to be able to do in any profession is to think as a professional. This is certainly true of Computer Science, because we have to spend so much time thinking as a Computer Scientist would think about how the machine will interpret our instructions. For those who don’t program, a brief quiz. What is the value of the next statement?

What is 3/4?

No doubt, you answered something like 0.75 or maybe 75% or possibly even “three quarters”? (And some of you would have said “but this statement has no intrinsic value” and my heartiest congratulations to you. Now go off and contemplate the Universe while the rest of us toil along on the material plane.) And, not being programmers, you would give me the same answer if I wrote:

What is 3.0/4.0?

Depending on the programming language we use, you can actually get two completely different answers to this apparently simple question. 3/4 is often interpreted by the computer to mean “What is the result if I carry out integer division, where I will only tell you how many times the denominator will go into the numerator as a whole number, for 3 and 4?” The answer will not be the expected 0.75, it will be 0, because 4 does not go into 3 – it’s too big. So, again depending on programming language, it is completely possible to ask the computer “is 3/4 equivalent to 3.0/4.0?” and get the answer ‘No’.
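A small sketch of the two answers, written in Python 3 purely for illustration (the post itself does not name a language at this point). Python 3 happens to spell integer division as `//`, so `3 // 4` here plays the role that a plain `3/4` plays in languages such as C or Java when both operands are integers. The variable names are made up for the example.

```python
# In Python 3, "/" always means real division and "//" means integer (floor) division.
integer_result = 3 // 4    # how many whole times does 4 go into 3?
real_result = 3.0 / 4.0    # ordinary division with decimal points

print(integer_result)                 # 0
print(real_result)                    # 0.75
print(integer_result == real_result)  # False
```

Asking whether the two results are equivalent gives ‘No’, exactly as described above, even though a non-programmer would read both questions as the same sum.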

This is something that we have to highlight to students when we are teaching programming, because very few people use integer division when they divide one thing by another – they automatically start using decimal points. Now, in this case, the different behaviour of the ‘/’ is actually exceedingly well-defined and is not at all ambiguous to the computer or to the seasoned programmer. It is, however, nowhere near as clear to the novice or casual observer.

I am currently reading Stephen Ramsay’s excellent “Reading Machines: Towards an Algorithmic Criticism” and it is taking me a very long time to read an 80 page book. Why? Because, to avoid ambiguity and to be as expressive and precise as possible, he has used a number of words and concepts with which I am unfamiliar or that I have not seen before. I am currently reading his book with a web browser and a dictionary because I do not have a background in literary criticism but, once I have the building blocks, I can understand his argument. In other words, I am having to learn a new language in order to read a book for that new language community. However, rather than being irked that “/” changes meaning depending on the company it keeps, I am happy to learn the new terms and concepts in the space that Ramsay describes, because it is adding to my ability to express key concepts, without introducing ambiguous shadings of language over things that I already know. Ramsay is not, for example, telling me that “book” no longer means “book” when you place it inside parentheses. (It is worth noting that Ramsay discusses the use of constraint as a creative enhancer, a la Oulipo, early on in the book and this is a theme for another post.)

The usual insult at this point is to trot out the accusation of jargon, which is as often a statement that “I can’t be bothered learning this” as it is a genuine complaint about impenetrable prose. In this case, the offender in my opinion is the person who decided to provide an invisible overloading of the “/” operator to mean both “division” and “integer division”, as they have required us to be aware of a change in meaning that is not accompanied by a change in syntax. While this isn’t usually a problem, spoken and written languages are full of these things after all, in the computing world it forces the programmer to remember that “/” doesn’t always mean “/” and then to get it the right way around. (A number of languages solve this problem by providing a distinct operator – this, however, then adds to linguistic complexity and rather than learning two meanings, you have to learn two ‘words’. Ah, no free lunch.) We have no tone or colour in mainstream programming languages, for a whole range of good computer grammar reasons, but the absence of the rising tone or rising eyebrow is sorely felt when we encounter something that means two different things. The net result is that we tend to use the same constructs to do the same thing because we have severe limitations upon our expressivity. That’s why there are boilerplate programmers, who can stitch together a solution from things they have already seen, and people who have learned how to be as expressive as possible, despite most of these restrictions. Regrettably, expressive and innovative code can often be unreadable by other people because of the gymnastics required to reach these heights of expressiveness, which is often at odds with what the language designers assumed someone might do.

We have spent a great deal of effort making computers better at handling abstract representations, things that stand in for other (real) things. I can use a name instead of a number and the computer will keep track of it for me. It’s important to note that writing int i=0; is infinitely preferable to typing “0000000000000000000000000000000000000000000000000000000000000000” into the correct memory location and then keeping that (rather large number) address written on a scrap of paper. Abstraction is one of the fundamental tools of modern programming, yet we greatly limit expressiveness in sometimes artificial ways to reduce ambiguity when, really, the ambiguity does seem a little artificial.

One of the nastiest potential ambiguities that shows up a lot is “what do we mean by ‘equals’?”. As above, we already know that many languages would not tell you that “3/4 equals 3.0/4.0” because both mathematical operations would be executed and 0 is not the same as 0.75. However, the equivalence operator is often used to ask so many different questions: “Do these two things contain the same thing?”, “Are these two things considered to be the same according to the programmer?” and “Are these two things actually the same thing and stored in the same place in memory?”

Generally, however, to all of these questions, we return a simple “True” or “False”, which in reality reflects neither the truth nor the falsity of the situation. What we are asking, respectively, is “Are the contents of these the same?” to which the answer is “Same” or “Different”. To the second, we are asking if the programmer considers them to be the same, in which case the answer is really “Yes” or “No” because they could actually be different, yet not so different that the programmer needs to make a big deal about it. Finally, when we are asking if two references to an object actually point to the same thing, we are asking if they are in the same location or not.
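To make the three questions concrete, here is a minimal sketch in Python, chosen only for illustration. The `Temperature` class and its rounding rule are hypothetical, invented to stand in for “the same according to the programmer”; the list comparisons use Python’s built-in `==` and `is`.

```python
class Temperature:
    """Hypothetical class: two readings count as equal if they round to the same degree."""

    def __init__(self, degrees):
        self.degrees = degrees

    def __eq__(self, other):
        # The programmer, not the machine, decides what "the same" means here.
        if not isinstance(other, Temperature):
            return NotImplemented
        return round(self.degrees) == round(other.degrees)


first = [1, 2, 3]
second = [1, 2, 3]
alias = first

same_contents = first == second   # True: the contents are the same
same_object = first is second     # False: two separate lists in memory
same_location = first is alias    # True: one list with two names

close_enough = Temperature(20.1) == Temperature(19.9)  # True by the programmer's rule
```

All four questions come back as a bare `True` or `False`, even though the underlying answers are really “same contents”, “different location”, “same location” and “close enough for my purposes”.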

There are many languages that use truth values, some of them do it far better than others, but unless we are speaking and writing in logical terms, the apparent precision of the True/False dichotomy is inherently deceptive and, once again, it is only as precise as it has been programmed to be and then interpreted, based on the knowledge of programmer and reader. (The programming language Haskell has an intrinsic ability to say that things are “Undefined” and to then continue working on the problem, which is an obvious, and welcome, exception here, yet this is not a widespread approach.) It is an inherent limitation on our ability to express what is really happening in the system when we artificially constrain ourselves in order to (apparently) reduce ambiguity. It seems to me that we have reduced programmatic ambiguity, but we have not necessarily actually addressed the real or philosophical ambiguity inherent in many of these programs.

More holiday musings on the “Python way” and why this is actually an unreasonable demand, rather than a positive feature, shortly.

The Limits of Expressiveness: If Compilers Are Smart, Why Are We Doing the Work?

Posted: January 23, 2013 Filed under: Education, Opinion | Tags: 'pataphysics, collaboration, community, curriculum, data visualisation, design, education, educational problem, higher education, principles of design, programming, reflection, resources, student perspective, teaching, teaching approaches, thinking, tools 2 Comments

I am currently on holiday, which is “Nick shorthand” for catching up on my reading, painting and cat time. Recently, my interests in my own discipline have widened and I am precariously close to that terrible state that academics sometimes reach when they suddenly start uttering words like “interdisciplinary” or “big tent approach”. Quite often, around this time, the professoriate will look at each other, nod, and send for the nice people with the butterfly nets. Before they arrive and cart me away, I thought I’d share some of the reading and thinking I’ve been doing lately.

My reading is a little eclectic, right now. Next to Hooky’s account of the band “Joy Division” sits Dennis Wheatley’s “They Used Dark Forces” and next to that are four other books, which are a little more academic. “Reading Machines: Towards an Algorithmic Criticism” by Stephen Ramsay; “Debates in the Digital Humanities” edited by Matthew Gold; “10 PRINT CHR$(205.5+RND(1)); : GOTO 10” by Montfort et al; and “‘Pataphysics: A Useless Guide” by Andrew Hugill. All of these are fascinating books and, right now, I am thinking through all of these in order to place a new glass over some of my assumptions from within my own discipline.

“10 PRINT CHR$…” is an account of a simple line of code from the Commodore 64 Basic language, which draws diagonal mazes on the screen. In exploring this, the authors explore fundamental aspects of computing and, in particular, creative computing and how programs exist in culture. Everything in the line says something about programming back when the C-64 was popular, from the use of line numbers (required because you had to establish an execution order without necessarily being able to arrange elements in one document) to the use of the $ after CHR, which tells both the programmer and the machine that what results from this operation is a string, rather than a number. In many ways, this is a book about my own journey through Computer Science, growing up with BASIC programming and accepting its conventions as the norm, only to have new and strange conventions pop out at me once I started using other programming languages.

Rather than discuss the other books in detail, although I recommend all of them, I wanted to talk about specific aspects of expressiveness and comprehension, as if there is one thing I am thinking after all of this reading, it is “why aren’t we doing this better”? The line “10 PRINT CHR$…” is effectively incomprehensible to the casual reader, yet if I wrote something like this:

do this forever

pick one of “/” or “\” and display it on the screen

then anyone who spoke English (which used to be a larger number than those who could read programming languages but, honestly, today I’m not sure about that) could understand what was going to happen but, not only could they understand, they could create something themselves without having to work out how to make it happen. You can see language like this in languages such as Scratch, which is intended to teach programming by providing an easier bridge between standard language and programming using pre-constructed blocks and far more approachable terms. Why is it so important to create? One of the debates raging in Digital Humanities at the moment, at least according to my reading, is “who is in” and “who is out” – what does it take to make one a digital humanist? While this used to involve “being a programmer”, it is now considered reasonable to “create something”. For anyone who is notionally a programmer, the two are indivisible. Programs are how we create things and programming languages are the form that we use to communicate with the machines, to solve the problems that we need solved.
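For comparison with the plain-English version above, here is one way the same idea might be written in Python. This is a sketch under stated assumptions, not a port of the Commodore 64 line: the function name `maze_row`, the row width of 40 and the decision to stop after five rows (rather than running forever) are all choices made for this example.

```python
import random


def maze_row(width, rng=random):
    """Return one row of randomly chosen "/" and "\\" characters."""
    return "".join(rng.choice("/\\") for _ in range(width))


# "do this forever" is trimmed to five rows here so that the sketch terminates.
for _ in range(5):
    print(maze_row(40))
```

Even in this fairly readable form, the reader still has to know what `join`, `range` and the doubled backslash mean, which is rather the point being made above.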

When we first started writing programs, we instructed the machines in simple arithmetic sequences that matched the bit patterns required to ensure that certain memory locations were processed in a certain way. We then provided human-readable shorthand, assembly language, where mnemonics replaced numbers, to make it easier for humans to write code without error. “20” became “JSR” in 6502 assembly code, for example, yet “JSR” is as impenetrably occulted as “20” unless you learn a language that is not actually a language but a compressed form of acronym. Roll on some more years and we have added pseudo-English over the top: GOSUB in Basic and the use of parentheses to indicate function calls in other languages.

However, all I actually wanted to do was to make the same thing happen again, maybe with some minor changes to what it was working on. Think of a sub-routine (method, procedure or function, if we’re being relaxed in our terminology) and you may as well think of a washing machine. It takes in something and combines it with a determined process, a machine setting, powders and liquids to give you the result you wanted, in this case taking in dirty clothes and giving back clean ones. The execution of a sub-routine is identical to this but can you see the predictable familiarity of the washing machine in JSR FE FF?

If you are familiar with ‘Pataphysics, or even “Ubu Roi”, the most well-known of Jarry’s works, you may be aware of the pataphysician’s fascination with the spiral – le Grand Gidouille. The spiral, once drawn, defines not only itself but another spiral in the negative space that it contains. The spiral is also a natural way to think about programming because a very well-used programming language construct, the for loop, often either counts up to a value or counts down. It is not uncommon for this kind of counting loop to allow us to advance from one character to the next in a text of some sort. When we define a loop as a spiral, we clearly state what it is and what it is not – it is not retreading old ground, although it may always spiral out towards infinity.

However, for maximum confusion, the for loop may iterate a fixed number of times but never use the changing value that is driving it – it is no longer a spiral in terms of its effect on its contents. We can even write a for loop that goes around in a circle indefinitely, executing the code within it until it is interrupted. Yet, we use the same keyword for all of these.
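The three behaviours described above can be put side by side. The sketch below uses Python for illustration only; the variable names and the choice to interrupt the endless loop after four laps are assumptions made for the example.

```python
import itertools

text = "spiral"

# 1. A "spiral": the counter is used to step from one character to the next.
visited = []
for position in range(len(text)):
    visited.append(text[position])

# 2. Merely counting: the loop runs three times and never looks at its counter.
greetings = []
for _ in range(3):
    greetings.append("hello")

# 3. Going around in a circle until something interrupts it.
laps = 0
for _ in itertools.count():
    laps += 1
    if laps == 4:  # the "interruption"
        break
```

All three start with the same keyword, so the only way to tell which kind of loop you are looking at is to read what follows it.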

In English, the word “get” is incredibly overused. There are very few situations when another verb couldn’t add more meaning, even in terms of shade, to the situation. Using “get” forces us, quite frequently, to do more hard work to achieve comprehension. Using the same words for many different types of loop pushes load back on to us.

What happens is that when we write our loop, we are required to do the thinking as to how we want this loop to work – although Scratch provides a forever, very few other languages provide anything like that. To loop endlessly in C, we would use while (true) or for (;;), but to tell the difference between a loop that is functioning as a spiral, and one that is merely counting, we have to read the body of the loop to see what is going on. If you aren’t a programmer, does for(;;) give you any inkling at all as to what is going on? Some might think “Aha, but programming is for programmers” and I would respond with “Aha, yes, but becoming a programmer requires a great deal of learning and why don’t we make it simpler?” To which the obvious riposte is “But we have special languages which will do all that!” and I then strike back with “Well, if that is such a good feature, why isn’t it in all languages, given how good modern language compilers are?” (A compiler is a program that turns programming languages into something that computers can execute – English words to byte patterns effectively.)

In thinking about language origins, and what we are capable of with modern compilers, we have to accept that a lot of the heavy lifting in programming is already being done by modern, optimising, compilers. Years ago, the compiler would just turn your instructions into a form that machines could execute – with no improvement. These days, put something daft in (like a loop that does nothing for a million iterations), and the compiler will quietly edit it out. The compiler will worry about optimising your storage of information and, sometimes, even help you to reduce wasted use of memory (no, Java, I’m most definitely not looking at you.)

So why is it that C++ doesn’t have a forever, a do 10 times, or a spiral to 10 equivalent in there? The answer is complex but is, most likely, a combination of standards issues (changing a language standard is relatively difficult and requires a lot of effort), the fact that other languages do already do things like this, the burden of increasing compiler complexity to handle synonyms like this (although this need not be too arduous) and, most likely, the fact that I doubt that many people would see a need for it.
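As a thought experiment, such synonyms can be approximated with ordinary helper functions rather than new keywords. The sketch below is in Python and is entirely hypothetical: `forever`, `times` and `spiral_to` are names invented here, and reading “spiral to 10” as “count 1 up to 10 and use the value” is an assumption.

```python
import itertools


def forever():
    """Hypothetical helper: yields endlessly, so the loop stops only on break."""
    return itertools.repeat(None)


def times(count):
    """Hypothetical helper: repeat `count` times without exposing a counter."""
    return itertools.repeat(None, count)


def spiral_to(limit):
    """Hypothetical helper: count 1, 2, ... limit, where the value is meant to be used."""
    return range(1, limit + 1)


beeps = 0
for _ in times(10):
    beeps += 1

total = 0
for step in spiral_to(10):
    total += step

ticks = 0
for _ in forever():
    ticks += 1
    if ticks == 3:
        break
```

The loops still begin with `for`, so this only moves the expressive word one step to the right; it does not give the language a genuinely new construct.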

In reading all of these books, and I’ll write more on this shortly, I am becoming increasingly aware that I tolerate a great deal of limitation in my ability to solve problems using programming languages. I put up with having my expressiveness reduced, with taking care of some unnecessary heavy lifting in making things clear to the compiler, and I occasionally even allow the programming language to dictate how I write the words on the page itself – not just syntax and semantics (which are at least understandable, socially and technically) but the use of blank lines, white space and end of lines.

How are we expected to be truly creative if conformity and constraint are the underpinnings of programming? Tomorrow, I shall write on the use of constraint as a means of encouraging creativity and why I feel that what we see in programming is actually limitation, rather than a useful constraint.