The Limits of Time

Posted: November 9, 2012 Filed under: Education | Tags: authenticity, blogging, design, eat your own dog food, eating your own dog food, eating your own dogfood, education, feedback, higher education, learning, measurement, resources, tools, work/life balance

I’m writing this on Monday (and Thursday night), after being on the road for teaching, and I’ve been picking up the pieces of a hard drive replacement (under warranty) compounded by the subsequent discovery that at least one of my backups is corrupted. This has taken what should have been a catch-up day and turned it into a “juggle recovery/repair disk/work on secondary machine” day but, hey, I’m not complaining too much – at least I have two machines and took the trouble to keep them synchronised with each other. The worst outcome of today’s little backup issue is that I have a relatively long reinstallation process ahead of me, because I haven’t actually lost anything yet except the convenient arrangement of all of my stuff.

It does, however, reinforce one of the lessons that it took me years to learn. If you have an hour, you can do an hour’s worth of work. I know, that sounds a little ‘aw shucks’ but some things just take time to do and you have to have the time to do them. My machine recovery was scheduled to take about four hours. When it had gone for five, I clicked on it to discover that it had stopped on detecting the bad backup. I couldn’t have done that at the 30 minute mark. Maybe I could have tried to wake it up at the 2 hour mark, and maybe I would have hit the error earlier, but, in reality that wasn’t going to happen because I was doing other work.

Why is this important? Because I am going to get 1, maybe 2, attempts per day to restore this machine until it finally works. It takes hours to do it and there’s nothing I can do to make it faster. (You’ll see down the bottom that this particular prediction came true because the backup restoration has now turned out to have some fundamental problems).

When students first learn about computers, they don’t really have an idea about how long things take and how important it is to make their programs work quickly. Computational complexity describes how we expect programs to behave when we change the amount of data that they’re working on, either in terms of how much space they take up or how long they take to compute. The choice of approach can lead to massive differences in performance. Something that takes 60 seconds on one approach can take an hour on another. Scale up the size of data you’re looking at and the difference is between ‘will complete this week’ and ‘I am not going to live that long’.
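To make the difference between approaches concrete, here is a minimal sketch in Java. The task (checking an array for duplicate values), the class name DuplicateCheck and the input size are all assumptions chosen for illustration; they are not from any particular course. One method compares every pair of values, so its work grows with the square of the input size, while the other remembers what it has already seen and grows roughly linearly.

```java
import java.util.HashSet;
import java.util.Set;

final class DuplicateCheck {
    // Quadratic approach: compare every pair of values.
    static boolean hasDuplicateQuadratic(int[] values) {
        for (int i = 0; i < values.length; i++) {
            for (int j = i + 1; j < values.length; j++) {
                if (values[i] == values[j]) {
                    return true;
                }
            }
        }
        return false;
    }

    // Roughly linear approach: remember the values we have already seen.
    static boolean hasDuplicateLinear(int[] values) {
        Set<Integer> seen = new HashSet<>();
        for (int value : values) {
            // add() returns false when the value was already in the set.
            if (!seen.add(value)) {
                return true;
            }
        }
        return false;
    }

    public static void main(String[] args) {
        // Hypothetical input: no duplicates, so both methods do their maximum work.
        int n = 20_000;
        int[] values = new int[n];
        for (int i = 0; i < n; i++) {
            values[i] = i;
        }

        long start = System.nanoTime();
        boolean slow = hasDuplicateQuadratic(values);
        long middle = System.nanoTime();
        boolean fast = hasDuplicateLinear(values);
        long end = System.nanoTime();

        System.out.println("quadratic: " + slow + " in " + (middle - start) / 1_000_000 + " ms");
        System.out.println("linear:    " + fast + " in " + (end - middle) / 1_000_000 + " ms");
    }
}
```

The exact timings depend on the machine, but doubling n should roughly double the time for the second method and roughly quadruple it for the first, which is where the gap between "this week" and "not in my lifetime" comes from.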

When you look at a computing problem, and the resources that you have, a back of an envelope calculation will very rapidly tell you how long it will take (with a bit of testing and trial and error in some cases). If you don’t allow this much time for the solution, you probably won’t get it. The worst case is that you start something running and then you stop it, thinking it’s not going to finish, but you actually stopped it just before it was going to finish. Time estimation is important. A lot of students won’t really learn this, however, until it comes back and bites them when they overshoot. With any luck, and let’s devote some effort so it’s not just luck, they learn what to look for when they’re estimating how long things actually take.
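A back of an envelope calculation of this kind can be sketched in a few lines of Java. Everything here is an assumption for illustration: the class name RuntimeEstimate, the measured figures, and the simplification that running time grows as the input size raised to a fixed power (1 for a linear approach, 2 for a quadratic one).

```java
final class RuntimeEstimate {
    // Extrapolate a measured running time to a larger input,
    // assuming time grows as (input size) ^ exponent.
    static double estimateSeconds(double measuredSeconds, long measuredSize,
                                  long targetSize, double exponent) {
        double scale = (double) targetSize / measuredSize;
        return measuredSeconds * Math.pow(scale, exponent);
    }

    public static void main(String[] args) {
        // Hypothetical measurement: 2 seconds to process 10,000 items.
        double measuredSeconds = 2.0;
        long smallSize = 10_000;
        long bigSize = 1_000_000; // 100 times more data

        double linear = estimateSeconds(measuredSeconds, smallSize, bigSize, 1.0);
        double quadratic = estimateSeconds(measuredSeconds, smallSize, bigSize, 2.0);

        System.out.println("linear estimate (seconds):  " + linear);
        System.out.println("quadratic estimate (hours): " + quadratic / 3600.0);
    }
}
```

With these made-up numbers the linear estimate is 200 seconds and the quadratic one is 20,000 seconds, which is about five and a half hours. A real estimate still needs a test run or two to confirm the growth rate, but even this rough figure tells you whether to wait at the keyboard or go and do something else.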

I wasn’t expecting to have my main machine back up in time to do any work on it today, because I’ve done this dance before, but I was hoping to have it ready for tomorrow. Now, I have to plan around not having it for tomorrow either (and, as it turns out, it won’t be back before the weekend). Worst case is that I will have to put enough time aside to do a complete rebuild. However, to rebuild it will take some serious time. There’s no point setting aside the rebuild as something that I devote my time or weekend to, because it doesn’t require that much attention and I can happily work around the major copies in hour-long blocks to get useful work done.

When you know how long something takes and you plan around that, even those long boring blocks of time become something that can be done in parallel, around the work that also must happen. I see a lot of students who sit around doing something that’s not actually work while they wait for computation or big software builds to finish. Hey, if you’ve got nothing else to do then feel free to do nothing or surf the web. The only problem is that very few of us ever have nothing else to do but, by realising that something that takes a long time will take a long time, we can use filler tasks to drag down the number of things that we still have left to do.

This is being challenged at the moment because the restoration is resolutely failing and, regrettably, I am now having to get actively involved because the ‘fix the backup’ regime requires me to try things, and then try other things, in order to get it working. The good news is I still have large blocks of time – the bad news is that I’m doing all of this on a secondary machine that doesn’t have the same screen real estate. (What a first world problem!)

What a fantastic opportunity to eat my own dog food. 🙂 Tonight, I’m sitting down to plan out how I can recover from this and be back up to date on Monday, with at least one fully working system and access to all of my files. I still need to allow for the occasional ‘try this on the backup’ and then wait several hours, but I need to make sure that this becomes a low-priority task that I schedule, rather than one that interrupts me and becomes a primary focus. We’ll see how well that goes.

Wall of Questions – Simple Student Involvement

Posted: November 4, 2012 Filed under: Education | Tags: collaboration, community, cs unplugged, csunplugged, curriculum, design, education, educational problem, Generation Why, higher education, in the student's head, learning, principles of design, reflection, resources, student perspective, teaching, teaching approaches, tools

Teaching an intensive mode class can be challenging. Talking to anyone for 6 hours in a row (however you try and break it up) requires you to try and maintain engagement with the students, but the students have to want to become and stay engaged! We’re humans so we’re always more interested in things when they are relevant to our interests – the question now becomes “How can I make students care about what I’m teaching because it is relevant to them?”

I’ve learned a lot from looking at the great work coming out of CS Unplugged, so I decided to take a low-tech approach to getting the students involved in the knowledge construction in the course.

On the Friday night of teaching, I gave my students a simple homework question: “What is your big question about networking?” This could be technical, social or crystal-ball gazing. The next morning, I handed out some large sticky notes in a variety of garish colours and asked them to write their questions on the notes and stick them on the board. This is what it looked like this morning (after about 6 hours of teaching).

The blue, orange and pink rectangles are questions. The ones on the left are yet to be answered. The ones on the right have been answered. (The green post-its are 2D bit parity as an audience participation magic trick.)

I’ve been answering these questions as fill-ins, where I have gaps, but a lot of them address issues that I was planning to cover anyway. The range is, however, far wider than I would have thought of but it’s given me a chance to address the applications and implications of networking, to directly answer questions that are of interest to the students.

Here are some (not verbatim) examples: What happened to the versions of the Internet Protocol that aren’t 4 or 6? What would happen if we had a human colony on Mars in terms of network implications? Was the IPv4 allocation ‘fair’ in terms of all countries? Could you run WiFi in the underground train network and, if so, what is the impact of the speed of the train? Will increased WiFi coverage give us cancer?

Every student has a question on the board and, now, every student is (at least to a slight degree) involved in the course. A lot of the questions that are left are security questions, and I’ll answer them as part of my security lectures this afternoon.

If you like this, and want to try it, then I am not claiming any originality for this but I can offer some suggestions:

- Give the students a little time to think about the question. It’s a good homework assignment.

- Get them to fill out the notes in class. As they finish their notes and pop them up to the board, it appears to encourage other people to finish their own notes to get them up. The notes are also shorter because the students want to get it done quickly.

- Once the notes are up, quickly review them to see how you can use them and where they fit into your teaching.

- When you can, group the notes by theme based on what you are teaching. I left them unordered for a while and I kept having to exhaustively search them, which is irritating.

- Be bold and prominent – the board is an eye-catcher and it clearly says “We have questions!” It’s also dynamic because I can easily rearrange it, move it or regroup the notes.

I’m still thinking about what to do with the notes next. I am planning to keep them but am unsure as to whether I want to ‘capture’ the answers, as this may have a knock-on effect for the next offering of this course.

What pleased me was the students who recognised their own question, because their faces lit up as I spoke to their concern. For a relatively low effort investment, that’s a great reward.

Could I have used an electronic forum? Yes, but then the focus isn’t in the classroom. The board, and your question, are in the classroom. You can go up and look at anyone else’s to see if it’s interesting. Rather than taking the application focus out of the classroom, we’re bringing in the realities and the answers as I go through the teaching.

Is there a risk that they’ll ask something I don’t know? No more than usual, and now I can sneak off and look it up before I answer, because it’s on the board. Being an honest man, I would of course have to say “I had to look this up” but I did warn them that this might happen. If a student can ask a question that has me scratching my head but I can develop an answer, I think that’s a very valuable example and it’s probably a nice moment for the student too.

I’ll certainly be doing this again!

Road to Intensive Teaching: Post 1

Posted: November 2, 2012 Filed under: Education | Tags: advocacy, authenticity, collaboration, community, curriculum, data visualisation, design, education, educational problem, educational research, Generation Why, grand challenge, higher education, in the student's head, learning, networking, principles of design, reflection, resources, student perspective, teaching, teaching approaches, thinking, tools

I’m back on the road for intensive teaching mode again and, as always, the challenge lies in delivering 16 hours of content in a way that will stick and that will allow the students to develop and apply their understanding of the core knowledge. Make no mistake, these are keen students who have committed to being here, but it’s both warm and humid where I am and, after a long weekend of working, we’re all going to be a bit punch-drunk by Sunday.

That’s why there is going to be a heap of collaborative working, questioning, voting, discussion. That’s why there are going to be collaborative discussions of connecting machines and security. Computer Networking is a strange beast at the best of times because it’s often presented as a set of competing models and protocols, with very few actual axioms beyond “never early adopt anything because of a vendor promise” and “the only way to merge two standards is by developing another standard. Now you have three standards.”

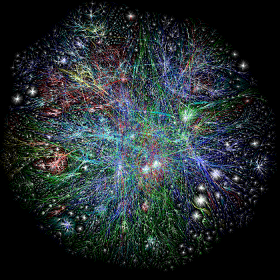

There is a lot of serious Computer Science lurking in networking. Algorithmic efficiency is regularly considered in things like routing convergence and the nature of distributed routing protocols. Proofs of correctness abound (or at least are known about) in a variety of protocols that, every day, keep the Internet humming despite all of the dumb things that humans do. It’s good that it keeps going because the Internet is important. You, as a connected being, are probably smarter than you, disconnected. A great reach for your connectivity is almost always a good thing. (Nyancat and hate groups notwithstanding. Libraries have always contained strange and unpleasant things.)

“If I have seen further, it is by standing on the shoulders of giants” (Newton, quoting Bernard of Chartres) – the Internet brings the giants to you at a speed and a range that dwarfs anything we have achieved previously in terms of knowledge sharing. It’s not just about the connections, of course, because we are also interested in how we connect, to whom we connect and who can read what we’re sharing.

There’s a vast amount of effort going into making the networks more secure and, before you think “Great, encrypted cat pictures”, let me reassure you that every single thing that comes out of your computer could, right now, be secretly and invisibly rerouted to a malicious third party and you would never, ever know unless you were keeping a really close eye (including historical records) on your connection latency. I have colleagues who are striving to make sure that we have security protocols that will make it harder for any country to accidentally divert all of the world’s traffic through itself. That will stop one typing error on a line somewhere from bringing down the US network.

“The network” is amazing. It’s empowering. It is changing the way that people think and live, mostly for the better in my opinion. It is harder to ignore the rest of the world or the people who are not like you, when you can see them, talk to them and hear their stories all day, every day. The Internet is a small but exploding universe of the products of people and, increasingly, the products of the products of people.

Computer Networking is really, really important for us in the 21st Century. Regrettably, the basics can be a bit dull, which is why I’m looking to restructure this course to look at interesting problems, which drives the need for comprehensive solutions. In the classroom, we talk about protocols and can experiment with them, but even when we have full labs to practise this, we don’t see the cosmos above, we see the reality below.

Nobody is interested in the compaction issues of mud until they need to build a bridge or a road. That’s actually very sensible because we can’t know everything – even Sherlock Holmes had his blind spots because he had to focus on what he considered to be important. If I give the students good reasons, a grand framing, a grand challenge if you will, then all of the clicking, prodding, thinking and protocol examination suddenly has a purpose. If I get it really right, then I’ll have difficulty getting them out of the classroom on Sunday afternoon.

Fingers crossed!

(Who am I kidding? My fingers have an in-built crossover!)

A Late Post On Deadlines, Amusingly Enough

Posted: November 1, 2012 Filed under: Education | Tags: advocacy, authenticity, blogging, community, curriculum, education, educational problem, educational research, Generation Why, higher education, in the student's head, learning, measurement, principles of design, reflection, student perspective, teaching, teaching approaches, time banking, tools, universal principles of design, work/life balance, workload

I’m still under a big cloud at the moment but I’m teaching in Singapore on the weekend, so I’m typing this at the airport. All of my careful plans to have items in the queue have been undermined by a protracted spell of illness (to be precise, I’m working at about half speed due to migraine or migraine-level painkillers). I have very good parts of the day where I teach and carry out all of the face-to-face things I need to do, but it drains me terribly and leaves me with no ‘extra’ time and it was the extra time I was using to do this. I’m confident that I will teach well over this weekend, I wouldn’t be going otherwise, but it will be a blur in the hotel room outside of those teaching hours.

This brings me back to the subject of deadlines. I’ve now been talking about my time banking and elastic time management ideas to a lot of people and I’ve got quite polished in my responses to the same set of questions. Let me distill them for you, as they have relevance to where I am at the moment:

- Not all deadlines can be made flexible.

I completely agree. We have to grant degrees, finalise resource allocations and so on. Banking time is about teaching time management and the deadline is the obvious focal point, but some deadlines cannot be missed. This leads me to…

- We have deadlines in industry that are fixed! Immutable! Miss it and you miss out! Why should I grant students flexible deadlines?

Because not all of your deadlines are immutable, in the same way that not all are flexible. The serious high-level government grants? The once in a lifetime opportunities to sell product X to company YYPL? Yes, they’re fixed. But to meet these fixed deadlines, we move those other deadlines that we can. We shift off other things. We work weekends. We stay up late. We delay reading something. When we learn how to manage our deadlines so that we can make time for those that are both important and immovable, we do so by managing our resources to shift other deadlines around.

Elastic time management recognises that life is full of management decisions, not mindless compliance. Pretending that some tiny assignment of pre-packaged questions we’ve been using for 10 years is the most important thing in an 18 year old’s life is not really very honest. But we do know that the students will do things if they are important and we provide enough information that they realise this!

I have had to shift a lot of deadlines to make sure that I am ready to teach for this weekend. On top of that I’ve been writing a paper that is due on the 17th of November, as well as working on many other things. How did I manage this? I quickly looked across my existing resources (and remember I’m at half-speed, so I’ve had to schedule half my usual load) and broke things down into: things that had to happen before this teaching trip, and things that could happen after. I then looked at the first list and did some serious re-arrangement. Let’s look at some of these individually.

Blog posts, which are usually prepared 1-2 days in advance, are now written on the day. My commitment to my blog is important. I think it is valuable but, and this is key, no-one else depends upon it. The blog is now allocated after everything else, which is why I had my lunch before writing this. I will still meet my requirement to post every day but it may show up some hours after my usual slot.

I haven’t been sleeping enough, which is one of the reasons that I’m in such a bad way at the moment. All of my deadlines now have to work around me getting into bed by 10pm and not getting out before 6:15am. I cannot lose any more efficiency so I have to commit serious time to rest. I have also built in some sitting around time to make sure that I’m getting some mental relaxation.

I’ve cut down my meeting allocations to 30 minutes, where possible, and combined them where I can. I’ve said ‘no’ to some meetings to allow me time to do the important ones.

I’ve pushed off certain organisational problems by doing a small amount now and then handing them to someone to look after while I’m in Singapore. I’ve sketched out key plans that I need to look at and started discussions that will carry on over the next few days but show progress is being made.

I’ve printed out some key reading for plane trips, hotel sitting and the waiting time in airports.

Finally, I’ve allocated a lot of time to get ready for teaching and I have an entire day of focus, testing and preparation on top of all of the other preparation I’ve done.

What has happened to all of the deadlines in my life? Those that couldn’t be moved, or shouldn’t be moved, have stayed where they are and the rest have all been shifted around, with the active involvement of other participants, to allow me room to do this. That is what happens in the world. Very few people have a world that is all fixed deadline and, if they do, it’s often at the expense of the invisible deadlines in their family space and real life.

I did not learn how to do this by somebody insisting that everything was equally important and that all of their work requirements trumped my life. I am learning to manage my time maturely by thinking about my time as a whole, by thinking about all of my commitments and then working out how to do it all, and to do it well. I think it’s fair to say that I learned nothing about time management from the way that my assignments were given to me but I did learn a great deal from people who talked to me about their processes, how they managed it all and through an acceptance of this as a complex problem that can be dealt with, with practice and thought.

I am a potato – heading towards caramelisation. (Programming Language Threshold Concepts Part II)

Posted: October 28, 2012 Filed under: Education | Tags: curriculum, design, education, educational problem, educational research, feedback, Generation Why, higher education, in the student's head, learning, measurement, principles of design, reflection, resources, student perspective, teaching, teaching approaches, thinking, threshold concepts, tools

Following up on yesterday’s discussion of some of the chapters in “Threshold Concepts Within the Disciplines”, I finished by talking about Flanagan and Smith’s thoughts on the linguistic issues in learning computer programming. This led me to the theory of markedness, a useful way to think about some of the syntactic structures that we see in computer programs.

Let me introduce the concept of markedness with an example. Consider the pair of opposing concepts big/small. If you ask how ‘big’ something is, then you’re not actually assuming that the thing you’re asking about is ‘big’, you’re asking about its size. However, ask someone how ‘small’ something is and there’s a presumption that it’s actually small (most of the time). The same thing happens for old/young. Asking someone how old they are, bad jokes aside, is not implying that they are old – the word “old” here is standing in for the concept of age.

This is an example of markedness in the relationship between lexical opposites: the assumed meaning (the default) is referred to as the unmarked form, where the marked form is more restrictive (in that it doesn’t subsume both concepts) and it is generally not the default. You see this in gender and plural forms too. In Lions/Lionesses, Lions is an unmarked form because it’s the default and it doesn’t exclude the Lionesses, whereas Lionesses would not be the general form used (for whatever reasons, good or bad) and excludes the male lions.

Why is this important for programming languages? Because we often have syntactic elements (the structures and the tokens that we type) that take the form of opposing concepts where one is the default, and hence unmarked, form. Many modern languages employ object-oriented programming practices (itself a threshold concept) that allow programmers to specify how the data that they define inside their programs is going to be used, even within that program. These practices include the ability to set access controls, that strictly define how you can use your code, how other pieces of code that you write can use your code, and how other people’s code can use it, as well. The fundamental access control pairs are public and private, one of which says anyone can use this piece of code to calculate things or can change this value, the other restricts such use or change to the owner. In the Java programming language, public dominates, by far, and can be considered unmarked. Private, however, changes the way that you can work with your own code and it’s easy for students to get this wrong. (To make it more confusing, there is another type of access control that sits effectively between public and private, which is an even more cognitively complex concept and is probably the least well understood of the lot!) One of the issues with any programming language is that deviating from the default requires you to understand what you are doing because you are having to type more, think more and understand more of the implications of your actions.
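To illustrate the public/private pair, here is a minimal Java sketch. The classes AccessDemo and BankAccount and their members are hypothetical names invented for this example. The private field can only be touched by code inside BankAccount, while the public methods can be called from anywhere.

```java
final class AccessDemo {
    public static void main(String[] args) {
        BankAccount account = new BankAccount();
        account.deposit(50);
        account.deposit(25);
        System.out.println(account.getBalance()); // prints 75

        // The next line would not compile, because balance is private
        // and we are outside the BankAccount class:
        // account.balance = 1_000_000;
    }
}

class BankAccount {
    // private: only code inside BankAccount can read or change this field.
    private int balance = 0;

    // public: any code can call this method, but only on the owner's terms.
    public void deposit(int amount) {
        if (amount <= 0) {
            throw new IllegalArgumentException("amount must be positive");
        }
        balance += amount;
    }

    public int getBalance() {
        return balance;
    }
}
```

The student who types private has to hold two views of the same class in their head at once: the one from inside, where balance is an ordinary variable, and the one from outside, where it does not appear to exist.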

However, it gets harder, because we sometimes have marked/unmarked pairs where the unmarked element is completely invisible. If we didn’t have the need to describe how people could use our code then we wouldn’t need the access modifiers – the absence of public, private or protected wouldn’t signify anything. There are some implicit modes of operation in programming languages that can be overridden with keywords, but the introduction of these keywords doesn’t illustrate a positive/negative asymmetry (as with big/small or private/public); it illustrates an asymmetry between “something” and “nothing”. Now, the presence of a specific and marked keyword makes it glaringly obvious that there has been an invisible assumption sitting in that spot the whole time.

One of these troublesome word/nothing pairs is found in several languages and consists of the keyword static, with no matching keyword. What do you think the opposite (and pair) of static is? If you’re like most humans, you’d think dynamic. However, not only is this not what this keyword actually means but there is no dynamic keyword that balances it. Let’s look at this in Java:

public static void main(String [] args) {...}

public static int numberOfObjects(int theFirst) {...}

public int getValues() {...}

You’ll see that static keyword twice. Where static isn’t used, however, there’s nothing at all, and this (by its absence) also has a definite meaning and this defines what the default expectation is of behaviour in the Java programming language. From a teaching perspective, this means that we now have a default context, with a separation between those tokens and concepts that are marked and unmarked, and it becomes easier to see why students will struggle with instance methods and fields (which is what we call things without static) if we start with static, and struggle with the concept of static if we start the other way around! What further complicates this is that every single program we write must contain at least one static method, because it is the starting point for the program’s execution. Even if you don’t want to talk about static yet, you must use it anyway (unless you want to provide the students with some skeleton code or a harness that removes this – but now we’ve put the wizard behind the curtain even more).
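A small, self-contained sketch may help to show the something/nothing asymmetry in action. The class ObjectCounter and its members are hypothetical, written for this illustration rather than taken from the signatures above. The static members belong to the class as a whole; the members with no keyword belong to each individual object.

```java
final class ObjectCounter {
    // static: a single copy shared by the whole class.
    private static int created = 0;

    // no keyword: every object gets its own copy (an instance field).
    private final int value;

    ObjectCounter(int value) {
        this.value = value;
        created++; // every new object updates the one shared counter
    }

    // static method: called on the class itself, no object required.
    public static int numberOfObjects() {
        return created;
    }

    // instance method: called on one particular object.
    public int getValue() {
        return value;
    }

    // The program's starting point has to be static, because no
    // objects exist yet when execution begins.
    public static void main(String[] args) {
        ObjectCounter first = new ObjectCounter(3);
        ObjectCounter second = new ObjectCounter(7);

        System.out.println(ObjectCounter.numberOfObjects()); // prints 2
        System.out.println(first.getValue());                // prints 3
        System.out.println(second.getValue());               // prints 7
    }
}
```

Note that the question "how many objects are there?" is asked of the class, while "what is your value?" is asked of an object. Nothing on the page marks the second kind of method as different; only the absence of static does.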

One other point I found very interesting in Flanagan and Smith’s chapter was the discussion of barriers and traps in programming languages, from Thimbleby’s critique of Java (1999). Barriers are the limitations on expressiveness that mean that what you want to say in a programming language can only be said in a certain way or in a certain place – which limits how we can explain the language and therefore affects learnability. As students tend to write their lines of code as and when they think of them, at least initially, these barriers will lead the students to make errors because they haven’t developed the locally valid computational idiom. I could ask for food in German as “please two pieces ham thick tasty” and, while I’ll get some looks, I’ll also get ham. Students hitting a barrier get confusing error messages that are given back to them at a time when they barely have enough framework to understand what these messages mean, let alone how to fix them. No ham for them!

Traps are unknown and unexpected problems, such as those caused by not using the right way to compare two things in a program. In short, it is possible in many programming languages to ask “does this equal that” and return an answer of true or false that does not depend upon the values of this or that, but where they are being stored in memory. This is a trap. It is confusing for the novice to try to work out why the program is telling her that two containers that have the value “3” in them are not the same because they are duplicates rather than aliases for the same entity. These traps can seriously trip someone up as they attempt to form a correct mental model and, in the worst case, can lead to magical or cargo-cult thinking once again. (This is not helped by languages that, despite saying that they will take such-and-such an action, take actions that further undermine consistent mental models without being obvious about it. Sekrit Java String munging, I’m looking at you.)
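The comparison trap can be reproduced in a few lines of Java. This is a minimal sketch with a hypothetical class name (EqualityTrap) and hypothetical helper methods, not an example from the chapter. The == operator on objects asks "is this the same object in memory?", while equals asks "do these hold the same value?".

```java
final class EqualityTrap {
    // Identity: are these two references aliases for one object?
    static boolean sameObject(Object left, Object right) {
        return left == right;
    }

    // Equality: do the two objects hold the same value?
    static boolean sameValue(Object left, Object right) {
        return left.equals(right);
    }

    public static void main(String[] args) {
        String a = new String("3");
        String b = new String("3"); // a duplicate of a, not an alias
        String alias = a;           // a second name for the same object

        System.out.println(sameObject(a, b));     // false: two separate objects
        System.out.println(sameValue(a, b));      // true: both contain "3"
        System.out.println(sameObject(a, alias)); // true: one object, two names
    }
}
```

Java also shares identical string literals, so comparing two literals with == can come out true, which makes the trap harder to spot: the novice's "wrong" comparison works in the small test and fails in the real program.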

This way of thinking about languages is of great interest to me because, instead of talking about usability in an abstract sense, we are now discussing concrete benefits and deficiencies in the language. Is it heavily restrictive on what goes where, such as Pascal’s pre-declaration of variables or Java’s package import restrictions? Does the language have a large number of unbalanced marked/unmarked pairs where one of them is invisible and possibly counterintuitive, such as static? Is it easy to turn a simple English statement into a programmatic equivalent that does not do what was expected?

The authors suggested ways of dealing with this, including teaching students about formal grammars for programming languages – effectively treating this as learning a new language because the grammar, syntax and semantics are very, very different from English. (Suggestions included Wittgenstein’s Sprachspiel, language game, which will be a post for another time.) Another approach is to start from logic and then work forwards, turning this into forms that will then match the programming languages and giving us a Rosetta stone between English speakers and program speakers.

I have found the whole book very interesting so far and, obviously, so too this chapter. Identifying the problems and their locations, regrettably, is only the starting point. Now I have to think about ways to overcome this, building on what these and other authors have already written.

Imagine that you are a raw potato…

Posted: October 27, 2012 Filed under: Education | Tags: community, design, education, educational research, feedback, Generation Why, higher education, in the student's head, principles of design, resources, student perspective, teaching, teaching approaches, thinking, threshold concepts, tools

The words in the title of this post, surprisingly, are the first words in the Editors’ Preface to Land, Meyer and Smith’s 2008 edited book “Threshold Concepts within the Disciplines”. Our group has been looking at the penetration of certain ideas through the discipline, examining how much the theory of social constructivism accompanies the practice of group work for example, or, as in this case, seeing how many people identify threshold concepts in what they are trying to teach. Everyone who teaches first year Computer Science knows that some ideas seem to be sticking points and Meyer and Land’s two papers on “Threshold Concepts and Troublesome Knowledge” (2003 and 2005) provide a way of describing these sticking points by characterising why these particular aspects are hard – but also by identifying the benefits when someone actually gets it.

Threshold concept theory, in the words of Cousin, identifies “the kind of complicated learner transitions learners undergo” and identifies portals that change the way that you think about a given discipline. This is deeply related to our goal of “Thinking as a discipline practitioner” because we must assume that a sound practitioner has passed through these portals and has transformed the way that they think in order to be able to practise correctly. Put simply, being a mathematician is more than plugging numbers into formulae.

As you can read, and I’ve mentioned in a previous post, threshold concepts are transformative, integrative, irreversible and (unfortunately) troublesome. Once you have passed through the hurdle then a new vista opens up before you but, my goodness, sometimes that’s a steep hurdle and, unsurprisingly, this is where many students fall.

The potato example in the preface describes the irreversible chemical process of cooking and how the way that we can use the potato changes at each stage. Potatoes, thankfully unaware, have no idea of what is going on nor can they oscillate on their pathway to transformation. Students, especially in the presence of the challenging, can and do oscillate on their transformational road. Anyone who teaches has seen this where we make great strides on one day and, the next, some of the progress ebbs away because a student has fallen back to a previous way of thinking. However, once we have really got the new concept to stick, then we can move forward on the basis of the new knowledge.

Threshold concepts can also be thought of as marking the boundary of areas within a discipline and, in this regard, have special interest to teachers and learners alike. Being able to subdivide knowledge into smaller sections to develop mastery that then allows further development makes the learning process easier to stage and scaffold. However, the looming and alien nature of the portal between sections introduces a range of problems that will apply to many of our students, so we have to be ready to assist at these key points.

The book then provides a collection of chapters that discuss how these threshold concepts manifest inside different disciplines and in what forms the alien and troublesome nature can appear. It’s unsurprising again, for anyone teaching Computer Science or programming, that there are a large number of fundamental concepts in programming that are considered threshold concepts. These include the notion of program state, the collection of data that describes the information within a program. While state is an everyday concept (the light is on, the lift is on level 4), the concentration on state, the limitations and implications of manipulation and the new context raise this banal, everyday concept into the threshold area. A large number of students can happily tell you which floor the lift is on, but cannot associate this physical state with the corresponding programmatic state in their own code.
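To make the lift example concrete, here is a minimal sketch in C of how that physical state might be written down as program state. Everything in it is an assumption made for illustration: the names `Lift`, `lift_new`, `lift_move_to` and `TOP_FLOOR` are hypothetical, as is the rule that the light comes on when the lift is used. None of it comes from the book.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>

/* Hypothetical building: floors are numbered 0..TOP_FLOOR. */
#define TOP_FLOOR 10

/* The program state: everything the program knows about the lift. */
typedef struct {
    int  level;     /* which floor the lift is on */
    bool light_on;  /* whether the cabin light is on */
} Lift;

/* Create a lift in a known starting state: ground floor, light off. */
Lift lift_new(void) {
    Lift lift = { 0, false };
    return lift;
}

/* Ask the lift to move. Returns true and updates the state if the
   requested level exists; otherwise returns false and changes nothing. */
bool lift_move_to(Lift *lift, int level) {
    assert(lift != NULL);
    if (level < 0 || level > TOP_FLOOR) {
        return false;        /* invalid request: state is left untouched */
    }
    lift->level = level;
    lift->light_on = true;   /* assumption: the light comes on when the lift is used */
    return true;
}
```

After `lift_move_to(&lift, 4)`, asking "which floor is the lift on?" is the same as reading `lift.level`. That step from the everyday question to the variable is where the difficulty described above tends to sit.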

Until students master some of these concepts, their questions will always appear facile, potentially ill-formed and (regrettably) may be interpreted as lazy. Flanagan and Smith raise an interesting point in that programming languages, which are written in pseudo-English with a precise but alien grammar, may be leading to a linguistic problem, where the translation to a comprehensible form is one of the first threshold concepts that a student faces. As an example, consider this simple English set of instructions:

There are 10 apples in the basket. Take each apple out of the basket, polish it, and place it in the sink.

Now let’s look at what the ‘take each apple’ instruction looks like in the C programming language.

for (int i = 0; i < numberOfApples; i++) {
    // commands here
}

This is second nature to me to read but a number of you have just looked at that and gone ‘huh’? If you don’t learn what each piece does, understand its importance and become able to produce it when asked, then the risk is that you will just reproduce this template whenever I ask you to count apples. However, there are two situations that humans understand readily: “do something so many times” and “do something UNTIL something happens”. In programs we write these two cases differently – but it’s a linguistic distinction that, from Flanagan and Smith’s work “From Playing to Understanding”, correlates quite well with an ability to pick the more appropriate way of writing the program. If the language itself is the threshold, and for some students it certainly appears that it is, then we are not even able to assume that the students will reach the first stage of ‘local thresholds’ found within the subdomain itself: they are stuck on the outside reading a menu in a foreign language trying to work out if it says “this way to the toilet”.
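The two situations can be sketched side by side in C. This is only an illustration under stated assumptions: the function names and the idea of a sink with a fixed capacity are invented here, and the "polishing" is reduced to counting so that the loops stay visible.

```c
#include <assert.h>

/* "Do something so many times": polish every apple in the basket.
   Returns how many apples were polished. */
int polish_all(int numberOfApples) {
    assert(numberOfApples >= 0);
    int polished = 0;
    for (int i = 0; i < numberOfApples; i++) {
        polished++;  /* stand-in for "polish it and place it in the sink" */
    }
    return polished;
}

/* "Do something UNTIL something happens": keep moving apples until the
   sink is full or the basket is empty. sinkCapacity is a hypothetical
   limit added for this example. Returns how many apples are in the sink. */
int polish_until_sink_full(int numberOfApples, int sinkCapacity) {
    assert(numberOfApples >= 0 && sinkCapacity >= 0);
    int inSink = 0;
    /* C has no "until", so the loop runs WHILE the stopping event has not happened. */
    while (numberOfApples > 0 && inSink < sinkCapacity) {
        numberOfApples--;
        inSink++;
    }
    return inSink;
}
```

With ten apples and room for four, `polish_until_sink_full(10, 4)` stops at four, while `polish_all(10)` always handles all ten. Note that the English "until" has to be turned inside out into a "while not yet" condition, which is a small instance of the translation problem described above.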

Such linguistic thresholds will make students appear very, very slow and this is a problem. If you ask a student a question and the words make no sense in the way that you’re presenting them, then they will either not respond (if they have a choice) as they don’t know what you asked, they will answer a different question (by taking a stab at the meaning) or they will ask you what you mean. If someone asks you what you mean when, to you, the problem is very simple, we run the risk of throwing up a barrier between teacher and learner, the teacher assuming that the learner is stupid or lazy, the student assuming that the teacher either doesn’t know what they’re saying or doesn’t care about them.

I’ll write more on the implications of all of this tomorrow.

A Difficult Argument: Can We Accept “Academic Freedom” In Defence of Poor Teaching?

Posted: October 26, 2012 Filed under: Education | Tags: advocacy, authenticity, community, curriculum, education, educational problem, educational research, ethics, feedback, Generation Why, higher education, measurement, principles of design, reflection, student perspective, teaching, teaching approaches, thinking, tools, vygotsky

Let me frame this very carefully, because I realise that I am on very, very volatile ground with any discussion that raises the spectre of a right or a wrong way of teaching. The educational literature is equally careful about this and, very sensibly, you read about rates of transfer, load issues, qualitative aspects and quantitative outcomes, without any hard and fast statements such as “You must never lecture again!” or “You must use formative assessment or bees will consume your people!”

I am aware, however, that we are seeing a split between those people who accept that educational research has something to tell them, which may possibly override personal experience or industry requirement, and those who don’t. But, and let me tread very carefully indeed, while those of us who accept that the traditional lecture is not always the right approach realise that the odd lecture (or even entire course of lectures) won’t hurt our students, there is a far more damaging and fundamental disagreement.

Does education transform in the majority of cases or are most students ‘set’ by the time that they come to us?

This is a key question because it affects how we deal with our students. If there are ‘good’ and ‘bad’ students, ‘smart’ and ‘dumb’ or ‘hardworking’ and ‘lazy’, and this is something that is an immutable characteristic, then a lot of what we are doing in order to engage students, to assist them in constructing knowledge and to place them into collaborative environments, is a waste of their time. They will either get it (if they’re smart and hardworking) or they won’t. Putting a brick next to a bee doesn’t double your honey-making capacity or your ability to build houses. Except, of course, that students are not bees or bricks. In fact, there appears to be a vast amount of evidence that says that such collaborative activities, if set up correctly in accordance with the established work in social constructivism and cognitive apprenticeship, will actually have the desired effect and you will see positive transformations in students who take part.

However, there are still many activities and teachers who continue to treat students as if they are always going to be bricks or bees. Why does this matter? Let me digress for a moment.

I don’t care if vampires, werewolves or zombies actually exist or not and, for the majority of my life, it is unlikely to make any difference to me. However, if someone else is convinced that she is a vampire and she attacks me and drains my blood, I am just as dead as if she really were a vampire – of course, I now will not rise from the dead but this is of little import to me. What matters is the impact upon me because of someone else’s practice of their beliefs.

If someone strongly believes that students are either ‘smart enough’ to take their courses or not, does not care who fails or how many, and holds that it is purely the role of the student to have or to spontaneously develop this characteristic, then their influence will likely be strong enough to have a negative impact on at least some students. We know about stereotype threat. We’re aware of inherent bias. In this case, we’re no longer talking about right or wrong teaching (thank goodness), we’re talking about a fundamentally self-fulfilling prophecy as a teaching philosophy. This will have as great an impact on those who fail or withdraw as the transformation pathway does on those who become better students and develop.

It is, I believe, almost never about the bright light of our most stellar successes. Perhaps we should always be held to answer for (or at least explain) the number and nature of those who fall away. I have been looking for statements of student rights across Australia and the Higher Education sites all seem to talk about ‘fair assessment’ and ‘right of appeal’, as well as all of the student responsibilities. The ACARA (Australian Curriculum, Assessment and Reporting Authority) website talks a lot about opportunities and student needs in schools. What I haven’t yet found is something that I would like to see, along these lines:

“Education is transformational. Students are entitled to be assessed on their own performance, in the context of their opportunities.”

Curve grading, which I’ve discussed before, immediately forces a false division of students into good and bad, merely by ‘better’ students existing. It is hard to think of something that is fundamentally less fair or appropriate to the task if we accept that our goal is improvement to a higher standard, regardless of where people start. In a curve graded system, the ‘best’ person can coast because all they have to do is stay one step ahead of their competition and natural alignment and inflation will do the rest. This is not the motivational framework that we wish to establish, especially when the lowest realise that all is lost.

I am a long distance runner and my performances will never set the world on fire. To come first in a race, I would have to be in a small race with very unfit people. But no-one can take away my actual times for my marathons and it is those times that have been used to allow me to enter other events. You’ll note that in the Olympics, too. Qualifying times are what are used because relative performance does not actually establish any set level of quality. The final race? Yes, we’ve established competitiveness and ranking becomes more important – but then again, entering the final heat of an Olympic race is an Olympian achievement. Let’s not quibble on this, because this is the equivalent of Nobel and Turing awards.

And here is the problem again. If I believe that education is transformative and set up all of my classes with collaborative work, intrinsic motivation and activities to develop self-regulation, then that’s great but what if it’s in third-year? If the ‘students were too dumb to get it’ people stand between me and my students for the first two years then I will have lost a great number of possibly good students by this stage – not to mention the fact that the ones who get through may need some serious de-programming.

Is it an acceptable excuse that another academic should be free to do what they want, if what they want to do is having an excluding and detrimental effect on students? Can we accept that if it means that we have to swallow that philosophy? If I do, does it make me complicit? I would like nothing more than to let people do what they want (hey, I like that as much as the next person) but, in thinking about the effect of some decisions being made, is the notion of personal freedom in what is ultimately a public service role still a sufficiently good argument for not changing practice?

Heading to SIGCSE!

Posted: October 25, 2012 Filed under: Education | Tags: authenticity, blogging, community, education, educational research, higher education, reflection, resources, sigcse, teaching approaches, time banking, tools, universal principles of design, workload

I’m pretty snowed under for the rest of the week and, while I dig myself out of a giant pile of papers on teaching first year programmers (apparently it’s harder than throwing Cay’s book at them and yelling “LEARN!”), I thought I’d talk about some of the things that are going on in our Computer Science Education Research Group. The first thing to mention is, of course, the group is still pretty new – it’s not quite “new car smell” territory but we are certainly still finding out exactly which direction we’re going to take and, while that’s exciting, it also makes for bitten fingernails at paper acceptance notification time.

We submitted a number of papers to SIGCSE and a special session on Contributing Student Pedagogy and collaboration, following up on our multi-year study on this and our Computer Science Education paper. One of the papers and the special session have been accepted, which is fantastic news for the group. Two other papers weren’t accepted. While one was a slightly unfortunate near-miss (but very well done, lead author who shall remain nameless [LAWSRN]), the other was a crowd splitter. The feedback on both was excellent and it’s given me a lot to think about, as I was lead on the paper that really didn’t meet the bar. As always, it’s a juggling act to work out what to put into a paper in order to support the argument to someone outside the group and, in hindsight quite rightly, the reviewers thought that I’d missed the mark and needed to try a different tack. However, with one exception, the reviewers thought that there was something there worth pursuing and that is, really, such an important piece of knowledge that it justifies the price of admission.

Yes, I’d have preferred to have got it right first time but the argument is crucial here and I know that I’m proposing something that is a little unorthodox. The messenger has to be able to deliver the message. Marathons are not about messengers who run three steps and drop dead before they do anything useful!

The acceptances are great news for the group and will help to shape what we do for the next 12-18 months. We also now have some papers that, with some improvement, can be sent to another appropriate conference. I always tell my students that academic writing is almost never wasted because if it’s not used here, or published there, the least that you can learn is not to write like that or not about that topic. Usually, however, rewriting and reevaluation makes work stronger and more likely to find a place where you can share it with the world.

We’re already planning follow-up studies in November on some of the work that will be published at SIGCSE and the nature of our investigations is to try and turn our findings into practically applicable steps that any teacher can take to improve participation and knowledge transfer. These are just some of the useful ideas that we hope to have ready for March but, as always, we’ll see how much we get done. We’re coming up to the busy end of semester with final marking, exams and all of that, as well as the descent into admin madness as we lose the excuse of “hey, I’d love to do that but I’m teaching.” I have to make sure that I wrestle enough research time into my calendar to pursue some of the exciting work that we have planned.

I look forward to seeing some of you in Colorado in March to talk about how it went!

Recursive Tutorial: A tutorial on writing a tutorial

Posted: October 24, 2012 Filed under: Education | Tags: authenticity, community, curriculum, data visualisation, education, educational research, Generation Why, grand challenge, higher education, in the student's head, learning, principles of design, reflection, student perspective, teaching, teaching approaches, thinking, tools

I assigned the Grand Challenge students a slightly strange problem for yesterday’s tutorial: “How would you write an R tutorial for Year 11 High School Students?” R is an open source statistics package that is incredibly powerful and versatile but it is nowhere near as friendly to use or accessible as traditional GUI tools such as Microsoft Excel. R has some menus and buttons on it but most of these are used to control the environment, rather than applying the statistical and mathematical functions. R Studio is an associated Integrated Development Environment (IDE) that makes working with R easier but, at its core, R relies upon you knowing enough R to type the right commands.

Discussing this with students, we compared Excel and R to find out what the core differences were and some of them are not important early on but become more important later. Excel, for example, allows you to quickly paste and move around data, apply some functions, draw some graphs and come to a result quickly, mostly by pushing buttons and using on-line help with a little typing. But, and it’s an important but, unless you write a program in Excel (and not that many people do), re-applying all of that manipulation to a new data source requires you to click and push and move across the screen all over again. You have to recreate a long and complicated combination of mechanical and cognitive functions. R, by contrast, requires you to type commands to get things to happen but it remembers them by default and you can easily extract them. Because of how R works, you drag in data (from a file, say) and then execute a set of manipulation steps. If you’re familiar with R then this is straight-forward. If not, then steep learning curve. However, re-using these instructions and manipulations on a new data source is trivial. You change the file and re-run all of the steps.

Why am I talking about new data sources? Because it’s often the case that you want to do the same thing with new data OR you realise that the data you were working with was incomplete or in error. Unless you write a lot of Visual Basic in Excel (and that no longer works on Macs so it’s not a transferable option), your Excel spreadsheet with changed data requires you to potentially reapply or check the application of everything in the spreadsheet, especially if there is any sorting of data, creation of new columns or summary data – and let’s not even start talking about pivot tables! But, for a single run, for finance, for counting stuff, Excel is almost always going to be easier to teach people to use than R. For scientists, however, R is better to use for two very important reasons: it is less likely to do something that is irreversible to your data and the vast majority of its default choices are sensible.

The students came up with a list of things that Excel does (good and bad): it’s strongly visual, lay-user friendly, tells you what you can do, does what it damn well wants to, data changes may require manual reapplication. There’s a corresponding list for R: steep learning curve, visual display for R environment but command-line interface for commands, does what you tell it to do (except when it’s too smart). I surveyed the class to find out who was using R rather than Excel and the majority of students were using R for their analysis but, and again it’s an important but, only because they had to. In situations where Excel was enough (simple manipulation, straightforward analysis), Excel got used because Excel is far easier to use and far friendlier.

The big question for the students was “How do I start doing something?” In Excel, you type numbers into the spreadsheet and then can just start selecting things using a relatively good on-line help system. In R you are faced with a blinking prompt and you have to know enough to type streams of commands like this:

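# Read the data; with header=FALSE the columns are named V1, V2, ...
newtab <- read.csv("~/days.txt", header=FALSE)
# Plot each value against its row number
plot(seq(1, nrow(newtab)), newtab$V1)
# Summarise the distribution as a box plot
boxplot(newtab)
# Add a horizontal reference line at 1500
abline(a=1500, b=0)
# Mean of the first column (mean() needs a numeric vector, not the whole data frame)
mean(newtab$V1)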

And, with a whole set of other commands, you can get graphs like this. (I realise that this is not a box plot!)

Once you’re used to it, this is meaningful, powerful and re-applicable. I can update the data and re-run this to my heart’s content, analysing vast quantities of data without having to keep mouse clicking into cells. But let’s remember our context. I’m not talking about higher education students, I’m talking about school students and it’s important to remember that teaching people something before they’re ready to use it or before they have an opportunity to use it is potentially not the best use of effort.

My students pointed out that the school students of today are all learning how to use graphing calculators, with giant user manuals, and (in some cases) the students switch on their calculators to see a menu rather than the traditional calculator single line. But the syntax and input modes for calculators vary widely. Some use ( ) for operations like sin, so a student will see sin(30) when they start doing trig, whereas some don’t. This means that some of the students I might want to teach R to have not necessarily got their head around the fact that functions exist, except as something that Excel requires them to do. Let’s go to the why here, because it’s important. Why are students learning how to use these graphing calculators? So they can pass their exams, where the competent and efficient use of these things will help them. Yes, it appears that students may be carrying out the kind of operations I would like them to put into a more powerful tool, but why should they?

If I teach a high school student about Excel then there are many places that they might use this kind of software: micro-budgeting, keeping track of things, the ‘simple’ approximation of a database storing books or things like that. However, the general practice of using Excel is familiarisation with a GUI that is very, very common and that most students need experience with. If I teach them R then I might be extending their knowledge but (a) the majority are probably not yet ready for it and (b) they are highly unlikely to need to use it for anything in the near future.

The conclusion that my students reached was that, if we really wanted to provide exposure to an industry-like scientific or engineering tool at the earlier stage, then why not use one that was friendlier, more helpful but still had a more scientific focus. They suggested Matlab (as a number of them had been exposed) or Mathematica. Now this whole exercise was designed to get them to practice their thinking about outreach, community, communication and sharing knowledge, so I wasn’t ever actually planning to run an R tutorial at Year 11. But these students thought through and asked the very important questions:

- Who is this aimed at?

- What do they already know?

- What do they need to know?

- Why are we doing this?

Of course, I have also learned a great deal from this – I had no idea that the calculators had quite got to this point, nor that there were schools where students would have to select through a graphical menu to get to the simple “3+3 EXE” section of the calculator! Don’t tell my Grand Challenge students but I think I’m learning roughly as much as they are!

Polymaths, Philomaths and Teaching Philosophy: Why we can’t have the first without the second, and the second should be the goal of the third.

Posted: October 22, 2012 Filed under: Education | Tags: advocacy, authenticity, collaboration, community, education, educational problem, ethics, Generation Why, higher education, philosophy, principles of design, reflection, resources, teaching, teaching approaches, thinking, tools, universal principles of design, vygotsky

You may have heard the term polymath, a person who possesses knowledge across multiple fields, or if you’re particularly unlucky, you’ve been at one of those cocktail parties where someone hands you a business card that says, simply, “Firstname Surname, Polymath” and you have formed a very interesting idea of what a polymath is. We normally reserve this term for people who excel across multiple fields such as, to draw examples from this Harvard Business Review blog by Kyle Wiens, Leonardo da Vinci (artist and inventor), Benjamin Franklin, Paul Robeson or Steve Jobs. (Let me start to address the article’s gender imbalance with Hypatia of Alexandria, Natalie Portman, Maya Angelou and Mayim Bialik, to name a small group of multidisciplinary women, admittedly focussing on the Erdős-Bacon intersection.) By focusing on those who excel, we do automatically associate a higher degree of assumed depth of knowledge across these multiple fields. The term “Renaissance [person]” is often bandied about as well.

Da Vinci, seen here inventing the cell phone. Sadly, it was to be over 500 years before the cell phone tower was invented so he never received a call. His monthly bill was still enormous.

Now, I have worked as a system administrator and programmer, a winemaker and I’m now an academic in Computer Science, being slowly migrated into some aspects of managerialism, who hopes shortly to start a PhD in Creative Writing. Do I consider myself to be a polymath? No, absolutely not, and I struggle to think of anyone who would think of me that way, either. I have a lot of interests but, while I have had different areas of expertise over the years, I’ve never managed the assumed highly parallel nature of expertise that would be required to be considered a polymath of any standing. I have academic recognition of some of these interests but this does not change their value (to me or others), nor has it ever been necessary to be well-lettered to be in the group mentioned above.

I describe myself, if I have to, as a philomath, someone who is a lover of learning. (For both of the words, the math suffix comes from the Greek and means to learn, but poly means much/many and philo means loving, so a polymath is ‘many learnéd’.) The immediate pejorative for someone who learns lots of things across areas is the infamous “Jack of all trades” and its companion “master of none”. I love to learn new things, I like studying but I also like applying it. I am confident that the time I spent in each discipline was valuable and that I knew my stuff. However, the main point I’d like to state here is that you cannot be a polymath without first having been a philomath – I don’t see how you can develop good depth in many areas unless you have a genuine love of learning. So every polymath was first a philomath.

Now let’s talk about my students. If they are at all interested in anything I’m teaching them, and let’s assume that at least some of them love various parts of a course at some stage, then they are looking to develop more knowledge in one area of learning. However, looking at my students as mono-cultural beings who only exist when they are studying, say, the use of the linked list in programming, is to sell them very, very short indeed. My students love doing a wide range of things. Yes, those who love learning in my higher educational context will probably do better but I guarantee you that every single student you have loves doing something, and most likely that’s more than one thing! So every single one of my students is inherently a philomath – but the problems arise when what they love to learn is not what I want to teach!

This leads me to the philosophy of learning and teaching, how we frame, study and solve the problems of trying to construct knowledge and transform it to allow its successful transfer to other people, as well as how we prepare students to receive, use and develop it. It makes sense that the state that we wish to develop in our students is philomathy. Students are already learning from, interested in and loving their lives and the important affairs of the world as they see them, so to get them interested in what we want to teach them requires us to acknowledge that we are only one part of their lives. I rarely meet a student who cannot provide a deep, accurate and informative discourse on something in their lives. If we accept this then, rather than demanding an unnatural automaton who rewrites their entire being to only accept our words on some sort of diabolical Turing Tape of compliance, we now have a much easier path, in some respects, because accepting this means that our students will spend time on something in the depth that we want – it is now a matter of finding out how to tap into this. At this point, the yellow rag of populism is often raised, unfairly in most cases, because it is assumed that students will only study things which are ‘pop’ or ‘easy’. There is nothing ‘easy’ about most of the pastimes at which our students excel and they will expend vast amounts of effort on tasks if they can see a clear reason to do so, it appears to be a fair return on investment, and they feel that they have reasonable autonomy in the process. Most of my students work harder for themselves than they ever will for me: all I do is provide a framework that allows them to achieve something and this, in turn, allows them to develop a love. Once the love has been generated, the philomathic wheel turns and knowledge (most of the time) develops.

Whether you agree on the nature of the tasks or not, I hope that you can see why the love of learning should be a core focus of our philosophy. Our students should engage because they want to and not just because we force them to do so. Only one of these approaches will persist when you remove the rewards and the punishments and, while Skinner may disagree, we appear to be more than rats, especially when we engage our delightfully odd brains to try and solve tasks that are not simply rote learned. Inspiring the love of learning in any one of our disciplines puts a student on the philomathic path but this requires us to accept that their love of learning may have manifested in many other areas, that may be confusedly described as without worth, and that all we are doing is to try and get them to bring their love to something that will be of benefit to them in their studies and, assuming we’ve set the course up correctly, their lives in our profession.