Whoops, I Seem To Have Written a Book. (A trip through Python and R Towards Truth)

Posted: May 6, 2012 Filed under: Education | Tags: blogging, curriculum, data visualisation, design, education, higher education, Python, R, reflection, teaching, teaching approaches, tools 3 Comments

Mark’s 1000th post (congratulations again!) and my own data analysis reminded me of something that I’ve been meaning to do for some time, which is work out how much I’ve written over the 151 published posts that I’ve managed this year. Now, foolish me, given that I can see the per-post word count, I started looking around to see how I could get an entire blog count.

And, while I’m sure it’s obvious to someone else who will immediately write in and say “Click here, Nick, sheesh!”, I couldn’t find anything that actually did what I wanted to do. So, being me, I decided to do it ye olde fashioned way – exporting the blog and analysing it manually. (Seriously, I know that it must be here somewhere but my brain decided that this would be a good time to try some analysis practice.)

Now, before I go on, here are the figures (not including this post!):

- Since January 1st, I have published 151 posts. (Eek!)

- The total number of words, including typed hyperlinks and image tags, is 102,136. (See previous eek.)

- That’s an average of just over 676 words per post.
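As a quick sanity check on that average, here is a minimal Python sketch that uses only the two figures quoted above. The variable names are mine, chosen for illustration.

```python
# The two figures quoted in the post; everything else is derived from them.
posts = 151
total_words = 102_136

# Mean words per post.
average = total_words / posts
print(f"{average:.1f} words per post")
```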

Is there a pattern to this? Have I increased the length of my posts over time as I gained confidence? Have they decreased over time as I got busier? Can I learn from this to make my posting more efficient?

The process was, unsurprisingly, not that simple because I took it as an opportunity to work on the design of an assignment for my Grand Challenges students. I deliberately started from scratch and assumed no installed software or programming knowledge above fundamentals on my part (this is harder than it sounds). Here are the steps:

- Double check for mechanisms to do this automatically.

- Realise that scraping 150 page counts by hand would be slow so I needed an alternative.

- Dump my WordPress site to an Export XML file.

- Stare at XML and slowly shake head. This would be hard to extract from without a good knowledge of Regular Expressions (which I was pretending not to have) or Python/Perl-fu (which I can pretend that I have to then not have but my Fu is weak these days).

- Drag Nathan Yau’s Visualize This down from the shelf of Design and Visualisation books in my study.

- Read Chapter 2, Handling Data.

- Download and install Beautiful Soup, an HTML and XML parsing package that does most of the hard work for you. (Instructions in Visualize This)

- Start Python

- Read the XML file into Python.

- Load up the Beautiful Soup package. (The version mentioned in the book is loaded up in a different way to mine so I had to re-engage my full programming brain to find the solution and make notes.)

- Mucked around until I extracted what I wanted to while using Python in interpreter mode (very, very cool and one of my favourite Python features).

- Wrote an 11 line program to do the extraction of the words, counting them and adding them (First year programming level, nothing fancy).
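The extraction and counting steps above can be sketched roughly as follows. This is not the original 11 line program: to keep it runnable without installing anything, it swaps in Python's standard `xml.etree.ElementTree` for Beautiful Soup. It assumes that the post body sits in the `content:encoded` element of each `item`, which is how WordPress export files are laid out, and the tiny `SAMPLE` document is made up for illustration. Words are counted as whitespace-separated chunks, so typed hyperlinks and image tags are included in the count.

```python
import xml.etree.ElementTree as ET

# Fully qualified name of <content:encoded>, which holds the post body.
CONTENT_TAG = "{http://purl.org/rss/1.0/modules/content/}encoded"


def word_counts(export_xml):
    """Return one word count per <item> (post) in a WordPress export."""
    root = ET.fromstring(export_xml)
    counts = []
    for item in root.iter("item"):
        body = item.findtext(CONTENT_TAG) or ""
        # Whitespace-separated chunks, so HTML tags count as words too.
        counts.append(len(body.split()))
    return counts


# Made-up stand-in for a real export file (hypothetical content).
SAMPLE = """<rss version="2.0" xmlns:content="http://purl.org/rss/1.0/modules/content/">
<channel>
<item><title>First</title><content:encoded><![CDATA[Hello there world]]></content:encoded></item>
<item><title>Second</title><content:encoded><![CDATA[One <a href="x">two</a> three four]]></content:encoded></item>
</channel></rss>"""

counts = word_counts(SAMPLE)
total = sum(counts)
print(counts, total, total / len(counts))
```

For a real export you would read the file's contents and pass them to `word_counts` instead of `SAMPLE`.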

A number of you seasoned coders and educators out there will be staring at points 11 and 12, with a wavering finger, about to say “Hang on… have you just smoothed over about an hour plus of student activity?” Yes, I did. What took me a couple of minutes could easily be a 1-2 hour job for a student. Which is, of course, why it’s useful to do this because you find things like Beautiful Soup is called bs4 when it’s a locally installed module on OS X – which has obviously changed since Nathan wrote his book.

Now, a good play with data would be incomplete without a side trip into the tasty world of R. I dumped out the values that I obtained from word counting into a Comma Separated Value (CSV) file and, digging around in the R manual, Visualize This, and Data Analysis with Open Source Tools by Philipp Janert (O’Reilly), I did some really simple plotting. I wanted to see if there was any rhyme or reason to my posting, as a first cut. Here’s the first graph of words per post. The vertical axis is the number of words and the horizontal axis is the post number. So, reading left to right, you’ll see my development over time.

Sadly, there’s no pattern there at all – not only can’t we see one by eye, the correlation tests of R also give a big fat NO CORRELATION.
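For anyone who wants to try the same check without R, here is a minimal Python sketch of a correlation between post number and word count. I am assuming a Pearson correlation coefficient, since the test isn't named above, and the word counts below are made up for illustration. They are not the real blog data.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length lists."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / sqrt(var_x * var_y)


# Made-up word counts for eight posts, NOT the real blog data.
word_counts = [500, 900, 300, 700, 650, 400, 850, 600]
post_numbers = list(range(1, len(word_counts) + 1))

r = pearson(post_numbers, word_counts)
print(f"r = {r:.3f}")
```

A value of `r` near zero means no linear trend between post number and length; values near 1 or -1 would mean posts steadily growing or shrinking.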

Now, here’s a graph of the moving average over a 5 day window, to see if there is another trend we can see. Maybe I do have trends, but they occur over a larger time?

Uh, no. In fact, this one is worse for overall correlation. So there’s no real pattern here at all but there might be something lurking in the fine detail, because you can just about make out some peaks and troughs. (In fact, mucking around with the moving average window does show a pattern that I’ll talk about later.)
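A moving average like the one plotted here can be sketched in a few lines of Python. This is a minimal version under my own assumptions: a simple unweighted mean over each run of consecutive values, and made-up word counts rather than the real data. Note that the window runs over consecutive posts in the list.

```python
def moving_average(values, window=5):
    """Unweighted mean of each run of `window` consecutive values."""
    if window < 1 or window > len(values):
        raise ValueError("window must be between 1 and len(values)")
    return [
        sum(values[i:i + window]) / window
        for i in range(len(values) - window + 1)
    ]


# Made-up word counts for eight posts, NOT the real blog data.
word_counts = [500, 900, 300, 700, 650, 400, 850, 600]

smoothed = moving_average(word_counts, window=5)
print(smoothed)
```

Changing `window` is the "mucking around" step: a wider window smooths out more of the post-to-post noise, at the cost of fewer points on the graph.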

However, those of you who are used to reading graphs will have noticed something about the axis label for the x-axis. It’s labelled as wp$day. This would imply that I was plotting post day versus average or count and, of course, I’m not. There have not been 151 days since January the 1st, but there have been days when I have posted multiple times. At the moment, for a number of reasons, this isn’t clear to the reader. More importantly, the day on which I post is probably going to have a greater influence on me as I will have different access to the Internet and time available. During SIGCSE, I think I posted up to 6 times a day. Somewhere, this is lost in the structure of the data that considers each post as an independent entity. They consume time and, as a result, a longer post on the same day will reduce the chances of another long post on the same day – unless something unusual is going on.

There is a lot more analysis left to do here and it will take more time than I have today, unfortunately. But I’ll finish it off next week and get back to you, in case you’re interested.

What do I need to do next?

- Relabel my graphs so that it is much clearer what I am doing.

- If I am looking for structure, then I need to start looking at more obvious influences and, in this case, given there’s no other structure we can see, this probably means time-based grouping.

- I need to think what else I should include in determining a pattern to my posts. Weekday/weekend? Maybe my own calendar will tell me if I was travelling or really busy?

- Establish if there’s any reason for a pattern at all!
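The time-based grouping in the list above might start out something like this in Python. It is only a sketch under my own assumptions: each post is a (date, word count) pair, the dates and counts are made up, and the helper names `words_per_day` and `is_weekend` are hypothetical.

```python
from collections import defaultdict
from datetime import date


def words_per_day(posts):
    """Sum the word counts of all posts published on the same day."""
    totals = defaultdict(int)
    for day, words in posts:
        totals[day] += words
    return dict(totals)


def is_weekend(day):
    # date.weekday() counts Monday as 0, so 5 and 6 are Saturday and Sunday.
    return day.weekday() >= 5


# Made-up (date, word count) pairs, NOT the real blog data.
posts = [
    (date(2012, 3, 1), 400),
    (date(2012, 3, 1), 250),
    (date(2012, 3, 3), 900),
    (date(2012, 3, 5), 700),
]

daily = words_per_day(posts)
weekend_total = sum(words for day, words in daily.items() if is_weekend(day))
print(daily, weekend_total)
```

Plotting `daily` against the actual date would also give an x-axis that really is a day.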

As a final note, novels ‘officially’ start at a count of 40,000 words, although they tend to fall into the 80-100,000 range. So, not only have I written a novel in the past 4 months, I am most likely on track to write two more by the end of the year, because I will produce roughly 160-180,000 more words this year. This is not the year of blogging, this is the year of a trilogy!

Next year, my blog posts will all be part of a rich saga involving a family of boy wizards who live on the wrong side of an Ice Wall next to a land that you just don’t walk into. On Mars. Look for it on Amazon. Thanks for reading!

Stats, stats and more stats: The Half Life of Fame

Posted: May 5, 2012 Filed under: Education | Tags: abelson, education, feedback, half-life of fame, higher education, Korzybski, learning, measurement, MIKE, reflection 4 Comments

So, here are the stats for my blog, at time of writing. You can see a steady increase in hits over the last few weeks. What does this mean? Have I somehow hit a sweet spot in my L&T discussions? Has my secret advertising campaign paid off? (No, not seriously.) Well, there are a couple of things in there that are both informative… and humbling.

Firstly, two of the most popular searches that find my blog are “London 2012 tube” and “alone in a crowd”. These hits have probably accounted for about 16% of my traffic for the past three weeks. What does that tell me? Well, firstly, the Olympics aren’t too far away and people are looking for how convenient their hotels are. The second is a bit sadder.

The second search “alone in a crowd” is coming in across two languages – English and Russian. I have picked up a reasonable presence in Russia and Ukraine, mostly from that search term. It seems to contribute a lot to my (Australian) Monday morning feed, which means that a lot of people seem to search for this on Sundays.

But let me show you another graph, and talk about the half life of fame:

That’s since the beginning of my blogging activities. That spike at Week 9? That’s when I started blogging SIGCSE and also includes the day when over 100 people jumped on my blog because of a referral from Mark Guzdial. That was also the conference at which Hal Abelson referred to a concept of the Half Life of fame – the inevitable drop away after succeeding at something, if you don’t contribute more. And you can see that pretty clearly in the data. After SIGCSE, I was happily on my way back to being read by about 20-30 people a day, tops, most of whom I knew, because I wasn’t providing much more information to the people who scanned me at SIGCSE.

Without consciously doing it, I’ve managed to put out some articles that appear to have wider appeal and that are now showing up elsewhere. But these stats, showing improvement, are meaningless unless I really know what people are looking at. So, right now I’m pulling apart all of my log data to see what people are actually reading – whether I have an increasing L&T presence and readership, or a lot of sad Russian speakers or lost people on the London Underground system. I’m expecting to see another fall-away very soon now and drop down to the comfortable zone of my little corner of the Internet. I’m not interested in widespread distribution – I’m interested in getting an inspiring or helpful message to the people who need it. Only one person needs to read this blog for it to be useful. It just has to be the right one person. 🙂

One of the most interesting things about doing this, every day, is that you start wondering about whether your effort is worth it. Are people seeking it out? Are people taking the time to read it or just clicking through? Are there a growing number of frustrated Tube travellers thinking “To heck with Korzybski!” Time to go into the data and look. I’m going to keep writing regardless but I’d like to get an idea of where all of this is going.

Grand Challenges – A New Course and a New Program

Posted: May 4, 2012 Filed under: Education | Tags: advocacy, challenge, curriculum, design, education, educational problem, equality, grand challenges, higher education, learning, reflection, teaching, universal principles of design Leave a comment

Oh, the poor students that I spoke to today. We have a new degree program starting, the Bachelor of Computer Science (Advanced), and it’s been given to me to coordinate and set up the first course: Grand Challenges in Computer Science, a first-year offering. This program (and all of its unique components) is aimed at students who have already demonstrated that they have got their academics sorted – a current GPA of 6 or higher (out of 7, that’s A equivalent or Distinctions for those who speak Australian), or an ATAR (Australian Tertiary Admission Rank) of 95+ out of 100. We identified some students who met the criteria and might want to be in the degree, and also sent out a general advertisement as some people were close and might make the criteria with a nudge.

These students know how to do their work and pass their courses. Because of this, we can assume some things and then build to a more advanced level.

Now, Nick, you might be saying, we all know that you’re (not so secretly) all about equality and accessibility. Why are you running this course that seems so… stratified?

Ah, well. Remember when I said you should probably feel sorry for them? I talked to these students about the current NSF Grand Challenges in CS, as I’ve already discussed, and pointed out that, given that the students in question had already displayed a degree of academic mastery, they could go further. In fact, they should be looking to go further. I told them that the course would be hard and that I would expect them to go further, challenge themselves and, as a reward, they’d do amazing things that they could add to their portfolios and their experience bucket.

I showed them that Cholera map and told them how smart data use saved lives. I showed them We Feel Fine and, after a slightly dud demo where everyone I clicked on had drug issues, I got them thinking about the sheer volume of data that is out there, waiting to be analysed, waiting to tell us important stories that will change the world. I pretty much asked them what they wanted to be, given that they’d already shown us what they were capable of. Did they want to go further?

There are so many things that we need, so many problems to solve, so much work to do. If I can get some good students interested in these problems early and provide a coursework system to help them to develop their solutions, then I can help them to make a difference. Do they have to? No, course entry is optional. But it’s so tempting. Small classes with a project-based assessment focus based on data visualisation: analysis, summarisation and visualisation in both static and dynamic areas. Introduction to relevant philosophy, cognitive fallacies, useful front-line analytics, and display languages like R and Processing (and maybe Julia). A chance to present to their colleagues, work with research groups, do student outreach – a chance to be creative and productive.

I, of course, will take as much of the course as I can, having worked on it with these students, and feed parts of it into outreach into schools, send other parts in different levels of our other degrees. Next year, I’ll write a brand new grand challenges course and do it all again. So this course is part of forming a new community core, a group of creative and accomplished leaders, to an extent, but it is also about making this infectious knowledge, a striving point for someone who now knows that a good mark will get them into a fascinating program. But I want all of it to be useful elsewhere, because if it’s good here, then (with enough scaffolding) it will be good elsewhere. Yes, I may have to slow it down elsewhere but that means that the work done here can help many courses in many ways.

I hope to get a good core of students and I’m really looking forward to seeing what they do. Are they up for the challenge? I guess we’ll find out at the end of second semester.

But, so you know, I think that they might be. Am I up for it?

I certainly hope so! 🙂

No More Page 3 Girls

Posted: May 3, 2012 Filed under: Education | Tags: education, higher education, news, reflection, teaching, teaching approaches Leave a comment

You are probably wondering where today’s post is going. (If you’re not from certain parts of the world you’re probably wondering what I’m talking about!) So let me briefly explain, first, what a Page 3 girl is and, secondly, what I’m talking about.

Back in 1969, Rupert Murdoch relaunched the Sun newspaper in the UK and put “glamour models” on Page 3. They were clothed, with a degree of suggestive reveal. Why Page 3? Because it’s the first page you see AFTER you open the newspaper. When it’s sitting on the shelf, you can’t see what’s on Page 3 – but, once you do pick it up, you can get to the glamour models pretty quickly.

(Yes, you’ve probably worked out what kind of newspaper the Sun was. If you haven’t run into the word tabloid yet, now is a good time to check it out.)

In late 1970, to celebrate the newspaper’s first anniversary, the Sun ran its first ‘nude’ model with a topless girl. And, forty years later, they’re still at it. So, that’s a Page 3 girl – but why am I talking about it?

Because our way of reading news has changed.

Newspapers, while still around, are in the process of moving to alternative delivery mechanisms. It will probably be relatively soon that we won’t have a page 3 because we have exclusively hyperlinked sources – a front page, decided by editorial committee but strongly influenced by click monitoring and how the users explore the space. Before the Internet, stories that were to be buried could be put on page 32, between boring sports and public notices. Now, you have to saturate your users in stories and hope that they won’t find it – or be accused that you’re not reporting all stories. Of course, once people find it, they can now link directly, share, restructure and construct their own stories.

On the Internet, there are no page numbers, only connections – and the connections are mutable.

So, no more Page 3, although there will not be an end to unfortunate pop-up images of women and questionable content, and there will be no end to people trying to hide stories or manipulate links in a way that achieves the same aims as burying. But we have entered a time when we can bypass all of this and then share the information on how to get the information, without all of that getting in the way.

(And, of course, we enter a time of clickjacking, misleading searches, commercial redirection and other nonsense. Hey, I never said that the time after Page 3 girls was going to solve everything! Come back in 10 years and we’ll talk about the new possibilities.)

Saving Lives With Pictures: Seeing Your Data and Proving Your Case

Posted: May 2, 2012 Filed under: Education | Tags: advocacy, analytics, authenticity, cholera outbreak, data visualisation, design, education, higher education, learning, teaching, teaching approaches, voronoi 1 Comment

From Wikipedia, original map by John Snow showing the clusters of cholera cases in the London epidemic of 1854

This diagram is fascinating for two reasons: firstly, because we’re human, we wonder about the cluster of black dots and, secondly, because this diagram saved lives. I’m going to talk about the 1854 Broad Street Cholera outbreak in today’s post, but mainly in terms of how the way that you represent your data makes a big difference. There will be references to human waste in this post and it may not be for the squeamish. It’s a really important story, however, so please carry on! I have drawn heavily on the Wikipedia page, as it’s a very good resource in this case, but I hope I have added some good thoughts as well.

19th Century London had a terrible problem with increasing population and an overtaxed sewerage system. Underfloor cesspools were overfilling and the excess was being taken and dumped into the River Thames. Only one problem. Some water companies were taking their supply from the Thames. For those who don’t know, this is a textbook way to distribute cholera – contaminating drinking water with infected human waste. (As it happens, a lack of cesspool mapping meant that people often dug wells near foul ground. If you ever get a time machine, cover your nose and mouth and try not to breathe if you go back before 1900.)

But here’s another problem – the idea that germs carried cholera was not the dominant theory at the time. People thought that it was foul air and bad smells (the miasma theory) that carried the bugs. Of course, from this century we can look back and think “Hmm, human waste everywhere, bugs everywhere, bad smells everywhere… ohhh… I see what you did there.” but this is from the benefit of early epidemiological studies such as those of John Snow, a London physician of the 19th Century.

John Snow recorded the locations of the households where cholera had broken out, on the map above. He did this by walking around and talking to people, with the help of a local assistant curate, the Reverend Whitehead, and, importantly, working out what they had in common with each other. This turned out to be a water pump on Broad Street, at the centre of this map. If people got their water from Broad Street then they were much more likely to get sick. (Funnily enough, monks who lived in a monastery adjacent to the pump didn’t get sick. Because they only drank beer. See? It’s good for you!) John Snow was a skeptic of the miasma theory but didn’t have much else to go on. So he went looking for a commonality, in the hope of finding a reason, or a vector. If foul air wasn’t the vector – then what was spreading the disease?

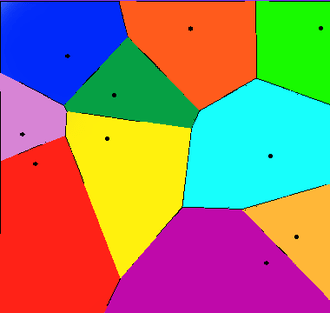

Snow divided the map up into separate compartments, each containing one pump along with all of the people for whom this would be the pump that they used, because it was the closest. This is what we would now call a Voronoi diagram, and is widely used to show things like the neighbourhoods that are serviced by certain shops, or the impacts of roads on access to shops (using the Manhattan Distance).

A Voronoi diagram from Wikipedia showing 10 shops, in a flat city. The cells show the areas that contain all the customers who are closest to the shop in that cell.
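The idea behind a Voronoi cell, that every point belongs to its closest site, can be sketched in a few lines of Python. This is only an illustration under my own assumptions: the pump and household coordinates are made up on a flat grid and are not taken from Snow’s map, and “Pump B” and “Pump C” are hypothetical names. The distance function is a parameter, so you can swap straight-line distance for the Manhattan Distance.

```python
def euclidean(a, b):
    """Straight-line distance between two (x, y) points."""
    return ((a[0] - b[0]) ** 2 + (a[1] - b[1]) ** 2) ** 0.5


def manhattan(a, b):
    """Distance when you can only travel along a grid of streets."""
    return abs(a[0] - b[0]) + abs(a[1] - b[1])


def nearest_site(point, sites, distance=euclidean):
    """Name of the site whose Voronoi cell contains `point`."""
    return min(sites, key=lambda name: distance(point, sites[name]))


# Hypothetical coordinates, NOT taken from Snow's map.
pumps = {"Broad Street": (0, 0), "Pump B": (4, 1), "Pump C": (-3, 4)}
households = [(1, 1), (3, 0), (-2, 3), (0, -2)]

# Group each household under its closest pump, i.e. build the cells.
cells = {}
for house in households:
    cells.setdefault(nearest_site(house, pumps), []).append(house)

print(cells)
```

Counting how many cases fall into each cell is then just a matter of taking the length of each list.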

What was interesting about the Broad Street cell was that its boundary contained most of the cholera cases. The Broad Street pump was the closest pump to most people who had contracted cholera and, for those who had another pump slightly closer, it was reported to have better tasting water (???) which meant that it was used in preference. (Seriously, the mind boggles on a flavour preference for a pump that was contaminated both by river water and an old cesspit some three feet away.)

Snow went to the authorities with sound statistics based on his plots, his interviews and his own analysis of the patterns. His microscopic analysis had turned up no conclusive evidence, but his patterns convinced the authorities and the handle was taken off the pump the next day. (As Snow himself later said, not many more lives may have been saved by this particular action but it gave credence to the germ theory that went on to displace the miasma theory.)

For those who don’t know, the stink of the Thames was so awful during Summer, and so feared, that people fled to the country where possible. Of course, this option only applied to those with country houses, which left a lot of poor Londoners sweltering in the stink and drinking foul water. The germ theory gave a sound public health reason to stop dumping raw sewage in the Thames because people could now get past the stench and down to the real cause of the problem – the sewage that was causing the stench.

So John Snow had encountered a problem. The current theory didn’t seem to hold up so he went back and analysed the data available. He constructed a survey, arranged the results, visualised them, analysed them statistically and summarised them to provide a convincing argument. Not only is this the start of epidemiology, it is the start of data science. We collect, analyse, summarise and visualise, and this allows us to convince people of our argument without forcing them to read 20 pages of numbers.

This also illustrates the difference between correlation and causation – bad smells were always found with sewage but, because the bad smell was more obvious, it was seen as causative of the diseases that followed the consumption of contaminated food and water. This wasn’t a “people got sick because they got this wrong” situation, this was “households died, with children dying at a rate of 160 per 1000 born, with a lifespan of 40 years for those who lived”. Within 40 years, the average lifespan had gone up 10 years and, while infant mortality didn’t really come down until the early 20th century, for a range of reasons, identifying the correct method of disease transmission has saved millions and millions of lives.

So the next time your students ask “What’s the use of maths/statistics/analysis?” you could do worse than talk to them briefly about a time when people thought that bad smells caused disease, people died because of this idea, and a physician and scientist named John Snow went out, asked some good questions, did some good thinking, saved lives and changed the world.

What’s a Prof?

Posted: May 1, 2012 Filed under: Education | Tags: advocacy, education, higher education, identity, reflection 1 Comment

Have a look at this picture – it’s a grab of one of the Google Image search pages for Professors:

If you look at the cartoons on the page, page 3 of the search, you’ll see lab coats and blackboards. While there are a lot of (obviously) portrait shots, searching across the images for yourself will reveal a lot of ‘action shots’ – talking, teaching and, in some cases, just plain thinking. However, what really sticks out on the first images you find, which I haven’t shown here, is the number of Professor Frink (Simpsons) and Professor Farnsworth (Futurama) images – characters who are ubernerds, with strange speaking patterns, a cavalier disregard for the human condition and a fundamental disconnect from the people around them.

Looking at Google Image Search, you’ll see pictures of young professors and old professors. Women and men. The range of races. Nary a white coat in sight unless they are actively involved in research that requires a white coat and are undertaking said research at the point of photography! (I should note that I subscribe to John Birmingham’s fundamental model of suitability of ethnic dress: one should only wear a Greek fisherman’s cap if one is Greek, and a fisherman. I extend it to scientific or trade garb, including military or paramilitary uniform, in that one should then only wear the dress while engaged in the activity. I wore a lab coat when I was studying wine making and in the lab. I think it’s become cleaning cloths now.)

This is all rather light-hearted, except for the slight problem that a number of people’s only interaction with the notion of a professor will be from widely available media sources – and, even though Futurama in particular is heavily ironic in its use, ironic use has a subtle aspect that can easily be lost in communication. It’s already obvious that the scientific community has an uphill battle sometimes and add to this an assumption that we are all bizarre anti-social, uselessly pontificating grey beards who have no understanding of real people and we start any discussion on the back foot.

I like the new image of science that is coming through the media – scientists and professors can be active, have relationships, do cool things, basically being just like everyone else except that they have a title of some sort that reflects what they are good at doing. We do, of course, have a sizeable chunk of the community who did come in during a time when a very different professorial model was encouraged and probably feel at least slightly under assault from the changes in role, respect and expectation that are now spreading across our Universities. But we’re still all just people, whether we look like professors or not.

We don’t have to define what a professor is but it’s always worth reminding people that we are people first, always, before you start trying to photoshop us into white coats, sticking-up white hair and Coke-bottle glasses.

Oh, Perry. (Our Representation of Intellectual Development, Holds On, Holds on.)

Posted: April 30, 2012 Filed under: Education | Tags: advocacy, authenticity, dualism, education, higher education, learning, measurement, multiplicity, perry, reflection, research, resources, teaching, teaching approaches 1 Comment

I’ve spent the weekend working on papers, strategy documents, promotion stuff and trying to deal with the knowledge that we’ve had some major success in one of our research contracts – which means we have to employ something like four staff in the next few months to do all of the work. Interesting times.

One of the things I love about working on papers is that I really get a chance to read other papers and books and digest what people are trying to say. It would be fantastic if I could do this all the time but I’m usually too busy to tear things apart unless I’m on sabbatical or reading into a new area for a research focus or paper. We do a lot of reading – it’s nice to have a focus for it that temporarily trumps other more mundane matters like converting PowerPoint slides.

It’s one thing to say “Students want you to give them answers”, it’s something else to say “Students want an authority figure to identify knowledge for them and tell them which parts are right or wrong because they’re dualists – they tend to think in these terms unless we extend them or provide a pathway for intellectual development (see Perry 70).” One of these statements identifies the problem, the other identifies the reason behind it and gives you a pathway. Let’s go into Perry’s classification because, for me, one of the big benefits of knowing about this is that it stops you thinking that people are stupid because they want a right/wrong answer – that’s just the way that they think and it is potentially possible to change this mechanism or help people to change it for themselves. I’m staying at the very high level here – Perry has 9 stages and I’m giving you the broad categories. If it interests you, please look it up!

We start with dualism – the idea that there are right/wrong answers, known to an authority. In basic duality, the idea is that all problems can be solved and hence the student’s task is to find the right authority and learn the right answer. In full dualism, there may be right solutions but teachers may be in contention over this – so a student has to learn the right solution and tune out the others.

If this sounds familiar, in political discourse and a lot of questionable scientific debate, that’s because it is. A large amount of scientific confusion is being caused by people who are functioning as dualists. That’s why ‘it depends’ or ‘with qualification’ doesn’t work on these people – there is no right answer and fixed authority. Most of the time, you can be dismissed as having an incorrect view, hence tuned out.

As people progress intellectually, under direction or through exposure (or both), they can move to multiplicity. We accept that there can be conflicting answers, and that there may be no true authority, hence our interpretation starts to become important. At this stage, we begin to accept that there may be problems for which no solutions exist – we move into a more active role as knowledge seekers rather than knowledge receivers.

Then, we move into relativism, where we have to support our solutions with reasons that may be contextually dependent. Now we accept that viewpoint and context may make which solution is better a mutable idea. By the end of this category, students should be able to understand the importance of making choices and also sticking by a choice that they’ve made, despite opposition.

This leads us into the final stage: commitment, where students become responsible for the implications of their decisions and, ultimately, realise that every decision that they make, every choice that they are involved in, has effects that will continue over time, changing and developing.

I don’t want to harp on this too much but this indicates one of the clearest divides between people: those who repeat the words of an authority, while accepting no responsibility or ownership, hence can change allegiance instantly; and those who have thought about everything and have committed to a stand, knowing the impact of it. If you don’t understand that you are functioning at very different levels, you may think that the other person is (a) talking down to you or (b) arguing with you under the same expectation of personal responsibility.

Interesting way to think about some of the intractable arguments we’re having at the moment, isn’t it?

Apparently We’re Doing It Wrong: Jeffrey McManus Thinks We Should Shut Down More CS Courses

Posted: April 29, 2012 Filed under: Education | Tags: education, higher education, identity, identity crisis, reflection, teaching Leave a comment

I ran across a blog post by Jeffrey McManus, who runs a for-profit training organisation called CodeLesson. You can find his post here. Jeffrey’s thoughts appear to be (my summary):

- Most students want to learn applied software engineering, not computer science.

- Undergrads don’t know if courses are good or bad, therefore they are captive consumers, therefore all universities are slow to adapt and reform.

- Two universities in commuting distance shouldn’t have the same academic departments because more courses encourage mediocrity. (Does this apply to internet providers like, for example, Jeffrey’s?)

- We should be using good online courses instead of mediocre or outdated (implicitly not on-line) ones.

- Most university courses aren’t that good if you want applied software engineering, because PhD graduates are out of date by about 5-10 years and have apparently never updated their skills. But, that’s ok, because it’s not the academic’s fault, it’s the fault of a crusty old dean somewhere.

- Our academic departments should be reorganised frequently.

I’ve tried to be pretty fair in my summary but you should go and have a look at the site for yourself because (a) I work at a Uni so I might be biased and (b) I wasn’t very impressed so I may have filtered him a bit, for which I apologise but I am human. I agree with a few of his points, or parts of his points to varying degrees, but I suspect that he’s got too many interacting issues jammed together – there are any number of business people whose hair will turn white if you say that constant reorganisation is the path to success.

I hear arguments like this occasionally but they make it sound like I’ve trapped these students in my evil cave and I won’t let them out until they graduate. Far from it! When I start in first year, I tell them that if they just want to program they should leave and go and do a tech course somewhere. They can be a successful programmer in 3-6 months. If they want to innovate, lead, and be ready for the challenges of the future, including writing the languages and systems that everyone else can learn to use in those courses, then they probably want to hang around. (Yes, yes, famous college dropout counter-example – who mysteriously employs a vast number of people with CS or ICT degrees. Moving on.)

Points 2, 4 and 5 don’t really hold up, to me. Point 2 is either irrelevant or universal – therefore irrelevant. Lack of discernment can’t just be limited to undergrads so this ‘inability to detect rubbish’ skill should lead to everyone being slow to adapt and reform. If you’re in any awful program then you wouldn’t know it until you developed enough knowledge. Yes, college courses are longer but we have all sorts of industry placement, outreach and practical programming exercises to try and deal with the problem of transition to industry. Apart from anything else, our students are developing other skills as well as coding. Coding doesn’t equal computer science. Point 4 assumes that because applied software engineering can be taught online it should be taught online. The entire point is based on the assumption that face-to-face is mediocre. Point 5 is actually pretty offensive. Are we all sitting around pulling on our grey beards and contemplating our navels, having looked at nothing else for 10 years?

Once again, we’ve got this terrible issue with our identity and the way that we are regarded. Once again, we have a case for building up perceptions of our community and getting rid of this farcical representation of our profession and our Universities.

There is a rebuttal on the post, which isn’t really necessary as he’s walking his comments list and shooting down pretty much all opposition. It’s worth reading the comments to remind yourself how many people think that we produce graduates to produce professors to produce graduates (rinse/repeat). Someone discussed the idea that a CS degree is only partially about what you need next year, and also about what we may need to do in 10, 20 or 40 years. Jeffrey’s response?

I will probably be dead in 40 years time. Anyway, who says they can’t provide education that’s relevant both today and in decades hence? Virtually every other technical discipline seems to be able to do that.

Now, I’d argue that a lot of us are doing that but maybe we come back to that issue of ICT identity. Here’s a guy who is actively making money running on-line courses that are built on technologies developed by people who have the degrees that he doesn’t think are necessary – and he doesn’t seem to realise the issue with that. Or, he does, because he’s a businessman, but not enough other people do. We’ve still got an identity crisis to address.

Should we be aiming to maintain (or develop) excellence? Yes. Should we balance industry and academic requirements? Yes. Should we be using on-line effectively? Yes. That, however, is not what I believe Jeffrey is saying – I think that he’s saying that we are intrinsically unworthy and, to an extent, providing a mechanism to waste a student’s time when they could be learning pertinent information at a reasonable price in an on-line manner.

He seems to be pretty keen on addressing posts that disagree with him so I’ll wait to see if he shows up here. In the meantime, read the post, especially the last line where his entire business case is based upon people believing everything else that he wrote in that post.

More on our image – and the need for a revamp.

Posted: April 28, 2012 Filed under: Education | Tags: ALTA, curriculum, design, education, higher education, reflection, teaching, teaching approaches 4 Comments

Today, in between meetings with people about forming a cohesive ICT community and defining our identity, I saw a billboard as I walked along the streets of the Melbourne CBD.

A picture of a woman’s torso, naked except for a bra, with the slogan “Who said engineering was boring?”

Says it all, really, doesn’t it? I’ve long said that associating a verb in a sentence with a negative is the best way to get people to think about the verb rather than the more complex semantics of the negated verb. Now, for a whole lot of people, a vaguely leery billboard is going to put the words “engineering” and “boring” together.

Some of these people will be young people in our target recruitment group – mid to late school – and this kind of stuff sticks.

The building the billboard was on was built by civil engineers, using systems designed by mechanical and electronic/electrical engineers, the pictures were produced on machines constructed by computer systems engineers and elecs, images constructed and edited through digital cameras by tech-savvy photographers and processed on systems built by software engineers, computer scientists, electronic artists and many, many other people who are all being insulted by the same poster they helped to support and create. (My apologies because I didn’t list everybody, but the sheer scale of the number of people who contributed to that is quite large!)

Today, on my way home, a giant hunk of steel, powered by two big balls of spinning flame, climbed up into the sky and, in an hour, crossed a distance that used to take weeks to traverse. Right now, I am communicating with you around the world using a machine built of metal, burnt oil residue and sand, that is sending information to you at nearly the speed of light, wherever you happen to be.

How, in anyone’s perverted lexicon, can that be anything other than exciting?

Identity and Community – Addressing the ICT Education Community

Posted: April 27, 2012 Filed under: Education | Tags: advocacy, ALTA, education, feedback, higher education, reflection, teaching, teaching approaches

Had a great meeting at Swinburne University (Melbourne, Victoria, Australia) today as part of my ALTA Fellowship role. I brought the talk and early outcomes from the ALTA Forum in Queensland into (sunny-ish?) Melbourne and shared it with a new group of participants.

I haven’t had time to write my notes up yet but the overall sentiment was pretty close to what was expressed at the ALTA Forum initially:

- We don’t have an “ICT is…” identity that we can point to. Dentists do teeth. Doctors heal the sick. Lawyers do law. ICT does… what?

- We need a common dissemination point for IT, CS, IS, ICT, CS-EE… etc. rather than the piecemeal framework we currently have that is strongly aligned with subdivision of the discipline.

- We need professionalism in learning and teaching, where people dedicate time to improve their L&T – no more stagnant courses!

- We need to have enough time to be professional! L&T must be seen as valuable and be allocated enough time to be undertaken properly.

- It would be great to have a Field of Research Code for Education within the Discipline of ICT – as distinct from general education coding – to make sure that CS Ed/ICT Ed is seen as educational research in the discipline, rather than a non-specific investigation.

- We need to identify and foster a community of practice to get out of the silos. Let’s all agree that we want to do this properly and ignore school and University boundaries.

- We need to stop talking about the lack of national community and start addressing the lack of a national community.

So a good validation for the early work at the Forum and I’m really looking forward to my meeting at RMIT tomorrow. Thanks, Graham and Catherine, for being so keen to host the first official ALTA engagement and dissemination event!