The Student as Creator – Making Patterns, Not Just Following Patterns

Posted: May 10, 2012 Filed under: Education | Tags: design, education, higher education, maze, modes of thinking, teaching, teaching approaches, thinking

We talk a lot about what we want students to achieve. Sometimes the students hear the details and sometimes they hear “Do your work, pass your courses, get your degree, wave paper in air, throw hat, profit.” Now, of course, sometimes they hear that because that’s what we say – or that’s what their environment, the people around them, even the employers say.

The image above is a Chinese-inspired maze pattern. Composed of simple elements, it can become complex quickly. If you built a hedge maze along these lines you could probably keep a lot of people lost for some time, simply because they wouldn’t necessarily be able to decompose the maze into its simple patterns, work out how those patterns were composed and then solve the problem.

I can teach someone to follow a maze easily. In fact, this has probably been done by the time they’ve finished school. Jump on the track, do your work, stay inside the lines, keep walking until you find the goal. The ability to step back, observe the patterns and then reach the goal more efficiently can also be taught, or it can arrive with experience. But going further, being able to look at the maze and construct a brand new maze, potentially with new patterns or composition techniques, requires inspiration. You can reach this point with a fantastic brain and a lifetime of experience (someone must have done it, or there would be no mazes to follow) but, these days, we can also teach students abstraction, thinking and the right way to approach a problem, so that they move beyond following the hedges or building exactly the same kind of maze again.

This brings the student into a new mode of thinking: as a creator, rather than a pattern matcher or a follower. It is, by far, the hardest thing to teach, as it requires you to concentrate on providing an environment that supports and encourages creativity, as well as making sure that no-one is trying to build mazes that defy gravity, or where you can walk through concrete walls. (I note that these initial grounding constraints may relax later on – once people have a good grasp of the basics, creativity can take them to places where you can walk through walls.)

Of course, focussing on the mechanics of getting the piece of paper at the end of the degree, as if this was the objective, doesn’t lead to the right way of thinking. Getting into the right space requires us to focus on what should really be happening: the successful transfer of knowledge, the building of frameworks for knowledge development and a robust basis for creative and critical thought. This can, and does, occur spontaneously – but trying to make it happen more often results in a much larger group of people who can, potentially, change the world.

Whoops, I Seem To Have Written a Book. (A trip through Python and R Towards Truth)

Posted: May 6, 2012 Filed under: Education | Tags: blogging, curriculum, data visualisation, design, education, higher education, Python, R, reflection, teaching, teaching approaches, tools

Mark’s 1000th post (congratulations again!) and my own data analysis reminded me of something that I’ve been meaning to do for some time, which is to work out how much I’ve written over the 151 published posts that I’ve managed this year. Now, foolish me, given that I can see the per-post word count, I started looking around to see how I could get a count for the entire blog.

And, while I’m sure it’s obvious to someone else who will immediately write in and say “Click here, Nick, sheesh!”, I couldn’t find anything that actually did what I wanted to do. So, being me, I decided to do it ye olde fashioned way – exporting the blog and analysing it manually. (Seriously, I know that it must be here somewhere but my brain decided that this would be a good time to try some analysis practice.)

Now, before I go on, here are the figures (not including this post!):

- Since January 1st, I have published 151 posts. (Eek!)

- The total number of words, including typed hyperlinks and image tags, is 102,136. (See previous eek.)

- That’s an average of just over 676 words per post.

Is there a pattern to this? Have I increased the length of my posts over time as I gained confidence? Have they decreased over time as I got busier? Can I learn from this to make my posting more efficient?

The process was, unsurprisingly, not that simple because I took it as an opportunity to work on the design of an assignment for my Grand Challenges students. I deliberately started from scratch and assumed no installed software or programming knowledge above fundamentals on my part (this is harder than it sounds). Here are the steps:

- Double check for mechanisms to do this automatically.

- Realise that scraping 150 page counts by hand would be slow so I needed an alternative.

- Dump my WordPress site to an Export XML file.

- Stare at XML and slowly shake head. This would be hard to extract from without a good knowledge of Regular Expressions (which I was pretending not to have) or Python/Perl-fu (which I can pretend that I have to then not have but my Fu is weak these days).

- Drag Nathan Yau’s Visualize This down from the shelf of Design and Visualisation books in my study.

- Read Chapter 2, Handling Data.

- Download and install Beautiful Soup, an HTML and XML parsing package that does most of the hard work for you. (Instructions in Visualize This)

- Start Python

- Read the XML file into Python.

- Load up the Beautiful Soup package. (The version mentioned in the book is loaded up in a different way to mine so I had to re-engage my full programming brain to find the solution and make notes.)

- Muck around until I have extracted what I want, using Python in interpreter mode (very, very cool and one of my favourite Python features).

- Write an 11-line program to extract the words, count them and add them up (first-year programming level, nothing fancy).
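For readers who want to try the extraction step, here is a minimal sketch in Python. It is not the program described above: it swaps Beautiful Soup for the standard library’s `xml.etree.ElementTree` so that it runs without extra installs, and it assumes the usual WordPress export layout (`item` elements carrying `wp:post_type`, `wp:status`, `wp:post_date` and a `content:encoded` body). Splitting each raw post body on whitespace means HTML tags and typed links are counted as words. The second function dumps the counts to CSV for R; the column names `post`, `day` and `words` are hypothetical.

```python
import csv
import xml.etree.ElementTree as ET

# Namespaced tag for post bodies in a WordPress export (assumed; check your own file).
CONTENT_ENCODED = "{http://purl.org/rss/1.0/modules/content/}encoded"


def local_name(tag):
    """Drop any '{namespace}' prefix so wp:status matches whatever the export version."""
    return tag.rsplit("}", 1)[-1]


def post_word_counts(xml_data):
    """Return (post_date, word_count) pairs for published posts, in file order."""
    root = ET.fromstring(xml_data)
    counts = []
    for item in root.iter("item"):
        fields = {local_name(child.tag): (child.text or "").strip() for child in item}
        if fields.get("post_type") != "post" or fields.get("status") != "publish":
            continue  # skip pages, attachments and drafts
        body = item.findtext(CONTENT_ENCODED, default="")
        counts.append((fields.get("post_date", ""), len(body.split())))
    return counts


def write_counts_csv(counts, out):
    """Write one CSV row per post (post number, day, words) for loading into R."""
    writer = csv.writer(out)
    writer.writerow(["post", "day", "words"])
    for number, (post_date, words) in enumerate(counts, start=1):
        writer.writerow([number, post_date[:10], words])  # keep only 'YYYY-MM-DD'
```

To use it on a real export, read the file in binary mode, for example `post_word_counts(open("export.xml", "rb").read())` with your own file name in place of the placeholder, so that the XML declaration can set the encoding. When writing the CSV to disk, open the output file with `newline=""`, as the `csv` module documentation recommends.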

A number of you seasoned coders and educators out there will be staring at the last two steps, with a wavering finger, about to say “Hang on… have you just smoothed over an hour or more of student activity?” Yes, I did. What took me a couple of minutes could easily be a 1-2 hour job for a student. Which is, of course, why it’s useful to do this, because you find things like Beautiful Soup being imported as bs4 when it’s a locally installed module on OS X, something that has obviously changed since Nathan wrote his book.

Now, a good play with data would be incomplete without a side trip into the tasty world of R. I dumped out the values that I obtained from word counting into a Comma Separated Value (CSV) file and, digging around in the R manual, Visualize This, and Data Analysis with Open Source Tools by Philipp Janert (O’Reilly), I did some really simple plotting. I wanted to see if there was any rhyme or reason to my posting, as a first cut. Here’s the first graph of words per post. The vertical axis is the number of words and the horizontal axis is the post number. So, reading left to right, you’ll see my development over time.

Sadly, there’s no pattern there at all – not only can’t we see one by eye, the correlation tests of R also give a big fat NO CORRELATION.

Now, here’s a graph of the moving average over a 5 day window, to see if there is another trend we can see. Maybe I do have trends, but they occur over a larger time?

Uh, no. In fact, this one is worse for overall correlation. So there’s no real pattern here at all but there might be something lurking in the fine detail, because you can just about make out some peaks and troughs. (In fact, mucking around with the moving average window does show a pattern that I’ll talk about later.)
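A moving average is simple to reproduce if you want to experiment with the window size. This sketch uses a trailing window over consecutive posts rather than calendar days, which is an assumption of the sketch: R’s `stats::filter()`, for instance, centres its window by default, and the exact settings behind the plot are not stated. The word counts are made up.

```python
def moving_average(values, window=5):
    """Trailing moving average: the mean of each run of `window` consecutive values.

    The result has len(values) - window + 1 entries (none if there are too few values).
    """
    if window < 1:
        raise ValueError("window must be at least 1")
    return [sum(values[i:i + window]) / window
            for i in range(len(values) - window + 1)]


words_per_post = [100, 200, 300, 400, 500, 600]  # made-up word counts
print(moving_average(words_per_post, window=5))  # [300.0, 400.0]
```

Changing `window` is the quickest way to look for the peaks and troughs that only show up at some window sizes.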

However, those of you who are used to reading graphs will have noticed something about the label on the x-axis. It’s labelled wp$day, which would imply that I was plotting post day against average or count and, of course, I’m not. There have not been 151 days since January the 1st, but there have been days when I have posted multiple times. At the moment, for a number of reasons, this isn’t clear to the reader. More importantly, the day on which I post is probably going to have a greater influence on me, as it determines my access to the Internet and the time I have available. During SIGCSE, I think I posted up to 6 times a day. Somewhere, this is lost in the structure of the data, which treats each post as an independent entity. Posts consume time and, as a result, a long post will reduce the chances of another long post on the same day, unless something unusual is going on.
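One way to make the day, rather than the post, the unit of analysis is to group posts by calendar date. A minimal sketch, assuming each post is a (`post_date`, word count) pair with dates in `YYYY-MM-DD HH:MM:SS` form, as in a WordPress export; the sample values are made up.

```python
from collections import defaultdict


def daily_totals(posts):
    """Map 'YYYY-MM-DD' to (number_of_posts, total_words), in date order."""
    per_day = defaultdict(lambda: [0, 0])
    for post_date, words in posts:
        day = post_date[:10]  # drop the time of day
        per_day[day][0] += 1
        per_day[day][1] += words
    return {day: tuple(per_day[day]) for day in sorted(per_day)}


sample = [("2012-03-01 08:00:00", 900), ("2012-03-01 13:30:00", 350),
          ("2012-03-01 21:10:00", 400), ("2012-03-02 09:00:00", 1100)]
print(daily_totals(sample))
# {'2012-03-01': (3, 1650), '2012-03-02': (1, 1100)}
```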

There is a lot more analysis left to do here and it will take more time than I have today, unfortunately. But I’ll finish it off next week and get back to you, in case you’re interested.

What do I need to do next?

- Relabel my graphs so that it is much clearer what I am doing.

- If I am looking for structure, then I need to start looking at more obvious influences and, in this case, given there’s no other structure we can see, this probably means time-based grouping.

- I need to think about what else I should include in determining a pattern to my posts. Weekday/weekend? Maybe my own calendar will tell me if I was travelling or really busy?

- Establish if there’s any reason for a pattern at all!
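The weekday/weekend question is easy to test once each post has a date. A hedged sketch, assuming (`post_date`, word count) pairs with ISO-style dates and treating Saturday and Sunday as the weekend; the sample posts are invented.

```python
from datetime import date
from statistics import mean


def weekday_vs_weekend(posts):
    """Mean words per post on weekdays versus weekends (Saturday and Sunday)."""
    groups = {"weekday": [], "weekend": []}
    for post_date, words in posts:
        day = date.fromisoformat(post_date[:10])
        key = "weekend" if day.weekday() >= 5 else "weekday"  # Monday is 0, Sunday is 6
        groups[key].append(words)
    return {key: mean(values) if values else None for key, values in groups.items()}


sample_posts = [("2012-05-04 10:00:00", 600),   # Friday
                ("2012-05-05 10:00:00", 400),   # Saturday
                ("2012-05-06 10:00:00", 800),   # Sunday
                ("2012-05-07 10:00:00", 1000)]  # Monday
print(weekday_vs_weekend(sample_posts))  # {'weekday': 800, 'weekend': 600}
```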

As a final note, novels ‘officially’ start at 40,000 words, although they tend to fall into the 80-100,000 range. So, not only have I written a novel in the past 4 months, I am most likely on track to write two more by the end of the year, because I will produce roughly 160-180,000 more words this year. This is not the year of blogging, this is the year of a trilogy!

Next year, my blog posts will all be part of a rich saga involving a family of boy wizards who live on the wrong side of an Ice Wall next to a land that you just don’t walk into. On Mars. Look for it on Amazon. Thanks for reading!

Grand Challenges – A New Course and a New Program

Posted: May 4, 2012 Filed under: Education | Tags: advocacy, challenge, curriculum, design, education, educational problem, equality, grand challenges, higher education, learning, reflection, teaching, universal principles of design

Oh, the poor students that I spoke to today. We have a new degree program starting, the Bachelor of Computer Science (Advanced), and it’s been given to me to coordinate and set up the first course: Grand Challenges in Computer Science, a first-year offering. This program (and all of its unique components) is aimed at students who have already demonstrated that they have got their academics sorted – a current GPA of 6 or higher (out of 7, that’s A equivalent or Distinctions for those who speak Australian), or an ATAR (Australian Tertiary Admission Rank) of 95+ out of 100. We identified some students who met the criteria and might want to be in the degree, and also sent out a general advertisement as some people were close and might make the criteria with a nudge.

These students know how to do their work and pass their courses. Because of this, we can assume some things and then build to a more advanced level.

Now, Nick, you might be saying, we all know that you’re (not so secretly) all about equality and accessibility. Why are you running this course that seems so… stratified?

Ah, well. Remember when I said you should probably feel sorry for them? I talked to these students about the current NSF Grand Challenges in CS, as I’ve already discussed, and pointed out that, given that the students in question had already displayed a degree of academic mastery, they could go further. In fact, they should be looking to go further. I told them that the course would be hard and that I would expect them to go further, challenge themselves and, as a reward, they’d do amazing things that they could add to their portfolios and their experience bucket.

I showed them that Cholera map and told them how smart data use saved lives. I showed them We Feel Fine and, after a slightly dud demo where everyone I clicked on had drug issues, I got them thinking about the sheer volume of data that is out there, waiting to be analysed, waiting to tell us important stories that will change the world. I pretty much asked them what they wanted to be, given that they’d already shown us what they were capable of. Did they want to go further?

There are so many things that we need, so many problems to solve, so much work to do. If I can get some good students interested in these problems early and provide a coursework system to help them to develop their solutions, then I can help them to make a difference. Do they have to? No, course entry is optional. But it’s so tempting. Small classes with a project-based assessment focus based on data visualisation: analysis, summarisation and visualisation in both static and dynamic areas. Introduction to relevant philosophy, cognitive fallacies, useful front-line analytics, and display languages like R and Processing (and maybe Julia). A chance to present to their colleagues, work with research groups, do student outreach – a chance to be creative and productive.

I will, of course, take as much of the course as I can, having worked on it with these students, and feed parts of it into outreach to schools and other parts into different levels of our other degrees. Next year, I’ll write a brand new grand challenges course and do it all again. So this course is partly about forming a new community core, a group of creative and accomplished leaders, but it is also about making this knowledge infectious, something for students to strive towards now that they know a good mark will get them into a fascinating program. But I want all of it to be useful elsewhere, because if it’s good here, then (with enough scaffolding) it will be good elsewhere. Yes, I may have to slow it down elsewhere but that means that the work done here can help many courses in many ways.

I hope to get a good core of students and I’m really looking forward to seeing what they do. Are they up for the challenge? I guess we’ll find out at the end of second semester.

But, so you know, I think that they might be. Am I up for it?

I certainly hope so! 🙂

Saving Lives With Pictures: Seeing Your Data and Proving Your Case

Posted: May 2, 2012 Filed under: Education | Tags: advocacy, analytics, authenticity, cholera outbreak, data visualisation, design, education, higher education, learning, teaching, teaching approaches, voronoi

From Wikipedia, original map by John Snow showing the clusters of cholera cases in the London epidemic of 1854

This diagram is fascinating for two reasons: firstly, because we’re human, we wonder about the cluster of black dots and, secondly, because this diagram saved lives. I’m going to talk about the 1854 Broad Street Cholera outbreak in today’s post, but mainly in terms of how the way that you represent your data makes a big difference. There will be references to human waste in this post and it may not be for the squeamish. It’s a really important story, however, so please carry on! I have drawn heavily on the Wikipedia page, as it’s a very good resource in this case, but I hope I have added some good thoughts as well.

19th Century London had a terrible problem with increasing population and an overtaxed sewerage system. Underfloor cesspools were overfilling and the excess was being taken and dumped into the River Thames. Only one problem. Some water companies were taking their supply from the Thames. For those who don’t know, this is a textbook way to distribute cholera – contaminating drinking water with infected human waste. (As it happens, a lack of cesspool mapping meant that people often dug wells near foul ground. If you ever get a time machine, cover your nose and mouth and try not to breathe if you go back before 1900.)

But here’s another problem – the idea that germs carried cholera was not the dominant theory at the time. People thought that foul air and bad smells (the miasma theory) carried the disease. Of course, from this century we can look back and think “Hmm, human waste everywhere, bugs everywhere, bad smells everywhere… ohhh… I see what you did there.” but we only have that hindsight thanks to early epidemiological studies such as those of John Snow, a London physician of the 19th Century.

John Snow recorded the locations of the households where cholera had broken out, on the map above. He did this by walking around and talking to people, with the help of a local assistant curate, the Reverend Whitehead, and, importantly, working out what they had in common with each other. This turned out to be a water pump on Broad Street, at the centre of this map. If people got their water from Broad Street then they were much more likely to get sick. (Funnily enough, monks who lived in a monastery adjacent to the pump didn’t get sick. Because they only drank beer. See? It’s good for you!) John Snow was a skeptic of the miasma theory but didn’t have much else to go on. So he went looking for a commonality, in the hope of finding a reason, or a vector. If foul air wasn’t the vector – then what was spreading the disease?

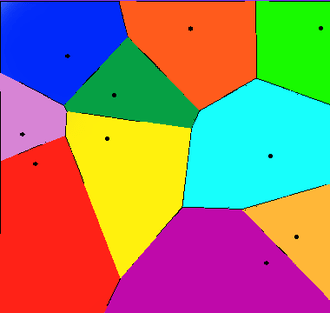

Snow divided the map into separate compartments, one around each pump, so that each compartment contained all of the people for whom that pump was the closest and, therefore, most likely the one they used. This is what we would now call a Voronoi diagram, and it is widely used to show things like the neighbourhoods served by particular shops or, using the Manhattan distance (distance measured along a grid of streets), the effect of roads on access to those shops.

A Voronoi diagram from Wikipedia showing 10 shops, in a flat city. The cells show the areas that contain all the customers who are closest to the shop in that cell.

What was interesting about the Broad Street cell was that its boundary contained most of the cholera cases. The Broad Street pump was the closest pump to most people who had contracted cholera and, for those who had another pump slightly closer, it was reported to have better tasting water (???) which meant that it was used in preference. (Seriously, the mind boggles at a flavour preference for a pump that was contaminated both by river water and an old cesspit some three feet away.)
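Assigning each house to its nearest pump is all a Voronoi diagram does, so Snow’s compartments can be approximated by finding the closest pump for every case and tallying the results. The sketch below supports both straight-line (Euclidean) and Manhattan distance; the pump names and every coordinate are invented for illustration and are not Snow’s data.

```python
from collections import Counter
from math import dist


def manhattan(a, b):
    """Distance along a street grid: only east-west and north-south moves count."""
    return abs(a[0] - b[0]) + abs(a[1] - b[1])


def nearest_site(point, sites, metric=dist):
    """Name of the site closest to `point`, i.e. the Voronoi cell the point lies in.

    Ties go to whichever site comes first in `sites`.
    """
    return min(sites, key=lambda name: metric(point, sites[name]))


def cases_per_cell(cases, sites, metric=dist):
    """How many cases fall inside each site's Voronoi cell."""
    return Counter(nearest_site(case, sites, metric) for case in cases)


# Hypothetical pumps and cholera cases on an arbitrary grid (not Snow's data).
pumps = {"Broad Street": (0.0, 0.0), "North pump": (0.0, 10.0), "East pump": (10.0, 0.0)}
cases = [(1.0, 1.0), (-2.0, 1.5), (0.5, -3.0), (2.0, 2.5), (6.0, 1.0), (1.0, 8.0)]

print(cases_per_cell(cases, pumps))             # straight-line distance
print(cases_per_cell(cases, pumps, manhattan))  # distance along the streets
```

With real data, a cell that holds far more cases than its neighbours is exactly the kind of signal that pointed at the Broad Street pump.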

Snow went to the authorities with sound statistics based on his plots, his interviews and his own analysis of the patterns. His microscopic analysis had turned up no conclusive evidence, but his patterns convinced the authorities and the handle was taken off the pump the next day. (As Snow himself later said, not many more lives may have been saved by this particular action but it gave credence to the germ theory that went on to displace the miasma theory.)

For those who don’t know, the stink of the Thames was so awful during Summer, and so feared, that people fled to the country where possible. Of course, this option only applied to those with country houses, which left a lot of poor Londoners sweltering in the stink and drinking foul water. The germ theory gave a sound public health reason to stop dumping raw sewage in the Thames because people could now get past the stench and down to the real cause of the problem – the sewage that was causing the stench.

So John Snow had encountered a problem. The current theory didn’t seem to hold up so he went back and analysed the data available. He constructed a survey, arranged the results, visualised them, analysed them statistically and summarised them to provide a convincing argument. Not only is this the start of epidemiology, it is the start of data science. We collect, analyse, summarise and visualise, and this allows us to convince people of our argument without forcing them to read 20 pages of numbers.

This also illustrates the difference between correlation and causation – bad smells were always found with sewage but, because the bad smell was more obvious, it was seen as causative of the diseases that followed the consumption of contaminated food and water. This wasn’t a “people got sick because they got this wrong” situation, this was “households died, with children dying at a rate of 160 per 1000 born, with a lifespan of 40 years for those who lived”. Within 40 years, the average lifespan had gone up 10 years and, while infant mortality didn’t really come down until the early 20th century, for a range of reasons, identifying the correct method of disease transmission has saved millions and millions of lives.

So the next time your students ask “What’s the use of maths/statistics/analysis?” you could do worse than talk to them briefly about a time when people thought that bad smells caused disease, people died because of this idea, and a physician and scientist named John Snow went out, asked some good questions, did some good thinking, saved lives and changed the world.

More on our image – and the need for a revamp.

Posted: April 28, 2012 Filed under: Education | Tags: ALTA, curriculum, design, education, higher education, reflection, teaching, teaching approaches

Today, in between meetings with people about forming a cohesive ICT community and defining our identity, I saw a billboard as I walked along the streets of the Melbourne CBD.

A picture of a woman’s torso, naked except for a bra, with the slogan “Who said engineering was boring?”

Says it all, really, doesn’t it? I’ve long said that pairing a word with a negative is the best way to get people to think about the word itself rather than the more complex meaning of the negated phrase. Now, for a whole lot of people, a vaguely leery billboard is going to put the words “engineering” and “boring” together.

Some of these people will be young people in our target recruitment group – mid to late school – and this kind of stuff sticks.

The building the billboard was on was built by civil engineers, using systems designed by mechanical and electronic/electrical engineers, the pictures were produced on machines constructed by computer systems engineers and elecs, images constructed and edited through digital cameras by tech-savvy photographers and processed on systems built by software engineers, computer scientists, electronic artists and many, many other people who are all being insulted by the same poster they helped to support and create. (My apologies because I didn’t list everybody, but the sheer scale of the number of people who contributed to that is quite large!)

Today, on my way home, a giant hunk of steel, powered by two big balls of spinning flame, climbed up into the sky and, in an hour, crossed a distance that used to take weeks to traverse. Right now, I am communicating with you around the world using a machine built of metal, burnt oil residue and sand, that is sending information to you at nearly the speed of light, wherever you happen to be.

How, in anyone’s perverted lexicon, can that be anything other than exciting?

Got Vygotsky?

Posted: April 25, 2012 Filed under: Education | Tags: Csíkszentmihályi, curriculum, design, education, flow, games, higher education, learning, principles of design, resources, teaching, teaching approaches, tools, vygotsky, Zone of proximal development, ZPD

One of my colleagues drew my attention to an article in a recent Communications of the ACM (May 2012, vol 55, no 5; Education: “Programming Goes to School” by Alexander Repenning) discussing how we can broaden the participation of women and minorities in CS by integrating game design into middle school curricula (Thanks, Jocelyn!). The article itself is really interesting because it draws on a number of important theories in education and CS education but puts them together with a strong practical framework.

There’s a great diagram in it that shows Challenge versus Skills, and clearly illustrates that if you don’t set the challenge high enough, you get boredom. Set it too high, you get anxiety. In between the two, you have Flow (from Csíkszentmihályi’s definition, where this indicates being fully immersed, feeling involved and successful) and the zone of proximal development (ZPD).

Which brings me to Vygotsky. Vygotsky’s conceptualisation of the zone of proximal development is designed to capture that continuum between the things that a learner can do with help, and the things that a learner can do without help. Looking at the diagram above, we can now see how learners can move from bored (when their skills exceed their challenges) into the Flow zone (where everything is in balance) but can easily move into a space where they will need some help.

Most importantly, if we move upwards and out of the ZPD by increasing the challenge too soon, we reach the point where students start to realise that they are well beyond their comfort zone. What I like about the diagram above is that transition arrow from A to B that indicates the increase of skill and challenge that naturally traverses the ZPD but under control and in the expectation that we will return to the Flow zone again. Look at the red arrows – if we wait too long to give challenge on top of a dry skills base, the students get bored. It’s a nice way of putting together the knowledge that most of us already have – let’s do cool things sooner!

That’s one of the key aspects of educational activities: not that they are all described in terms of educational psychology, but that they show clear evidence of good design, with the clear vision of keeping students in an acceptably tolerable zone, even as we ramp up the challenges.

One of the key quotes from the paper is:

The ability to create a playable game is essential if students are to reach a profound, personally changing “Wow, I can do this” realization.

If we’re looking to make our students think “I can do this”, then it’s essential to avoid the zone of anxiety where their positivity collapses under the weight of “I have no idea how anyone can even begin to help me to do this.” I like this short article and I really like the diagram – because it makes it very clear when we overburden with challenge, rather than building up skill and challenge in a matched way.

Putting the pieces together: Constructive Feedback

Posted: April 6, 2012 Filed under: Education | Tags: card shouting, design, education, feedback, higher education, teaching, teaching approaches, tools

Over the last couple of days, I’ve introduced a scenario that examines the commonly held belief that negative reinforcement can have more benefit than positive reinforcement. I’ve then shown you, via card tricks, why the way that you construct your experiment (or the way that you think about your world) means that this becomes a self-fulfilling prophecy.

Now let’s talk about making use of this. Remember this diagram?

This shows what happens if, when you occupy a defined good or bad state, you then redefine your world so that you lose all notion of degree and construct a false dichotomy through partitioning. We have to think about the fundamental question here – what do we actually want to achieve when we categorise activities as good or bad?
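Partitioning alone can produce the “yelling works” pattern. As a sketch, assume the card exercise works like this: a hypothetical 48-card deck (2 to King, no Aces), high cards (J, Q, K) are praised, low cards (2, 3, 4) are shouted at, and the feedback has no effect whatsoever on the next card drawn. Enumerating every ordered pair of cards gives the exact odds of the next card moving the “expected” way.

```python
from fractions import Fraction
from itertools import permutations

# Hypothetical 48-card deck: values 2..13 (J=11, Q=12, K=13), four of each, no Aces.
DECK = [value for value in range(2, 14) for _ in range(4)]


def follow_up_odds(deck, gets_feedback, moved_as_expected):
    """Chance the next card moves the 'expected' way after a card that got feedback.

    Every ordered pair of distinct cards is equally likely, and the feedback
    itself has no influence on the second card at all.
    """
    hits = total = 0
    for first, second in permutations(deck, 2):
        if gets_feedback(first):
            total += 1
            hits += moved_as_expected(first, second)  # True counts as 1
    return Fraction(hits, total)


# Praise high cards and see how often the next card is worse.
praised_then_worse = follow_up_odds(DECK, lambda c: c >= 11, lambda a, b: b < a)
# Shout at low cards and see how often the next card is better.
yelled_then_better = follow_up_odds(DECK, lambda c: c <= 4, lambda a, b: b > a)

print(praised_then_worse, yelled_then_better)  # 40/47 40/47
```

Both come out at 40/47, roughly 85%: praise is usually followed by a worse card and shouting by a better one, purely because extreme cards tend to be followed by less extreme ones (regression towards the mean).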

Realistically, most highly praiseworthy events are unusual – the A+. Similarly, the F should be less represented across our students than the P and the “I’ve done nothing, I don’t care, give me 0” should be as unlikely as the A+. But, instead of looking at outcomes (marks), let’s look at behaviours. Now I’m going to construct my feedback to students to address the behaviour or approach that they employed to achieve a certain outcome. This allows me to separate the B+ of a gifted student who didn’t really try from the B+ of a student who struggled and strived to achieve it. It also allows me to separate the F of someone who didn’t show up to class from the F of someone who didn’t understand the material because their study habits aren’t working. Finally, this allows me to separate the A+ of achievement from the A+ of plagiarism or cheating.

Under stress, I expect people to drop back down in their ability to reason and carry out higher-level thought. We could refer to Maslow or read any book on history. We could invoke Hick’s Law (decision time increases as a function of the number of choices) and factor in pilot thresholds, decision-making fatigue and all of that. Or we could remember what it was like to be undergraduates. 🙂

Let me break down student behaviours into unacceptable, acceptable and desirable behaviours, and allocate the same nomenclature that I did for cards. So the unacceptable are now the 2, 3 and 4, the acceptable are 5-10, and the desirable are J-K. (The numbers don’t matter, I’m after the concept.) When I construct feedback for my students, I want to encourage certain behaviours and discourage others. Ideally, I want to even develop more of certain behaviours (putting more Kings in the deck, effectively). But what happens if I don’t discourage the unacceptable behaviours?

Under stress, under reduced cognitive function and reduced ability to make decisions, those higher level desirable behaviours are most likely going to suffer. Let us model the student under stress as a stacked deck, where the Q and K have been removed. Not only do we have less possibility for praise but we have an increased probability of the student making the wrong decision and using a 2,3,4 behaviour. It doesn’t matter that we’ve developed all of these great new behaviours (added Kings), if under stress they all get thrown out anyway.

So we also have to look at removing or reducing the likelihood of the unacceptable behaviours. A student who has no unacceptable behaviour left in their deck will, regardless of the pressure, always behave in an acceptable manner. If you can also add desirable behaviours then they have an increased chance of doing well. This is where targeted feedback comes in at the behavioural level. I don’t need to worry about acceptable behaviours and I should be very careful in my feedback to recognise that the situation is asymmetric: any improvement from unacceptable is great, but a decline from desirable to acceptable may be nowhere near as catastrophic. Moderation, thought and student knowledge are all very useful concepts here. But we can also involve the student in useful and scalable ways.
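The deck model can be made concrete with a few lines of Python. This toy sketch uses the rank groupings from the text (2-4 unacceptable, 5-10 acceptable, J-K desirable, four cards of each rank, no Aces) and compares an unstressed deck, a stressed deck with the Q and K removed, and a stressed deck from which the unacceptable behaviours have also been removed.

```python
from fractions import Fraction

# Behaviour categories, using the card ranks from the text (no Aces in this deck).
UNACCEPTABLE = ["2", "3", "4"]
ACCEPTABLE = ["5", "6", "7", "8", "9", "10"]
DESIRABLE = ["J", "Q", "K"]


def make_deck(removed=()):
    """Four cards of each rank, minus any ranks that have been removed."""
    return [rank for rank in UNACCEPTABLE + ACCEPTABLE + DESIRABLE
            for _ in range(4) if rank not in removed]


def behaviour_odds(deck):
    """Chance that a randomly drawn card (behaviour) falls in each category."""
    total = len(deck)
    return {
        "unacceptable": Fraction(sum(card in UNACCEPTABLE for card in deck), total),
        "acceptable": Fraction(sum(card in ACCEPTABLE for card in deck), total),
        "desirable": Fraction(sum(card in DESIRABLE for card in deck), total),
    }


normal = behaviour_odds(make_deck())
stressed = behaviour_odds(make_deck(removed={"Q", "K"}))
stressed_no_bad_habits = behaviour_odds(make_deck(removed={"Q", "K", "2", "3", "4"}))

for label, odds in [("normal", normal), ("stressed", stressed),
                    ("stressed, no 2-4", stressed_no_bad_habits)]:
    print(label, {category: str(p) for category, p in odds.items()})
```

Under these assumptions, stress raises the chance of an unacceptable behaviour from 1/4 to 3/10 and cuts the chance of a desirable one from 1/4 to 1/10; with the 2s, 3s and 4s gone, the unacceptable chance is 0 even under stress, and the desirable chance is 1/7.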

Raising student awareness of how their own process works is important. We use reflective exercises midway through our end-of-first-year programming course to develop student process awareness: what worked, what didn’t work, how can you improve. This gives students a framework for self-assessment that allows them to find their own 2s and their own Kings. By getting the students to do it, with feedback from us, they develop it in their own vocabulary, which makes it ideal for sharing with other students at a similar level. In fact, as the final exercise, we ask students to summarise their growth in their process awareness during the course and ask them for advice that they would give to other students about to start. Here are three examples:

Write code. Go beyond your practicals, explore the language, try things out for yourself. The more you code, the better you’ll get, so take every opportunity you can.

If I could give advice to a future … student, I would tell them to test their code as often as possible, while writing it. Make sure that each stage of your code is flawless before going onto the next stage.

If I could give one piece of advice to students …, it would be to start the coding early and to keep at it. Sometimes you can have a problem that you just can’t work out how to fix but if you start early you leave yourself time to be able to walk away and come back later. A lot of the time when you come back you will see the problem very quickly and wonder why you couldn’t see it before.

There are a lot of Kings in the above advice – in fact, let’s promote them and call them Aces, because these are behaviours that, with practice, will become habits, giving you a K at the same time as removing a 2: “Practise your coding skills.” “Test your code thoroughly.” “Start early.” Students also framed their lessons in terms of what not to do. As one student reflected, he knew that it would take him four days to write the code, so he was wondering why he had only started two days out – you could read the puzzlement in the words as he wrote them. He’d identified the problem and, by semester’s end, had removed that 2.

I’m not saying anything amazing or controversial here, but I hope that this has framed a common situation in a way that is useful to you. And you got to shout at cards, too. Bonus!

It’s Feedback Week! Yelling at Pilots is Good for Them!

Posted: April 4, 2012 Filed under: Education | Tags: card shouting, design, education, feedback, higher education, measurement, pilot training, reflection, teaching, teaching approaches

The next few posts will deal with effective feedback and the impact of positive and negative reinforcement. I’ll start off with a story that I often tell in lectures.

There’s a well-established belief among Air Force pilot instructors that negative reinforcement works better than positive reinforcement. Combat pilots are very highly trained, go through an extremely rigorous selection procedure and have to maintain a very high level of personal discipline and fitness. The difference between success and failure, effectively between life and death, can come down to one decision. During training, these pilots are put under constant stress to try to prepare them for the real situation.

The instructors have observed that these pilots respond better to being yelled at than to being congratulated. What’s their evidence? Well, if a pilot does something really well and is praised, chances are his or her performance will get worse. If, however, the pilot does something bad, or dangerous, and you yell at him or her, his or her performance will improve. Well, that’s a pretty simple framework. Let’s draw it as a diagram.

Now, of course, to see the impact of praise or abuse, you have to record what you did (the praise or abuse) and then what happened (improvement or decline). So we need to draw up some boxes. Ticks mean praise, crosses mean abuse. Up arrows mean improvement, down arrows mean decline. A tick in the box where the Praise (tick) column meets the Improvement (up arrow) row means that the pilot improved after praise. Let’s look at a picture.

So here we can see that, much as an instructor would expect, when I’m nice to you, you get worse more often than you get better: you get better once when praised, compared to getting worse three times. But, wow, when I yell at you, you get better far more often than you get worse!
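To make the bookkeeping concrete, here is a minimal Python sketch of the tick/cross grid, assuming each observation is recorded as a (feedback, outcome) pair. The praise counts follow the post (one improvement, three declines); the abuse counts are invented for illustration, since the post only says improvement was far more common. The `tally` function name is hypothetical.

```python
from collections import Counter


def tally(records):
    """Count how often each (feedback, outcome) pair occurs, like the tick/cross grid.

    feedback is "praise" (tick) or "abuse" (cross);
    outcome is "up" (improved next time) or "down" (declined next time).
    """
    counts = Counter(records)
    # Return the full 2x2 grid, with zero for combinations never observed.
    return {
        (feedback, outcome): counts[(feedback, outcome)]
        for feedback in ("praise", "abuse")
        for outcome in ("up", "down")
    }


# Hypothetical records: the praise column matches the post,
# the abuse column is made up for illustration.
records = [("praise", "up"), ("praise", "down"), ("praise", "down"), ("praise", "down"),
           ("abuse", "up"), ("abuse", "up"), ("abuse", "up"), ("abuse", "down")]
grid = tally(records)
for (feedback, outcome), n in grid.items():
    print(f"{feedback:>6} {outcome:>4}: {n}")
```

Keeping every cell, including zeros, matters: the argument rests on comparing whole columns, not just the cells that happen to be memorable.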

There is, of course, a trick here. Yes, it appears that shouting works better than praising but, without giving the game away in the comments (or Googling), do you know why? (The data is a reasonable approximation of the real situation, so there are no hidden arrows or ticks anywhere. 🙂 )

Now, true confession: the picture above is not actually of pilot training (I don’t have an F18 on my desk), but it is a reasonable approximation of the situation, although it comes from a different source. The graph above comes from a game called “Card Shouting” and I’ll tell you more about that in tomorrow’s post.

I Ran Out Of Time! (Why Are Software Estimates So Bad?)

Posted: March 24, 2012 Filed under: Education | Tags: curriculum, design, education, higher education, reflection, resources, teaching approaches

I read an interesting question on Quora regarding task estimation. The question, “Engineering Management: Why are software development task estimations regularly off by a factor of 2-3?”, had an answer wiki attached to it and then some quite long and specific answers. There is a lot there for anyone who works with students, and I’ll summarise here some of the points that I like to talk about with my students:

- The idea that if we plan hard enough, we can control everything: Planning gives us the illusion of control in many regards. If we are unrealistic in our assessment of our own abilities, or we don’t account for the unexpected, then we are almost bound to fail. Making big plans doesn’t work unless we also set concrete milestones, allocate the resources that we actually have and move from planning to doing.

- Poor discovery work: If you don’t look into what is actually required then you can’t achieve it. Doing any kind of task assessment without working out what you’re being asked to do, how long you have, what else you have to do and how you will know when you’re done is wasted effort.

- Failure to assess previous projects: Learn from your successes and your failures! How much time did you allocate last time? Was it enough? No? ADD MORE TIME! How closely related are the two projects? If one is a subset of another, what does this say about the time involved? Can you re-use elements from the previous project? Be critical of your previous work. What did you learn? What could you improve? What can you re-use? What do you need to never do again?

- Big hands, little maps: There’s a great answer on the linked web page that describes drawing a broad line on Google Maps at a high-level view and estimating the walking time for the trip. The devil is in the details! If you wave your hands in a broad way across a map, the task looks simple. You need to get down to the appropriate level to make a good estimate: too far down and you get caught up in minutiae; too far up and you get a false impression of plain sailing.
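The “failure to assess previous projects” point can be turned into simple arithmetic. The sketch below is one possible approach, not something taken from the Quora answers: assuming you have kept (estimated, actual) hours for past tasks, it scales a new estimate by the median ratio of actual to estimated time. The history values and the `calibrated_estimate` name are hypothetical.

```python
from statistics import median


def calibrated_estimate(raw_estimate, history):
    """Scale a raw estimate by the median overrun ratio seen in past tasks.

    history: list of (estimated_hours, actual_hours) pairs from earlier work.
    """
    # Ratio > 1 means the task took longer than planned; skip zero estimates.
    ratios = [actual / estimated for estimated, actual in history if estimated > 0]
    if not ratios:
        return raw_estimate  # nothing to learn from yet
    return raw_estimate * median(ratios)


# Hypothetical history: past tasks took two to two-and-a-half times as long as planned.
history = [(10, 25), (4, 8), (6, 15), (20, 50)]
print(calibrated_estimate(12, history))
```

The median is used rather than the mean so that one disastrous project doesn’t dominate the correction; with the history above, a 12-hour estimate becomes 30 hours.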

I found it to be an interesting question with lots of informative answers and a delightful thought experiment of walking the California coast. I hope you like it too!

Graphs, DAGs and Inverted Pyramids: When Is a Prerequisite Not a Prerequisite?

Posted: March 22, 2012 Filed under: Education | Tags: curriculum, design, education, higher education, sigcse, teaching, teaching approaches, tools

I attended a very interesting talk at SIGCSE called “Bayesian Network Analysis of Computer Science Grade Distributions” (Anthony and Raney, Baldwin-Wallace College). Their fundamental question was how they could develop computational tools to increase the graduation rate of students in their four-year degree. Motivated by a desire to make grade predictions, and to catch students before they fall off, they searched their records back to 1998 to find out whether student performance data could give them some answers.

One of their questions was: Are the prerequisites actually prerequisite? If they are, then there should be at least some correlation between attendance and performance in a prerequisite course and performance in the courses that depend upon it. I liked their approach because it took advantage of structures and data that they already had, to which they applied a number of different analytical techniques.

They started from a graph of the prerequisites, which should form a structure where you start from an entry subject and can progress all the way through to some sort of graduation point, but can only progress to later courses if you have the prereqs. (If we’re being Computer Science-y, prereq graphs can’t contain links that take you around in a loop, so they must be directed acyclic graphs (DAGs), but you can ignore that bit.) As it turns out, this structure converts easily into a Bayesian network, which is itself a DAG, so the analysis is a lot easier because we don’t have to justify any structural modification.
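For readers who do want the Computer Science-y bit, here is a minimal sketch of checking that a prerequisite graph really is a DAG, using Kahn’s topological sort: repeatedly take a course whose prerequisites have all been placed. If some courses can never be placed, the prerequisites form a loop. The course codes and the dictionary layout are assumptions for illustration, not the researchers’ data.

```python
from collections import deque


def topological_order(prereqs):
    """Return courses in an order where every prerequisite comes first.

    prereqs maps each course to the list of courses it directly requires.
    Raises ValueError if the prerequisites form a loop (the graph is not a DAG).
    """
    # Collect every course mentioned, as a course or as a prerequisite.
    courses = set(prereqs)
    for reqs in prereqs.values():
        courses.update(reqs)
    # in_degree counts unmet prerequisites; dependents holds the reverse edges.
    in_degree = {c: 0 for c in courses}
    dependents = {c: [] for c in courses}
    for course, reqs in prereqs.items():
        for req in reqs:
            in_degree[course] += 1
            dependents[req].append(course)
    # Start from entry subjects: courses with no prerequisites.
    ready = deque(sorted(c for c in courses if in_degree[c] == 0))
    order = []
    while ready:
        course = ready.popleft()
        order.append(course)
        for nxt in sorted(dependents[course]):
            in_degree[nxt] -= 1
            if in_degree[nxt] == 0:
                ready.append(nxt)
    if len(order) != len(courses):
        raise ValueError("prerequisite graph contains a cycle, so it is not a DAG")
    return order


# Hypothetical course codes, not the Baldwin-Wallace curriculum.
prereqs = {"CS200": ["CS100"], "CS300": ["CS200"], "CS310": ["CS200"],
           "CS400": ["CS300", "CS310"]}
print(topological_order(prereqs))
```

Python 3.9 and later also ship `graphlib.TopologicalSorter`, which does the same job; the hand-written version is shown here only to make the “no loops” requirement visible.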

Using one approach, the researchers found that they could estimate a missing mark in a student’s list of marks with an accuracy of 77%: that is, they correctly estimated the missing (A, B, C, D, F) grade 77% of the time, compared with 30% of the time if they didn’t take the prereqs into account.
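The researchers’ Bayesian network is richer than this, but the basic idea of using a prerequisite to fill in a missing grade can be sketched with a single-parent conditional frequency table: for each prerequisite grade, predict the dependent-course grade most often seen alongside it, and compare that against a baseline that always predicts the overall most common grade. This is a deliberately simplified stand-in, not the method from the talk. The function names and grade pairs are invented, and the accuracies are measured on the same toy data used to build the table, so they say nothing about the 77% and 30% figures.

```python
from collections import Counter, defaultdict


def fit_predictor(pairs):
    """Build a prerequisite-grade -> most-common-course-grade table, plus a baseline.

    pairs: non-empty list of (prereq_grade, course_grade) for students who took both.
    """
    by_parent = defaultdict(Counter)
    overall = Counter()
    for parent_grade, grade in pairs:
        by_parent[parent_grade][grade] += 1
        overall[grade] += 1
    fallback = overall.most_common(1)[0][0]  # baseline: ignore the prerequisite
    table = {parent: counts.most_common(1)[0][0] for parent, counts in by_parent.items()}
    return table, fallback


def predict(table, fallback, prereq_grade):
    """Predict a missing grade from the prerequisite grade, else use the baseline."""
    return table.get(prereq_grade, fallback)


# Hypothetical (prerequisite grade, dependent-course grade) pairs.
pairs = [("A", "A"), ("A", "A"), ("A", "B"),
         ("B", "B"), ("B", "B"), ("B", "C"),
         ("C", "C"), ("C", "C"), ("C", "B"),
         ("D", "D"), ("D", "D")]
table, fallback = fit_predictor(pairs)
with_prereq = sum(predict(table, fallback, p) == g for p, g in pairs) / len(pairs)
baseline = sum(fallback == g for _, g in pairs) / len(pairs)
print(f"with prerequisite: {with_prereq:.0%}, baseline: {baseline:.0%}")
```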

They presented a number of other interesting results, but one that I found both informative and amusing was their attempt to use an automated network learning algorithm to pick the most influential course in assessing how a student will perform across their degree. However, as they said themselves, they didn’t constrain the order of their analysis – although CS400 might depend upon CS300 in the graph, their algorithm just saw them as connected. Because of this, the network learning picked their final-year, top-level course as the most likely indicator of good performance. Well, yes, if you get an A in CSC430 then you’ve probably done pretty well up until now. The learning algorithm didn’t have course order as a constraint, so it just picked the best starting point from its perspective. (I thought that this really reinforced what the researchers were talking about: finding the answer here takes more than correlation and throwing computing power at it. We have to really understand what we want to make sure we get the right answer.)
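One simple way to express the missing ordering constraint is to restrict which edges a structure learner may consider, so that influence can only flow from earlier courses to later ones. The sketch below shows only that filtering step, assuming each course has a known year level; it is not the researchers’ order-constrained algorithm, and the function name, course codes and levels are hypothetical.

```python
def order_constrained_edges(candidate_edges, year_level):
    """Keep only edges that point from an earlier-year course to a later-year course.

    candidate_edges: (from_course, to_course) pairs an unconstrained learner might propose.
    year_level: course -> year in which it is normally taken.
    """
    return [(a, b) for a, b in candidate_edges if year_level[a] < year_level[b]]


# Hypothetical year levels and candidate edges.
year_level = {"CS100": 1, "CS200": 2, "CS300": 3, "CS430": 4}
candidates = [("CS430", "CS100"), ("CS100", "CS200"), ("CS430", "CS300"), ("CS200", "CS300")]
print(order_constrained_edges(candidates, year_level))
```

With these inputs, both edges leading out of CS430 are discarded, so the final-year course can no longer be chosen as the starting point for predicting earlier results.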

Machine learning is going to give you an answer, in many cases, but it’s always interesting to see how many implicit assumptions there are that we ignore. It’s like trying to build a pyramid by saying “Which stone is placed to indicate that we’ve finished”, realising it’s the capstone and putting that down on the ground and walking away. We, of course, have points requirements for degrees, so it gets worse because now you have to keep building and doing it upside down!

I’m certainly not criticising the researchers here – I love their work, I think that they’re very open about where they are trying to take this and I thought it was a really important point to drive home. Just because we see structures in a certain way doesn’t mean a machine will, so we always have to be careful how we explain them to machines, because we need useful information that can be used in our real teaching worlds. The researchers are going to work on order-constrained network learning to refine this and I’m really looking forward to seeing the follow-up on this work.

I am also sketching out some similar analysis for my new PhD student to do when he starts in April. Oh, I hope he’s not reading this because he’s going to be very, VERY busy. 🙂