Knowing the Tricks Helps You To Deal With Assumptions

Posted: September 10, 2014 Filed under: Education | Tags: authenticity, blogging, card shouting, collaboration, community, curriculum, data visualisation, design, education, educational problem, educational research, Heads Heads, higher education, Law of Small Numbers, random numbers, random sequence, random sequences, randomness, reflection, resources, students, teaching, teaching approaches, tools

I teach a variety of courses, including one called Puzzle-Based Learning, where we try to teach thinking and problem-solving techniques through the use of simple puzzles that don’t depend on too much external information. These domain-free problems have most of the characteristics of more complicated problems but you don’t have to be an expert in the specific area of knowledge to attempt them. The other thing that we’ve noticed over time is that a good puzzle is fun to solve, fun to teach and gets passed on to other people – a form of infectious knowledge.

Some of the most challenging areas to try and teach into are those that deal with probability and statistics, as I’ve touched on before in this post. As always, when an area is harder to understand, it actually requires us to teach better but I do draw the line at trying to coerce students into believing me through the power of my mind alone. But there are some very handy ways to show students the flaws in their assumptions about the nature of probability (and randomness), so that they are receptive to the idea that their models could need improvement (allowing us to work in that uncertainty) and can also start to understand probability correctly.

We are ferociously good pattern matchers and this means that we have some quite interesting biases in the way that we think about the world, especially when we try to think about random numbers, or random selections of things.

So, please humour me for a moment. I have flipped a coin five times and recorded the outcome here. But I have also made up three other sequences. Look at the four sequences for a moment and pick which one is most likely to be the one I generated at random – don’t think too much, use your gut:

1. Tails Tails Tails Heads Tails

2. Tails Heads Tails Heads Heads

3. Heads Heads Tails Heads Tails

4. Heads Heads Heads Heads Heads

Have you done it?

I’m just going to put a bit more working in here to make sure that you’ve written down your number…

I’ve run this with students and I’ve asked them to produce a sequence by flipping coins then produce a false sequence by making subtle changes to the generated one (turn heads into tails but change a couple along the way). They then write the two together on a board and people have to vote on which one is which. As it turns out, the chances of someone picking the right sequence are about 50/50, but I engineered that by starting from a generated sequence.

This is a fascinating article that looks at the overall behaviour of people. If you ask people to write down a five coin sequence that is random, 78% of them will start with heads. So, chances are, you’ve picked 3 or 4 as your starting sequence. When it comes to random sequences, most of us equate random with well-shuffled, and, on the large scale, 30 times as many people would prefer option 3 to option 4. (This is where someone leaps into the comments to say “A-ha” but, it’s ok, we’re talking about overall behavioural trends. Your individual experience and approach may not be the dominant behaviour.)

From a teaching point of view, this is a great way to break up the concepts of random sequences and some inherent notion that such sequences must be disordered. There are 32 different ways of flipping 5 coins in a strict sequence like this and all of them are equally likely. It’s only when we start talking about the likelihood of getting all heads versus not getting all heads that the aggregated event of “at least one tail” starts to be more likely.
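The counting argument can be checked directly. Here is a minimal sketch in Python, assuming a fair coin and independent flips; the function name `all_sequences` is purely illustrative. It lists every five-flip sequence, gives each the same probability, and then compares one specific sequence (all heads) with the aggregated event of everything else.

```python
from fractions import Fraction
from itertools import product

def all_sequences(flips=5):
    """Return every ordered heads/tails sequence of the given length."""
    return list(product("HT", repeat=flips))

sequences = all_sequences()
# Assumption: a fair coin and independent flips, so each sequence is equally likely.
p_each = Fraction(1, len(sequences))

# "All heads" is a single sequence; "at least one tail" is every other sequence.
p_all_heads = sum(p_each for s in sequences if set(s) == {"H"})
p_at_least_one_tail = 1 - p_all_heads

print(len(sequences))        # 32
print(p_each)                # 1/32
print(p_at_least_one_tail)   # 31/32
```

Any one of the four sequences in the quiz above has the same 1/32 chance; the 31/32 figure only appears once 31 different sequences are lumped together into one event.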

How can we use this? One way is getting students to write down their sequences and then asking them to stand up, then sit down when your ‘call’ (from a script) goes the other way. If almost everyone is still standing at heads then you’ve illustrated that you know something about how their “randomisers” work. A lot of people (if your class is big enough) should still be standing when the final coin is revealed and this we can address. Why do so many people think about it this way? Are we confusing random with chaotic?

The Law of Small Numbers (Tversky and Kahneman), also mentioned in the post, is basically that people generalise too much from small samples and expect small samples to act like big ones. In your head, if the grand pattern over time could be re-sorted into “heads, tails, heads, tails,…” then small sequences must match that or they just don’t look right. This is an example of the logical fallacy called a “hasty generalisation” but with a mathematical flavour. We are strongly biassed towards the validity of our experiences, so when we generate a random sequence (or pick a lucky door or win the first time at poker machines) then we generalise from this small sample and can become quite resistant to other discussions of possible outcomes.

If you have really big classes (367 or more) then you can start a discussion on random numbers by asking people what the chances are that any two people in the room share a birthday. Given that there are only 366 possible birthdays, the Pigeonhole principle states that two people must share a birthday as, in a class of 367, there are only 366 birthdays to go around so one must be repeated! (Note for future readers: don’t try this in a class of clones.) There are lots of other interesting thinking examples in the link to Wikipedia that help you to frame randomness in a way that your students might be able to understand better.
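For classes smaller than 367 the answer is a probability rather than a certainty. The sketch below is one way to compute it in Python. It assumes, which the discussion above does not, that birthdays are independent and spread evenly over the 366 possible dates; the function name and the `days` parameter are hypothetical.

```python
from fractions import Fraction

def shared_birthday_probability(class_size, days=366):
    """Probability that at least two people in the class share a birthday.

    Assumption (for illustration only): birthdays are independent and
    uniformly spread over `days` possible dates.
    """
    p_all_different = Fraction(1)
    for already_taken in range(class_size):
        # The next person must avoid every birthday already taken.
        p_all_different *= Fraction(max(days - already_taken, 0), days)
    return 1 - p_all_different

for n in (10, 23, 50, 367):
    print(n, float(shared_birthday_probability(n)))
```

Under those assumptions the result is exactly 1 only when the class reaches 367, which is the Pigeonhole case, but it climbs quickly for much smaller classes.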

I’ve used a lot of techniques before, including the infamous card shouting, but the new approach from the podcast is a nice and novel angle to add some interest to a class where randomness can show up.

A Puzzling Thought

Posted: September 19, 2012 Filed under: Education | Tags: advocacy, authenticity, card shouting, collaboration, curriculum, design, education, educational problem, educational research, ethics, feedback, higher education, in the student's head, measurement, principles of design, reflection, resources, student perspective, teaching, teaching approaches, thinking

Today I presented one of my favourite puzzles, the Monty Hall problem, to a group of Year 10 high school students. Probability is a very challenging area to teach because we humans seem to be so very, very bad at grasping it intuitively. I’ve written before about Card Shouting, where we appear to train cards to give us better results by yelling at them, and it becomes all too clear that many people have instinctive models of how the world works that are neither robust nor transferable. This wouldn’t be a problem except that:

- it makes it harder to understand science,

- the real models become hard to believe because they’re counter-intuitive, and

- casinos make a lot of money out of people who don’t understand probability.

Monty Hall is simple. There are three doors and behind one is a great prize. You pick a door but it doesn’t get opened. The host, who knows where the prize is, opens one of the doors that you didn’t pick but the door that he/she opens is always going to be empty. So the host, in full knowledge, opens a known empty door, but it has to be one that you didn’t pick. You then have a choice to switch to the door that you didn’t pick and that hasn’t been opened, or you can stay with your original pick.

Now let’s fast forward to the fact that you should always switch because you have a 2/3 chance of getting the prize if you do (no, not 50/50) so switching is the winning strategy. Going into today, what I expected was:

- Initially, most students would want to stay with their original choice, having decided that there was no benefit to switching or that it was a 50/50 deal so it didn’t make any sense.

- At least one student would actively reject the idea.

- With discussion and demonstration, I could get students thinking about this problem in the right way.

The correct mental framework for Monty Hall is essential. What are the chances, with 1 prize behind 3 doors, that you picked the right door initially? It’s 1/3, right? So the chance that you didn’t pick the correct door is 2/3. Now, if you just swapped randomly, there’d be no advantage but this is where you have to understand the problem. There are 2 doors that you didn’t pick and, by elimination, these 2 doors contain the prize 2/3 of the time. The host knows where the prize is so the host will never open a door and show you the prize, the host just removes a worthless door. Now you have two sets of doors – the one you picked (correct 1/3 of the time) and the remaining door from the unpicked pair (correct 2/3 of the time). So, given that there’s only one remaining door to pick in the unpicked pair, by switching you increase your chances of winning from 1/3 to 2/3.
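The same argument can be written as an exact enumeration. This is a small Python sketch, assuming the prize location and your first pick are each uniform and independent of each other; it follows the rules described above (the host always opens an empty door that you didn’t pick).

```python
from fractions import Fraction
from itertools import product

DOORS = (0, 1, 2)

stay_wins = Fraction(0)
switch_wins = Fraction(0)

# Assumption: prize location and first pick are uniform and independent,
# so the 9 (prize, pick) pairs are equally likely.
cases = list(product(DOORS, DOORS))
for prize, pick in cases:
    weight = Fraction(1, len(cases))
    if pick == prize:
        # The host can open either empty door; staying wins, switching loses.
        stay_wins += weight
    else:
        # The host must open the only other empty door, so the remaining
        # unopened door hides the prize: switching wins.
        switch_wins += weight

print(stay_wins, switch_wins)   # 1/3 2/3
```

Notice that the host’s choice never appears as a source of randomness in the outcome: which door gets opened is forced whenever your first pick was wrong, and that is exactly where the extra 1/3 comes from.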

Don’t believe me? Here’s an on-line simulator that you can run. (Ignore what it says about Internet Explorer, it tends to run on most things.)

Still don’t believe me? Here’s some Processing code that you can run locally and see the rates converge to the expected results of 1/3 for staying and 2/3 for switching.

This is a challenging and counter-intuitive result, until you actually understand what’s happening, and this clearly illustrates one of those situations where you can ask students to plug numbers into equations for probability but, when you actually ask them to reason mathematically, you suddenly discover that they don’t have the correct mental models to explain what is going on. So how did I approach it?

Well, I used Peer Instruction techniques to get the class to think about the problem and then vote on it. As expected, about 60% of the class were stayers. Then I asked them to discuss this with a switcher and to try and convince each other of the rightness of their actions. Then I asked them to vote again.

No significant change. Dang.

So I wheeled out the on-line simulator to demonstrate it working and to ensure that everyone really understood the problem. Then I showed the Processing simulation showing the numbers converging as expected. Then I pulled out the big guns: the 100 door example. In this case, you select from 100 doors and Monty eliminates 98 (empty) doors that you didn’t choose.

Suddenly, when faced with the 100 doors, many students became switchers. (Not surprising.) I then pointed out that the two problems (3 doors and 100 doors) had reduced to the same problem, except that the remaining door was the only door left standing from 2 and 99 doors respectively. And, suddenly, on the repeated vote, everyone’s a switcher. (I then ran the code on the 100 door example and had to apologise because the 99% ‘switch’ trace is so close to the top that it’s hard to see.)
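To compare the 3 door and 100 door versions side by side, here is a rough simulation sketch in Python. It is not the Processing code mentioned above; the function names, the trial count and the seed are all illustrative choices. It assumes the host opens every door except your pick and one other, and never reveals the prize.

```python
import random

def play(n_doors, switch, rng):
    """Play one game; return True if the player ends up with the prize."""
    prize = rng.randrange(n_doors)
    pick = rng.randrange(n_doors)
    # The host opens every door except the player's pick and one other,
    # never revealing the prize. The door left closed is the prize door,
    # unless the player already picked the prize, in which case it is
    # some empty door chosen at random.
    if pick == prize:
        others = [d for d in range(n_doors) if d != pick]
        left_closed = rng.choice(others)
    else:
        left_closed = prize
    final = left_closed if switch else pick
    return final == prize

def win_rate(n_doors, switch, trials=20_000, seed=1):
    """Estimate the win rate of always staying or always switching."""
    rng = random.Random(seed)
    wins = sum(play(n_doors, switch, rng) for _ in range(trials))
    return wins / trials

for n in (3, 100):
    print(n, "stay:", win_rate(n, False), "switch:", win_rate(n, True))
```

With enough trials the estimates should settle near 1/3 and 2/3 for 3 doors, and near 1/100 and 99/100 for 100 doors, which is the same structure with a more dramatic gap.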

Why didn’t the discussion phase change people’s minds? I think it’s because of the group itself, a junior group with very little vocabulary of probability. It would have been hard for them to articulate the reasons for change much beyond ‘gut feeling’ despite the obvious mathematical ability present. So, expecting this, I confirmed that they were understanding the correct problem by showing demonstration and extended simulation, which provided conflicting evidence to their previously held belief. Getting people to think about the 100 door model, which is a quite deliberate manipulation of the fact that 1/100 vs 99/100 is a far more convincing decision factor than 1/3 vs 2/3, allowed them to identify a situation where switching makes sense, validating what I presented in the demonstrations.

In these cases, I like to mull for a while to work out what I have and haven’t learned from this. I believe that the students had a lot of fun in the puzzle section and that most of them got what happened in Monty Hall, but I’d really like to come back to them in a year or two and see what they actually took away from today’s example.

Putting the pieces together: Constructive Feedback

Posted: April 6, 2012 Filed under: Education | Tags: card shouting, design, education, feedback, higher education, teaching, teaching approaches, tools

Over the last couple of days, I’ve introduced a scenario that examines the commonly held belief that negative reinforcement can have more benefit than positive reinforcement. I’ve then shown you, via card tricks, why the way that you construct your experiment (or the way that you think about your world) means that this becomes a self-fulfilling prophecy.

Now let’s talk about making use of this. Remember this diagram?

This shows what happens if, when you occupy a defined good or bad state, you then redefine your world so that you lose all notion of degree and construct a false dichotomy through partitioning. We have to think about the fundamental question here – what do we actually want to achieve when we categorise activities as good or bad?

Realistically, most highly praiseworthy events are unusual – the A+. Similarly, the F should be less represented across our students than the P and the “I’ve done nothing, I don’t care, give me 0” should be as unlikely as the A+. But, instead of looking at outcomes (marks), let’s look at behaviours. Now I’m going to construct my feedback to students to address the behaviour or approach that they employed to achieve a certain outcome. This allows me to separate the B+ of a gifted student who didn’t really try from the B+ of a student who struggled and strived to achieve it. It also allows me to separate the F of someone who didn’t show up to class from the F of someone who didn’t understand the material because their study habits aren’t working. Finally, this allows me to separate the A+ of achievement from the A+ of plagiarism or cheating.

Under stress, I expect people to drop back down in their ability to reason and carry out higher-level thought. We could refer to Maslow or read any book on history. We could invoke Hick’s Law – that time taken to make decisions increases as a function of the number of choices, and factor in pilot thresholds, decision making fatigue and all of that. Or we could remember what it was like to be undergraduates. 🙂

Let me break down student behaviours into unacceptable, acceptable and desirable behaviours, and allocate the same nomenclature that I did for cards. So the unacceptable are now the 2, 3, 4, the acceptable are 5-10, and the desirable are J-K. (The numbers don’t matter, I’m after the concept.) When I construct feedback for my students, I want to encourage certain behaviours and discourage others. Ideally, I want to even develop more of certain behaviours (putting more Kings in the deck, effectively). But what happens if I don’t discourage the unacceptable behaviours?

Under stress, under reduced cognitive function and reduced ability to make decisions, those higher level desirable behaviours are most likely going to suffer. Let us model the student under stress as a stacked deck, where the Q and K have been removed. Not only do we have less possibility for praise but we have an increased probability of the student making the wrong decision and using a 2,3,4 behaviour. It doesn’t matter that we’ve developed all of these great new behaviours (added Kings), if under stress they all get thrown out anyway.

So we also have to look at removing or reducing the likelihood of the unacceptable behaviours. A student who has no unacceptable behaviour left in their deck will, regardless of the pressure, always behave in an acceptable manner. If you can also add desirable behaviours then they have an increased chance of doing well. This is where targeted feedback comes in at the behavioural level. I don’t need to worry about acceptable behaviours and I should be very careful in my feedback to recognise that there is an asymmetric situation where any improvement from unacceptable is great but a decline from desirable to acceptable may be nowhere near as catastrophic. Moderation, thought and student knowledge are all very useful concepts here. But we can also involve the student in useful and scaleable ways.

Raising student awareness of how their own process works is important. We use reflective exercises midway through our end-of-first-year programming course to develop student process awareness: what worked, what didn’t work, how can you improve. This gives students a framework for self-assessment that allows them to find their own 2s and their own Kings. By getting the students to do it, with feedback from us, they develop it in their own vocabulary, which makes it ideal for sharing with other students at a similar level. In fact, as the final exercise, we ask students to summarise their growth in their process awareness during the course and ask them for advice that they would give to other students about to start. Here are three examples:

Write code. Go beyond your practicals, explore the language, try things out for yourself. The more you code, the better you’ll get, so take every opportunity you can.

If I could give advice to a future … student, I would tell them to test their code as often as possible, while writing it. Make sure that each stage of your code is flawless before going onto the next stage.

If I could give one piece of advice to students …, it would be to start the coding early and to keep at it. Sometimes you can have a problem that you just can’t work out how to fix but if you start early you leave yourself time to be able to walk away and come back later. A lot of the time when you come back you will see the problem very quickly and wonder why you couldn’t see it before.

There are a lot of Kings in the above advice – in fact, let’s promote them and call them Aces, because these are behaviours that, with practice, will become habits and they’ll give you a K at the same time as removing a 2. “Practise your coding skills.” “Test your code thoroughly.” “Start early.” Students also framed their lessons in terms of what not to do. As one student reflected, he knew that it would take him four days to write the code, so he was wondering why he only started two days out – you could read the puzzlement in the words as he wrote them. He’d identified the problem and, by semester’s end, had removed that 2.

I’m not saying anything amazing or controversial here, but I hope that this has framed a common situation in a way that is useful to you. And you got to shout at cards, too. Bonus!

Card Shouting: Teaching Probability and Useful Feedback Techniques by… Yelling at Cards

Posted: April 5, 2012 Filed under: Education | Tags: card shouting, education, feedback, fiero, games, higher education, resources, teaching, teaching approaches, tools

I introduced a teaching tool I call ‘Card Shouting’ into some of my lectures a while ago – usually those dealing with Puzzle Based Learning or thinking techniques. What does Card Shouting do? It demonstrates probability, shows why experimental construction is important and engages your class for the rest of the lecture. So what is Card Shouting?

(As a note, I’ve been a bit rushed today and I may not have put this the best way that I could. I apologise for that in advance and look forward to constructive suggestions for clarity.)

Take a standard deck of cards, shuffle it and put it face down somewhere where the class can see it. (You either need very big cards or a document camera so that everyone can see this in a large class. In a small class a standard deck is fine.)

Then explain the rules, with a little patter. Here’s an example of what I say: “I’m sick and tired of losing at Poker! I’ve decided that I want to train a pack of cards to give me the cards I want. Which ones do I want? High cards, lots of them! What don’t I want? Low cards – I don’t want any really low cards.”

Pause to leaf through the deck and pull out an Ace, King and Queen. Also grab a 2, 3 and 4. Any suit is fine. Hold up the Ace, King and Queen.

“Ok, these guys are my favourites. What I’m going to do, after this, is shuffle everything back in and draw a card from the top of the deck and flip it up so you can see it. If it’s one of these (A, K, Q), I want to hear positive things – I want ‘yeahs!’ and applause and good stuff. But if it’s one of these (hold up 2, 3 and 4) I want boos and hisses and something negative. If it’s not either – well, we don’t do anything.” (Big tip: put a list of the accepted positive and negative phrases on the board. It’s much easier than having to lecture people on not swearing.)

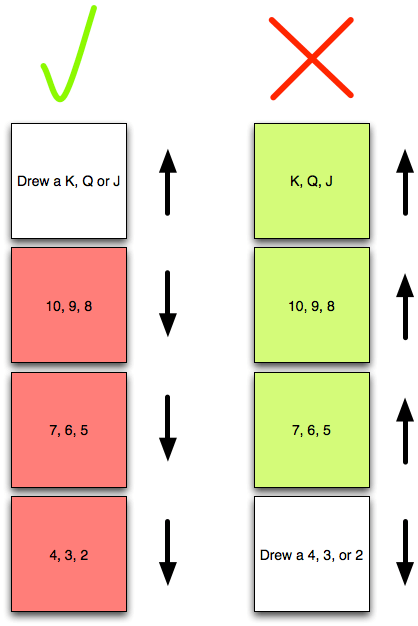

“Let’s find out what works best: praise or yelling – but we’re going to have to measure our outcomes. I want to know if my training is working! If I draw a bad card and abuse it, I want to see improvement – I don’t want to see one of the bad cards on the next draw. If I draw a good card, and praise it, I want the deck to give me another good card – if it’s lower than a J, I’ve wasted my praise!” Then work through with the class how you can measure positive and negative reaction from the cards – we’ve already set up the basis for this in yesterday’s post, so here’s that diagram again.

In this case, we record a tick in the top left hand box if we get a good card and, after praising it, we get another good card. We put a tick in top right hand box if we draw a bad card, abuse the deck, and then get anything that is NOT a bad card. Similarly for the bottom row, if we praise it and the next card is not good, we put a mark in the bottom left hand box and, finally, if we abuse the card and the next card is still bad, we record a mark in the bottom right hand box.

Now you shuffle and start drawing cards, getting the reactions and then, based on the card after the reaction, record improvement or decline in the face of praise and abuse. If you draw something that’s neither good NOR bad to start with – you can ignore it. We only care about the situation when we have drawn a good or a bad card and what the immediate next card is.

The pattern should emerge relatively quickly. Your card shouting is working! If you get a card in the bad zone, you shout at it, and it’s 3 times as likely to improve. But wait, praise doesn’t do anything for these cards! You can praise a card and, again, roughly 3 times as often – it gets worse and drops out of the praise zone!

Not only have we trained the deck, we’ve shown that abuse is a far more powerful training tool!

Wait.

What? Why is this working? I discussed this in outline yesterday when I pointed out it’s a commonly held belief that negative reinforcement is more likely to cause a beneficial outcome based on observation – but I didn’t say why.

Well, let’s think about the problem. First of all, to make the maths easy, I’m going to pull the Ace out of the deck. Sorry, Ace. Now I have 12 cards, 2 to K.

Let me redefine my good cards as J, Q, K. I’ve already defined my bad cards as 2, 3, 4. There are 12 different card values overall. Let me make the problem even simpler. If we encounter a good card, and praise it, what are the chances that I get another good card? (Let’s assume that we have put together a million shuffled decks, so the removal of one card doesn’t alter the probabilities much.)

Well, there are 3 good card values, out of 12, and 9 card values that aren’t good – so that’s a 9/12 (75%) chance that I will get a not good card after a good card. What about if I only have 12 cards? Well, if I draw a K, I now only have the Q and the J left in my good card set, compared to 9 other cards. So I have a 9/11 chance of getting a worse card! A 75% chance of the next card being worse is actually the best situation.
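The two cases can be checked by counting. This is a small Python sketch of that arithmetic using the simplified 12-value deck (2 to K, with J, Q, K as the good cards); the variable names are just for illustration.

```python
from fractions import Fraction

GOOD = {"J", "Q", "K"}
VALUES = ["2", "3", "4", "5", "6", "7", "8", "9", "10", "J", "Q", "K"]

# Case 1: so many shuffled decks that drawing one card barely changes the odds.
not_good = [v for v in VALUES if v not in GOOD]
p_worse_many_decks = Fraction(len(not_good), len(VALUES))

# Case 2: a single 12-card pile, and a good card (say the K) has just been drawn.
remaining = [v for v in VALUES if v != "K"]
p_worse_single_pile = Fraction(
    sum(1 for v in remaining if v not in GOOD), len(remaining)
)

print(p_worse_many_decks, p_worse_single_pile)   # 3/4 9/11
```

The 3/4 is the 9/12 from the text in lowest terms, and 9/11 is larger, so removing the drawn card only makes “decline after praise” more likely.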

You can probably see where this is going. I have a similar chance of showing improvement after I’ve abused the deck for giving me a bad card.

Shouting at the cards had no effect on the situation at all – success and failure are defined so that the chances of them occurring serially (success following success, failure following failure) are unlikely but, more subtly, we extend the definition of bad when we have a good card – and vice versa! If we draw any card from 5 to 10 normally, we don’t care about it, but if we want to stay in the good zone, then these 6 cards get added to the bad cards temporarily – we have effectively redefined our standards and made it harder to get the good result. Here’s a diagram, with colour for emphasis, to show how the usually neutral outcomes are grouped with the extreme. No wonder we get the results we do! The rules that we use have determined what our outcome MUST look like.
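If you’d rather see the tally boxes fill up without a classroom, here is a rough simulation sketch in Python. It assumes the classroom version of the game (A, K, Q are praised; 2, 3, 4 are abused) with a standard 52-card deck, reshuffled each round; the function name, the round count and the seed are arbitrary illustrative choices. Nothing in the code lets the praise or abuse influence the next card.

```python
import random
from collections import Counter

RANKS = ["2", "3", "4", "5", "6", "7", "8", "9", "10", "J", "Q", "K", "A"]
GOOD = {"A", "K", "Q"}   # praised
BAD = {"2", "3", "4"}    # shouted at

def shout_at_cards(rounds=2_000, seed=0):
    """Tally what follows each praised or abused card over many shuffled decks."""
    rng = random.Random(seed)
    tally = Counter()
    for _ in range(rounds):
        deck = RANKS * 4            # four suits; suits do not matter here
        rng.shuffle(deck)
        for card, next_card in zip(deck, deck[1:]):
            if card in GOOD:
                outcome = "stayed good" if next_card in GOOD else "declined"
                tally["praise", outcome] += 1
            elif card in BAD:
                outcome = "improved" if next_card not in BAD else "stayed bad"
                tally["abuse", outcome] += 1
            # Neutral cards (5 to J) trigger no reaction and are not recorded.
    return tally

tally = shout_at_cards()
for key in sorted(tally):
    print(key, tally[key])
```

The four counts correspond to the four boxes in the diagram. “Improved after abuse” and “declined after praise” should dominate by a factor of three or more, purely because of how the good and bad zones were defined.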

So, that’s Card Shouting. It seems a bit silly but it allows you to start talking about measurement and illustrate that the way you define things is important. It introduces probability in terms of counting. (You can also ask lots of other questions like “What would happen if good was 8-K and bad was 2-7?”)

My final post on this, tomorrow, is going to tie this all together to talk about giving students feedback, and why we have to move beyond simple definitions of positive and negative, to focus on adding constructive behaviours and reducing destructive behaviours. We’re going to talk about adding some Kings and removing some deuces. See you then.

It’s Feedback Week! Yelling at Pilots is Good for Them!

Posted: April 4, 2012 Filed under: Education | Tags: card shouting, design, education, feedback, higher education, measurement, pilot training, reflection, teaching, teaching approaches

The next few posts will deal with effective feedback and the impact of positive and negative reinforcement. I’ll start off with a story that I often tell in lectures.

There’s a well established fact among pilot instructors, in the Air Force, that negative reinforcement works better than positive reinforcement. Combat pilots are very highly trained, go through an extremely rigorous selection procedure and have to maintain a very high level of personal discipline and fitness. The difference between success and failure, life and death effectively, can come down to one decision. During training, these pilots are put under constant stress, to try and prepare them for the real situation.

The instructors have observed that these pilots respond better to being yelled at than being congratulated. What’s their evidence? Well, if a pilot does something really well, and is praised, chances are his or her performance will get worse. Whereas, if the pilot does something bad, or dangerous, and you yell at him or her, his or her performance will improve. Well, that’s a pretty simple framework. Let’s draw it as a diagram.

Now, of course, to see the impact of praise or abuse, you have to record what you did (the praise or abuse) and then what happened (improvement or decline). So we need to draw up some boxes. Ticks mean praise, crosses mean abuse. Up arrow means improvement, down arrow means decline. A tick in the box that you reach by selecting the column that belongs to Praise (tick) and the row that belongs to Improvement (up arrow) means that the pilot improved after praise. Let’s look at a picture.

So here we can see that, much as an instructor would expect, when I’m nice to you, you get worse more often than you get better. You get better once when praised, compared to getting worse three times. But, wow, when I yelled at you, you got better far more often than you got worse!

There is, of course, a trick here. Yes, it appears that shouting works better than praising but, without giving the game away in the comments (or Googling), do you know why? (The data is a reasonable approximation of the real situation, so there are no hidden arrows or ticks anywhere. 🙂 )

Now, true confession, the picture above is not actually of pilot training – I don’t have an F18 on my desk – but it will be a reasonable approximation of the situation, although it comes from a different source. The graph above comes from a game called “Card Shouting” and I’ll tell you more about that in tomorrow’s post.