Rule 0: Read Your Sources Before You Cite Them

Posted: May 12, 2012 Filed under: Education, Opinion | Tags: authenticity, blogging, education, ethics, higher education, knuth, plagiarism, reflection, teaching, teaching approaches

(This, once again, is a little more opinion/political but it does touch on some important teaching points and might be useful for a class in ethics. However, some of you might find my editorial stance disagrees with your perspective.)

Some of you will have seen that the Chronicle of Higher Education recently fired one of their blogging staff because she “did not meet The Chronicle’s basic editorial standards for reporting and fairness in opinion articles”. You can find the story in a number of places, and there’s a reasonable summary here, but, despite people trying to turn this into a debate on “left-wing victimisation clap trap” versus “freedom of speech” versus any number of the quite offensive straw men that were put up in the original blog, Naomi Schaefer Riley committed the cardinal sin.

She published work that made a claim which could not be substantiated by the references.

The title of her blog post was “The Most Persuasive Case for Eliminating Black Studies? Just Read the Dissertations.” but, as it turned out, she hadn’t. The dissertations weren’t available to read, so she wrote a scathing, dismissive and quite unpleasant article based on incomplete knowledge. Then, when called on it, she claimed that she didn’t need to read them to write a 500-word blog post.

Regardless of everything else in the post, regardless of who is right, this is just not acceptable. Had she started from a position of assessing the abstracts, drawing a long bow and then saying “But, of course, we have to see the dissertations”, I suspect she’d still have her job. Journalists do this all the time. However, as with scientists, there comes a point where you have to be able to pick up the grain of truth that you’re standing on and point to it. If it turns out that you’ve, effectively, made something up or, worse, misrepresented what you’ve read, then that’s unacceptable and in this case, quite rightly, the Chronicle asked her to go.

A book by Donald Knuth (which I won’t be speaking about today but there are not that many good Knuth shots. Don’t Google Image Search for him at work, because you’ll get an underwear model as well. [WHO IS NOT DONALD KNUTH, I HASTEN TO ADD.])

Of course, you know that I discovered that people had done this. How? The survey paper, to avoid plagiarising Knuth, had rephrased one of the clear and concise explanations – and they had introduced a distinctive way of representing the problem. (I still found the original much clearer.) It got to the point where I could tell who had read the original or the survey from which twist they had in their framing paragraph for a key point, without having to spend time looking at the references.

Why had people done this? Because Knuth wasn’t readily available. Being in a 1965 publication meant that many libraries had shunted these ‘old books’ to stores as newer volumes came in, and it took a week or two to get them back, sometimes longer. Sometimes these volumes were lost forever. (These days, I’m happy to say, there are many on-line sources for this paper. So there’s no excuse: if you’re in CS, go off now and read yourself some Knuth.) The survey paper was easy to find and was pretty well written. It was just unfortunate that a wrinkle had crept in that allowed us to tell Knuth from Knuth-prime.

It’s still no excuse. It’s a pretty basic rule for us – if you’ve only read the abstract, you haven’t read the paper. If you haven’t read the paper, you can’t cite the paper. If you’ve read a survey, then you can cite the survey but not one of the surveyed papers. But, categorically and set in stone, if you haven’t read the paper then you can’t criticise the paper.

Personally, I think that Naomi Schaefer Riley’s article was pretty badly written, unnecessarily vicious and was the kind of article I’d describe as “written by the food critic before they entered the restaurant”. But that’s only my opinion of the worth of the article. For that, should she lose her job? No, of course not – we differ, that’s life. But for writing an article that insinuated in the text, and stated in the heading, that she had read something, upon which she based a vitriolic criticism, which she then recanted, claiming she didn’t have enough time?

I could lose my job for that. I could even lose my PhD for that.

My Vice Chancellor could lose his job for that.

It’s a bit of a shame that it took some community nudging for the Chronicle to do something here, but I think they did the right thing. If you want to write about our world and our standards, then I think you pretty much have to exemplify them yourselves. It’s all about authenticity. Fairness. Ethics. These are things that I hope Naomi Schaefer Riley can think about, learn from and, at some point in the future, use to go forward constructively. Maybe no-one has ever called her on it before? Either way, the next time she shows up, I’ll happily read what she’s written – but I will be checking her references.

100 Killer Words (Pleas Reed Allowed)

Posted: May 6, 2012 Filed under: Education, Opinion | Tags: authenticity, blogging, education, higher education, identity, ivory tower, killer words, learning, lighthouse, reflection, teaching

Writing over 100,000 words in a year has an impact on a lot of things. It affects the way that you think about whatever it is that you’re writing on. It affects the way that you manage your time, because you have to put aside 30-60 minutes a day. It affects the way that you think about your contact with the world because, when you have a daily deadline, you have to find something interesting every single day.

I have always read a lot. I read quickly and I enjoy it a great deal. But, until recently, apart from technical writing, my reading wasn’t important enough to keep track of. I surfed some pages over there? Oh, that’s nice. But now? Now, if I read something and there’s a germ of an idea, I have to keep track of it because I will need that to put together a post, most often late at night or on weekends. My gadgets and browsers are full of half-ideas, links, open pages, sketches. It changes you, writing and thinking this much in such a short time.

Let me, briefly, tell you how I’ve changed this year. I don’t know what to call this year because it’s most certainly not “The Year of Living Dangerously” or “The Year My Voice Broke” and it’s most certainly not “The Year of Living Biblically”. But let’s leave that for the moment. Let me tell you how I’ve changed.

- I have never used my brain so much, for such an extended period. My day is now full of stories and influences, connections and images, thinking, analysing, preparing and presenting. This is changing me as a person – giving me depth, making me more able to discuss issues, drawing out a lot of the frustration and anger I’ve wrestled with for years.

- I now try to construct working solutions from what I have, rather than excise non-working components. If reading and thinking this much about education has taught me anything, it’s that there is no perfect system and there are no perfect people. Saying that your system would work if only people were better is not achieving anything. You have to build with what you’ve got. People are building amazing systems from ordinary people, inspiration and not much else. No matter how you draw up your standards, setting a perfection bar, which is very different from a quality bar, will just lead to failure, frustration and negativity.

- I can see the possibility of improvement. People, governments, companies, systems – they often disappoint me. I have had the luxury of reading across the world and writing about a small fraction of it. For every cruel, vicious, and stupid person, there are so many more people out there who are none of those things. I have long wondered whether our world will outlast me by much. Am I sure that it will? No. Am I more optimistic that it will? Yes.

But it’s not all beer and skittles. I also work far too much. Along the way, my workaholism has been severely re-engaged. I worked a full (long) week last week and yet here I am, with 5 hours’ work on Saturday and somewhere around 8-10 hours on Sunday. That’s not a good change and I have to work out how I can keep all the positive aspects – because the positives are magnificent – without getting drawn down into the maelstrom.

I would like to describe this year as “The Year of Living” because, in many ways, I’ve never felt so alive, so aware, so informed and so capable of changing things in a constructive way. But, until I nail the overwork thing, it doesn’t get that title.

For now, because I’ve written 100,000 words or, 100 kiloWords, I’m going to call it “The Year of Killer Words” and hope that, homophones aside, there’s some truth to that – that some of my words have brought light into the shadows and killed some monsters. Rather idealistically, that’s how I think of the job that we do – we bring light into dark places. Yes, a University can look like an ivory tower sometimes, and sometimes it is, but lighthouses look much the same – it’s the intention and the function that makes one an elitist nightmare and gives the other its worth and nobility.

That image, up the top? That’s what I think we’re doing when we do it right.

Saving Lives With Pictures: Seeing Your Data and Proving Your Case

Posted: May 2, 2012 Filed under: Education | Tags: advocacy, analytics, authenticity, cholera outbreak, data visualisation, design, education, higher education, learning, teaching, teaching approaches, voronoi

From Wikipedia, original map by John Snow showing the clusters of cholera cases in the London epidemic of 1854

This diagram is fascinating for two reasons: firstly, because we’re human, we wonder about the cluster of black dots and, secondly, because this diagram saved lives. I’m going to talk about the 1854 Broad Street Cholera outbreak in today’s post, but mainly in terms of how the way that you represent your data makes a big difference. There will be references to human waste in this post and it may not be for the squeamish. It’s a really important story, however, so please carry on! I have drawn heavily on the Wikipedia page, as it’s a very good resource in this case, but I hope I have added some good thoughts as well.

19th Century London had a terrible problem with increasing population and an overtaxed sewerage system. Underfloor cesspools were overfilling and the excess was being taken and dumped into the River Thames. Only one problem. Some water companies were taking their supply from the Thames. For those who don’t know, this is a textbook way to distribute cholera – contaminating drinking water with infected human waste. (As it happens, a lack of cesspool mapping meant that people often dug wells near foul ground. If you ever get a time machine, cover your nose and mouth and try not to breathe if you go back before 1900.)

But here’s another problem – the idea that germs carried cholera was not the dominant theory at the time. People thought that it was foul air and bad smells (the miasma theory) that carried the bugs. Of course, from this century we can look back and think “Hmm, human waste everywhere, bugs everywhere, bad smells everywhere… ohhh… I see what you did there.” but we only have that hindsight thanks to early epidemiological studies such as those of John Snow, a London physician of the 19th Century.

On the map above, John Snow recorded the locations of the households where cholera had broken out. He did this by walking around and talking to people, with the help of a local assistant curate, the Reverend Whitehead, and, importantly, by working out what the households had in common with each other. This turned out to be a water pump on Broad Street, at the centre of this map. If people got their water from Broad Street then they were much more likely to get sick. (Funnily enough, monks who lived in a monastery adjacent to the pump didn’t get sick. Because they only drank beer. See? It’s good for you!) John Snow was a skeptic of the miasma theory but didn’t have much else to go on. So he went looking for a commonality, in the hope of finding a reason, or a vector. If foul air wasn’t the vector – then what was spreading the disease?

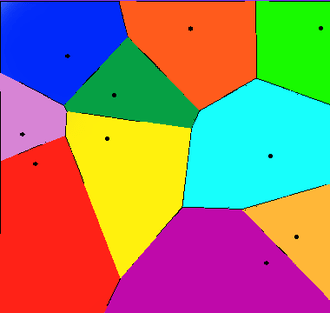

Snow divided the map into separate compartments, one for each pump, where each compartment contained all of the people for whom that pump was the closest and hence the one they would use. This is what we would now call a Voronoi diagram, and it is widely used to show things like the neighbourhoods that are serviced by certain shops or, using the Manhattan distance (distance measured along a grid of streets rather than in a straight line), the impact of roads on access to shops.

A Voronoi diagram from Wikipedia showing 10 shops, in a flat city. The cells show the areas that contain all the customers who are closest to the shop in that cell.

What was interesting about the Broad Street cell was that its boundary contained most of the cholera cases. The Broad Street pump was the closest pump to most people who had contracted cholera and, for those who had another pump slightly closer, it was reported to have better tasting water (???) which meant that it was used in preference. (Seriously, the mind boggles at a flavour preference for a pump that was contaminated both by river water and an old cesspit some three feet away.)
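Snow’s compartments can be reproduced computationally: each case belongs to the cell of whichever pump is nearest, and the argument rests on counting how many cases fall inside each cell. The following is a minimal sketch under stated assumptions: the pump names other than Broad Street, all of the coordinates and the case list are invented for illustration and are not Snow’s data. It uses straight-line (Euclidean) distance by default, with Manhattan distance as an alternative; real walking routes are captured exactly by neither.

```python
import math
from collections import Counter

# Hypothetical layout: coordinates are invented, NOT Snow's 1854 survey data.
PUMPS = {
    "Broad Street": (0.0, 0.0),
    "Pump B": (6.0, 0.0),  # hypothetical second pump
    "Pump C": (0.0, 7.0),  # hypothetical third pump
}

# Hypothetical household locations where cholera cases were recorded.
CASES = [
    (0.5, 1.0), (-1.0, 0.5), (1.5, -1.0), (2.0, 2.0),
    (5.5, 1.0), (0.5, 6.0), (-0.5, -0.5),
]


def euclidean(p, q):
    """Straight-line distance, 'as the crow flies'."""
    return math.dist(p, q)


def manhattan(p, q):
    """Distance travelled along a grid of perpendicular streets."""
    return abs(p[0] - q[0]) + abs(p[1] - q[1])


def nearest_pump(point, pumps, metric=euclidean):
    """Name of the pump whose Voronoi cell contains `point`.

    Ties go to whichever pump appears first in `pumps`.
    """
    return min(pumps, key=lambda name: metric(point, pumps[name]))


def cases_per_cell(cases, pumps, metric=euclidean):
    """Count how many cases fall inside each pump's cell."""
    return Counter(nearest_pump(case, pumps, metric) for case in cases)


if __name__ == "__main__":
    counts = cases_per_cell(CASES, PUMPS)
    for pump, n in counts.most_common():
        print(f"{pump}: {n} cases ({n / len(CASES):.0%})")
```

The choice of distance can change which cell a household falls in. For a household at (0, 0) with pumps at (3, 3) and (5, 0), the straight-line distances are about 4.24 and 5, so the first pump wins; the grid distances are 6 and 5, so the second pump wins.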

Snow went to the authorities with sound statistics based on his plots, his interviews and his own analysis of the patterns. His microscopic analysis had turned up no conclusive evidence, but his patterns convinced the authorities and the handle was taken off the pump the next day. (As Snow himself later said, not many more lives may have been saved by this particular action but it gave credence to the germ theory that went on to displace the miasma theory.)

For those who don’t know, the stink of the Thames was so awful during summer, and so feared, that people fled to the country where possible. Of course, this option only applied to those with country houses, which left a lot of poor Londoners sweltering in the stink and drinking foul water. The germ theory gave a sound public health reason to stop dumping raw sewage in the Thames because people could now get past the stench and down to the real cause of the problem – the sewage that was causing the stench.

So John Snow had encountered a problem. The current theory didn’t seem to hold up so he went back and analysed the data available. He constructed a survey, arranged the results, visualised them, analysed them statistically and summarised them to provide a convincing argument. Not only is this the start of epidemiology, it is the start of data science. We collect, analyse, summarise and visualise, and this allows us to convince people of our argument without forcing them to read 20 pages of numbers.

This also illustrates the difference between correlation and causation – bad smells were always found with sewage but, because the bad smell was more obvious, it was seen as causative of the diseases that followed the consumption of contaminated food and water. This wasn’t a “people got sick because they got this wrong” situation; this was “households died, with children dying at a rate of 160 per 1000 born, and a lifespan of 40 years for those who lived”. Within 40 years, the average lifespan had gone up 10 years and, while infant mortality didn’t really come down until the early 20th century, for a range of reasons, identifying the correct method of disease transmission has saved millions and millions of lives.

So the next time your students ask “What’s the use of maths/statistics/analysis?” you could do worse than talk to them briefly about a time when people thought that bad smells caused disease, people died because of this idea, and a physician and scientist named John Snow went out, asked some good questions, did some good thinking, saved lives and changed the world.

Oh, Perry. (Our Representation of Intellectual Development, Holds On, Holds on.)

Posted: April 30, 2012 Filed under: Education | Tags: advocacy, authenticity, dualism, education, higher education, learning, measurement, multiplicity, perry, reflection, research, resources, teaching, teaching approaches

I’ve spent the weekend working on papers, strategy documents, promotion stuff and trying to deal with the knowledge that we’ve had some major success in one of our research contracts – which means we have to employ something like four staff in the next few months to do all of the work. Interesting times.

One of the things I love about working on papers is that I really get a chance to read other papers and books and digest what people are trying to say. It would be fantastic if I could do this all the time but I’m usually too busy to tear things apart unless I’m on sabbatical or reading into a new area for a research focus or paper. We do a lot of reading – it’s nice to have a focus for it that temporarily trumps other more mundane matters like converting PowerPoint slides.

It’s one thing to say “Students want you to give them answers”, it’s something else to say “Students want an authority figure to identify knowledge for them and tell them which parts are right or wrong because they’re dualists – they tend to think in these terms unless we extend them or provide a pathway for intellectual development (see Perry 70).” One of these statements identifies the problem, the other identifies the reason behind it and gives you a pathway. Let’s go into Perry’s classification because, for me, one of the big benefits of knowing about this is that it stops you thinking that people are stupid because they want a right/wrong answer – that’s just the way that they think and it is potentially possible to change this mechanism or help people to change it for themselves. I’m staying at the very high level here – Perry has 9 stages and I’m giving you the broad categories. If it interests you, please look it up!

We start with dualism – the idea that there are right/wrong answers, known to an authority. In basic duality, the idea is that all problems can be solved and hence the student’s task is to find the right authority and learn the right answer. In full dualism, there may be right solutions but teachers may be in contention over this – so a student has to learn the right solution and tune out the others.

If this sounds familiar from political discourse and a lot of questionable scientific debate, that’s because it is. A large amount of scientific confusion is being caused by people who are functioning as dualists. That’s why ‘it depends’ or ‘with qualification’ doesn’t work on these people – such an answer offers neither a single right answer nor a fixed authority. Most of the time, you will simply be dismissed as holding an incorrect view, and tuned out.

As people progress intellectually, under direction or through exposure (or both), they can move to multiplicity. We accept that there can be conflicting answers, and that there may be no true authority, hence our interpretation starts to become important. At this stage, we begin to accept that there may be problems for which no solutions exist – we move into a more active role as knowledge seekers rather than knowledge receivers.

Then, we move into relativism, where we have to support our solutions with reasons that may be contextually dependent. Now we accept that which solution is better may change with viewpoint and context. By the end of this category, students should be able to understand the importance of making choices and also sticking by a choice that they’ve made, despite opposition.

This leads us into the final stage: commitment, where students become responsible for the implications of their decisions and, ultimately, realise that every decision that they make, every choice that they are involved in, has effects that will continue over time, changing and developing.

I don’t want to harp on this too much but this indicates one of the clearest divides between people: those who repeat the words of an authority, while accepting no responsibility or ownership, hence can change allegiance instantly; and those who have thought about everything and have committed to a stand, knowing the impact of it. If you don’t understand that you are functioning at very different levels, you may think that the other person is (a) talking down to you or (b) arguing with you under the same expectation of personal responsibility.

Interesting way to think about some of the intractable arguments we’re having at the moment, isn’t it?

The Unhappiest Bartender in Australia

Posted: April 24, 2012 Filed under: Education | Tags: advocacy, authenticity, education, higher education, reflection, teaching, teaching approaches

I’m not talking about students for this one, I’m talking about the scientific community. On reading yet more articles about the growing rate of retraction, on top of the inability to replicate key studies, it appears that we are at risk of losing our way. I need to be able to train my students for the world that they will work in – so I’m going to briefly discuss my beliefs and interview myself to talk about my fears of what happens when scientific integrity is trumped by mercenary and short-sighted values.

The executive summary is “Do science properly or do something else.” If you’re already practising science at a high level, with integrity, please leave work early and enjoy a beverage of your choice, at your own expense. I salute you! Come back and read this once you are refreshed. (This is a bit more opinionated than usual, so if you want to focus on my Learning and Teaching posts, you might want to read some of my previous posts or come back tomorrow. I welcome you to stay, however.)

I understand, to an extent, why people are taking questionable approaches to their work in order to achieve publication in the same way that I understand why students cheat sometimes. But comprehending the rationalisation does not mean that I condone the actions – far from it. In another blog I commented on the fact that some people change their behaviour when they drink. If they are aware that this is going to happen, then the excuse “I was drunk” is not an excuse. Getting drunk was an enabling step. If your choices, as a scientist, are leading you down dark paths then you have to look at the end of that path to see where you’re going. “That was where my path naturally led” isn’t valid when you know that you’re on the wrong road.

I’m pretty worried by some of the behaviour that people are practising to get ahead. But don’t think that I’m in so strong a position that I’m immune to the lure of the dark path – I want to keep my job, make good progress, get promoted, get grants, have an impact. Like everyone else, I want to change the world. The question is “What are you prepared to compromise in order to get to that stage?”

Do I feel pressure to publish? Yes! Am I willing to fabricate data to do so? No. Am I willing to cite ‘suggested papers’ that all appear to be from the editor of the special edition or a select group of friends? No. Am I willing to run an experiment 100 times and write up the single time it worked as if this was a general case? No!

But, wait, if you don’t meet your publication targets, doesn’t that have an impact on your career? Yes, possibly. I’m expected to publish at a very high level on a regular basis.

And if you don’t? Well, I can demonstrate my worth in other ways but research turns into publications, publications support grants, grants bring in people, people do research. Not publishing will have a serious impact on my ability to produce research.

So you’d bend a little, because your research is valuable and it’s in the greater interest for it to be published? Nice try, but no. I’d rather leave my job than compromise my principles in this regard.

Well, it’s really nice that you’ve got that level of agency but, hey, your wife has a stable income and the wolf isn’t at your door. Aren’t you just making an argument from privilege? Hmmm.

Well, that’s a good question. My response would normally be that there are many, many jobs that use some of what I have that don’t require me to have a strong set of scientific and personal ethics. I could teach computing courses and never have to worry about research ethics. I could write code as a small cog in a large company and not have to worry as much about experimental replication. I could tend bar, I guess, or maybe work in a shop, if jobs like that still exist in 10 years’ time and they’ll hire a 50-year-old. But, again, this assumes a level of skill transferability and agency that does presume a basis of privilege if I’m going to walk away from science and do something else.

But this assumes that you went in to be a scientist thinking that this kind of bad behaviour is just what scientists did, that ethics were optional, that publication by any means was acceptable – that reality was mutable when deadlines were tight. Let’s break this thinking now because I don’t want any students to come to my program thinking like that.

I believe that if you want to be a scientist, you have to accept that this comes with a package of ethical behaviours that are not optional.

Science has impact! Building on bad science gives you more bad science. This bad behaviour in science could be, and probably is, killing people. We’re potentially setting back scientific progress because of time wasted trying to build on experiments that don’t work. We are in the middle of a data deluge and picking from the many correct things is hard enough, without adding deceitful or misleading publications as well.

What concerns me, reading about increasing retraction rates and dodgy surveys, is that the questionable path to success may become the norm. People are already questioning perfectly good science, because of a growing mistrust fuelled by bad scientific behaviour, and “Well, I don’t know” is a de rigueur rejoinder in certain parts of the blogosphere.

I always talk about authenticity because it’s the backbone of my teaching. I have to believe it, or know it, or it just won’t work with the students. The day I think that our community is lost, I’ll no longer be able to train students to go to the fantasy land that I naively thought was reality and I’ll quit.

Come and find me, if I do, I’ll probably be working in a bar – and looking really unhappy.

SIGCSE, Keynote #2, Hal Abelson, “The midwife doesn’t get to keep the baby.”

Posted: March 3, 2012 Filed under: Education, Opinion | Tags: abelson, authenticity, DSpace, education, higher education, mit, MITx, OCW, reflection, resources, sigcse, teaching, teaching approaches

Well, another fantastic keynote and, for the record, that’s not the real title. The title of the talk was From Computational Thinking to Computational Values. For those who don’t know who Hal Abelson is, he’s a Professor of EE/CS at MIT who has made staggering contributions to pedagogy and the teaching of Computer Science over the years. He’s been involved with the first implementations of Logo, changed the way we think about using computer languages, has been a cornerstone of the Free Software Movement (including the Foundation), led the charge for OpenCourseWare (OCW) at MIT, published many things that other people would have been scared to publish and, basically, has spent a long time trying to make the world a better place.

It went without saying that, today, we were in for some inspiration and, no doubt, some sort of call to arms. We weren’t disappointed. What follows is as accurate a record as I could make, typing furiously. I took a vast quantity of notes over what was a really interesting talk and I’ll try to get the main points down here. Any mistakes are mine and I have tried to represent the talk without editorialising, although I have adjusted some of the phrasing slightly in places, so the words are, pretty much, Professor Abelson’s.

Professor Abelson started from a basic introduction of Computational Thinking (CT) but quickly moved on to how he thought that we’d not quite captured it properly in modern practice: it’s about how we look at this digital world and see it as a source of empowerment for everybody, as a life-changing view. Not just CT, but computational values.

What do we mean? We’re not only talking about cool ideas; these ideas should be empowering, and people should be able to use them to do great things and have an impact on the world.

He then went on to talk about Google’s Ngram viewer, which allows you to search all of the books that Google has scanned in and find patterns. You can use this to see how certain terms, ideas and names come and go over time. What’s interesting here is that (1) ascent to and descent from fame appears to be getting faster and (2) you can visualise all of this and get an idea of the half-life of fame (which was nearly the title of this post).
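One simple way to put a number on the “half-life of fame” is to take a term’s yearly frequency, find its peak, and count the years until the frequency first falls to half of that peak. The sketch below does exactly that; the frequency series is invented for illustration, and this definition is an illustrative assumption, not necessarily the measure used by the researchers behind the Ngram data.

```python
def half_life_after_peak(series):
    """Return (peak_year, years_to_half) for a {year: frequency} mapping.

    years_to_half is the number of years from the peak until the frequency
    first drops to half the peak value or lower, or None if it never does.
    """
    years = sorted(series)
    # max() returns the first maximum it meets, so ties go to the earliest year.
    peak_year = max(years, key=lambda year: series[year])
    half_peak = series[peak_year] / 2
    for year in years:
        if year > peak_year and series[year] <= half_peak:
            return peak_year, year - peak_year
    return peak_year, None


# Hypothetical mentions per million words, NOT real Ngram data.
mentions = {1900: 1.0, 1905: 4.0, 1910: 8.0, 1915: 6.0, 1920: 3.5, 1925: 2.0}

if __name__ == "__main__":
    peak, half_life = half_life_after_peak(mentions)
    print(f"Peak in {peak}; fell to half of peak after {half_life} years")
```

This only measures the descent; comparing it across names from different decades, alongside a similar measure for the rise, is one way to test the observation that fame now comes and goes faster.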

Abelson describes this as a generative platform: one which can be used for things that were not thought of when it was built, one we can build upon ourselves and change over time, generating new things for an unseen future. (Paper reference here was Nature, with a covering article from another magazine entitled “Researchers Aim to chart intellectual trends in Arxiv”)

Then the talk took a turn. Professor Abelson took us back 8 years, to when Duke’s “Give everyone an iPod” project (eventually) gave every student a free iPod and encouraged them to record, share and mix up what they were working with.

Enter the Intellectual Property Lawyer. Do the students have permission to share the lecturer-created creative elements of the lectures?

Professor Abelson’s point is that we are becoming more concerned with locking up our content into proprietary Content Management Systems (CMS) and this risks turning the academy into a marketplace for packaged ideas and content, rather than a place of open enquiry and academic freedom. This was the main theme of the talk and we’ve got a lot of ground left to cover here! This talk was for those who loved computational values, rather than property creation.

We visited the early, ham-fisted attempts to grant limited licences for simple activities like recording lectures and the immediately farcical notion that I could take notes of a lecture and be in breach of copyright if I then discussed it with a classmate who didn’t attend. Ngrams shows what happens when you have a system where you can do what you like with the data – what if the person holding that data for you, which you created, starts telling you what to do? Where does this leave our Universities?

Are we producing education or property? Professor Abelson sees this as a battle for the soul of the Universities. We should be generative.

We can take computational actions, actions that we will take to reinforce the sense that we have that people ought to be able to relish the power that they get from our computational thinking and computational ideas. This includes providing open courseware (like MIT’s OCW and Stanford’s AI) and open access to research, especially (but not only) when funded by the public purse.

As a teaser, at this point, Abelson introduced MITx, an online intensive learning system that opens up on MONDAY. No other real details – put it in your calendar to check out on Monday! MIT want their material and their content engines to be open source and generative – that word again! Put it into your own context or framework and do great things!

The companion visions to all of this are:

- Great learning institutions provide universal access to course content. (OpenCourseWare)

- Great research institutions provide universal access to their collective intellectual resources. (DSpace)

What are the two reasons that we should all support these open initiatives? Why should we fill in the moat and open the drawbridge?

- Without initiatives to maintain them, we risk marginalising our academic values and stressing our university communities.

- To keep a seat at the table in decisions about the disposition of knowledge in the information age.

Abelson introduced an interesting report, “Who Owns Academic Work? Battling for Control of Intellectual Property”, which discusses the conflation of property and academic rights.

Basically, scientific literature has become property. We, academia, produce it and then give away our rights to journal publishers, who grant us limited personal rights in exchange and then hold onto it forever. Neither our institution nor the public has any right to this material anymore. We looked at some examples of rights. Sign with certain publishers and, from that point on, you can use only up to 250 words of any of the transferred publications in a new work. The number of publishers is shrinking and the cost of subscription is rising.

Professor Abelson asked how it is that, in this sphere alone, the midwife gets to keep the baby. If we act individually, we all have to publish, as promotions and tenure depend upon publication in prominent journals – but there is hope (and here he referred to the mathematicians’ boycott of the Elsevier publishing group). HR 3699 (the Research Works Act) could have challenged any federal law that mandated open access to federally funded research. Lobbied for by the journal publishers, it lost support, first from Elsevier and then from the two members of Congress who proposed it.

Even those institutions that have instituted an open access policy are finding it hard – some publishers have made specific amendments to the clause that allows pre-print drafts to be displayed locally, adding “except where someone has an institutionally mandated open access policy”.

BUT. HR 3699 has gone away for now. Abelson’s message is that there is hope!

We have allowed a lot of walled gardens to spring up. Places where data is curated and applications made available, but only under the permission of the gardener. Despite our libraries paying up to hundreds of thousands of dollars for access to the on-line journal stores, we are severely limited in what we can do with them. Your library cannot search it, index it, scrape it, or many other things. You can, of course, buy a service that provides some of these possibilities from the publisher. A walled garden is not a generative environment.

In 2008, Jonathan Zittrain listed two important generative technologies, the internet and the PC, because you didn’t need anyone’s permission to link or to run software. Now, writing in Technology Review, Zittrain thinks that the PC is dead because of the number of walled gardens that have sprung up.

In Professor Abelson’s words:

“Network Effects lead to Monopoly Positions lead to Concentration of Channels lead to Decline of Generativity.”

“We have the spark of inspiration about how one should relate to their information environment and the belief that that kind of inspiration, power and generativity should be available to everybody. These beliefs are powerful and have powerful enemies. Draw on your own inspiration and power to make sure that what inspired us is going to be available to our students.”

Fred Brooks: Building Student Projects That Work For Us, For Them and For Their Clients

Posted: March 3, 2012 Filed under: Education | Tags: authenticity, curriculum, design, education, fred brooks, higher education, mythical man month, principles of design, project, reflection, resources, software engineering, teaching, teaching approaches, universal principles of design 3 Comments

In the Thursday keynote, Professor Brooks discussed a couple of project approaches that he thought were useful, based on his extensive experience. Once again, if you’re not in ICT, don’t switch off – I’m sure that there’s something that you can use if you want to put projects into your courses. Long-term group projects are very challenging and, while you find them everywhere, that’s no guarantee that they’re being managed properly. I’ll introduce Professor Brooks’ points and then finish with a brief discussion on the use of projects in the curriculum.

Firstly, Brooks described a project that you might find in a computer architecture course. The key issue here is that modern computer architecture is very complex and, in many ways, fully realistic general-purpose machines are well beyond the time and ability that students have until very late in their undergraduate study. Brooks works with a special-purpose unit instead, but this drives other requirements, as we’ll see. Fred’s guidelines for this kind of project are:

- Have milestones with early delivery.

Application Description

Students must provide a detailed application description, which is the most precise statement that the students can manage. A precise guess is considered better here than a vague fact, because an explicit assumption can be questioned and corrected, whereas handwaving may leave holes in the specification that will need to be fixed later. Students should be aware of how sensitive the application is to each assumption, so that they can invest effort accordingly. The special-purpose nature of the architecture that they’re constructing means that the application description has to be very, very accurate or you risk building the wrong machine.

Programming Manual (End of the first month as a draft)

Another early deliverable is a programming manual, for a piece of software that hasn’t been written yet. Students are encouraged to put something specific down because, even if it’s wrong, it forces them to think, and to think precisely, early on.

- Then the manual is intensely critiqued – students get the chance to re-do it.

- The actual project is then handed in well before the final days of semester.

- Once again the complete project goes through a very intense critique.

- Students get the chance to incorporate the changes from the critique. Students will pay attention to the critique because it is criticism on a live document where they can act to improve their performance.

The next project described is a classic Software Engineering project – team-based software production using strong SE principles. This is a typical project found in CS at the University level but is time-intensive to manage and easy to get wrong. Fred shared his ideas on how it could be done well, based on running it over 22 years since 1966. Here are his thoughts:

- You should have real projects for real clients.

Advertise on the web for software that could be built by 3-5 people during a single semester, which would be useful BUT (and it’s an important BUT) which the client can live without. There must be the possibility that the students can fail, which means that clients have to be ready to get nothing, after having invested meeting time throughout the project.

- Teams should be 3-5, ideally 4.

With 3 people you tend to get two against one. With five, things can get too diffuse in terms of role allocation. Four is, according to Fred, just right.

- There should be lots of project choices, so that teams can nominate a selection of the ones that they want.

- Teams must be allowed to fail.

Not every team will fail, or needs to fail, but students need to know that it’s possible.

- Roles should be separated.

Get clear role separations and stick to them. One person will look after the schedule, using the pitchfork of motivation, obtaining resources and handling management one level up. One will be chief designer of technical content. Other jobs will be split out, but each role should be treated as a full-time job.

- Get the client requirements and get them right.

The client generally doesn’t really know what they want. You need to talk to them and refine their requirements over time. Build a prototype because it allows someone to say “That’s not what I want!” Invest time early to get these requirements right!

- Meet the teams weekly.

Weekly coaching is labour intensive: it is mostly made up of listening and offering suggestions, but it takes time. Meeting each week makes something happen each week. When students have to explain their work, they have to think.

- Early deliverable to clients, with feedback.

Deadlines make things happen.

- Get something (anything) running early.

The joy of getting anything running is a spur to further success. It boosts morale and increases effort. Whatever you build can be simple, but it should have built-in stubs or points where the extension to complexity can easily occur. You don’t want to have to completely re-engineer at every iteration!

- Make the students present publicly.

Software Engineers have to be able to communicate what they are doing, what they have done and what they are planning to do – to their bosses, to their clients, to their staff. It’s a vital skill in the industry.

- Final grade is composed of a Team Grade (relatively easy to assess) AND an Individual Grade (harder)

Don’t multiply one by the other! If effort has been expended, some sort of mark should result. The Team Grade can come from the documentation, the presentation and an assessment of functionality – time consuming but relatively easy. The Individual Grade has to be fair to everyone, and either you or the group may give a false indication of a person’s value. Have an idea of how you think everyone is going and then compare that to the group’s impression – they’ll often be the same. Fred suggested giving everyone 10 points to allocate among everyone ELSE in the group. In his experience, these tallies usually agreed with his impression – except on the rare occasion when he had been fooled by a “mighty fast talker”.
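Brooks’ peer-allocation idea is simple enough to tally by hand, but a short sketch makes the arithmetic explicit. The Python below is a minimal illustration, not Brooks’ own procedure: the function names `peer_allocation_totals` and `final_grade` are hypothetical, and it assumes that each student must hand out exactly 10 points, only to teammates, and that the two grades are combined with an assumed 50/50 weighting. Because every student gives away 10 points, the average number of points received is also 10, so a total well above or below 10 is the thing to compare against your own impression.

```python
def peer_allocation_totals(allocations, points_per_student=10):
    """Tally the peer points each student received.

    allocations maps each giver to a dict of {recipient: points}.
    Assumption: every giver hands out exactly points_per_student points,
    and only to teammates other than themselves.
    """
    members = set(allocations)
    # Keep insertion order from the input so output order is predictable.
    received = {name: 0 for name in allocations}
    for giver, given in allocations.items():
        if giver in given:
            raise ValueError(f"{giver} allocated points to themselves")
        if sum(given.values()) != points_per_student:
            raise ValueError(f"{giver} did not allocate exactly {points_per_student} points")
        for recipient, points in given.items():
            if recipient not in members:
                raise ValueError(f"{recipient} is not in this team")
            received[recipient] += points
    return received


def final_grade(team_grade, individual_grade, team_weight=0.5):
    # Combine additively (a weighted sum), never by multiplying, so a low
    # individual grade cannot wipe out credit for effort the team expended.
    # The 0.5 default weighting is an assumption, not Brooks' figure.
    return team_weight * team_grade + (1 - team_weight) * individual_grade


if __name__ == "__main__":
    # A hypothetical four-person team.
    team = {
        "Ana": {"Ben": 4, "Cai": 3, "Dee": 3},
        "Ben": {"Ana": 4, "Cai": 3, "Dee": 3},
        "Cai": {"Ana": 4, "Ben": 4, "Dee": 2},
        "Dee": {"Ana": 4, "Ben": 3, "Cai": 3},
    }
    print(peer_allocation_totals(team))
    print(final_grade(80, 60))
```

The tally is only a prompt to check against your own view of the team; as Brooks found, when it disagrees with your impression, it may be you who has been fooled.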

This is a pretty demanding list. How do you take on work for clients at the risk of wasting their time for six months? If failure is possible, then failure is inevitable at some stage and it’s always going to hurt to some extent. A project is going to be a deep drilling exercise and, by its nature, cannot be the only thing that students do because they’ll miss out on essential breadth. But the above suggestions will help to make sure that, when the students do go drilling, they have a reasonable chance of striking oil.

SIGCSE, Keynote #1, Fred Brooks. (Yes, THAT Fred Brooks.)

Posted: March 2, 2012 Filed under: Education | Tags: authenticity, curriculum, design, education, higher education, reflection, sigcse, teaching, teaching approaches 5 Comments

Frederick P. Brooks, Jr is a pretty well-known figure in Computer Science. Even if you’ve only vaguely heard of one of his most famous books, “The Mythical Man-Month”, you’ll know that his impact on how we think about projects, and software engineering projects in general, is significant and he’s been having this impact for several decades. He’s spent a lot of time trying to get student Software Engineering projects into a useful and effective teaching form – but don’t turn off because you think this is only about ICT. There’s a lot for everyone in what follows.

His keynote on Thursday morning was on “The Teacher’s Job is to Design Learning Experiences; not Primarily to Impart Information” and he covered a range of topics, including some general principles and a lot of exemplars. He raised the old question: why do we still lecture? He started from a discussion of teaching before printing, followed the development of the printed word and moved into today’s teleprocessed world, where information is widely available.

His main thesis is that it’s up to the teacher to design a learning experience, not just deliver information – and as this is one of my maxims, I’m not going to disagree with him here!

Professor Brooks thinks that we should consider:

- Learning not teaching

- The student not the teacher

- Experience not just the written word

- Developing skills in preference to inserting or providing information

- Designing a learning experience, rather than just delivering a lecture

His follow-up to this, which I wish I’d thought of, is that Computer Science professionals all have to be designers, at least to a degree, so linking this with an educational design pathway is a good fit. CS people should be good at designing good CS education materials!

He argues that CS educational content falls into four basic categories: background information (like number systems), theory (like complexity mathematics), description of practice (how people HAVE done it) and skills for practice (how YOU WILL do it). In CS we develop a number of these skills through critiqued practice – do it, it gets critiqued and then we do it again.

He then spent some time discussing exemplars, including innovative assignments, the flipped classroom, projects and a large number of examples, which I hope to commit to another post.

Looking at this critically, it’s hard to disagree with any of the points presented, except that we stayed at a fairly abstract level and, as the man himself said, he’s a radical over-simplifier. But there was a lot of very useful information that would encourage good behaviour in CS education – it’s more than just picking up a book and it’s also more than just handing out a programming assignment. Often, when people disagree with ‘ivory tower’ approaches, they don’t design the alternative any better. A poorly-designed, industry-focused, project-heavy course is just as bad as a boring death-by-lecture theoretical course.

Bad design is bad design. “No design” is almost guaranteed to be bad design.

I’ll post a follow-up going into more detail about what he said about projects some time soon, because I think it’s pretty interesting.

It was a great pleasure to hear such an influential figure and, as always, it wasn’t surprising to see why he’s had so much impact – he can express himself well and, overall, it’s a good message.

Familiarity: Breeding Contempt or Just Contextually Sensitive?

Posted: March 1, 2012 Filed under: Education, Opinion | Tags: authenticity, education, familiarity breeds contempt, higher education, reflection, teaching, teaching approaches 1 Comment

One of the strangest homilies I know is “Familiarity breeds contempt”. Supposedly, in one reading, the more we know someone, the easier it is to find fault. In another, very English, reading of it, allowing someone to be too familiar with you reduces the barriers between you and allows for contempt. (It’s worth noting that being over-familiar with someone and using their first name or a diminutive ahead of an often unstated social timeframe was a major gaffe in society. Please, call me Nick. 🙂 )

What a strange thought that is – that we must maintain an artificial distance lest we be found to be human. There’s a world of behaviour between maintaining professionalism and being stand-offish – one allows you to maintain integrity and do things like provide an objective mark, the other drives a wedge between you and your students. This is a very hard line to walk when you’re teaching K-12, because the winnowing hasn’t occurred yet. In the Higher Ed sector, as I’ve noted before, everyone who couldn’t concentrate or acted up is probably already gone. I have the polite ones, the ones who passed, the ones who didn’t sit there cutting pieces off people’s hair or being generally anti-social.

I have to walk a careful line on this one when I teach in Singapore, because it is a more formal society. Business cards are presented formally, business relationships have more structure and my students prefer to call me Sir or Dr Nick (Hi, everybody!). Now, I’m happy for them to call me Nick but, here’s the tricky thing, not if that means that they have moved me into the box of people that they don’t respect. That’s a cultural thing and, by being aware of it, I manage the relationship better. Down in Australia, I expect my students to call me Nick, because we don’t have as heavily formalised a society and I feel that I can manage my objectivity and relationships without the strictures of being Dr Falkner. But I have a lot of international students and sometimes it just makes them happier to call me Dr Falkner or Sir.

Ultimately, as part of this juggling act, it’s not my view of what is and what is not formal that matters – it’s how the student needs to address me so that they feel that I am their teacher, and that they are getting the right kind of education. This then allows us both to work together, happily. If someone calling me Nick is going to put fingernails down the blackboard of their soul, then me insisting upon informality is inappropriate.

When I’m in the US, I take the trouble to explain that I have a PhD and am a tenured Assistant Professor in US parlance, a Lecturer Level B in Australian jargon, because it helps people to put me into the right mental box. This is the other trick of familiarity – you have to make sure that your level of familiarity is contextually correct. It bugs me slightly that I have given talks where people’s attitudes towards me and my material change when they find out I’m tenured and a Doctor, but it’s always my job to work out how to communicate with my audience. If I presume that every audience is the same then I risk being over- or under-familiar – and, because I haven’t done my research as to how to deliver my message to that audience, that’s when I risk breeding contempt.

James Frey’s Legacy: Authenticity needs to be authentic!

Posted: February 18, 2012 Filed under: Education, Opinion | Tags: authenticity, education, higher education, reflection, teaching approaches Leave a comment

James Frey is an American author who has been in the news on and off over the last few years. He published a book called A Million Little Pieces, which purported to be memoirs of his struggle with addiction, association with criminals and time in jail. On the strength of this account of his fall and rise, and his defeat of his demons, he sold a lot of books, went on Oprah and probably got to dive, Scrooge McDuck-like, into a giant pool filled with money.

There’s only one problem. Despite the book being billed as autobiographical, his claims that, minor details aside, it was all true turned out to be false. It was sold as a memoir, an account of the life of its subject, but it was not an account of his life; it was, in his own words, “about the person I created in my mind to help me cope, and not the person who went through the experience.”

Many people claimed to find inspiration in James Frey’s work, including Oprah, and the backlash against the book was severe. Once it was established that the path to redemption outlined in the book didn’t start from a sufficiently dark place, or wasn’t really based on fact, questions arose over what could be learned or derived from a semi-fictional work, rather than a true memoir.

The question here is one of authenticity. If you, as a recovering addict, tell me that the way to beat addiction is to dance the Lambada (the forbidden dance) 7 times a day because that’s the only thing that stops the cravings and I act on this, then I am depending upon your representation of how you beat your demons as being accurate. This assumes that you were actually a drug addict in the first place. That you did dance the Lambada. That it did, to the best of your knowledge, deal with your problems. If you were never an addict in the first place, your undisputed credibility is now disputed and you have no authenticity.

James Frey is a writer. Not one that I enjoy, being frank, but there is no doubt that he can write and produce a book. If he wrote a book entitled “A Million Giant Suckers: How I Turned a Nation’s Obsession with Suffering and Redemption Into Cold, Hard Cash”, I would probably buy it because his credibility is beyond doubt in this regard! (I really want that swimming pool full of paper money, too. Note: never dive into gold, it’s not that soft.)

So how does this apply to teaching? There are two important aspects of authenticity in teaching, for me. Firstly, when we talk about something from ‘the real world’ outside of academia, we should have either directly experienced it or have trustworthy accounts of it being in use. (And, in the case of reportage, we should clearly state that it is reportage.) Secondly, when we present students with ‘real-world challenges’, these should genuinely be experiences that will prepare them for the world outside!

To me, this means that when I quote statistics in support of arguments – they are real statistics, with credible sources, in the correct context. This means that I try and get industry involved where possible, if I don’t have the experience myself, and talk to people to get informed. I used to work in industry but that was over 10 years ago and industry has changed a lot in that time. Yes, I’m still a sys admin and network admin at heart, but I’ve never had to implement BGP or MPLS or run a Lion server cluster, and that means that I need to keep reading and talking to other people to maintain my credibility.

For me, though, I have to be careful what I claim. I’m the first to admit that, while I have a good skills base, I’m now rusty at systems because I’ve spent all my time polishing my research, teaching and admin. I’m comfortable talking here, because I feel I have sufficient credibility to discuss these matters, but you wouldn’t find me holding forth on the administration of Linux boxes any time soon.

I don’t want my students to learn a good lesson if I’m presenting bad information to them – that, to me, has always been the cold comfort of scoundrels, that someone learnt a valuable life lesson from their dastardly deeds. I don’t think James Frey ever set out to go as far as he did, I doubt he’s that calculating, but taking an unsuccessful novel and turning it into a successful memoir may make good business sense… but it’s a terrible, terrible lesson on the value of authenticity.