E-Library: Electronic or Ephemeral?

Posted: May 13, 2012 Filed under: Education, Opinion | Tags: advocacy, blogging, design, eBook, education, educational problem, ephemeral library, ethics, Generation Why, higher education, resources, teaching, teaching approaches

My technical and professional library is a strange beast. Part Computer Science, part graphic design, part fiction, it’s made up of new books, books I had in Uni, books that I have inherited from other academics and books that I salvaged from libraries before they disappeared. But, of course, there is a new and growing section of my library, which you can’t see on the shelves – my E-Library. I realised this week that I have now started an E-Library collection that grows on a monthly basis as I add more content. I shall use the term eBooks for the rest of this post, but I’m not referring to a specific format – it’s just the digitised and electronically transferable image of a book that I’m concerned with.

Why am I buying eBooks? Because they arrive within minutes. I talk about this from a student perspective in tomorrow’s main post but, for me, I buy physical+electronic where I can because I will end up with a copy that I can use right now and a copy that I can add to my physical library.

Ephemeral Library X, © Krystyna Ziach

http://ny.artslant.com/global/artists/show/213982-krystyna-ziach?tab=ARTWORKS&user_id=209460

When I am gone, or when I retire, my professional library will be stripped for those things that will be kept, by me or my wife, and the rest will go out into the corridor, onto a table, for the rest of my colleagues and students to pick through. The remainder will probably be offered to a school, as the main library is not really interested in my 1950s Engineering texts. But what of texts that only exist in the Ephemeral Library? There are so many questions about this form of my library:

- Will I even be able to transfer all of my books? I buy mostly from suppliers who allow me to legitimately transfer the electronic copies but there are some of my books that are locked to my identity or my machine.

- How will I advertise them? Put up a webpage with a download link? That immediately breaches most publishers’ restrictions. Asking people to register their interest and then provide it to them takes effort and, most likely, means that it will be a low priority.

- Will the formats that I am buying today be a working format in 30 years’ time? We have a tendency to think in the now, forgetting that 78s are gone, 8-track is gone, cassette is mostly gone and vinyl is more fringe oriented than mainstream these days. Beta is buried deep in the ground with VHS buried just above it. The physical formats are being obliterated in the face of the relentless march of digitised containers but, remember, standards change and, worse, standards evolve within the standards themselves. At some stage BluRay X will break BluRay 1.2, most likely. In the same way, PDF 22 may lose the ability to handle earlier versions. Backwards compatibility is a grand goal but, time and again, we have eventually abandoned it on the argument that it is no longer necessary.

- Will I maintain the burden of updating my media to make sure that the format obsolescence described in the previous point doesn’t happen? How much spare time do you have?

- Finally, what happens when I die? I don’t think I’m allowed to transfer my iTunes account details to my wife – so over 260 songs will, at some stage, disappear from our shared iPods. The same for my library. Suddenly, books disappear. Possibly books that have not been published for years and will never be published again. Gutenberg dies and all of his Bibles spontaneously combust? Not the most robust model.

Obviously, part of the whole management process that will have to be recognised is the difference between renting, leasing and owning a digital property. If we are actually going to own things, and most people think that they own things but would be surprised if they read the fine print, we have to come up with a form of identity management that allows transfer of property to occur across legally recognisable lines. One can only hope that we’ve sorted out the simple things like child rearing, marriage, hospital visitation and social security access before we attempt to push through a global, trans-corporate, persistent rights management system that allows us to keep our collections together, even after we die.

Spot the Computer Science Student and Win!

Posted: May 8, 2012 Filed under: Education | Tags: advocacy, computer science, computer science student, education, higher education, learning, measurement, resources, stereotype, student, teaching approaches

CS Students get a pretty bad rap on that whole “stereotype” thing. Given that I’m an evidence-based researcher, let’s do some tests to find out if we can, in fact, spot the CS student. Here’s a quick game for you. Hidden in this image are 3 Computer Science students.

Which ones are they? (You can click on the image to enlarge it.)

I’ll make it easy for you to reference them – we’ll number the rows from the top (A) to the bottom (H) and the images from left to right as 1 to … well, whatever, because the rows aren’t the same length. So the picture with the cactus is A2, ok? Got it? Go!

Who did you pick? Got the details? Now scroll down.

Of course, if you know me at all, you probably know the answer to this already.

They’re ALL Computer Science students – well, they’re found in an image search for “I am a Computer Science student” and, while this is not guaranteed, it means that most of these students are in CS. Now, knowing that, go back and look at the ones you thought were music majors, physicists, business students, economics people. Yes, one or two of them probably look more likely than most but – wait for it – they don’t all look the same. Yeah, you know that, and I know that, but we just have to keep plugging away to make sure that everyone ELSE gets that. Heck, the pictures above are showing fewer pairs of glasses per person than you would expect from the average and there’s not even one light sabre! WON’T SOMEONE THINK OF THE STEREOTYPES???

This is only page 2 of the Image Search and I picked it because I liked the idea of some inanimate objects being labelled as CS students as well. Oh, that’s right, I said that you’d win something. You know never to trust me with statements unless I’m explicit in my use of terminology now. Sounds like a win to me!

(Of course, the guy with red hair is giving the strong impression that he now knows that you were looking at him on the Internet. I don’t know if you wanted that but that’s just how it is.)

Heroes

Posted: May 7, 2012 Filed under: Education | Tags: advocacy, alan turing, Arland D Williams, Arland Williams, bruno schulz, education, heroes, higher education, inspiring students, learning, reflection, teaching, teaching approaches, turing, work/life balance

I know that I learn best when I’m inspired and engaged, so I regularly look for things around me that I can bring into the classroom that go beyond “program this” or “design that”. Our students are surrounded by the real world and, unfortunately, it’s easy to understand why they might be influenced by things that are less than inspirational. I don’t want to be negative, but there are so many examples of bad behaviour on the national and international stage that, sometimes, you really wonder why you bother.

So, today, I’m going to talk about four people. Regrettably, some of you won’t be able to talk about three of them because of personal convictions, political considerations or the ages of your class, but I hope that most of you will either have learned something new or remembered something important by the time I’m finished. Are these people actually heroes, given the title of my post? Well, one is a professional inspiration to me, one is an artistic inspiration to me (and reminder of the importance of what I’m doing), one is generally inspiring in the area of democracy and dedication, and the other… well, the other, I can barely look at his picture without wondering if I could ever approach the level of selflessness and heroism that he demonstrated. But I’ll talk about him last.

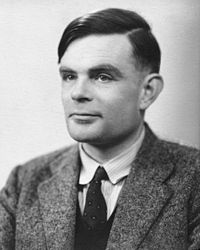

This is Alan Turing, the most likely candidate for the term “Father of Computer Science”. Witty, well-educated, highly intelligent and thoughtful, he was a leader in cryptanalysis at Bletchley Park, providing statistical and mathematical genius to breaking codes including the design of the bombe, the machine that attacked Enigma. Importantly, for me as a Computer Scientist, he developed Turing Machines, effectively providing the foundations of studies in the theory of computation. He provided the first detailed design of a computer that used a stored program, very different from the electrical calculators of the day. He defined some of the key terms that we still use in Artificial Intelligence. (There’s so much more but it wouldn’t mean much to you outside the discipline, but he’s well worth looking up.)

Of course, some of you can’t mention Turing to your students, because he was a known homosexual, with a conviction for gross indecency in 1952 after admitting to a consensual homosexual relationship. He had a choice between imprisonment or chemical castration (he chose the latter) and his security clearance was revoked and he was barred from continuing with his security work. He was found dead in 1954, having (most likely) committed suicide.

There is no doubt that the field I am in is the better (or even exists) for Turing having lived and worked in this field. We are poorer for his early loss and, personally, I’m ashamed that persecution based on his sexual orientation may have led to the premature self-administered death of a genius.

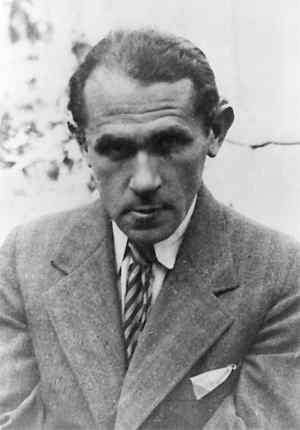

Meet Bruno Schulz, author, artist and critic. Schulz wrote some incredible works, contributed murals and was, despite his somewhat hermitic nature, an influential contributor to the arts. Schulz was born and lived, for most of his life, in Drohobych, Galicia. His contributions, although limited by his early death, include the highly influential works “Sanatorium Under the Sign of the Hourglass” and “The Street of Crocodiles”. In 1938, he was awarded the Polish Academy’s Golden Laurel award for his works and translations.

I am currently writing a series of stories that were inspired, in part, by the “Sanatorium” with its dreamlike qualities, stories interweaving with unreliable narration and innate and unexpected metamorphoses. Schulz is a fascinating counterpoint to Borges for me, woven with the immersion in Jewish culture I would expect from Singer, but with a different tone that comes through, even in the English translations I have to read.

We have no more works from Schulz, not even the fragments of the book he was working on at the time of his death, “The Messiah”. Why was Schulz killed? After the German invasion of the Soviet Union in the Second World War, Drohobych was occupied and, for a time, Schulz (who was Jewish) was protected by a Gestapo officer who admired his artistic work. Unfortunately, another Gestapo officer, a rival of the first, decided to kill this “personal Jew” and shot Schulz on the way home. You will excuse me for being confusing by referring to neither officer by name.

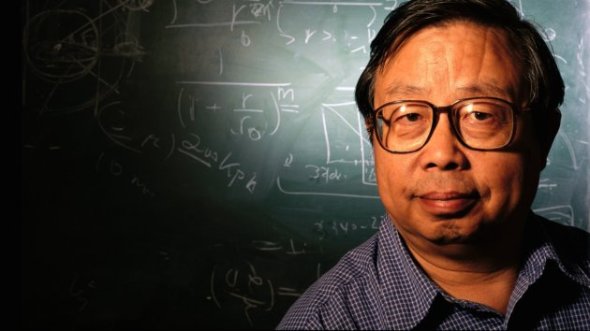

This person you may have heard of. Fang Lizhi died very recently, a Chinese Astrophysicist who lived in exile for over 22 years, after a life spent trying to pursue science despite being politically persona non grata and, for many years, not being able to publish under his own name. He survived hard labour during his re-education by the worker class during the cultural revolution but continued to fight against what he saw as severe obstacles to the pursuit of his scientific aims, including proscriptive ideological opposition to some of the key ideas required to be a successful astrophysicist or cosmologist.

In 1989, he was highly instrumental in the movement that occupied Tiananmen Square, despite not being directly involved in the protest and, once those protests had been dealt with, he decided that his safety was no longer ensured and, with his wife, he sought refuge at the US Embassy. He remained in the embassy for over a year, while diplomatic negotiations continued. Eventually he was allowed to leave and had an international career in his discipline, as well as speaking regularly on human rights and social responsibility. Of all the people on this list, Professor Fang died of old age, at 76, having managed to escape from the situation in which he found himself.

We talk a lot about academic freedom, or the entitlement to academic freedom, but we often forget that there is a harsh and heavy price imposed for it, depending upon the laws and the governments in which we find ourselves. That is a hard and heavy lesson.

Some of you will not be able to talk about Alan Turing, because he was gay. Some of you may have difficulty discussing Bruno Schulz, because of the involvement of Nazis or because he was a Jew. Some of you may have stopped reading the moment you saw the picture of Fang Lizhi, because you didn’t want to get into trouble. Please keep reading.

So let me give you the story of the first man on this page. Let me tell you about a man who was a bank investigator. Recently divorced, with a youngest child of 17. I want to tell you about him because his story is the simplest and the most complex. He has no giant academic backstory, no grand contribution to literature, no oppression to fight. He just chose to be good.

In 1982, Arland D. Williams, Jr, was a passenger on board a plane from Washington DC to Florida, Air Florida Flight 90, that took off in freezing weather, iced up, failed to gain altitude and slammed into the 14th Street Bridge across the Potomac. The crash killed four motorists and the plane slid forward, down into the Potomac, with the tail breaking off as it did so. There were 79 people on board. Only 6 made it up and onto the tail, which was still floating.

When the rescue helicopter got there, they started recovering people from the tail section, dropping rescue ropes. Williams caught the rescue ropes multiple times and, instead of using them for himself, he handed them to the other passengers.

Life vests were dropped. Rescue balls. He handed them on.

The helicopter, overloaded and struggling with the conditions, got every other survivor back to shore, sometimes having to pick up the weak survivors multiple times. But Williams made sure that everyone else got helped before he did.

Sadly, tragically, by the time the helicopter came back for him, the tail section had shifted and sunk, taking him with it. As it happened, Williams had made so little fuss about himself during his actions that his identity had to be determined after the fact.

It would be easy, and cynical, to describe human beings in terms of animals, given some of the awful things we do. Taking away a man’s livelihood (maybe even killing him) because of who he’s in love with? Killing someone because you have an argument with someone else? Persecuting someone for trying to pursue science or democracy?

Yet their stories survive, and we learn. Slowly, sometimes, but we learn.

It would be easy to assume that everyone, when desperate enough, would scrabble like rats to survive. (Except, of course, that not even rats do that. We just tell ourselves they do because we can’t sometimes recognise that this is just a paltry excuse for human evil.)

Here is your counterexample – Arland Williams. Here is your existential proof that refutes the “WE ARE ALL LIKE THIS” Myth. There are so many more. Go back to the top of the page and look at that ordinary, middle-aged man. Look at someone who looked down at the freezing water around him and decided to do something great, something amazing, something heroic.

Grand Challenges – A New Course and a New Program

Posted: May 4, 2012 Filed under: Education | Tags: advocacy, challenge, curriculum, design, education, educational problem, equality, grand challenges, higher education, learning, reflection, teaching, universal principles of design

Oh, the poor students that I spoke to today. We have a new degree program starting, the Bachelor of Computer Science (Advanced), and it’s been given to me to coordinate and set up the first course: Grand Challenges in Computer Science, a first-year offering. This program (and all of its unique components) is aimed at students who have already demonstrated that they have got their academics sorted – a current GPA of 6 or higher (out of 7, that’s A equivalent or Distinctions for those who speak Australian), or an ATAR (Australian Tertiary Admission Rank) of 95+ out of 100. We identified some students who met the criteria and might want to be in the degree, and also sent out a general advertisement as some people were close and might make the criteria with a nudge.

These students know how to do their work and pass their courses. Because of this, we can assume some things and then build to a more advanced level.

Now, Nick, you might be saying, we all know that you’re (not so secretly) all about equality and accessibility. Why are you running this course that seems so… stratified?

Ah, well. Remember when I said you should probably feel sorry for them? I talked to these students about the current NSF Grand Challenges in CS, as I’ve already discussed, and pointed out that, given that the students in question had already displayed a degree of academic mastery, they could go further. In fact, they should be looking to go further. I told them that the course would be hard and that I would expect them to go further, challenge themselves and, as a reward, they’d do amazing things that they could add to their portfolios and their experience bucket.

I showed them that Cholera map and told them how smart data use saved lives. I showed them We Feel Fine and, after a slightly dud demo where everyone I clicked on had drug issues, I got them thinking about the sheer volume of data that is out there, waiting to be analysed, waiting to tell us important stories that will change the world. I pretty much asked them what they wanted to be, given that they’d already shown us what they were capable of. Did they want to go further?

There are so many things that we need, so many problems to solve, so much work to do. If I can get some good students interested in these problems early and provide a coursework system to help them to develop their solutions, then I can help them to make a difference. Do they have to? No, course entry is optional. But it’s so tempting. Small classes with a project-based assessment focus based on data visualisation: analysis, summarisation and visualisation in both static and dynamic areas. Introduction to relevant philosophy, cognitive fallacies, useful front-line analytics, and display languages like R and Processing (and maybe Julia). A chance to present to their colleagues, work with research groups, do student outreach – a chance to be creative and productive.

I, of course, will take as much of the course as I can, having worked on it with these students, and feed parts of it into outreach to schools and send other parts into different levels of our other degrees. Next year, I’ll write a brand new grand challenges course and do it all again. So this course is part of forming a new community core, a group of creative and accomplished leaders, to an extent, but it is also about making this infectious knowledge, a striving point for someone who now knows that a good mark will get them into a fascinating program. But I want all of it to be useful elsewhere, because if it’s good here, then (with enough scaffolding) it will be good elsewhere. Yes, I may have to slow it down elsewhere but that means that the work done here can help many courses in many ways.

I hope to get a good core of students and I’m really looking forward to seeing what they do. Are they up for the challenge? I guess we’ll find out at the end of second semester.

But, so you know, I think that they might be. Am I up for it?

I certainly hope so! 🙂

Saving Lives With Pictures: Seeing Your Data and Proving Your Case

Posted: May 2, 2012 Filed under: Education | Tags: advocacy, analytics, authenticity, cholera outbreak, data visualisation, design, education, higher education, learning, teaching, teaching approaches, voronoi

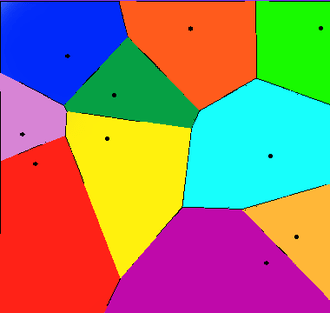

From Wikipedia, original map by John Snow showing the clusters of cholera cases in the London epidemic of 1854

This diagram is fascinating for two reasons: firstly, because we’re human, we wonder about the cluster of black dots and, secondly, because this diagram saved lives. I’m going to talk about the 1854 Broad Street Cholera outbreak in today’s post, but mainly in terms of how the way that you represent your data makes a big difference. There will be references to human waste in this post and it may not be for the squeamish. It’s a really important story, however, so please carry on! I have drawn heavily on the Wikipedia page, as it’s a very good resource in this case, but I hope I have added some good thoughts as well.

19th Century London had a terrible problem with increasing population and an overtaxed sewerage system. Underfloor cesspools were overfilling and the excess was being taken and dumped into the River Thames. Only one problem. Some water companies were taking their supply from the Thames. For those who don’t know, this is a textbook way to distribute cholera – contaminating drinking water with infected human waste. (As it happens, a lack of cesspool mapping meant that people often dug wells near foul ground. If you ever get a time machine, cover your nose and mouth and try not to breathe if you go back before 1900.)

But here’s another problem – the idea that germs carried cholera was not the dominant theory at the time. People thought that it was foul air and bad smells (the miasma theory) that carried the bugs. Of course, from this century we can look back and think “Hmm, human waste everywhere, bugs everywhere, bad smells everywhere… ohhh… I see what you did there.” but this is from the benefit of early epidemiological studies such as those of John Snow, a London physician of the 19th Century.

John Snow recorded the locations of the households where cholera had broken out, on the map above. He did this by walking around and talking to people, with the help of a local assistant curate, the Reverend Whitehead, and, importantly, working out what they had in common with each other. This turned out to be a water pump on Broad Street, at the centre of this map. If people got their water from Broad Street then they were much more likely to get sick. (Funnily enough, monks who lived in a monastery adjacent to the pump didn’t get sick. Because they only drank beer. See? It’s good for you!) John Snow was a skeptic of the miasma theory but didn’t have much else to go on. So he went looking for a commonality, in the hope of finding a reason, or a vector. If foul air wasn’t the vector – then what was spreading the disease?

Snow divided the map up into separate compartments, each of which contained one pump and all of the people for whom this would be the pump that they used, because it was the closest. This is what we would now call a Voronoi diagram, and is widely used to show things like the neighbourhoods that are serviced by certain shops, or the impacts of roads on access to shops (using the Manhattan Distance).

A Voronoi diagram from Wikipedia showing 10 shops, in a flat city. The cells show the areas that contain all the customers who are closest to the shop in that cell.

What was interesting about the Broad Street cell was that its boundary contained most of the cholera cases. The Broad Street pump was the closest pump to most people who had contracted cholera and, for those who had another pump slightly closer, it was reported to have better tasting water (???) which meant that it was used in preference. (Seriously, the mind boggles on a flavour preference for a pump that was contaminated both by river water and an old cesspit some three feet away.)
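To make the idea of a cell concrete, here is a minimal Python sketch of the same kind of analysis. Everything in it is an assumption for illustration: the pump names other than Broad Street, the grid coordinates and the case locations are made up, and this is not Snow’s data or his actual method. It assigns each case to its nearest pump using the Manhattan distance and counts the cases per cell.

```python
from collections import Counter

# Hypothetical pump locations on an arbitrary street grid (not Snow's real data).
PUMPS = {
    "Broad Street": (5, 5),
    "Pump B": (1, 8),
    "Pump C": (9, 2),
}

# Hypothetical household locations where cholera cases were recorded.
CASES = [(5, 6), (4, 5), (6, 4), (5, 3), (6, 6), (2, 8), (8, 2), (4, 6)]


def manhattan(a, b):
    """Street-grid (Manhattan) distance between two (x, y) points."""
    return abs(a[0] - b[0]) + abs(a[1] - b[1])


def nearest_pump(point, pumps=PUMPS):
    """Return the name of the pump whose cell contains the given point."""
    return min(pumps, key=lambda name: manhattan(point, pumps[name]))


def cases_per_cell(cases=CASES, pumps=PUMPS):
    """Count how many cases fall inside each pump's cell."""
    return Counter(nearest_pump(case, pumps) for case in cases)


if __name__ == "__main__":
    for pump, count in cases_per_cell().most_common():
        print(f"{pump}: {count} cases")
```

With these invented numbers, six of the eight cases land in the Broad Street cell, which is the kind of lopsided count that makes one pump stand out. Swapping `manhattan` for a straight-line distance would give the more familiar Voronoi cells with straight edges.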

Snow went to the authorities with sound statistics based on his plots, his interviews and his own analysis of the patterns. His microscopic analysis had turned up no conclusive evidence, but his patterns convinced the authorities and the handle was taken off the pump the next day. (As Snow himself later said, not many more lives may have been saved by this particular action but it gave credence to the germ theory that went on to displace the miasma theory.)

For those who don’t know, the stink of the Thames was so awful during Summer, and so feared, that people fled to the country where possible. Of course, this option only applied to those with country houses, which left a lot of poor Londoners sweltering in the stink and drinking foul water. The germ theory gave a sound public health reason to stop dumping raw sewage in the Thames because people could now get past the stench and down to the real cause of the problem – the sewage that was causing the stench.

So John Snow had encountered a problem. The current theory didn’t seem to hold up so he went back and analysed the data available. He constructed a survey, arranged the results, visualised them, analysed them statistically and summarised them to provide a convincing argument. Not only is this the start of epidemiology, it is the start of data science. We collect, analyse, summarise and visualise, and this allows us to convince people of our argument without forcing them to read 20 pages of numbers.

This also illustrates the difference between correlation and causation – bad smells were always found with sewage but, because the bad smell was more obvious, it was seen as causative of the diseases that followed the consumption of contaminated food and water. This wasn’t a “people got sick because they got this wrong” situation, this was “households died, with children dying at a rate of 160 per 1000 born, with a lifespan of 40 years for those who lived”. Within 40 years, the average lifespan had gone up 10 years and, while infant mortality didn’t really come down until the early 20th century, for a range of reasons, identifying the correct method of disease transmission has saved millions and millions of lives.

So the next time your students ask “What’s the use of maths/statistics/analysis?” you could do worse than talk to them briefly about a time when people thought that bad smells caused disease, people died because of this idea, and a physician and scientist named John Snow went out, asked some good questions, did some good thinking, saved lives and changed the world.

What’s a Prof?

Posted: May 1, 2012 Filed under: Education | Tags: advocacy, education, higher education, identity, reflection

Have a look at this picture – it’s a grab of one of the Google Image search pages for Professors:

If you look at the cartoons on the page, page 3 of the search, you’ll see lab coats and blackboards. While there are a lot of (obviously) portrait shots, searching across the images for yourself will reveal a lot of ‘action shots’ – talking, teaching and, in some cases, just plain thinking. However, what really sticks out on the first images you find, which I haven’t shown here, is the number of Professor Frink (Simpsons) and Professor Farnsworth (Futurama) images – characters who are ubernerds, with strange speaking patterns, a cavalier disregard for the human condition and a fundamental disconnect from the people around them.

Looking at Google Image Search, you’ll see pictures of young professors and old professors. Women and men. The range of races. Nary a white coat in sight unless they are actively involved in research that requires a white coat and are undertaking said research at the point of photography! (I should note that I subscribe to John Birmingham’s fundamental model of suitability of ethnic dress: one should only wear a Greek fisherman’s cap if one is Greek, and a fisherman. I extend it to scientific or trade garb, including military or paramilitary uniform, in that one should then only wear the dress while engaged in the activity. I wore a lab coat when I was studying wine making and in the lab. I think it’s become cleaning cloths now.)

This is all rather light-hearted, except for the slight problem that a number of people’s only interaction with the notion of a professor will be from widely available media sources – and, even though Futurama in particular is heavily ironic in its use, ironic use has a subtle aspect that can easily be lost in communication. It’s already obvious that the scientific community has an uphill battle sometimes and add to this an assumption that we are all bizarre anti-social, uselessly pontificating grey beards who have no understanding of real people and we start any discussion on the back foot.

I like the new image of science that is coming through the media – scientists and professors can be active, have relationships, do cool things, basically being just like everyone else except that they have a title of some sort that reflects what they are good at doing. We do, of course, have a sizeable chunk of the community who did come in during a time when a very different professorial model was encouraged and probably feel at least slightly under assault from the changes in role, respect and expectation that are now spreading across our Universities. But we’re still all just people, whether we look like professors or not.

We don’t have to define what a professor is but it’s always worth reminding people that we are people first, always, before you start trying to photoshop us into white coats, sticking-up white hair and Coke-bottle glasses.

Oh, Perry. (Our Representation of Intellectual Development, Holds On, Holds on.)

Posted: April 30, 2012 Filed under: Education | Tags: advocacy, authenticity, dualism, education, higher education, learning, measurement, multiplicity, perry, reflection, research, resources, teaching, teaching approaches

I’ve spent the weekend working on papers, strategy documents, promotion stuff and trying to deal with the knowledge that we’ve had some major success in one of our research contracts – which means we have to employ something like four staff in the next few months to do all of the work. Interesting times.

One of the things I love about working on papers is that I really get a chance to read other papers and books and digest what people are trying to say. It would be fantastic if I could do this all the time but I’m usually too busy to tear things apart unless I’m on sabbatical or reading into a new area for a research focus or paper. We do a lot of reading – it’s nice to have a focus for it that temporarily trumps other more mundane matters like converting PowerPoint slides.

It’s one thing to say “Students want you to give them answers”, it’s something else to say “Students want an authority figure to identify knowledge for them and tell them which parts are right or wrong because they’re dualists – they tend to think in these terms unless we extend them or provide a pathway for intellectual development (see Perry 70).” One of these statements identifies the problem, the other identifies the reason behind it and gives you a pathway. Let’s go into Perry’s classification because, for me, one of the big benefits of knowing about this is that it stops you thinking that people are stupid because they want a right/wrong answer – that’s just the way that they think and it is potentially possible to change this mechanism or help people to change it for themselves. I’m staying at the very high level here – Perry has 9 stages and I’m giving you the broad categories. If it interests you, please look it up!

We start with dualism – the idea that there are right/wrong answers, known to an authority. In basic duality, the idea is that all problems can be solved and hence the student’s task is to find the right authority and learn the right answer. In full dualism, there may be right solutions but teachers may be in contention over this – so a student has to learn the right solution and tune out the others.

If this sounds familiar, in political discourse and a lot of questionable scientific debate, that’s because it is. A large amount of scientific confusion is being caused by people who are functioning as dualists. That’s why ‘it depends’ or ‘with qualification’ doesn’t work on these people – such an answer offers them no single right answer and no fixed authority. Most of the time, you can be dismissed as having an incorrect view, hence tuned out.

As people progress intellectually, under direction or through exposure (or both), they can move to multiplicity. We accept that there can be conflicting answers, and that there may be no true authority, hence our interpretation starts to become important. At this stage, we begin to accept that there may be problems for which no solutions exist – we move into a more active role as knowledge seekers rather than knowledge receivers.

Then, we move into relativism, where we have to support our solutions with reasons that may be contextually dependent. Now we accept that viewpoint and context can change which solution is better. By the end of this category, students should be able to understand the importance of making choices and also sticking by a choice that they’ve made, despite opposition.

This leads us into the final stage: commitment, where students become responsible for the implications of their decisions and, ultimately, realise that every decision that they make, every choice that they are involved in, has effects that will continue over time, changing and developing.

I don’t want to harp on this too much but this indicates one of the clearest divides between people: those who repeat the words of an authority, while accepting no responsibility or ownership, hence can change allegiance instantly; and those who have thought about everything and have committed to a stand, knowing the impact of it. If you don’t understand that you are functioning at very different levels, you may think that the other person is (a) talking down to you or (b) arguing with you under the same expectation of personal responsibility.

Interesting way to think about some of the intractable arguments we’re having at the moment, isn’t it?

Identity and Community – Addressing the ICT Education Community

Posted: April 27, 2012 Filed under: Education | Tags: advocacy, ALTA, education, feedback, higher education, reflection, teaching, teaching approaches Leave a comment

Had a great meeting at Swinburne University (Melbourne, Victoria, Australia) today as part of my ALTA Fellowship role. I brought the talk and early outcomes from the ALTA Forum in Queensland into (sunny-ish?) Melbourne and shared them with a new group of participants.

I haven’t had time to write my notes up yet but the overall sentiment was pretty close to what was expressed at the ALTA Forum initially:

- We don’t have an “ICT is…” identity that we can point to. Dentists do teeth. Doctors heal the sick. Lawyers do law. ICT does… what?

- We need a common dissemination point for IT, CS, IS, ICT, CS-EE… etc. rather than the piecemeal framework we currently have that is strongly aligned with subdivision of the discipline.

- We need professionalism in learning and teaching, where people dedicate time to improve their L&T – no more stagnant courses!

- We need to have enough time to be professional! L&T must be seen as valuable and be allocated enough time to be undertaken properly.

- It would be great to have a Field of Research Code for Education within the Discipline of ICT – as distinct from general education coding – to make sure that CS Ed/ICT Ed is seen as educational research in the discipline, rather than a non-specific investigation.

- We need to identify and foster a community of practice to get out of the silos. Let’s all agree that we want to do this properly and ignore school and University boundaries.

- We need to stop talking about the lack of national community and start addressing the lack of a national community.

So a good validation for the early work at the Forum and I’m really looking forward to my meeting at RMIT tomorrow. Thanks, Graham and Catherine, for being so keen to host the first official ALTA engagement and dissemination event!

The Unhappiest Bartender in Australia

Posted: April 24, 2012 Filed under: Education | Tags: advocacy, authenticity, education, higher education, reflection, teaching, teaching approaches Leave a comment

I’m not talking about students for this one, I’m talking about the scientific community. On reading yet more articles about the growing rate of retraction, on top of the inability to replicate key studies, it appears that we are at risk of losing our way. I need to be able to train my students for the world that they will work in – so I’m going to briefly discuss my beliefs and interview myself to talk about my fears of what happens when scientific integrity is trumped by mercenary and short-sighted values.

The executive summary is “Do science properly or do something else.” If you’re already practising science at a high level, with integrity, please leave work early and enjoy a beverage of your choice, at your own expense. I salute you! Come back and read this once you are refreshed. (This is a bit more opinionated than usual, so if you want to focus on my Learning and Teaching posts, you might want to read some of my previous posts or come back tomorrow. I welcome you to stay, however.)

I understand, to an extent, why people are taking questionable approaches to their work in order to achieve publication, in the same way that I understand why students cheat sometimes. But comprehending the rationalisation does not mean that I condone the actions – far from it. In another blog I commented on the fact that some people change their behaviour when they drink. If they are aware that this is going to happen, then the excuse “I was drunk” is not an excuse. Getting drunk was an enabling step. If your choices, as a scientist, are leading you down dark paths then you have to look at the end of that path to see where you’re going. “That was where my path naturally led” isn’t valid when you know that you’re on the wrong road.

I’m pretty worried by some of the behaviour that people are practising to get ahead. But don’t think that I’m in a strong enough position that I’m immune to the lure of the dark path – I want to keep my job, make good progress, get promoted, get grants, have an impact. Like everyone else, I want to change the world. The question is “What are you prepared to compromise in order to get to that stage?”

Do I feel pressure to publish? Yes! Am I willing to fabricate data to do so? No. Am I willing to cite ‘suggested papers’ that all appear to be from the editor of the special edition or a select group of friends? No. Am I willing to run an experiment 100 times and write up the single time it worked as if this was a general case? No!

But, wait, if you don’t meet your publication targets, doesn’t that have an impact on your career? Yes, possibly. I’m expected to publish at a very high level on a regular basis.

And if you don’t? Well, I can demonstrate my worth in other ways but research turns into publications, publications support grants, grants bring in people, people do research. Not publishing will have a serious impact on my ability to produce research.

So you’d bend a little because it’s in the greater interest for your work to be published because your research is valuable. Nice try, but no. I’d prefer to leave my job than compromise my principles in this regard.

Well, it’s really nice that you’ve got that level of agency but, hey, your wife has a stable income and the wolf isn’t at your door. Aren’t you just making an argument from privilege? Hmmm.

Well, that’s a good question. My response would normally be that there are many, many jobs that use some of what I have that don’t require me to have a strong set of scientific and personal ethics. I could teach computing courses and never have to worry about research ethics. I could write code as a small cog in a large company and not have to worry as much about experimental replication. I could tend bar, I guess, or maybe work in a shop, if jobs like that still exist in 10 years’ time and they’ll hire a 50-year-old. But, again, this assumes a level of skill transferability and agency that does presume a basis of privilege if I’m going to walk away from science and do something else.

But this assumes that you went in to be a scientist thinking that this kind of bad behaviour is just what scientists did, that ethics were optional, that publication by any means was acceptable – that reality was mutable when deadlines were tight. Let’s break this thinking now because I don’t want any students to come to my program thinking like that.

I believe that if you want to be a scientist, you have to accept that this comes with a package of ethical behaviours that are not optional.

Science has impact! Building on bad science gives you more bad science. This bad behaviour in science could be, and probably is, killing people. We’re potentially setting back scientific progress because of time wasted trying to build on experiments that don’t work. We are in the middle of a data deluge and picking from the many correct things is hard enough, without adding deceitful or misleading publications as well.

What concerns me, reading about increasing retraction rates and dodgy surveys, is that the questionable path to success may become the norm. People are already questioning perfectly good science, because of a growing mistrust fuelled by bad scientific behaviour, and “Well, I don’t know” is a de rigueur rejoinder in certain parts of the blogosphere.

I always talk about authenticity because it’s the backbone of my teaching. I have to believe it, or know it, or it just won’t work with the students. The day I think that our community is lost, I’ll no longer be able to train students to go to the fantasy land that I naively thought was reality and I’ll quit.

Come and find me, if I do, I’ll probably be working in a bar – and looking really unhappy.

Let’s get out of the geek box – professional pride is what we’re after.

Posted: April 23, 2012 Filed under: Education, Opinion | Tags: advocacy, education, educational problem, Generation Why, higher education, teaching, teaching approaches Leave a comment

As a member of the Information and Communication Technology (ICT) education community, I deal with a lot of students and, believe me, they come in all shapes, sizes and types. Could I pick one of my students out of a crowd by type alone? No. Could I pick a Science, Technology, Engineering and Mathematics (STEM) class from looking at who is sitting in the seats? Sadly, yes, but probably more from gender representation than anything else – and that is something that we’re very much trying to change.

I’m not a big fan of ‘Geek pride’ or attempting to ‘reclaim’ pejorative terms such as dork or nerd. I don’t see why we have to try and turn these terms around, much less put up with them. I have lots of interests – if I paint in oil, I’m an artist, if I sketch on an iPad, I’m a nerd? What? If I can discuss David Foster Wallace or Margaret Atwood’s books at length I’m educated but if I do the same thing with Science Fiction, I’m a geek? Huh? I work a lot in information classification so you can understand that (a) this doesn’t make much sense to me and (b) it highlights the problem that accepting the term, in any sense, might eventually give us ownership but it still allows people to put us in the geek box. Let’s get out of the geek box and reclaim a far more useful form of identity – professional pride in doing a job well, with a job that is worth doing.

Let me be more blunt – being good at my job and the interests I have outside of my job may have some relationship but it’s never going to be an ironclad correlation. Stereotypes aren’t useful in any area and, despite the popular stereotype of ICT and scientists on television and in other media, my community is made up of many, many different kinds of people. Like any other community.

Forcing us to identify as geeks, dorks or nerds; requiring people to have an all-consuming love of certain TV shows; resorting to a ‘geek shibboleth’ of unpopular or obscure information to confirm membership? These are ways to create a fragmented set of sub-communities that are divided, diminished and able to be ignored. It also provides a barrier to entry because people assume that they must pass these membership tests to join the community when this is not true at all. I don’t want people to ignore our stream of education and the profession because of their incorrect perception of what is required to be a member.

(If you want to watch Buffy, watch Buffy! But don’t feel that you can’t be a programmer because you prefer Ginsberg to Giles.)

I am not a geek. Or a dork. Or a nerd. I am interested in everything – like so many of my students and like so many other people! I want to communicate to my students that they don’t need to be in a box to play in the world. And they shouldn’t put other people in there, either.

Here are my rather loose thoughts but I’d really like to get some dialogue going in the comments if possible, to help me get a handle on it so that I can communicate these things with my students.

- My interests and my job have some connection but one does not completely define the other.

I am an educator, a computer scientist, a programmer, a systems designer – none of these need to be apologised for, tolerated by other people or somehow seen as beneath any other discipline. (This applies to all lines of work – a job done well is a matter of pride and should be respected, assuming that the job in question isn’t inherently unethical or evil.) I can do these jobs well. I also happen to be a painter, a writer, a singer, a guitar player and an amateur long distance runner. If I had listed these terms first, how would you have classed me? What are my job interests and what are my real interests? As it happens, I enjoy the works of Borges, Singer, and Stoppard – but I also enjoy Le Guin, Banks, Dick, Moorcock, Tiptree and Stephen King.

If I take professional pride in doing my job well, and I then do perform it well, my interests, or the stereotypes associated with my interests, are irrelevant. Feel free to question my taste, but don’t use it to tell me who I am, what I can do and how my work should be appreciated.

- All professions have jargon or, more precisely, all professions have a specific set of terms that are used to precisely convey information between practitioners. This is not cause for mockery or derision.

Watched “House” recently? When was the last time you went to the Doctor and called him or her a geek, even out of earshot, for referring to the abdomen instead of tummy? We’re all exposed to tech jargon because the tech is everywhere – when I use certain terms, I’m doing so to make sure that I’m referring to the right thing. We don’t want to turn tech talk into a shibboleth (a means of identifying the same religious group) but we want it to remain an accurate and concise way of discussing things in a professional sense. But, as a profession, this comes with an obligation…

- As a profession, communication with other people is worthy of attention because it is important.

When the pilots are flying your plane, they’ll try and communicate with you in a combination of pilot-specific language and normal human communication. ICT people have to do that all the time and, admittedly, sometimes we succeed more than others. Some people in my profession try to confound other people when speaking for a whole lot of reasons that aren’t really that important – please don’t do it. It’s divisive and it’s unnecessary. If people don’t know what you’re talking about, educate them. Use the right words to do your job and the right words to communicate with other people. We don’t want to turn ourselves into some kind of exclusive club because, ultimately, it’s going to work against us. And it is working against us.

- It’s time to grow up

Sometimes this all seems so… schoolyard. People called other people names and it caused group formation and division. Now, in an ongoing battle of “geek” versus “anti-geek” we revisit the playground and try and put people into boxes. It’s time to move away from that and accept that stereotypes are often untrue, although convenient, and that we don’t need to put people into these boxes. That applies to people outside the ICT community and to people inside the community. Every community has a range of people – you will always find people to support loose stereotypes but, look carefully, and you’ll always find people who don’t fit.

- We’re not smarter and our field isn’t so hard that only amazing people can do it

When some people go and talk to students they say things like “It’s hard but you get so much out of it”. What students hear is “It’s hard.” Saying “It’s hard” is worn like a badge of honour – as if you have to be worthy enough to do some things because they’re difficult.

Rubbish.

There are as many degrees of work difficulty as there are pieces of work and challenges range from easy to impossible – like any other discipline. It’s nice to feel smart, it’s nice to think you’ve conquered something but, being honest, you don’t need to be really smart to do these things although you do need to dedicate some time and thought to most of the activities. Yes, at the top end, there are scarily smart people. I’m not one of them but I admire those who have those skills and use them well. The really bright people are often some of the nicest and most humble. It’s another division that we don’t need.

I’m a great believer that we should tell students the truth, in the context of other professions. We have less memorisation than medicine but more freedom to create and innovate. In ICT we have fewer theorems than maths but more large programs where we try to string things together. We have fewer people pass out from fumes than Organic Chemistry but that’s a positive and a negative (Yes, I’m joking). We get to do amazing things but, like all amazing things, this requires study and work. It is completely achievable by the vast majority of students who qualify for University. We don’t need to be exclusive and divided – we want more people and we want our community to grow.

We have some seriously difficult challenges to solve in the coming decades. We’re not going to get anywhere by splintering communities, making false barriers to entry and trying to pretend that our schoolyard view is even vaguely indicative of reality.