Raising the Dead: The early and late lecture

Posted: May 15, 2012 | Filed under: Education | Tags: education, energy drink, higher education, principles of design, teaching, teaching approaches, zombie

I teach at a University that has about 25,000 students on a campus that is heavily constrained in terms of expansion space. A river lies along our northern boundary, a road borders the western edge, the south has a very large road (one of the main streets of the CBD – and then the CBD) and our eastern edge has another University and then a road. When it comes to expanding, we have to go up or down.

Fortunately, students stack vertically. (As evidenced by this picture from Indiana University Northwest.)

Because of this, we have to make good use of our teaching spaces – because building more is a challenge and our University continues to grow! While we always have enough space, sometimes this means that lectures start at 8am or go until 6-7pm so that we can accommodate the rich diversity of course pathways that students choose and we can get enough bottoms into the proximity of sufficient seats.

Of course, the earlier the lecture, the more likely you are to have the dreaded zombie student.

These aren’t the walking dead – these are the barely waking alive. It can be hard enough to get information across to people when they’re awake let alone when they’re semi-conscious and attempting to wake themselves up with caffeine, guarana and whatever chemical is found in the energy drink of choice. Now this isn’t limited to the early or late but it’s more often seen in these sessions – for me at least.

My students in Singapore are coffee, tea or (brace yourself) coffee-mixed-with-tea drinkers and will drink one to two over a daily session. My students in Adelaide can consume 4-6 large cans of energy drink and, by the end of it, appear awake but have the learning capacity of a slightly damaged brick.

I, as an ex-student, both understand and sympathise. For me, the early lecture meant dragging myself out of bed at the last minute, often after a late night, showering at speed, dashing into Uni and then, after all this adrenal explosion, sitting down for an hour of a traditional lecture. Back then, I didn’t drink coffee or tea, nor did I drink Coke that early in the morning and (strange to believe) we didn’t have energy drinks. As a result, the lecture had to compete with all of the lead-in excitement and, quite often, I had difficulty focusing. Later on, I discovered caffeine in a big way but, after finally working out the difference between alert and awake, I stopped using it to try and stay awake and started focusing on getting enough sleep.

But that took me a while to figure out.

These days, of course, I may have to deal with students who dashed in to make an 8 or 9am lecture, under similar circumstances, or have spent all day with us and I’m seeing them out at 5-6pm. Up in Singapore I may be dealing with people who’ve worked 5 and a half days and then spend 6 or 7 hours with me on the Saturday and Sunday. What does this mean?

My only defence against the zombie student is to engage those parts of the brain that are still human, still alive, and try to keep them from going all the way to the dark side. I have to be interesting, engaging and I have to involve the students in the lecture. A traditional ‘stand out the front and talk’ lecture is just not going to fly in this slot. As it is, I usually run around the room like a battery-powered cymbal-clapping monkey, regardless of time, but at the early and late sessions I have to make sure that everyone is involved, especially if they look like they’re nodding off. Sometimes this can be as simple as getting people to talk in small groups and give me an answer.

You won’t always be able to stop the zombies from taking over, especially when it’s been a really big weekend, but we know that they’re out there.

Waiting… well, sleeping. Mostly sleeping.

E-Library: Electronic or Ephemeral?

Posted: May 13, 2012 | Filed under: Education, Opinion | Tags: advocacy, blogging, design, eBook, education, educational problem, ephemeral library, ethics, Generation Why, higher education, resources, teaching, teaching approaches

My technical and professional library is a strange beast. Part Computer Science, part graphic design, part fiction, it’s made up of new books, books I had in Uni, books that I have inherited from other academics and books that I salvaged from libraries before they disappeared. But, of course, there is a new and growing section of my library, which you can’t see on the shelves – my E-Library. I realised this week that I have now started an E-Library collection that grows on a monthly basis as I add more content. I shall use the term eBooks for the rest of this post, but I’m not referring to a specific format – it’s just the digitised and electronically transferable image of a book that I’m concerned with.

Why am I buying eBooks? Because they arrive within minutes. I talk about this from a student perspective in tomorrow’s main post but, for me, I buy physical+electronic where I can because I will end up with a copy that I can use right now and a copy that I can add to my physical library.

Ephemeral Library X, © Krystyna Ziach

http://ny.artslant.com/global/artists/show/213982-krystyna-ziach?tab=ARTWORKS&user_id=209460

When I am gone, or when I retire, my professional library will be stripped for those things that will be kept, by me or my wife, and the rest will go out into the corridor, onto a table, for the rest of my colleagues and students to pick through. The remainder will probably be offered to a school, as the main library is not really interested in my 1950s Engineering texts. But what of texts that only exist in the Ephemeral Library? There are so many questions about this form of my library:

1. Will I even be able to transfer all of my books? I buy mostly from suppliers who allow me to legitimately transfer the electronic copies but there are some of my books that are locked to my identity or my machine.

2. How will I advertise them? Put up a webpage with a download link? That immediately breaches most publishers’ restrictions. Asking people to register their interest and then providing the books to them takes effort and, most likely, means that it will be a low priority.

3. Will the formats that I am buying today be a working format in 30 years’ time? We have a tendency to think in the now, forgetting that 78s are gone, 8-track is gone, cassette is mostly gone and vinyl is more fringe oriented than mainstream these days. Beta is buried deep in the ground with VHS buried just above it. The physical formats are being obliterated in the face of the relentless march of digitised containers but, remember, standards change and, worse, standards evolve within the standards themselves. At some stage BluRay X will break BluRay 1.2, most likely. In the same way, PDF 22 may lose the ability to handle earlier versions. Backwards compatibility is a grand goal but, time and again, we have eventually abandoned it on the argument that it is no longer necessary.

4. Will I maintain the burden of updating my media to make sure that 3 doesn’t happen? How much spare time do you have?

5. Finally, what happens when I die? I don’t think I’m allowed to transfer my iTunes account details to my wife – so over 260 songs will, at some stage, disappear from our shared iPods. The same for my library. Suddenly, books disappear. Possibly books that have not been published for years and will never be published again. Gutenberg dies and all of his Bibles spontaneously combust? Not the most robust model.

Obviously, part of the whole management process that will have to be recognised is the difference between renting, leasing and owning a digital property. If we are actually going to own things, and most people think that they own things but would be surprised if they read the fine print, we have to come up with a form of identity management that allows transfer of property to occur across legally recognisable lines. One can only hope that we’ve sorted out the simple things like child rearing, marriage, hospital visitation and social security access before we attempt to push through a global, trans-corporate, persistent rights management system that allows us to keep our collections together, even after we die.

Hurdles and Hang-ups: Identifying Those Things That Trip Up a Student

Posted: May 13, 2012 | Filed under: Education | Tags: curriculum, design, education, educational problem, feedback, Generation Why, higher education, learning, measurement, reflection, teaching, teaching approaches

Most of the courses I teach have a number of guidelines in place that allow a student to, with relative ease and fairly early on, identify if they are meeting the requirements of the course. Some of these are based around their running assessment percentages, where a student knows their mark and can use this to estimate how they’re travelling. We use a minimum performance requirement that says that a student must achieve at least 40% in every component (where a component is “the examination” or “the aggregate mark across the whole of their programming assignments”) and 50% overall in order to pass. If a student doesn’t meet this, but would otherwise pass, we can look at targeted remedial (replacement) assessment in order to address the concern.
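The minimum performance requirement can be read as a small decision rule. What follows is only a sketch of that reading, not the system we actually run: the function name `course_outcome`, the component names and the equal weights in the usage example are all assumptions made for illustration. Only the 40% and 50% thresholds come from the description above.

```python
def course_outcome(component_marks, weights, component_min=40.0, overall_min=50.0):
    """Classify a student under a minimum performance requirement.

    component_marks: dict of component name -> percentage mark (0-100)
    weights: dict of component name -> weight, assumed to sum to 1
             (the weighting scheme is an illustrative assumption)
    Returns (overall_percentage, outcome, components_below_minimum).
    """
    # Weighted overall mark across all components.
    overall = sum(component_marks[name] * weights[name] for name in component_marks)
    # Components that fall under the per-component minimum.
    below = sorted(name for name, mark in component_marks.items() if mark < component_min)

    if overall < overall_min:
        outcome = "fail"        # would not pass even without the component rule
    elif below:
        outcome = "remedial"    # would otherwise pass: candidate for replacement assessment
    else:
        outcome = "pass"
    return overall, outcome, below
```

For example, with an examination mark of 35 and an assignment aggregate of 80, equally weighted, `course_outcome({"exam": 35, "assignments": 80}, {"exam": 0.5, "assignments": 0.5})` gives an overall mark of 57.5 with the outcome "remedial", because only the examination is below 40%.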

Bazinga! (With apologies to the Austrian athlete involved, who suffered no more injury than some cuts to his lower lip and jaw.)

One of the things we use is often referred to as a hurdle assessment, an assessment item that is compulsory and must be passed in order to pass the course. One of the good things about hurdle assessments is that you can take something that you consider to be a crucial skill and require a demonstration of adequate performance in that skill – well before the final examination and, often, in a way that is more practically oriented. Because of this we have practical programming exams early on in our course, to resolve the issues of students who can write about programming but can’t actually program yet.

It would be easy to think of these as barriers to progress, but the term hurdle is far more apt in this case, because if you visualise athletic training for the hurdles, you will see a sequence of hurdles leading to a goal. If you fall at one, then you require more training and then can attempt it again. This is another strong component of our guidelines – if we present hurdles, we must offer opportunities for learning and then reassessment.

Of course, this is the goal, role and burden of the educator: not the cheering on of the naturally gifted, but the encouragement, development and picking up of those who fall occasionally.

Picked correctly, hurdles identify a lack of ability or development in a core skill that is an absolute pre-requisite for further achievement. Picked poorly, they encourage misdirected effort, rote learning or eye-rolling by students as they undertake compulsory make-work.

I spend a lot of time trying to frame what is happening in the course so that my students can keep an eye on their own progress. A lot of what affects a student is nothing to do with academic ability and everything to do with their youth, their problems, their lives and their hang-ups. If I can provide some framing that tells them what is important and when it is important for it to be important, then I hope to provide a set of guides against which students can assess their own abilities and prioritise their efforts in order to achieve success.

Students have enough problems these days, with so many of them working or studying part-time or changing degrees or … well… 21st Century, really, without me adding to it by making the course a black box where no feedback or indicators reach them until I stamp a big red F on their paperwork and tell them to come back next year. If they can still afford it.

Rule 0: Read Your Sources Before You Cite Them

Posted: May 12, 2012 | Filed under: Education, Opinion | Tags: authenticity, blogging, education, ethics, higher education, knuth, plagiarism, reflection, teaching, teaching approaches

(This, once again, is a little more opinion/political but it does touch on some important teaching points and might be useful for a class in ethics. However, some of you might find my editorial stance disagrees with your perspective.)

Some of you will have seen that the Chronicle of Higher Education recently fired one of their blogging staff because she “did not meet The Chronicle’s basic editorial standards for reporting and fairness in opinion articles”. You can find the story in a number of places, and there’s a reasonable summary here, but, despite people trying to turn this into a debate on “left-wing victimisation clap trap” versus “freedom of speech” versus any number of the quite offensive straw men that were put up in the original blog, Naomi Schaefer Riley committed the cardinal sin.

She published work that made a claim which could not be substantiated by the references.

The title of her blog was “The Most Persuasive Case for Eliminating Black Studies? Just Read the Dissertations.” but, as it turned out, she hadn’t. The dissertations weren’t available to read so she wrote a scathing, dismissive and quite unpleasant article on incomplete knowledge. Then, when called on it, she claimed that she didn’t need to read them to write a 500 word blog post.

Regardless of everything else in the post, regardless of who is right, this is just not acceptable. Had she started from a position of assessing the abstracts, drawing a long bow and then saying “But, of course, we have to see the dissertations”, I suspect she’d still have her job. Journalists do this all the time. However, like scientists, there comes a point where you have to be able to pick up the grain of truth that you’re standing on and point to it. If it turns out that you’ve, effectively, made something up or, worse, misrepresented what you’ve read, then that’s unacceptable and in this case, quite rightly, the Chronicle asked her to go.

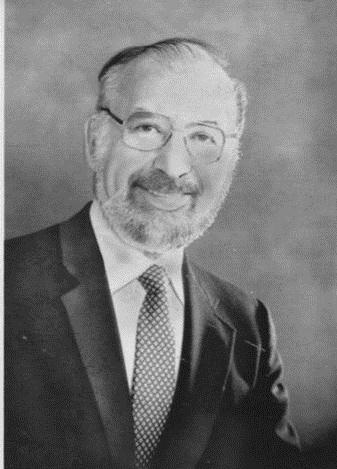

A book by Donald Knuth (which I won’t be speaking about today but there are not that many good Knuth shots. Don’t Google Image Search for him at work, because you’ll get an underwear model as well. [WHO IS NOT DONALD KNUTH, I HASTEN TO ADD.])

Of course, you know that I discovered that people had done this. How? The survey paper, to avoid plagiarising Knuth, had rephrased one of the clear and concise explanations – and they had introduced a distinctive way of representing the problem. (I still found the original much clearer.) It got to the stage that I could tell who had read the original or the survey from which twist they had in their framing paragraph for a key point, without having to spend time looking at the references.

Why had people done this? Because Knuth wasn’t readily available. Being in a 1965 publication meant that many libraries had shunted these ‘old books’ to stores as newer volumes came in and it required a week or two to get it back, sometimes longer. Sometimes these volumes were lost forever. (These days, I’m happy to say, there are many on-line sources for this paper. So there’s no excuse, if you’re in CS, you go off now and read yourself some Knuth.) The survey paper was easy to find and was pretty well written. It was just unfortunate that a wrinkle had crept in that allowed us to tell Knuth from Knuth-prime.

It’s still no excuse. It’s a pretty basic rule for us – if you’ve only read the abstract, you haven’t read the paper. If you haven’t read the paper, you can’t cite the paper. If you’ve read a survey, then you can cite the survey but not one of the surveyed papers. But, categorically and set in stone, if you haven’t read the paper then you can’t criticise the paper.

Personally, I think that Naomi Schaefer Riley’s article was pretty badly written, unnecessarily vicious and was the kind of article I’d describe as “written by the food critic before they entered the restaurant”. But that’s only my opinion of the worth of the article. For that, should she lose her job? No, of course not – we differ, that’s life. But for writing an article that insinuated in the text, and stated in the heading, that she had read something, upon which she based a vitriolic criticism, which she then recanted, claiming she didn’t have enough time?

I could lose my job for that. I could even lose my PhD for that.

My Vice Chancellor could lose his job for that.

It’s a bit of a shame that it took some community nudging for the Chronicle to do something here, but I think they did the right thing. If you want to write about our world and our standards, then I think you pretty much have to exemplify them yourselves. It’s all about authenticity. Fairness. Ethics. Something that I hope Naomi Schaefer Riley can think about and learn from. I hope she’s had a chance to think about this and go forward constructively from it sometime in the future. Maybe no-one has ever called her on it before? Either way, the next time she shows up, I’ll happily read what she’s written – but I will be checking her references.

Once again, XKCD says it all – “Share Your Knowledge With Joy”

Posted: May 11, 2012 | Filed under: Education | Tags: blogging, education, higher education, identity, learning, randall munroe, teaching, teaching approaches, tools, xkcd

In a recent XKCD post, Randall Munroe asks us why we criticise people when they don’t know something, rather than taking it as an opportunity to inform and delight them. After all, what is the actual benefit of belittling someone if they haven’t happened to have been exposed to the same information as you?

Well, that’s an excellent question. And, if you’re an educator, it’s the essential question.

We know that our students come to us without the information that they need. Because of this, they are regularly going to not know things and, sometimes, that’s going to be frustrating, but that’s what we’d expect.

I’ve run across it a few times myself when I’ve been surprised that people haven’t known basic (and to me common) terms in other languages like French or German. Why should they? I was raised in England, intermittently around French speakers, and have been exposed to European languages in one form or another for 40 years. I studied French at school and have German-speaking friends and colleagues, who I’ve visited. When someone doesn’t know what bon mot, or soupçon means, that’s not actually an indicator of anything, except that they don’t know it yet. Ok, hand up in shame, I have, in the past, been obviously surprised when someone didn’t know something but, over the last few years, I’ve worked really hard to curb it and try to be positive and informative, rather than being a schmuck.

After all, when I was a wine making student, a Microbiology PhD student sneered at me, quite effectively, because I didn’t know how to prepare a certain type of sample. The fact that I had never been shown, it hadn’t been a pre-requisite, and that it was actually his job to show me apparently eluded him on the day. Net result? 10 years later I remember being made to feel small but I still don’t remember how to prepare that sample.

I know what it’s like when someone decides to feel superior through exclusivity, rather than get a kick out of sharing the knowledge. Even if it wasn’t my job, even if knowledge sharing wasn’t something I enjoyed, even if it wasn’t the only ethically defensible choice – I should still be doing the right thing because I know what it’s like to be on the other side.

Thanks again, Randall, for a potted summary, in fun cartoon form, to remind us what it means to not be a schmuck.

The Student as Creator – Making Patterns, Not Just Following Patterns

Posted: May 10, 2012 | Filed under: Education | Tags: design, education, higher education, maze, modes of thinking, teaching, teaching approaches, thinking

We talk a lot about what we want students to achieve. Sometimes the students hear the details and sometimes they hear “Do your work, pass your courses, get your degree, wave paper in air, throw hat, profit.” Now, of course, sometimes they hear that because that’s what we say – or that’s what their environment, the people around them, even the employers say.

The image above is a Chinese-inspired maze pattern. Composed of simple elements, it can become complex quickly. If you built a hedge maze along these lines you could probably keep a lot of people lost for some time, simply because they wouldn’t necessarily be able to decompose the maze to the simple patterns, work out the composition and then solve the problem.

I can teach someone to follow a maze easily. In fact, this is probably done by the time they’ve finished school. Jump on the track, do your work, stay inside the lines, keep walking until you find the goal. Teaching someone to be able to step back, observe the patterns and then arrive at the goal more efficiently can also be taught, or it can arrive with experience. But, going further, being able to look at the maze and construct a brand new maze, potentially with new patterns or composition techniques, requires inspiration. You can reach this point with a fantastic brain and a lifetime of experience (we must have been able to do this) but, these days, we can also teach students abstraction, thinking, the right way to go about a problem so that they move beyond following the hedges or being able to build exactly the same kind of maze again.

This brings the student into a new mode of thinking: as a creator, rather than a pattern matcher or a follower. It is, by far, the hardest thing to teach as it requires you to concentrate on providing an environment that supports and encourages creativity, as well as making sure that no-one is trying to build mazes that defy gravity, or where you can walk through concrete walls. (I note that these initial grounding constraints may relax later on – once people have a good grasp of the basics, creativity can take them to places where you can walk through walls.)

Of course, focussing on the mechanics of getting the piece of paper at the end of the degree, as if this was the objective, doesn’t lead to the right way of thinking. Getting into the right space requires us to focus on what should really be happening: the successful transfer of knowledge, the building of frameworks for knowledge development and a robust basis for creative and critical thought. This can, and does, occur spontaneously – but trying to make it happen more often results in a much larger group of people who can, potentially, change the world.

Deadlines and Decisions – an Introduction to Time Banking

Posted: May 9, 2012 | Filed under: Education | Tags: education, higher education, learning, measurement, perry, reflection, resources, teaching, teaching approaches, time banking, time management, tools

I’m working on a new project, as part of my educational research, to change the way that students think about deadlines and time estimation. The concept’s called Time Banking and it’s pretty simple. Some schools already give students some ‘slack time’: free extension time that the students manage themselves, so that they control their own deadlines. Stanford offers 2 days up front so, at any time in the course, you can claim some extra time and give yourself an extension.

The idea behind Time Banking is that you get extra hours if you hand up your work (to a certain standard) early. These hours can be used later as free extensions for assignments, up to some maximum number of days. This makes deadlines flexible and personalised per student.

Now I know that some of you already have your “Time is Money, Jones!” hats on and may even be waggling a finger. Here’s a picture of what that looks like, if you’re not a-waggling.

“Deadlines are fixed for a reason!”

“We use deadlines to teach professional conduct!”

“This is going to make marking impossible.”

“That’s not the right way to tie a bow tie!”

“It’s the end of civilisation as we know it!” (Sorry, that’s a little hyperbolic)

Of course, some deadlines are fixed. However, looking back over my own activities during the past quarter, I have far more negotiable and mutable deadlines than I do fixed ones. Knowing how to assess my own use of time in the face of a combination of fixed and mutable deadlines is a skill that I refine every year.

If I hand up late, telling me to hand up on time or start earlier doesn’t really involve me in the process that’s required: making a decision as to how I’m going to manage all of my commitments over time, rather than panicking when I run into a deadline.

I can’t help thinking that forcing students to treat every assignment deadline as fixed, whether it needs to be or not, doesn’t deal with the student in the way that we try to in every other sphere. It makes them depend upon the deadline from an authority, rather than forcing them to look at their assignment work across a whole semester and plan inside that larger context. How can we produce students who are able to work at the multiplicity or commitment level, sorry, Perry again, if we force them to be authority-dependent dualists in their time management?

Now, before you think I’ve gone mad, there are some guidelines for all of this, as well as the requirement to have a good basis in evidence.

1. We must be addressing an existing behavioural problem. (More on this later.)

2. Some deadlines are immutable. This includes weekly dependencies, assignments where the solutions are revealed post submission, and ‘end of semester’ close-off dates.

3. The assessment of ‘early and satisfactory’ must be low effort for the teacher. We don’t want to encourage handing up empty assignments a week ahead. We want to encourage meeting a certain standard, preferably automatically assessed, to bring student activity forward.

4. We have limits on the amount you can bank or spend, to keep assessment of the submitted materials inside the realm of possibility and, again, to reduce unnecessary load on the staff.

5. We don’t tolerate bad behaviour. Cheating or system fiddling immediately removes the student from the scheme.

6. We provide up-front hours to give all students a base line of extension.

7. We integrate this with our existing ‘system problem’ and ‘medical/compassionate problem’ extension systems.

Now, if students don’t have a problem, there’s nothing to fix. If our existing fixed deadline system encouraged students to start their work at the right time and finish in a timely fashion, then by final year, we wouldn’t need anything like this. However, my data from our web submission system clearly indicates the existence of ‘persistently’ late students and, in fact, rather than getting better, we actually start to see some students getting later in second, third and honours years. So, while this isn’t concrete, we’re not seeing the “Nope, no problem here” behaviour that we’d like. So that’s point 1 dealt with – it looks like we have a problem.

Most of the points are technical issues or components of an economic model, but 6 and 7 address a more important issue: equity. Right now, if your on-line submission systems crash the day before the assignment is due, what happens? Everyone who handed in their work has done the right thing but, because you have to grant a one day extension, they actually prioritised their work too early. Not a huge deal in many ways, because students who get their work in early probably march to a different drum anyway, but it makes a mockery of the whole fixed deadline thing. Either the deadline is fixed or it isn’t – by allowing extension on a broad scale for any reason, you’re admitting that your deadline was arbitrary.

We’re trying to make them think harder than that.

How about, instead, you hand out 24 hours of time in the bank? Now the students who handed up early have 24 hours to spend later on and the students who didn’t get it in before the crash have a fair chance to get their work in on time. If a student gets sick, your medical extensions are now just managed as time in the bank, reflecting the fact that knock-on effects can be far greater than just getting an extension for a single assignment.

But we don’t go crazy. My current thoughts are that we’d limit the students to only starting to count early about 2 days before the assignment is due, and allow a maximum of 3 days extension (greater for medical or compassionate). This keeps it in our marking boundary and also, assuming that you’ve placed your assignments in the context of the appropriate knowledge delivery, keeps the assignments roughly in the same location as the work – not doing the assignment at the beginning of the term and then forgetting the knowledge.
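To make the bookkeeping concrete, here is a minimal sketch of how a per-student bank might behave under the limits just described. It is an illustration under stated assumptions, not the system being specified: the class name `TimeBank`, its fields and the returned status strings are hypothetical, and the size of the up-front grant is left to whoever sets up the course. Only the 2-day window for earning hours and the 3-day cap on a standard extension come from the text.

```python
from dataclasses import dataclass


@dataclass
class TimeBank:
    """Hypothetical per-student time bank; all names here are illustrative."""
    balance_hours: float = 0.0          # up-front grant (amount is an assumption)
    max_early_hours: float = 48.0       # early credit only counts from about 2 days out
    max_extension_hours: float = 72.0   # at most 3 days of extension per assignment

    def submit(self, hours_before_deadline: float, meets_standard: bool = True) -> str:
        """Record one submission.

        hours_before_deadline is positive for early work and negative for late work.
        """
        if hours_before_deadline >= 0:
            # Early work earns hours only if it meets the required standard,
            # and only up to the 2-day window.
            if meets_standard:
                self.balance_hours += min(hours_before_deadline, self.max_early_hours)
            return "on time"

        hours_late = -hours_before_deadline
        # Late work is covered only if the bank and the per-assignment cap allow it.
        if hours_late <= min(self.balance_hours, self.max_extension_hours):
            self.balance_hours -= hours_late
            return "on time (banked hours spent)"
        return "late"
```

A student who starts with 24 hours and hands up 60 hours early is credited with 48 hours (the window cap), giving a balance of 72; handing the next assignment up 30 hours late then costs 30 of those hours. Medical or compassionate extensions, which may exceed the 3-day cap, are deliberately left out of this sketch.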

So, cards on the table, I’m writing a paper on this, identifying exactly what I need to look at in order to demonstrate if this is a problem, the literature that supports my approach, the objections to it and the obstacles. I also have to spec the technical system that would support it and, yes, identify the range of assignments for which it would work. It won’t work for everything/everyone or every course. But I suspect it might work very well for some areas.

Could we allow team banking? Course banking? Social sharing? Community involvement (donation to charity for so many hours in the bank at the end of the course)? What could we do by involving students in the elastic management of their own time?

There’s a lot more but I’d love to hear some thoughts on it. I look forward to the discussion!

Spot the Computer Science Student and Win!

Posted: May 8, 2012 | Filed under: Education | Tags: advocacy, computer science, computer science student, education, higher education, learning, measurement, resources, stereotype, student, teaching approaches

CS Students get a pretty bad rap on that whole “stereotype” thing. Given that I’m an evidence-based researcher, let’s do some tests to find out if we can, in fact, spot the CS student. Here’s a quick game for you. Hidden in this image are 3 Computer Science students.

Which ones are they? (You can click on the image to enlarge it.)

I’ll make it easy for you to reference them – we’ll number the rows from the top (A) to the bottom (H) and the images from left to right as 1 to … well, whatever, because the rows aren’t the same length. So the picture with the cactus is A2, ok? Got it? Go!

Who did you pick? Got the details? Now scroll down.

Of course, if you know me at all, you probably know the answer to this already.

They’re ALL Computer Science students – well, they’re found in an image search for “I am a Computer Science student” and, while this is not guaranteed, it means that most of these students are in CS. Now, knowing that, go back and look at the ones you thought were music majors, physicists, business students, economics people. Yes, one or two of them probably look more likely than most but – wait for it – they don’t all look the same. Yeah, you know that, and I know that, but we just have to keep plugging away to make sure that everyone ELSE gets that. Heck, the pictures above are showing fewer pairs of glasses per person than you would expect from the average and there’s not even one light sabre! WON’T SOMEONE THINK OF THE STEREOTYPES???

This is only page 2 of the Image Search and I picked it because I liked the idea of some inanimate objects being labelled as CS students as well. Oh, that’s right, I said that you’d win something. You know never to trust me with statements unless I’m explicit in my use of terminology now. Sounds like a win to me!

(Of course, the guy with red hair is giving the strong impression that he now knows that you were looking at him on the Internet. I don’t know if you wanted that but that’s just how it is.)

Heroes

Posted: May 7, 2012 Filed under: Education | Tags: advocacy, alan turing, Arland D Williams, Arland Williams, bruno schulz, education, heroes, higher education, inspiring students, learning, reflection, teaching, teaching approaches, turing, work/life balance

I know that I learn best when I’m inspired and engaged, so I regularly look for things around me that I can bring into the classroom that go beyond “program this” or “design that”. Our students are surrounded by the real world and, unfortunately, it’s easy to understand why they might be influenced by things that are less than inspirational. I don’t want to be negative, but there are so many examples of bad behaviour on the national and international stage that, sometimes, you really wonder why you bother.

So, today, I’m going to talk about four people. Regrettably, some of you won’t be able to talk about three of them because of personal convictions, political considerations or the ages of your class, but I hope that most of you will either have learned something new or remembered something important by the time I’m finished. Are these people actually heroes, given the title of my post? Well, one is a professional inspiration to me, one is an artistic inspiration to me (and reminder of the importance of what I’m doing), one is generally inspiring in the area of democracy and dedication, and the other… well, the other, I can barely look at his picture without wondering if I could ever approach the level of selflessness and heroism that he demonstrated. But I’ll talk about him last.

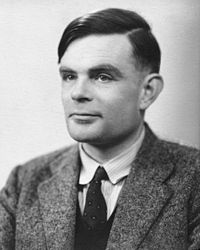

This is Alan Turing, the most likely candidate for the term “Father of Computer Science”. Witty, well-educated, highly intelligent and thoughtful, he was a leader in cryptanalysis at Bletchley Park, bringing statistical and mathematical genius to breaking codes, including the design of the bombe, the machine that attacked Enigma. Importantly, for me as a Computer Scientist, he developed Turing Machines, effectively providing the foundations of studies in the theory of computation. He provided the first detailed design of a computer that used a stored program, very different from the electrical calculators of the day. He defined some of the key terms that we still use in Artificial Intelligence. (There’s so much more that wouldn’t mean much to you outside the discipline, but he’s well worth looking up.)

Of course, some of you can’t mention Turing to your students, because he was a known homosexual, with a conviction for gross indecency in 1952 after admitting to a consensual homosexual relationship. He had a choice between imprisonment and chemical castration (he chose the latter); his security clearance was revoked and he was barred from continuing with his security work. He was found dead in 1954, having (most likely) committed suicide.

There is no doubt that the field I am in is the better (or even exists) for Turing having lived and worked in this field. We are poorer for his early loss and, personally, I’m ashamed that persecution based on his sexual orientation may have led to the premature self-administered death of a genius.

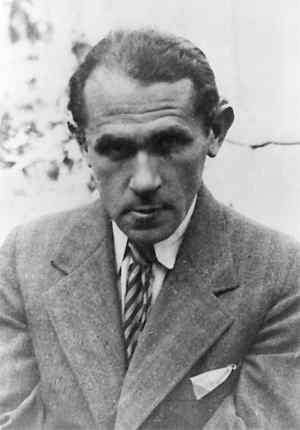

Meet Bruno Schulz, author, artist and critic. Schulz wrote some incredible works, contributed murals and was, despite his somewhat hermitic nature, an influential contributor to the arts. Schulz was born and lived, for most of his life, in Drohobych, Galicia. His contributions, although limited by his early death, include the highly influential works “Sanatorium Under the Sign of the Hourglass” and “The Street of Crocodiles”. In 1938, he was awarded the Polish Academy’s Golden Laurel award for his works and translations.

I am currently writing a series of stories that were inspired, in part, by the “Sanatorium” with its dreamlike qualities, stories interweaving with unreliable narration and innate and unexpected metamorphoses. Schulz is a fascinating counterpoint to Borges for me, woven with the immersion in Jewish culture I would expect from Singer, but with a different tone that comes through, even in the English translations I have to read.

We have no more works from Schulz, not even the fragments of the book he was working on at the time of his death, “The Messiah”. Why was Schulz killed? After the German invasion of the Soviet Union in the Second World War, Drohobych was occupied and, for a time, Schulz (who was Jewish) was protected by a Gestapo officer who admired his artistic work. Unfortunately, another Gestapo officer, a rival of the first, decided to kill this “personal Jew” and shot Schulz on the way home. You will excuse me for being confusing by referring to neither officer by name.

This person you may have heard of. Fang Lizhi died very recently, a Chinese Astrophysicist who lived in exile for over 22 years, after a life spent trying to pursue science despite being politically persona non grata and, for many years, not being able to publish under his own name. He survived hard labour during his re-education by the worker class during the cultural revolution but continued to fight against what he saw as severe obstacles to the pursuit of his scientific aims, including proscriptive ideological opposition to some of the key ideas required to be a successful astrophysicist or cosmologist.

In 1989, he was highly instrumental in the movement that occupied Tiananmen Square, despite not being directly involved in the protest and, once those protests had been dealt with, he and his wife decided that their safety was no longer assured and sought refuge at the US Embassy. He remained in the embassy for over a year, while diplomatic negotiations continued. Eventually he was allowed to leave and had an international career in his discipline, as well as speaking regularly on human rights and social responsibility. Of all the people on this list, only Professor Fang died of old age, at 76, having managed to escape from the situation in which he found himself.

We talk a lot about academic freedom, or the entitlement to academic freedom, but we often forget that there is a harsh and heavy price imposed for it, depending upon the laws and the governments in which we find ourselves. That is a hard and heavy lesson.

Some of you will not be able to talk about Alan Turing, because he was gay. Some of you may have difficulty discussing Bruno Schulz, because of the involvement of Nazis or because he was a Jew. Some of you may have stopped reading the moment you saw the picture of Fang Lizhi, because you didn’t want to get into trouble. Please keep reading.

So let me give you the story of the first man on this page. Let me tell you about a man who was a bank investigator. Recently divorced, with a youngest child of 17. I want to tell you about him because his story is the simplest and the most complex. He has no giant academic backstory, no grand contribution to literature, no oppression to fight. He just chose to be good.

In 1982, Arland D. Williams, Jr, was a passenger on board a plane from Washington DC to Florida, Air Florida Flight 90, that took off in freezing weather, iced up, failed to gain altitude and slammed into the 14th Street Bridge across the Potomac. The crash killed four motorists and the plane slid forward, down into the Potomac, with the tail breaking off as it did so. There were 79 people on board. Only 6 made it up and onto the tail, which was still floating.

When the rescue helicopter got there, they started recovering people from the tail section, dropping rescue ropes. Williams caught the rescue ropes multiple times and, instead of using them for himself, he handed them to the other passengers.

Life vests were dropped. Rescue balls. He handed them on.

The helicopter, overloaded and struggling with the conditions, got every other survivor back to shore, sometimes having to pick up the weak survivors multiple times. But Williams made sure that everyone else got helped before he did.

Sadly, tragically, by the time the helicopter came back for him, the tail section had shifted and sunk, taking him with it. As it happened, Williams had made so little fuss about himself during his actions that his identity had to be determined after the fact.

It would be easy, and cynical, to describe human beings in terms of animals, given some of the awful things we do. Taking away a man’s livelihood (maybe even killing him) because of who he’s in love with? Killing someone because you have an argument with someone else? Persecuting someone for trying to pursue science or democracy?

Yet their stories survive, and we learn. Slowly, sometimes, but we learn.

It would be easy to assume that everyone, when desperate enough, would scrabble like rats to survive. (Except, of course, that not even rats do that. We just tell ourselves they do because we sometimes can’t recognise that this is just a paltry excuse for human evil.)

Here is your counterexample – Arland Williams. Here is your existential proof that refutes the “WE ARE ALL LIKE THIS” Myth. There are so many more. Go back to the top of the page and look at that ordinary, middle-aged man. Look at someone who looked down at the freezing water around him and decided to do something great, something amazing, something heroic.