369 (+2)

Posted: November 3, 2012 Filed under: Education, Opinion | Tags: advocacy, authenticity, blogging, community, data visualisation, design, education, educational problem, ethics, feedback, Generation Why, higher education, in the student's head, resources, student perspective, teaching, teaching approaches 1 Comment

The post before my previous post was my 369th post. I only saw because I’m in manual posting mode at the moment and it’s funny how my brain immediately started to pull the number apart. It’s the first three multiples of 3, of course, 3, 6, 9, but it’s also 123 x 3 (and I almost always notice 1,2,3). It’s divisible by 9 (because the digits add up to 18, which is itself divisible by 9), which means it’s also divisible by 3 (which gives us 123, as I said earlier). So it’s non-prime (no surprises there). Some people will trigger on the 36x part because of the 365/366 number of days in the year.
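For anyone who would rather let the machine pull the number apart, here is a minimal Java sketch of the same checks. The class and method names (`NumberFacts`, `digitSum`, `isPrime`) are made up for illustration and are not from any library.

```java
// Hypothetical helper class for illustration only: it checks the
// observations made about 369 in the paragraph above.
final class NumberFacts {

    // Sum of the decimal digits of a non-negative number.
    static int digitSum(int n) {
        int sum = 0;
        while (n > 0) {
            sum += n % 10;
            n /= 10;
        }
        return sum;
    }

    // Simple trial division; perfectly adequate for small numbers like 369.
    static boolean isPrime(int n) {
        if (n < 2) {
            return false;
        }
        for (int d = 2; (long) d * d <= n; d++) {
            if (n % d == 0) {
                return false;
            }
        }
        return true;
    }

    public static void main(String[] args) {
        int n = 369;
        System.out.println("digit sum: " + digitSum(n));          // 3 + 6 + 9
        System.out.println("divisible by 9: " + (n % 9 == 0));
        System.out.println("n / 3: " + (n / 3));
        System.out.println("prime: " + isPrime(n));
    }
}
```

The digit sum comes out as 18, which is a multiple of 9, so the divisibility-by-9 rule applies, and 369 factors as 9 x 41.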

That’s pretty much where I stop on this, and no doubt there will be much more in the comments from more mathematical folk than I, but numbers almost always pop out at me. Like some people (certainly not all) in the fields of Science, Technology, Engineering and Mathematics, numbers and facts fascinate me. However, I know many fine Computer Scientists who do not notice these things at all – and this is one of those great examples where the stereotypes fall down. Our discipline, like all the others, has mathematical people, some of whom are also artists, musicians, poets, jugglers, juggalos, but it also has people who are not as mathematical. This is one of the problems when we try to establish who might be good at what we do or who might enjoy it. We try to come up with a simple identification scheme that we can apply – the danger being, of course, that if we get it wrong we risk excluding more people than we include.

So many students tell me that they can’t do computing/programming because they’re no good at maths. Point 1, you’re probably better at maths than you think, but Point 2, you don’t have to be good at maths to program unless you’re doing some serious formal and proof work, algorithmic efficiencies or mathematical scientific programming. You can get by on a reasonable understanding of the basics, and yes, I do mean algebra here but very, very low level, and focus as you need to. Yes, certain things will make more sense if your mind is trained in a certain way, but this comes with training and practice.

It’s too easy to put people in a box when they like or remember numbers, and forget that half the population (at one stage) could bellow out 8675309 if they were singing along to the radio. Or recite their own phone number from the house they lived in when they were 10, for that matter. We’re all good for numbers of about 7 digits, and we have a few slots for them, although the introduction of smart phones has reduced the number of numbers we have to remember.

So in this 369(+2)th post, let me speak to everyone out there who ever thought that the door to programming was closed because they couldn’t get through math, or really didn’t enjoy it. Programming is all about solving problems, only some of which are mathematical. Do you like solving problems? Did you successfully dress yourself today?

Did you, at any stage in the past month, run across an unfamiliar door handle and find yourself able to open it, based on applying previous principles, to the extent that you successfully traversed the door? Congratulations, human, you have the requisite skills to solve problems. Programming can give you a set of tools to apply that skill to bigger problems, for your own enjoyment or to the benefit of more people.

Road to Intensive Teaching: Post 1

Posted: November 2, 2012 Filed under: Education | Tags: advocacy, authenticity, collaboration, community, curriculum, data visualisation, design, education, educational problem, educational research, Generation Why, grand challenge, higher education, in the student's head, learning, networking, principles of design, reflection, resources, student perspective, teaching, teaching approaches, thinking, tools Leave a comment

I’m back on the road for intensive teaching mode again and, as always, the challenge lies in delivering 16 hours of content in a way that will stick and that will allow the students to develop and apply their understanding of the core knowledge. Make no mistake, these are keen students who have committed to being here, but it’s both warm and humid where I am and, after a long weekend of working, we’re all going to be a bit punch-drunk by Sunday.

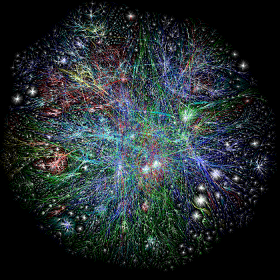

That’s why there is going to be a heap of collaborative working, questioning, voting, discussion. That’s why there are going to be collaborative discussions of connecting machines and security. Computer Networking is a strange beast at the best of times because it’s often presented as a set of competing models and protocols, with very few actual axioms beyond “never early adopt anything because of a vendor promise” and “the only way to merge two standards is by developing another standard. Now you have three standards.”

There is a lot of serious Computer Science lurking in networking. Algorithmic efficiency is regularly considered in things like routing convergence and the nature of distributed routing protocols. Proofs of correctness abound (or at least are known about) in a variety of protocols that, every day, keep the Internet humming despite all of the dumb things that humans do. It’s good that it keeps going because the Internet is important. You, as a connected being, are probably smarter than you, disconnected. A greater reach for your connectivity is almost always a good thing. (Nyancat and hate groups notwithstanding. Libraries have always contained strange and unpleasant things.)

“If I have seen further, it is by standing on the shoulders of giants” (Newton, quoting Bernard of Chartres) – the Internet brings the giants to you at a speed and a range that dwarfs anything we have achieved previously in terms of knowledge sharing. It’s not just about the connections, of course, because we are also interested in how we connect, to whom we connect and who can read what we’re sharing.

There’s a vast amount of effort going into making the networks more secure and, before you think “Great, encrypted cat pictures”, let me reassure you that every single thing that comes out of your computer could, right now, be secretly and invisibly rerouted to a malicious third party and you would never, ever know unless you were keeping a really close eye (including historical records) on your connection latency. I have colleagues who are striving to make sure that we have security protocols that will make it harder for any country to accidentally divert all of the world’s traffic through itself. That will stop one typing error on a line somewhere from bringing down the US network.

“The network” is amazing. It’s empowering. It is changing the way that people think and live, mostly for the better in my opinion. It is harder to ignore the rest of the world or the people who are not like you, when you can see them, talk to them and hear their stories all day, every day. The Internet is a small but exploding universe of the products of people and, increasingly, the products of the products of people.

Computer Networking is really, really important for us in the 21st Century. Regrettably, the basics can be a bit dull, which is why I’m looking to restructure this course to look at interesting problems, which drives the need for comprehensive solutions. In the classroom, we talk about protocols and can experiment with them, but even when we have full labs to practise this, we don’t see the cosmos above, we see the reality below.

Nobody is interested in the compaction issues of mud until they need to build a bridge or a road. That’s actually very sensible because we can’t know everything – even Sherlock Holmes had his blind spots because he had to focus on what he considered to be important. If I give the students good reasons, a grand framing, a grand challenge if you will, then all of the clicking, prodding, thinking and protocol examination suddenly has a purpose. If I get it really right, then I’ll have difficulty getting them out of the classroom on Sunday afternoon.

Fingers crossed!

(Who am I kidding? My fingers have an in-built crossover!)

I am a potato – heading towards caramelisation. (Programming Language Threshold Concepts Part II)

Posted: October 28, 2012 Filed under: Education | Tags: curriculum, design, education, educational problem, educational research, feedback, Generation Why, higher education, in the student's head, learning, measurement, principles of design, reflection, resources, student perspective, teaching, teaching approaches, thinking, threshold concepts, tools Leave a comment

Following up on yesterday’s discussion of some of the chapters in “Threshold Concepts Within the Disciplines”, I finished by talking about Flanagan and Smith’s thoughts on the linguistic issues in learning computer programming. This led me to the theory of markedness, a useful way to think about some of the syntactic structures that we see in computer programs.

Let me introduce the concept of markedness with an example. Consider the pair of opposing concepts big/small. If you ask how ‘big’ something is, then you’re not actually assuming that the thing you’re asking about is ‘big’, you’re asking about its size. However, ask someone how ‘small’ something is and there’s a presumption that it’s actually small (most of the time). The same thing happens for old/young. Asking someone how old they are, bad jokes aside, is not implying that they are old – the word “old” here is standing in for the concept of age. This is an example of markedness in the relationship between lexical opposites: the assumed meaning (the default) is referred to as the unmarked form, where the marked form is more restrictive (in that it doesn’t subsume both concepts) and it is generally not the default. You see this in gender and plural forms too. In Lions/Lionesses, Lions is an unmarked form because it’s the default and it doesn’t exclude the Lionesses, whereas Lionesses would not be the general form used (for whatever reasons, good or bad) and excludes the male lions.

Why is this important for programming languages? Because we often have syntactic elements (the structures and the tokens that we type) that take the form of opposing concepts where one is the default, and hence unmarked, form. Many modern languages employ object-oriented programming practices (itself a threshold concept) that allow programmers to specify how the data that they define inside their programs is going to be used, even within that program. These practices include the ability to set access controls that strictly define how you can use your code, how other pieces of code that you write can use your code, and how other people’s code can use it, as well. The fundamental access control pairs are public and private: one says that anyone can use this piece of code to calculate things or can change this value; the other restricts such use or change to the owner. In the Java programming language, public dominates, by far, and can be considered unmarked. Private, however, changes the way that you can work with your own code and it’s easy for students to get this wrong. (To make it more confusing, there is another type of access control that sits effectively between public and private, which is an even more cognitively complex concept and is probably the least well understood of the lot!) One of the issues with any programming language is that deviating from the default requires you to understand what you are doing because you are having to type more, think more and understand more of the implications of your actions.
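To make the public/private pair concrete, here is a small Java sketch. All of the names (`Account`, `SavingsAccount`, `AccessDemo`) are invented for illustration, and I am assuming that the "in between" access control mentioned above is `protected`.

```java
// Hypothetical example, not taken from the chapter under discussion.
class Account {
    // private: only code inside Account may read or change this value.
    private int balance;

    // protected: visible to subclasses (and to code in the same package).
    protected String label = "account";

    // public: any code holding an Account may call these methods.
    public void deposit(int amount) {
        if (amount > 0) {
            balance += amount;
        }
    }

    public int getBalance() {
        return balance;
    }
}

class SavingsAccount extends Account {
    public String describe() {
        // Allowed: label is protected, so the subclass can see it.
        return "savings " + label;
    }
}

final class AccessDemo {
    public static void main(String[] args) {
        Account account = new Account();
        account.deposit(50);
        account.deposit(-20); // ignored by the check inside deposit
        System.out.println(account.getBalance());
        // account.balance = 1000; // would not compile: balance is private
        System.out.println(new SavingsAccount().describe());
    }
}
```

The commented-out line is the kind of thing a student tries first: it looks reasonable, but the marked keyword `private` has changed what the owner of the code allows.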

However, it gets harder, because we sometimes have marked/unmarked pairs where the unmarked element is completely invisible. If we didn’t have the need to describe how people could use our code then we wouldn’t need the access modifiers – the absence of public, private or protected wouldn’t signify anything. There are some implicit modes of operation in programming languages that can be overridden with keywords, but the introduction of these keywords doesn’t just illustrate a positive/negative asymmetry (as with big/small or private/public); it illustrates an asymmetry between “something” and “nothing”. Now, the presence of a specific and marked keyword makes it glaringly obvious that there has been an invisible assumption sitting in that spot the whole time.

One of these troublesome word/nothing pairs is found in several languages and consists of the keyword static, with no matching keyword. What do you think the opposite (and pair) of static is? If you’re like most humans, you’d think dynamic. However, not only is this not what this keyword actually means but there is no dynamic keyword that balances it. Let’s look at this in Java:

public static void main(String[] args) {...}

public static int numberOfObjects(int theFirst) {...}

public int getValues() {...}

You’ll see that static keyword twice. Where static isn’t used, however, there’s nothing at all, and this (by its absence) also has a definite meaning: it defines the default expectation of behaviour in the Java programming language. From a teaching perspective, this means that we now have a default context, with a separation between those tokens and concepts that are marked and unmarked, and it becomes easier to see why students will struggle with instance methods and fields (which is what we call things without static) if we start with static, and struggle with the concept of static if we start the other way around! What further complicates this is that every single program we write must contain at least one static method, because it is the starting point for the program’s execution. Even if you don’t want to talk about static yet, you must use it anyway (unless you want to provide the students with some skeleton code or a harness that removes this – but now we’ve put the wizard behind the curtain even more).
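A short Java sketch may help to show the difference between the marked (static) and unmarked (instance) forms side by side. The `Apple` and `StaticDemo` classes are hypothetical and exist only for this illustration.

```java
// Hypothetical class for illustration; none of these names are from the post.
class Apple {
    // static: a single counter that belongs to the class as a whole.
    private static int numberOfApples = 0;

    // no keyword: every Apple object carries its own copy of this field.
    private boolean polished = false;

    Apple() {
        numberOfApples++;
    }

    // static method: called on the class, with no particular Apple needed.
    static int getNumberOfApples() {
        return numberOfApples;
    }

    // instance methods: each call acts on one specific Apple.
    void polish() {
        polished = true;
    }

    boolean isPolished() {
        return polished;
    }
}

final class StaticDemo {
    // The unavoidable static method: execution starts here.
    public static void main(String[] args) {
        Apple first = new Apple();
        Apple second = new Apple();
        first.polish();
        System.out.println(Apple.getNumberOfApples()); // asked of the class
        System.out.println(first.isPolished());        // asked of one apple
        System.out.println(second.isPolished());       // a different apple
    }
}
```

Polishing `first` leaves `second` untouched, while the static counter is shared by both, which is exactly the distinction that the invisible default hides.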

One other point I found very interesting in Flanagan and Smith’s chapter was the discussion of barriers and traps in programming languages, from Thimbleby’s critique of Java (1999). Barriers are the limitations on expressiveness that mean that what you want to say in a programming language can only be said in a certain way or in a certain place – which limits how we can explain the language and therefore affects learnability. As students tend to write their lines of code as and when they think of them, at least initially, these barriers will lead the students to make errors because they haven’t developed the locally valid computational idiom. I could ask for food in German as “please two pieces ham thick tasty” and, while I’ll get some looks, I’ll also get ham. Students hitting a barrier get confusing error messages that are given back to them at a time when they barely have enough framework to understand what these messages mean, let alone how to fix them. No ham for them!

Traps are unknown and unexpected problems, such as those caused by not using the right way to compare two things in a program. In short, it is possible in many programming languages to ask “does this equal that” and return an answer of true or false that does not depend upon the values of this or that, but where they are being stored in memory. This is a trap. It is confusing for the novice to try to work out why the program is telling her that two containers that have the value “3” in them are not the same because they are duplicates rather than aliases for the same entity. These traps can seriously trip someone up as they attempt to form a correct mental model and, in the worst case, can lead to magical or cargo-cult thinking once again. (This is not helped by languages that, despite saying that they will take such-and-such an action, take actions that further undermine consistent mental models without being obvious about it. Sekrit Java String munging, I’m looking at you.)
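Here is a minimal Java sketch of this trap, using strings that hold the value “3”. The class and method names are made up for illustration, and reading the “String munging” remark as a reference to the way Java shares identical string literals is my assumption.

```java
// Hypothetical demonstration class; the names are not from the chapter.
final class EqualityTrap {

    // == on objects asks "are these the very same object in memory?"
    static boolean sameObject(String a, String b) {
        return a == b;
    }

    // equals asks "do these hold the same sequence of characters?"
    static boolean sameValue(String a, String b) {
        return a.equals(b);
    }

    public static void main(String[] args) {
        String first = new String("3");   // one container holding "3"
        String second = new String("3");  // a duplicate, stored elsewhere
        String alias = first;             // a second name for the first one

        System.out.println(sameObject(first, second)); // false: duplicates
        System.out.println(sameValue(first, second));  // true: same contents
        System.out.println(sameObject(first, alias));  // true: an alias

        // Identical literals are shared by the compiler and runtime, so
        // here == happens to say true, which hides the trap from a novice.
        String literalA = "3";
        String literalB = "3";
        System.out.println(sameObject(literalA, literalB)); // true
    }
}
```

The last case is what makes the mental model so hard to form: the same comparison gives different answers depending on how the strings were created.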

This way of thinking about languages is of great interest to me because, instead of talking about usability in an abstract sense, we are now discussing concrete benefits and deficiencies in the language. Is it heavily restrictive on what goes where, such as Pascal’s pre-declaration of variables or Java’s package import restrictions? Does the language have a large number of unbalanced marked/unmarked pairs where one of them is invisible and possibly counterintuitive, such as static? Is it easy to turn a simple English statement into a programmatic equivalent that does not do what was expected?

The authors suggested ways of dealing with this, including teaching students about formal grammars for programming languages – effectively treating this as learning a new language because the grammar, syntax and semantics are very, very different from English. (Suggestions included Wittgenstein’s Sprachspiel, language game, which will be a post for another time.) Another approach is to start from logic and then work forwards, turning this into forms that will then match the programming languages and giving us a Rosetta stone between English speakers and program speakers.

I have found the whole book very interesting so far and, obviously, so too this chapter. Identifying the problems and their locations, regrettably, is only the starting point. Now I have to think about ways to overcome this, building on what these and other authors have already written.

Imagine that you are a raw potato…

Posted: October 27, 2012 Filed under: Education | Tags: community, design, education, educational research, feedback, Generation Why, higher education, in the student's head, principles of design, resources, student perspective, teaching, teaching approaches, thinking, threshold concepts, tools Leave a comment

The words in the title of this post, surprisingly, are the first words in the Editors’ Preface to Land, Meyer and Smith’s 2008 edited book “Threshold Concepts within the Disciplines”. Our group has been looking at the penetration of certain ideas through the discipline, examining how much the theory of social constructivism accompanies the practice of group work, for example, or, as in this case, seeing how many people identify threshold concepts in what they are trying to teach. Everyone who teaches first year Computer Science knows that some ideas seem to be sticking points and Meyer and Land’s two papers on “Threshold Concepts and Troublesome Knowledge” (2003 and 2005) provide a way of describing these sticking points by characterising why these particular aspects are hard – but also by identifying the benefits when someone actually gets it.

Threshold concept theory, in the words of Cousin, identifies “the kind of complicated learner transitions learners undergo” and identifies portals that change the way that you think about a given discipline. This is deeply related to our goal of “Thinking as a discipline practitioner” because we must assume that a sound practitioner has passed through these portals and has transformed the way that they think in order to be able to practise correctly. Put simply, being a mathematician is more than plugging numbers into formulae.

As you can read, and I’ve mentioned in a previous post, threshold concepts are transformative, integrative, irreversible and (unfortunately) troublesome. Once you have passed through the hurdle then a new vista opens up before you but, my goodness, sometimes that’s a steep hurdle and, unsurprisingly, this is where many students fall.

The potato example in the preface describes the irreversible chemical process of cooking and how the way that we can use the potato changes at each stage. Potatoes, thankfully unaware, have no idea of what is going on nor can they oscillate on their pathway to transformation. Students, especially in the presence of the challenging, can and do oscillate on their transformational road. Anyone who teaches has seen this where we make great strides on one day and, the next, some of the progress ebbs away because a student has fallen back to a previous way of thinking. However, once we have really got the new concept to stick, then we can move forward on the basis of the new knowledge.

Threshold concepts can also be thought of as marking the boundary of areas within a discipline and, in this regard, have special interest to teachers and learners alike. Being able to subdivide knowledge into smaller sections to develop mastery that then allows further development makes the learning process easier to stage and scaffold. However, the looming and alien nature of the portal between sections introduces a range of problems that will apply to many of our students, so we have to be ready to assist at these key points.

The book then provides a collection of chapters that discuss how these threshold concepts manifest inside different disciplines and in what forms the alien and troublesome nature can appear. It’s unsurprising again, for anyone teaching Computer Science or programming, that there are a large number of fundamental concepts in programming that are considered threshold concepts. These include the notion of program state, the collection of data that describes the information within a program. While state is an everyday concept (the light is on, the lift is on level 4), the concentration on state, the limitations and implications of manipulation and the new context raise this banal and everyday concept into the threshold area. A large number of students can happily tell you which floor the lift is on, but cannot associate this physical state with the corresponding programmatic state in their own code.
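As a rough illustration of how the lift’s everyday state turns into programmatic state, here is a small Java sketch. The `Lift` class, its fields and the rule that the lift will not move with its doors open are all assumptions of mine, chosen only to show state and the limits on manipulating it.

```java
// Hypothetical model of a lift; the names and rules are illustrative only.
final class Lift {
    // The state: everything the program knows about the lift right now.
    private int level;
    private boolean doorsOpen;

    Lift(int startLevel) {
        level = startLevel;
        doorsOpen = false;
    }

    // Changing state is restricted: assume a lift cannot move with open doors.
    void moveTo(int newLevel) {
        if (!doorsOpen) {
            level = newLevel;
        }
    }

    void openDoors() {
        doorsOpen = true;
    }

    void closeDoors() {
        doorsOpen = false;
    }

    // Asking "which floor is the lift on?" is just reading the state.
    int getLevel() {
        return level;
    }

    public static void main(String[] args) {
        Lift lift = new Lift(1);
        lift.moveTo(4);
        System.out.println("The lift is on level " + lift.getLevel());
        lift.openDoors();
        lift.moveTo(2); // refused, because the doors are open
        System.out.println("The lift is on level " + lift.getLevel());
    }
}
```

Both print statements report level 4: the second request to move changes nothing, because the current state (doors open) limits what can happen next.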

Until students master some of these concepts, their questions will always appear facile, potentially ill-formed and (regrettably) may be interpreted as lazy. Flanagan and Smith raise an interesting point in that programming languages, which are written in pseudo-English with a precise but alien grammar, may be leading to a linguistic problem, where the translation to a comprehensible form is one of the first threshold concepts that a student faces. As an example, consider this simple English set of instructions:

There are 10 apples in the basket. Take each apple out of the basket, polish it, and place it in the sink.

Now let’s look at what the ‘take each apple’ instruction looks like in the C programming language.

for (int i = 0; i < numberOfApples; i++) {

// commands here

}

This is second nature to me to read but a number of you have just looked at that and gone ‘huh’? If you don’t learn what each piece does, understand its importance and can then actually produce it when asked then the risk is that you will just reproduce this template whenever I ask you to count apples. However, there are two situations that humans understand readily: “do something so many times” and “do something UNTIL something happens”. In programs we write these two cases differently – but it’s a linguistic distinction that, from Flanagan and Smith’s work “From Playing to Understanding”, correlates quite well with an ability to pick the more appropriate way of writing the program. If the language itself is the threshold, and for some students it certainly appears that it is, then we are not even able to assume that the students will reach the first stage of ‘local thresholds’ found within the subdomain itself, they are stuck on the outside reading a menu in a foreign language trying to work out if it says “this way to the toilet”.
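To show the two situations next to each other, here is a minimal sketch in Java, where the loop syntax matches the C shown above. The class name, the method names and the idea of a sink with limited capacity are my own inventions for the sake of the example.

```java
// Hypothetical example: two ways of emptying a basket of apples.
final class AppleBasket {

    // "Do something so many times": a counted for loop.
    static int polishAll(int numberOfApples) {
        int polished = 0;
        for (int i = 0; i < numberOfApples; i++) {
            polished++; // stands in for: take out, polish, place in the sink
        }
        return polished;
    }

    // "Do something UNTIL something happens": keep going until the sink
    // is full or the basket is empty, whichever comes first.
    static int polishUntilSinkFull(int numberOfApples, int sinkCapacity) {
        int inSink = 0;
        while (numberOfApples > 0 && inSink < sinkCapacity) {
            numberOfApples--;
            inSink++;
        }
        return inSink;
    }

    public static void main(String[] args) {
        System.out.println(polishAll(10));
        System.out.println(polishUntilSinkFull(10, 4));
    }
}
```

Note that the while loop states when to continue rather than when to stop, so the English “until the sink is full” has to be turned inside out before it can be typed, which is a small linguistic hurdle in its own right.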

Such linguistic thresholds will make students appear very, very slow and this is a problem. If you ask a student a question and the words make no sense in the way that you’re presenting them, then they will either not respond (if they have a choice) as they don’t know what you asked, they will answer a different question (by taking a stab at the meaning) or they will ask you what you mean. If someone asks you what you mean when, to you, the problem is very simple, we run the risk of throwing up a barrier between teacher and learner, the teacher assuming that the learner is stupid or lazy, the student assuming that the teacher either doesn’t know what they’re saying or doesn’t care about them.

I’ll write more on the implications of all of this tomorrow.

Sources of Knowledge: Stickiness and the Chasm Between Theory and Practice.

Posted: October 21, 2012 Filed under: Education | Tags: blogging, collaboration, design, education, educational research, higher education, in the student's head, principles of design, reflection, resources, student perspective, teaching, teaching approaches, thinking, tools, universal principles of design, vygotsky Leave a comment

My head is still full of my current crop of research papers and, while I can’t go into details, I can discuss something that I’m noticing more and more as I read into the area of Computer Science Education. Firstly, how much I have left to learn and, secondly, how difficult it is sometimes to track down ideas and establish novelty, provenance and worth. I read Mark Guzdial’s blog a lot because Mark has spent a lot of time being very clever in this area (Sorry, Mark, it’s true) but he is also an excellent connector of the reader to good sources of information, as well as reminding us when something pops up that is effectively a rehash of an old idea. This level of knowledge and ability to discuss ideas is handy when we keep seeing some of the same old ideas pop up, from one source or another, over time. I’ve spoken before about how the development of the mass-accessible library didn’t end the importance of the University or school, and Mark makes a similar note in a recent post on MOOCs when he points us to an article on mail delivery lessons from a hundred years before and how this didn’t lead to the dissolution of the education system. Face-to-face continues to be important, as do bricks and mortar, so while the MOOC is a fascinating new tool and methodology with great promise, the predicted demise of the school and college may (once again) turn out to be premature.

If you’ve read Malcolm Gladwell’s “The Tipping Point”, you’ll be familiar with the notion that ideas need to have certain characteristics, and certain human agents, before they become truly persuasive and widely adopted. If you’ve read Dawkins’ “The Selfish Gene” (published over a decade before) then you’ll understand that Gladwell’s book would be stronger if it recognised a debt to Dawkins’ coining of the term meme, for self-replicating beliefs and behaviours. Gladwell’s book, as a source, is a fairly unscientific restatement of some existing ideas with a useful narrative structure, despite depending on some now questionable case studies. In many ways, it is an example of itself because Gladwell turned existing published information into a form where, with his own additions, he has identified a useful way to discuss certain systems of behaviour. Better still, people do (still) read it.

(A quick use of Google Trends shows me that people search for “The Tipping Point” roughly twice as much as “The Selfish Gene” but for “Richard Dawkins” twice as much as “Malcolm Gladwell”. Given Dawkins’ very high profile in belligerent atheism, this is not overly surprising.)

Gladwell identified the following three rules of epidemics (in terms of the spread of ideas):

- The Law of the Few: There is a small group of people who make a big difference to the proliferation of an idea. The mavens accumulate knowledge and know a lot about the area. The connectors are the gregarious and sociable people who know a lot of other people and, in Gladwell’s words, “have a gift for bringing the world together”. The final type of people are salespeople or (more palatably) persuaders, the people who convince us that something is a good idea. Gladwell’s thesis is that it is not just about the message, but that the messenger matters.

- The Stickiness Factor: Ideas have to be memorable in order to spread effectively so there is something about the specific content of the message that will determine its impact. Content matters.

- The Power of Context: We are all heavily influenced by and sensitive to our environment. Context matters.

Dawkins’ meme is a very sticky idea and, while there’s a lot of discussion about the Selfish Gene, we now have the field of memetics and the fact that the word ‘meme’ is used (almost correctly) thousands, if not millions, of times a day. Every time that you’ve seen a prawn running on a treadmill while Yakety Sax plays, you can think of Richard Dawkins and thank him for giving you a word to describe this.

My early impressions of some of the problems with the representation of earlier ideas in CS Ed, as if they are new, make me wonder if there is a fundamental problem with the stickiness of some of these ideas. I would argue that the most successful educational researchers, and I’ve had the privilege to see some of them, are in fact strong combinations of Gladwell’s few. Academics must be, by definition, mavens, information specialists in our domains. We must be able to reach out to our communities and spread our knowledge – is this enough for us to be called connectors? We have to survive peer review, formal discussions and criticism and we have to be able to argue our ideas, on the reasonable understanding that it is our ideas and not ourselves that are potentially at fault. Does this also make us persuaders? If we can find all of these “few” in our community, and we are already a community of the few, where does it leave us in terms of explaining why we, in at least some areas, keep rehashing the same old ideas? Do we fail to appreciate the context of those colleagues we seek to reach or are our ideas just not sticky enough? (Context is crucial here, in my opinion, because it is very easy to explain a new idea in a way that effectively says “You’ve been doing it wrong all these years. Now fix it or you’re a bad person.” This is going to create a hostile environment. Once again, context matters but this time it is in terms of establishing context.)

I wonder if this is compounded in Computer Science by the ability to separate theory from practice, and to draw in new practice from both an educational research focus and an industrial focus? To explain why teamwork actually works, we move into social constructivism and to Vygotsky, via Ben-Ari in many cases, Bandura, cognitive apprenticeship – that’s an educational research focus. To say that teamwork works, because we’ve got some good results from industry and we’re supported by figures such as Brooks, Boehm and Humphrey and their case studies in large-scale development – that’s an industrial focus. The practice of teamwork is sticky, that ship has sailed in software development, but does the stickiness of the practice transfer to the stickiness of the underlying why? The answer, I believe, is ‘no’ and I’m beginning to wonder if a very sticky “what” is actually acting against the stickiness of the “why”. Why ask “why?” when you know that it works? This seems to be a running together of the importance of stickiness and the environment of the CS Ed researcher as a theoretical educationalist, working in a field that has a strong industrial focus, with practitioner feedback and accreditation demands pushing a large stream of “what to do”.

It has been a thoughtful week and, once again, I admit my novice status here. Is this the real problem? If so, how can we fix it?

Authenticity and Challenge: Software Engineering Projects Where Failure is an Option

Posted: October 17, 2012 Filed under: Education | Tags: authenticity, collaboration, community, curriculum, design, education, educational problem, fred brooks, Generation Why, higher education, in the student's head, learning, principles of design, reflection, resources, sigcse, software engineering, student perspective, teaching, teaching approaches, thinking, tools, universal principles of design 2 Comments

It’s nearly the end of semester and that means that a lot of projects are coming to fruition – or, in a few cases, are still on fire as people run around desperately trying to put them out. I wrote a while ago about seeing Fred Brooks at a conference (SIGCSE) and his keynote on building student projects that work. The first four of his eleven basic guidelines were:

- Have real projects for real clients.

- Groups of 3-5.

- Have lots of project choices.

- Groups must be allowed to fail.

We’ve done this for some time in our fourth year Software Engineering option but, as part of a “Dammit, we’re Computer Science, people should be coming to ask about getting CS projects done” initiative, we’ve now changed our third year SE Group Project offering from a parallel version of an existing project to real projects for real clients, although I must confess that I have acted as a proxy in some of them. However, the client need is real, the brief is real, there are a lot of projects on the go and the projects are so large and complex that:

- Failure is an option.

- Groups have to work out which part they will be able to achieve in the 12 weeks that they have.

For the most part, this approach has been a resounding success. The groups have developed their team maturity faster, they have delivered useful and evolving prototypes, they have started to develop entire tool suites and solve quite complex side problems because they’ve run across areas that no-one else is working in and, most of all, the pride that they are taking in their work is evident. We have lit the blue touch paper and some of these students are skyrocketing upwards. However, let me not lose sight of one of our biggest objectives, that we be confident that these students will be able to work with clients. In the vast majority of cases, I am very happy to say that I am confident that these students can make a useful, practical and informed contribution to a software engineering project – and they still have another year of projects and development to go.

The freedom that comes with being open with a client about the possibility of failure cannot be overvalued. This gives both you and the client a clear understanding of what is involved: we do not need to shield the students, nor does the client have to worry about how their satisfaction with software will influence things. We scaffold carefully but we have to allow for the full range of outcomes. We, of course, expect the vast majority of projects to succeed but this experience will not be authentic unless we start to pull away the scaffolding over time and see how the students stand by themselves. We are not, by any stretch, leaving these students in the wilderness. I’m fulfilling several roles here: proxying for some clients, sharing systems knowledge, giving advice, mentoring and, every so often, giving a well-needed hairy eyeball to a bad idea or practice. There is also the main project manager and supervisor who is working a very busy week to keep track of all of these groups and provide all of what I am and much, much more. But, despite this, sometimes we just have to leave the students to themselves and it will, almost always, dawn on them that problem solving requires them to solve the problem.

I’m really pleased to see this actually working because it started as a brainstorm of my “Why aren’t we being asked to get involved in more local software projects” question and bouncing it off the main project supervisor, who was desperate for more authentic and diverse software projects. Here is a distillation of our experience so far:

- The students are taking more ownership of the projects.

- The students are producing a lot of high quality work, using aggressive prototyping and regular consultation, staged across the whole development time.

- The students are responsive and open to criticism.

- The students have a better understanding of Software Engineering as a discipline and a practice.

- The students are proud of what they have achieved.

None of this should come as much of a surprise but, in a 25,000+ person University, there are a lot of little software projects on the 3-person team 12 month scale, which are perfect for two half-year project slots because students have to design for the whole and then decide which parts to implement. We hope to give these projects back to them (or similar groups) for further development in the future because that is the way of many, many software engineers: the completion, extension and refactoring of other people’s codebases. (Something most students don’t realise is that it only takes a very short time for a codebase you knew like the back of your hand to resemble the product of alien invaders.)

I am quietly confident, and hopeful, that this bodes well for our Software Engineers and that we will start to see them all closely bunched towards the high achieving side of the spectrum in terms of their ability to practice. We’re planning to keep running this in the future because the early results have been so promising. I suppose the only problem now is that I have to go and find a huge number of new projects for people to start on for 2013.

As problems go, I can certainly live with that one!

Industry Speaks! (May The Better Idea Win)

Posted: October 16, 2012 Filed under: Education | Tags: alan noble, community, data visualisation, design, education, entrepreneurship, Generation Why, grand challenge, higher education, learning, measurement, MIKE, principles of design, teaching, teaching approaches, thinking, tools, universal principles of design Leave a comment

Alan Noble, Director of Engineering for Google Australia and an Adjunct Professor with my Uni, generously gave up a day today to give a two-hour lecture on distributed systems and scale to our third-year Distributed Systems course, and another two-hour lecture on entrepreneurship to my Grand Challenge students. Industry contact is crucial for my students because the world inside the Uni and the world outside the Uni can be very, very different. While we try to keep industry contact high in later years, and we’re very keen on authentic assignments that tackle real-world problems, we really need the people who are working for the bigger companies to come in and tell our students what life would be like working for Google, Microsoft, Saab, IBM…

My GC students have had a weird mix of lectures that have been designed to advance their maturity in the community and as scientists, rather than their programming skills (although that’s an indirect requirement), but I’ve been talking from a position of social benefit and community-focused ethics. It is essential that they be exposed to companies, commercialisation and entrepreneurship as it is not my job to tell them who to be. I can give them skills and knowledge but the places that they take those are part of an intensely personal journey and so it’s great to have an opportunity for Alan, a man with well-established industry and research credentials, to talk to them about how to make things happen in business terms.

The students I spoke to afterwards were very excited and definitely saw the value of it. (Alan, if they all leave at the end of this year and go to Google, you’re off the Christmas Card list.) Alan focused on three things: problems, users and people.

Problems: Most great companies find a problem and solve it but, first, you have to recognise that there is a problem. This sometimes just requires putting the right people in front of something to find out what these new users see as a problem. You have to be attentive to the world around you but being inventive can be just as important. Something Alan said really resonated with me in that people in the engineering (and CS) world tend to solve the problems that they encounter (do it once manually and then set things up so it’s automatic thereafter) and don’t necessarily think “Oh, I could solve this for everyone”. There are problems everywhere but, unless we’re looking for them, we may just adapt and move on, instead of fixing the problem.

Users: Users don’t always know what they want yet (the classic Steve Jobs approach), they may not ask for it or, if they do ask for something, what they want may not yet be available for them. We talked here about a lot of current solutions to problems but there are so many problems to fix that would help users. Simultaneous translation, for example, over telephone. 100% accurate OCR (while we’re at it). The risk is always that when you offer the users the idea of a car, all they ask for is a faster horse (after Henry Ford). The best thing for you is a happy user because they’re the best form of marketing – but they’re also fickle. So it’s a balancing act between genuine user focus and telling them what they need.

People: Surround yourself with people who are equally passionate! Strive for a culture of innovation and getting things done. Treasure your agility as a company and foster it if you get too big. Keep your units of work (teams) smaller if you can and match work to the team size. Use structures that encourage a short distance from top to bottom of the hierarchy, which allows for ideas to move up, down and sideways. Be meritocratic and encourage people to contest ideas, using facts and articulating their ideas well. May the Better Idea Win! Motivating people is easier when you’re open and transparent about what they’re doing and what you want.

Alan then went on to speak a lot about execution, the crucial step in taking an idea and having a successful outcome. Alan had two key tips.

Experiment: Experiment, experiment, experiment. Measure, measure, measure. Analyse. Take it into account. Change what you’re doing if you need to. It’s ok to fail but it’s better to fail earlier. Learn to recognise when your experiment is failing – and don’t guess, experiment! Here’s a quote that I really liked:

When you fail a little every day, it’s not failing, it’s learning.

Risk goes hand-in-hand with failure and success. Entrepreneurs have to learn when to call an experiment and change direction (pivot). Pivot too soon, you might miss out on something good. Pivot too late, you’re in trouble. Learning how to be agile is crucial.

Data: Collect and scrutinise all of the data that you get – your data will keep you honest if you measure the right things. Be smart about your data and never copy it when you can analyse it in situ.

(Alan said a lot more than this over 2 hours but I’m trying to give you the core.)

Alan finished by summarising all of this as his Three As of Entrepreneurship, then why we seem to be hitting an entrepreneurship growth spurt in Australia at the moment. The Three As are:

- Audit your data

- Having Audited, Admit when things aren’t working

- Once admitted, you can Adapt (or pivot)

As to why we’re seeing a growth of entrepreneurship, Australia has a population who are some of the highest early adopters on the planet. We have a high technical penetration, over 20,000,000 potential users, a high GDP and we love tech. 52% of Australians have smart phones and we had so many mobile phones, pre-smart, that it was just plain crazy. Get the tech right and we will buy it. Good tech, however, is hardware+software+user requirement+getting it all right.

It’s always a pleasure to host Alan because he communicates his passion for the area well but he also puts a passionate and committed face onto industry, which is what my students need to see in order to understand where they could sit in their soon-to-be professional community.

Dealing with Plagiarism: Punishment or Remediation?

Posted: October 15, 2012 Filed under: Education | Tags: advocacy, community, curriculum, design, education, educational problem, educational research, ethics, feedback, Generation Why, higher education, in the student's head, learning, measurement, plagiarism, principles of design, reflection, student perspective, teaching, teaching approaches, thinking, tools, work/life balance 6 Comments

I have written previously about classifying plagiarists into three groups (accidental, panicked and systematic), trying to get the student to focus on the journey rather than the objective, and how overwork can produce situations in which human beings do very strange things. Recently, I was asked to sit in on another plagiarism hearing and, because I’ve been away from the role of Assessment Coordinator for a while, I was able to look at the process with an outsider’s eye, a slightly more critical view, to see how it measures up.

Our policy is now called an Academic Honesty Policy and is designed to support one of our graduate attributes: “An awareness of ethical, social and cultural issues within a global context and their importance in the exercise of professional skills and responsibilities”. The principles are pretty straight-forward for the policy:

- Assessment is an aid to learning and involves obligations on the part of students to make it effective.

- Academic honesty is an essential component of teaching, learning and research and is fundamental to the very nature of universities.

- Academic writing is evidence-based, and the ideas and work of others must be acknowledged and not claimed or presented as one’s own, either deliberately or unintentionally.

The policy goes on to describe what student responsibilities are, why they should do the right thing for maximum effect of the assessment and provides some handy links to our Writing Centre and applying for modified arrangements. There’s also a clear statement of what not to do, followed by lists of clarifications of various terms.

Sitting in on a hearing, looking at the process unfolding, I can review the overall thrust of this policy and be aware that it has been clearly identified to students that they must do their own work but, reading through the policy and its implementation guide, I don’t really see what it provides to sufficiently scaffold the process of retraining or re-educating students if they are detected doing the wrong thing.

There are many possible outcomes from the application of this policy, starting with “Oh, we detected something but we turned out to be wrong”, going through “Well, you apparently didn’t realise so we’ll record your name for next time, now submit something new” (misunderstanding), “You knew what you were doing so we’re going to give you zero for the assignment and (will/won’t) let you resubmit it (with a possible mark cap)” (first offence), “You appear to make a habit of this so we’re giving you zero for the course” (second offence) and “It’s time to go.” (much later on in the process after several confirmed breaches).

Let me return to my discussions on load and the impact on people from those earlier posts. If you accept my contention that the majority of plagiarism/cheating is minor omission or last minute ‘helmet fire’ thinking under pressure, then we have to look at what requiring students to resubmit will do. In the case of the ‘misunderstanding’, students may also be referred to relevant workshops or resources to attend in order to improve their practices. However, considering that this may have occurred because the student was under time pressure, we have just added more work and a possible requirement to go and attend extra training. There’s an old saying from Software Development called Brooks’s Law:

“…adding manpower to a late software project makes it later.” (Brooks, Mythical Man Month, 1975)

In software it’s generally because there is ramp up time (the time required for people to become productive) and communication overheads (which increase with the square of the number of people). There is time required for every assignment that we set which effectively stands in for the ramp-up and, as plagiarising/cheating students have probably not done the requisite work before (or could just have completed the assignment), we have just added extra ramp-up into their lives for any re-issued assignments and/or any additional improvement training. We have also greatly increased the communication burden because the communication between lecturers and peers has implicit context based on where we are in the semester. All of the student discussion (on-line or face-to-face) from points A to B will be based around the assignment work in that zone and all lecturing staff will also have that assignment in their heads. A significantly out-of-sequence assignment not only isolates the student from their community, it increases the level of context switching required by the staff, decreasing the amount of effective time that they have with the student and increasing the amount of wall-clock time. Once again, we have increased the potential burden on a student that, we suspect, is already acting this way because of over-burdening or poor time management!
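For anyone who wants to see the “square of the number of people” growth in numbers, here is a minimal sketch. It assumes the usual reading of Brooks, that every pair of people on a team may need to talk to each other, which gives n(n-1)/2 channels for n people. The function name is my own invention for illustration.

```python
def communication_channels(team_size: int) -> int:
    """Count distinct person-to-person channels in a team.

    Assumes every pair of people may need to communicate,
    which gives n * (n - 1) / 2 channels for n people.
    """
    if team_size < 0:
        raise ValueError("team_size must be non-negative")
    return team_size * (team_size - 1) // 2


if __name__ == "__main__":
    # Adding people grows the channel count roughly with the square of team size.
    for n in (3, 5, 10):
        print(f"{n} people -> {communication_channels(n)} channels")
```

Going from a group of 3 to a group of 5 more than triples the number of possible conversations, which is the overhead that the out-of-sequence student is effectively paying alone.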

Later stages in the policy increase the burden on students by either increasing the requirement to perform at a higher level, due to the reduction of available marks through giving a zero, or by removing an entire course from their progress and, if they wish to complete the degree, requiring them to overload or spend an additional semester (at least) to complete their degree.

My question here is, as always, are any of these outcomes actually going to stop the student from cheating or do they risk increasing the likelihood of either the student cheating or the student dropping out? I completely agree with the principles and focus of our policy, and I also don’t believe that people should get marks for work that they haven’t done, but I don’t see how increasing burden is actually going to lead to the behaviour that we want. (Dan Pink on TED can tell you many interesting things about motivation, extrinsic factors and cognitive tasks, far more effectively than I can.)

This is, to many people, not an issue because this kind of policy is really treated as being punitive rather than remedial. There are some excellent parts in our policy that talk about helping students but, once we get beyond the misunderstanding, this language of support drops away and we head swiftly into the punitive with the possibility of controlled resubmission. The problem, however, is that we have evidence that light punishment is interpreted as a licence to repeat the action, because it doesn’t discourage. This does not surprise me because we have made such a risk/reward strategy framing with our current policy. We have resorted to a punishment modality and, as a result, we have people looking at the punishments to optimise their behaviour rather than changing their behaviour to achieve our actual goals.

This policy is a strange beast as there’s almost no way that I can take an action under the current approach without causing additional work to students at a time when it is their ability to handle pressure that is likely to have led them here. Even if it’s working, and it appears that it does, it does so by enforcing compliance rather than actually leading people to change the way that they think about their work.

My conjecture is that we cannot isolate the problems to just this policy. This spills over into our academic assessment policies, our staff training and our student support, and the key difference between teaching ethics and training students in ethical behaviour. There may not be a solution in this space that meets all of our requirements but if we are going to operate punitively then let us be honest about it and not over-burden the student with remedial work that they may not be supported for. If we are aiming for remediation then let us scaffold it properly. I think that our policy, as it stands, can actually support this but I’m not sure that I’ve seen the broad spread of policy and practice that is required to achieve this desirable, but incredibly challenging, goal of actually changing student behaviour because the students realise that it is detrimental to their learning.

The Earth Goes Around the Sun or the Sun Goes Around the Earth: Your Reaction Reflects Your Investment

Posted: October 8, 2012 Filed under: Education | Tags: advocacy, collaboration, community, design, education, educational problem, educational research, heliocentricity, in the student's head, reflection, student perspective, teaching, teaching approaches, thinking, threshold concepts, tools Leave a comment

There is a rather good new BBC version of Sherlock Holmes, called Sherlock because nobody likes confusion, where Holmes is played by Benedict Cumberbatch. One of the key points about Holmes’ focus is that it comes at a very definite cost. At one point, Cumberbatch’s Holmes is being lightly mocked because he was unaware that the Earth goes around the Sun. He is completely unfazed by this (he may have known it but he deleted it) because it’s not important to him. This extract is from the episode “The Great Game”:

Sherlock Holmes: Listen: [gets up and points to his head] This is my hard-drive, and it only makes sense to put things in there that are useful. Really useful. Ordinary people fill their heads with all kinds of rubbish, and that makes it hard to get at the stuff that matters! Do you see?

John Watson: [brief silence; looks at Sherlock incredulously] But it’s the solar system!

Sherlock Holmes: [extremely irritated by now] Oh, hell! What does that matter?! So we go around the sun! If we went around the moon or round and round the garden like a teddy bear, it wouldn’t make any difference! All that matters to me is the work!

Sherlock’s (self-described) sociopathy and his focus on his work make heliocentricity an irrelevant detail. But this clearly indicates his level of investment in his work. All the versions of Sherlock have extensive catalogues of tobacco types, a detailed knowledge of chemistry and an unerring eye for detail. If someone had walked up to him and said “Captain Ross smokes Greenseas tobacco” and they were wrong then Sherlock’s agitation (and derision) would be directed at them: worse if he had depended upon this fact to draw a conclusion.

We are all well aware that such indifference to whether Sun or Earth occupies the centre of the Solar System has not always been received so sanguinely. As it turns out, while there is widespread acceptance of the fact of heliocentricity, there is still considerable opposition in some quarters and, in the absence of scientific education, it is easy to see why people would naturally assume by simple (unaided) observation that the Sun is circling us, rather than the reverse. You have to accept a number of things before heliocentricity moves from being a sound mathematical model for calculation (as Cardinal Bellarmine did when discussing it with Galileo, because it so well explains things hypothetically) to the acceptance of it as the model of what actually occurs (as it makes the associated passages of scripture much harder to deal with). And the challenge of accepting this often lies in the degree to which that acceptance will change your world.

Your reaction reflects your investment.

Sherlock didn’t care either way. His world was not shaken by which orbited what because it was not a key plank of his being, nor did it force him to revise anything that he cared about. Cardinal Bellarmine, in discussions with Galileo, had a much greater investment, acting as he was on behalf of the Church and, one can only assume, firm in his belief in scripture while retaining his sensibilities to be able to work in science (Bellarmine was a Jesuit and worked predominantly in theology). As he is quoted:

If there were a real proof that the Sun is in the center of the universe, that the Earth is in the third sphere, and that the Sun does not go round the Earth but the Earth round the Sun, then we should have to proceed with great circumspection in explaining passages of Scripture which appear to teach the contrary, and we should rather have to say that we did not understand them than declare an opinion false which has been proved to be true. But I do not think there is any such proof since none has been shown to me.

It’s easy to think that these battles are over but, of course, as we deal with one challenging issue, another arises. This battle is not actually over. The 2006 General Social Survey showed that 18.3% of those people surveyed thought that the Sun went around the Earth, and 8% didn’t know. (0.1% refused. I think I’ve read his webpage.) (If you’re interested, all of the GSS data and its questions are available here. I hope to run the more recent figures to see how this has trended but I’ve run out of time this week.) That’s a survey run in 2006 in the US.

Why do just over a quarter of the US population (or why did, given that this is 2006) not know about the Earth going around the Sun? As an educator, I have to look at this because if it’s because nobody told them, then, boy, do we have some ‘splaining to do. If it’s because they deleted it like Sherlock, then we have some seriously focused people or a lot of self-deleting sociopaths. (This is also not that likely a conjecture.) If it’s because someone told them that believing this meant that they had to spit in the face of one god or another, then we are seeing the same old combat between reaction and investment. There are a number of other correlations on this that, fortunately, indicate that this might be down to poor education, as knowledge of heliocentricity appears to correlate with the number of words that people got correct in the vocabulary test. Also, the number of people who didn’t accept heliocentricity decreased with increasing education. (Yes, that can also be skewed culturally as well but the large-representation major religions embrace education.)

So, and it’s a weird straw to clutch at and I need to dig more, it doesn’t appear that heliocentricity is, in the majority of cases, being rejected because of a strong investment in an antithetical stance, it’s just a lack of education or retention of that information. So, maybe we can put this one down, give more money to science teachers and move on.

But let me get to the meat of my real argument here, which is that a suitably alien or counter-intuitive proposition will be met with hostility, derision and rejection. When things matter, for whatever reason, we take them more seriously. When we take things so seriously that they shape how we live, consciously or not, then there is a problem when those underpinnings are challenged. We can make empty statements like “well, I suppose that works in theory” when the theory forces us to accept that we have been wrong, or at least walking on the less righteous path. When someone says to me “well, that’s fine in theory” I know what they are really saying. I’ve heard it before from Cardinal Bellarmine and it has gained no more weight since then. So it’s hard? Our job is hard. Constantly questioning is hard, tiring and often unrewarding. Yet, without it, we would have achieved very, very little.

People of all colours and races are equal? Unthinkable! Against our established texts! Supported by pseudo-science and biased surveys! They appear to be more similar than we thought! But they can’t marry! Wait, they can! They are equal! How can you think that they’re not?!

How many times do we have to go through this? We are playing out the same argument over and over again: when it matters enough (or too much), we resist to the point where we are being stubborn and (often) foolish.

And, that, I believe is where we stand in the middle of all of these revelations of unconscious and systematic bias against women that I referred to in my last post. People who have considered themselves fair and balanced, objective and ethical, now have to question whether they have been operating in error over all these years – if they accept the research published in PNAS and all of the associated areas. Suddenly, positive discrimination hiring policies become obvious as they now allow the hiring of people who appear to be the same, that the evidence now says have most likely been undervalued. This isn’t disadvantaging a man, this is being fair to the best candidate.

When presented with something challenging I find it helpful to switch the focus or the person involved. Would I be so challenged if it were to someone else? If the new revelation concerned these people or those people? How would I feel about it if I read it in the paper? Would it matter if someone I trusted said it to me? Where are my human frailties and how can I account for them?

But, of course, as an educator, I have to think about how to frame my challenging and heretical information so that I don’t cause a spontaneous rejection that will prevent further discussion. I have to provide an atmosphere that exemplifies good practice, a world where people eventually wonder why this part of the world seems to be better, fairer and more reasonable than that part of the world. Then, with any luck, they take their questioning and new thinking to another place and we seed better things.

Bad Writing, Bad Future?

Posted: October 5, 2012 Filed under: Education | Tags: advocacy, community, curriculum, design, education, educational problem, educational research, in the student's head, measurement, principles of design, student perspective, teaching, teaching approaches, thinking, tools, universal principles of design Leave a comment

I was recently reading an article on New Dorp public high school on Staten Island. New Dorp had, until recently, a low graduation rate that was among the bottom 2,000 across the United States. (If you’re wondering, there were 98,817 public schools in the US in 2009-10, according to the National Center for Education Statistics, 65,840 being secondary. So New Dorp was in the bottom 2% across all schools and bottom 3% of secondary.) New Dorp’s primary intake is from poor and working-class families and, in 2006, 82% of the freshmen entered the school with a reading level lower than the required grade.
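If you’d like to check the back-of-the-envelope percentages in that parenthetical, here is a small sketch. It simply divides the “bottom 2,000” by each school count quoted above; the variable and function names are mine, and it assumes all 2,000 schools are being compared against each total in turn.

```python
# Figures as quoted in the post (NCES, 2009-10).
BOTTOM_N = 2_000
ALL_PUBLIC_SCHOOLS = 98_817
SECONDARY_SCHOOLS = 65_840


def bottom_share(bottom_n: int, total: int) -> float:
    """Return bottom_n as a percentage of total."""
    if total <= 0:
        raise ValueError("total must be positive")
    return 100 * bottom_n / total


if __name__ == "__main__":
    print(f"All public schools: {bottom_share(BOTTOM_N, ALL_PUBLIC_SCHOOLS):.1f}%")
    print(f"Secondary schools:  {bottom_share(BOTTOM_N, SECONDARY_SCHOOLS):.1f}%")
```

Both results round to the 2% and 3% used in the text.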

However, it was bad writing that was ultimately identified as the main obstacle to success: students couldn’t turn their thoughts into readable essays. Because this appeared to be the primary difference between successful and unsuccessful students, Deirdre DeAngelis (the principal) and the faculty decided that writing would become a focus. If nothing else, New Dorp’s students would learn to write well.

And, apparently, it has paid off. Pass rates are up, repeating rates have dropped, scores are higher than any previous class. Pre-college enrolments are up but, interestingly, the demographic makeup has remained the same and graduation rates have leapt from 63% to a projected 80% this Spring. (Yes, I know, projected data. I’ll try to check this again after it’s happened.)

The article, which I encourage you to read, goes on to discuss why this change of focus was so important. There was resistance – the usual response of “We’re doing our job, but the students aren’t smart enough (or are too lazy)” from certain groups of educators. Yet, students responded with increased participation and high attendance, rewarding efforts and silencing critics. What was interesting is that analysis of why students couldn’t write indicated that most could decipher the underlying texts and could comprehend sentences but had major deficiencies in the use of key parts of speech. Simple sentences were fine but compound sentences and sentences with dependent clauses were hard to decipher and very difficult to write. How can you use ‘although’ correctly when you don’t know what it means?

As understanding of speech grew, so did reading comprehension. Classroom discussion encouraged students to listen, think and speak more precisely, giving them something to repeat in their writing.

It’s an interesting article and I’m re-reading it at the moment to see exactly what I can extract for my own students and where I have to read to verify and expand upon the ideas. From a personal perspective, however, I think of one of the best reminders of what it is like to be a student who is confused or intimidated by writing.

I go to Hong Kong.

If you’ve been to Hong Kong, you’ll know that there is a vast amount of signage, some in Chinese characters and some in English, but there is really far more Chinese neon and it is all over the landscape. I can read some Chinese (duck, soup, daily yum cha, restaurant, men, exit – you get the gist) and I understand some Chinese so I can get a feeling for some of what is happening. But I have no grasp of any subtlety. I cannot create a sentence or write a character reliably. I am a very good communicator in my own language but in Chinese I sound ridiculous. I scrawl characters that good natured colleagues interpret very generously but it is the scribbling of a child.

In Hong Kong, unless I am speaking English, I appear illiterate. It is easy to say “Well, don’t feel bad, why would you have learned Cantonese in Australia” but let’s remember that when looking at the students of New Dorp. Why would anyone have encouraged them in creative writing, written expression and the development of sophisticated literary argument when a large percentage of their parents have English as a second language, effectively reduced access to schooling and, most likely, a very tight time budget to spend with their children due to overwork and job crowding?

A student who can’t write effectively, if we haven’t actually really tried to teach them how to write, is no more stupid or lazy than I am when I don’t try to learn all of Cantonese before going to Hong Kong. We have both been denied an opportunity. I am lucky in that I can choose when I go to Hong Kong. A student who can’t write has a much harder road because their future will be brighter and better if they can write, as evidenced by the increased success rates at New Dorp.

I look forward to seeing what New Dorp gets up to in the future and I take my hat off to them for this approach.