How the Internet Works: For You and On You

Posted: April 7, 2012 Filed under: Education | Tags: education, higher education, randall munroe, teaching, teaching approaches, umwelt, xkcd

I would link to a recent April 1st post on Randall Munroe’s XKCD (it’s http://www.xkcd.com/1037/ if you need to know) but I can’t because I’m not sure what you’ll see when you get there and some of the possible images are not safe for work. The theme of his post is umwelt, the notion that your perception of your own world is highly personal, influenced by your previous experiences and the lens that you have on your environment.

This is the image I see now – using my default browser. It is, very much, what you make of it. (I attribute the image to XKCD, but I can’t link to it for obvious reasons.)

A well? The Ring? The Sun? Your perception of what this means depends on your senses, your experiences and your world to date.

Some people saw images of unlikely earthquakes. Some saw images of groups being stalked by velociraptors – but only when they elongated their screens enough to see everything on the page. Serving military saw a supportive post that encouraged them to keep an eye on the missiles. Panels adjusted based on who you were, which browser you used, where you were, even what size screen you used.

How did this work? Your browser provides a great deal of information as to what it is, to allow sites to adjust for browser variation. At the same time, the Internet Protocol (IP) address of your computer, the address that other computers use to get to it, gives away your location and your organisation. That’s why students at MIT got MIT jokes and people in Israel got an XKCD comic in Hebrew.
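To make the mechanism concrete, here is a minimal sketch of how a server might pick a panel from what a request reveals. It is not the comic's actual implementation: the function `choose_panel`, the network table, the panel names and the width threshold are all hypothetical, and the window width is assumed to have been reported by a script running in the page.

```python
import ipaddress

# Hypothetical lookup table: these are reserved documentation address ranges,
# standing in for "addresses known to belong to an organisation or a country".
KNOWN_NETWORKS = {
    "example-university": ipaddress.ip_network("192.0.2.0/24"),
    "example-country": ipaddress.ip_network("198.51.100.0/24"),
}


def choose_panel(headers, client_ip, window_width):
    """Pick a comic variant from what the request reveals about the reader."""
    address = ipaddress.ip_address(client_ip)
    # The IP address hints at where the reader is and who runs their network.
    for owner, network in KNOWN_NETWORKS.items():
        if address in network:
            return f"variant for {owner}"
    # The User-Agent header is how the browser announces what it is.
    if "Firefox" in headers.get("User-Agent", ""):
        return "browser-specific panel (Firefox)"
    # Screen or window size changes how much of the page is visible.
    if window_width > 1600:
        return "extra-wide panel"
    return "default panel"


print(choose_panel({"User-Agent": "Mozilla/5.0 Firefox/11.0"}, "192.0.2.17", 1024))
```

The point for students is that none of these inputs is something the reader consciously typed in: they all arrive with the request.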

I can’t think of a better way to introduce students to the idea that gazing into the abyss also involves the abyss gazing into you. There are two great summary pages here (Reddit) and here (Google document).

This is the Internet and it’s ubiquitous. This is a great way to discuss the amount of information that is being sent back by every browser, from every platform, and the amount of information that can be obtained by underlying systems that most people are unaware of.

For me, what I found most amusing is that a friend sent the link of the image to me saying “Hey, Voight-Kampff test” (from Blade Runner) and I saw a picture of something completely different and thought my friend was mad. That’s something else to talk about – what it would mean if information itself mutated depending on who you were and where you were.

Putting the pieces together: Constructive Feedback

Posted: April 6, 2012 Filed under: Education | Tags: card shouting, design, education, feedback, higher education, teaching, teaching approaches, tools

Over the last couple of days, I’ve introduced a scenario that examines the commonly held belief that negative reinforcement can have more benefit than positive reinforcement. I’ve then shown you, via card tricks, why the way that you construct your experiment (or the way that you think about your world) means that this becomes a self-fulfilling prophecy.

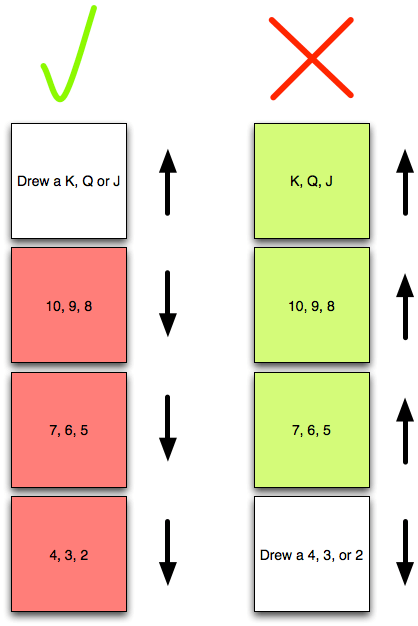

Now let’s talk about making use of this. Remember this diagram?

This shows what happens if, when you occupy a defined good or bad state, you then redefine your world so that you lose all notion of degree and construct a false dichotomy through partitioning. We have to think about the fundamental question here – what do we actually want to achieve when we categorise activities as good or bad?

Realistically, most highly praiseworthy events are unusual – the A+. Similarly, the F should be less represented across our students than the P and the “I’ve done nothing, I don’t care, give me 0” should be as unlikely as the A+. But, instead of looking at outcomes (marks), let’s look at behaviours. Now I’m going to construct my feedback to students to address the behaviour or approach that they employed to achieve a certain outcome. This allows me to separate the B+ of a gifted student who didn’t really try from the B+ of a student who struggled and strived to achieve it. It also allows me to separate the F of someone who didn’t show up to class from the F of someone who didn’t understand the material because their study habits aren’t working. Finally, this allows me to separate the A+ of achievement from the A+ of plagiarism or cheating.

Under stress, I expect people to drop back down in their ability to reason and carry out higher-level thought. We could refer to Maslow or read any book on history. We could invoke Hick’s Law – that time taken to make decisions increases as a function of the number of choices, and factor in pilot thresholds, decision making fatigue and all of that. Or we could remember what it was like to be undergraduates. 🙂

Let me break down student behaviours into unacceptable, acceptable and desirable behaviours, and allocate the same nomenclature that I did for cards. So the unacceptable are now the 2, 3, 4, the acceptable are 5-10, and the desirable are J-K. (The numbers don’t matter, I’m after the concept.) When I construct feedback for my students, I want to encourage certain behaviours and discourage others. Ideally, I want to even develop more of certain behaviours (putting more Kings in the deck, effectively). But what happens if I don’t discourage the unacceptable behaviours?

Under stress, under reduced cognitive function and reduced ability to make decisions, those higher level desirable behaviours are most likely going to suffer. Let us model the student under stress as a stacked deck, where the Q and K have been removed. Not only do we have less possibility for praise but we have an increased probability of the student making the wrong decision and using a 2,3,4 behaviour. It doesn’t matter that we’ve developed all of these great new behaviours (added Kings), if under stress they all get thrown out anyway.

So we also have to look at removing or reducing the likelihood of the unacceptable behaviours. A student who has no unacceptable behaviour left in their deck will, regardless of the pressure, always behave in an acceptable manner. If you can also add desirable behaviours then they have an increased chance of doing well. This is where targeted feedback comes in at the behavioural level. I don’t need to worry about acceptable behaviours and I should be very careful in my feedback to recognise that there is an asymmetric situation where any improvement from unacceptable is great but a decline from desirable to acceptable may be nowhere near as catastrophic. Moderation, thought and student knowledge are all very useful concepts here. But we can also involve the student in useful and scaleable ways.
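The deck model in the last two paragraphs can be put into numbers. This is only a sketch under a simplifying assumption that is mine, not part of the original scenario: one card per value from 2 to K, each equally likely to be drawn. The names `behaviour_odds`, `stressed` and `reformed` are made up for the illustration.

```python
from fractions import Fraction

UNACCEPTABLE = {"2", "3", "4"}
DESIRABLE = {"J", "Q", "K"}
# Assumption: one card per value, every card equally likely.
FULL_DECK = ["2", "3", "4", "5", "6", "7", "8", "9", "10", "J", "Q", "K"]


def behaviour_odds(deck):
    """Return (P(unacceptable), P(desirable)) for a single draw from the deck."""
    total = len(deck)
    bad = sum(card in UNACCEPTABLE for card in deck)
    good = sum(card in DESIRABLE for card in deck)
    return Fraction(bad, total), Fraction(good, total)


# Stress removes the Q and K; targeted feedback then removes the 2, 3 and 4.
stressed = [card for card in FULL_DECK if card not in {"Q", "K"}]
reformed = [card for card in stressed if card not in UNACCEPTABLE]

for name, deck in [("full", FULL_DECK), ("stressed", stressed), ("reformed", reformed)]:
    p_bad, p_good = behaviour_odds(deck)
    print(f"{name}: unacceptable {p_bad}, desirable {p_good}")
```

Under these assumptions, stress raises the chance of an unacceptable behaviour from 1/4 to 3/10 while cutting the desirable ones from 1/4 to 1/10, and removing the 2, 3 and 4 brings the unacceptable chance to zero even in the stressed deck.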

Raising student awareness of how their own process works is important. We use reflective exercises midway through our end-of-first year programming course to develop student process awareness: what worked, what didn’t work, how can you improve. This gives students a framework for self-assessment that allows them to find their own 2s and their own Kings. By getting the students to do it, with feedback from us, they develop it in their own vocabulary and it makes it ideal for sharing with other students at a similar level. In fact, as the final exercise, we ask students to summarise their growth in their process awareness during the course and ask them for advice that they would give to other students about to start. Here are three examples:

Write code. Go beyond your practicals, explore the language, try things out for yourself. The more you code, the better you’ll get, so take every opportunity you can.

If I could give advice to a future … student, I would tell them to test their code as often as possible, while writing it. Make sure that each stage of your code is flawless before going onto the next stage.

If I could give one piece of advice to students …, it would be to start the coding early and to keep at it. Sometimes you can have a problem that you just can’t work out how to fix but if you start early you leave yourself time to be able to walk away and come back later. A lot of the time when you come back you will see the problem very quickly and wonder why you couldn’t see it before.

There are a lot of Kings in the above advice – in fact, let’s promote them and call them Aces, because these are behaviours that, with practice, will become habits and they’ll give you a K at the same time as removing a 2: “Practise your coding skills”, “Test your code thoroughly”, “Start early”. Students also framed their lessons in terms of what not to do. As one student reflected, he knew that it would take him four days to write the code, so he was wondering why he only started two days out – you could read the puzzlement in the words as he wrote them. He’d identified the problem and, by semester’s end, had removed that 2.

I’m not saying anything amazing or controversial here, but I hope that this has framed a common situation in a way that is useful to you. And you got to shout at cards, too. Bonus!

Card Shouting: Teaching Probability and Useful Feedback Techniques by… Yelling at Cards

Posted: April 5, 2012 Filed under: Education | Tags: card shouting, education, feedback, fiero, games, higher education, resources, teaching, teaching approaches, tools

I introduced a teaching tool I call ‘Card Shouting’ into some of my lectures a while ago – usually those dealing with Puzzle Based Learning or thinking techniques. What does Card Shouting do? It demonstrates probability, shows why experimental construction is important and engages your class for the rest of the lecture. So what is Card Shouting?

(As a note, I’ve been a bit rushed today and I may not have put this the best way that I could. I apologise for that in advance and look forward to constructive suggestions for clarity.)

Take a standard deck of cards, shuffle it and put it face down somewhere where the class can see it. (You either need very big cards or a document camera so that everyone can see this in a large class. In a small class a standard deck is fine.)

Then explain the rules, with a little patter. Here’s an example of what I say: “I’m sick and tired of losing at Poker! I’ve decided that I want to train a pack of cards to give me the cards I want. Which ones do I want? High cards, lots of them! What don’t I want? Low cards – I don’t want any really low cards.”

Pause to leaf through the deck and pull out an Ace, King and Queen. Also grab a 2, 3 and 4. Any suit is fine. Hold up the Ace, King and Queen.

“Ok, these guys are my favourites. What I’m going to do, after this, is shuffle everything back in and draw a card from the top of the deck and flip it up so you can see it. If it’s one of these (A, K, Q), I want to hear positive things – I want ‘yeahs!’ and applause and good stuff. But if it’s one of these (hold up 2, 3 and 4) I want boos and hisses and something negative. If it’s not either – well, we don’t do anything.” (Big tip: put a list of the accepted positive and negative phrases on the board. It’s much easier than having to lecture people on not swearing.)

“Let’s find out what works best: praise or yelling, but we’re going to have to measure our outcomes. I want to know if my training is working! If I draw a bad card and abuse it, I want to see improvement – I don’t want to see one of the bad cards on the next draw. If I draw a good card, and praise it, I want the deck to give me another good card – if it’s lower than a J, I’ve wasted my praise!” Then work through with the class how you can measure positive and negative reaction from the cards – we’ve already set up the basis for this in yesterday’s post, so here’s that diagram again.

In this case, we record a tick in the top left hand box if we get a good card and, after praising it, we get another good card. We put a tick in top right hand box if we draw a bad card, abuse the deck, and then get anything that is NOT a bad card. Similarly for the bottom row, if we praise it and the next card is not good, we put a mark in the bottom left hand box and, finally, if we abuse the card and the next card is still bad, we record a mark in the bottom right hand box.

Now you shuffle and start drawing cards, getting the reactions and then, based on the card after the reaction, record improvement or decline in the face of praise and abuse. If you draw something that’s neither good NOR bad to start with – you can ignore it. We only care about the situation when we have drawn a good or a bad card and what the immediate next card is.

The pattern should emerge relatively quickly. Your card shouting is working! If you get a card in the bad zone, you shout at it, and it’s 3 times as likely to improve. But wait, praise doesn’t do anything for these cards! You can praise a card and, again, roughly 3 times as often – it gets worse and drops out of the praise zone!

Not only have we trained the deck, we’ve shown that abuse is a far more powerful training tool!

Wait.

What? Why is this working? I discussed this in outline yesterday when I pointed out it’s a commonly held belief that negative reinforcement is more likely to cause beneficial outcome based on observation – but I didn’t say why.

Well, let’s think about the problem. First of all, to make the maths easy, I’m going to pull the Ace out of the deck. Sorry, Ace. Now I have 12 cards, 2 to K.

Let me redefine my good cards as J, Q, K. I’ve already defined my bad cards as 2, 3, 4. There are 12 different card values overall. Let me make the problem even simpler. If we encounter a good card, and praise it, what are the chances that I get another good card? (Let’s assume that we have put together a million shuffled decks, so the removal of one card doesn’t alter the probabilities much.)

Well, there are 3 good card values, out of 12, and 9 card values that aren’t good – so that’s 9/12 (75%) chance that I will get a not good card after a good card. What about if I only have 12 cards? Well, if I draw a K, I now only have the Q and the J left in my good card set, compared to 9 other cards. So I have a 9/11 chance of getting a worse card! 75% chance of the next card being worse is actually the best situation.
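Written out, the two cases in the paragraph above look like this (the conditional-probability notation is added here for illustration; the numbers are the ones already given):

```latex
\begin{align*}
P(\text{next not good} \mid \text{good}) &= \frac{9}{12} = 0.75
  && \text{(very many decks, so one draw barely changes the odds)} \\
P(\text{next not good} \mid \text{good}) &= \frac{9}{11} \approx 0.82
  && \text{(a single set of 12 cards, one good card already drawn)}
\end{align*}
```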

You can probably see where this is going. I have a similar chance of showing improvement after I’ve abused the deck for giving me a bad card.

Shouting at the cards had no effect on the situation at all – success and failure are defined so that the chances of them occurring serially (success following success, failure following failure) are unlikely but, more subtly, we extend the definition of bad when we have a good card – and vice versa! If we draw any card from 5 to 10 normally, we don’t care about it, but if we want to stay in the good zone, then these 6 cards get added to the bad cards temporarily – we have effectively redefined our standards and made it harder to get the good result. Here’s a diagram, with colour for emphasis, to show that the usually neutral outcomes are grouped with the extreme. No wonder we get the results we do! The rules that we use have determined what our outcome MUST look like.
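If you would rather show this with a computer than a deck, the game can be simulated. The sketch below assumes the simplified set from the explanation above (one card each of 2 to K, good is J-K, bad is 2-4) and reshuffles before every pair of draws; the function name `shout_at_cards` and the tally labels are invented for this example. The cards never "hear" anything, yet the tally still favours abuse.

```python
import random

GOOD = {"J", "Q", "K"}
BAD = {"2", "3", "4"}
VALUES = ["2", "3", "4", "5", "6", "7", "8", "9", "10", "J", "Q", "K"]


def shout_at_cards(rounds, seed=0):
    """Fill in the four boxes of the praise/abuse table over many rounds."""
    rng = random.Random(seed)
    tally = {
        ("praise", "stayed good"): 0,
        ("praise", "got worse"): 0,
        ("abuse", "improved"): 0,
        ("abuse", "stayed bad"): 0,
    }
    for _ in range(rounds):
        # Two consecutive draws from a freshly shuffled 12-card set.
        first, second = rng.sample(VALUES, 2)
        if first in GOOD:
            outcome = "stayed good" if second in GOOD else "got worse"
            tally[("praise", outcome)] += 1
        elif first in BAD:
            outcome = "stayed bad" if second in BAD else "improved"
            tally[("abuse", outcome)] += 1
        # A neutral first card (5 to 10) is ignored, exactly as in the game.
    return tally


for box, count in shout_at_cards(10_000).items():
    print(box, count)
```

Nothing in the loop responds to praise or abuse; the lopsided table comes entirely from how the boxes are defined.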

So, that’s Card Shouting. It seems a bit silly but it allows you to start talking about measurement and illustrate that the way you define things is important. It introduces probability in terms of counting. (You can also ask lots of other questions like “What would happen if good was 8-K and bad was 2-7?”)
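For questions like the one in the brackets, a small counting helper gives the exact answer. This is a sketch under the same simplification as before (a single set of 12 cards, one per value); `chance_of_leaving_zone` is a hypothetical name, and `zone_size` is simply how many card values count as good (or as bad).

```python
from fractions import Fraction

RANKS = ["2", "3", "4", "5", "6", "7", "8", "9", "10", "J", "Q", "K"]


def chance_of_leaving_zone(zone_size, total=len(RANKS)):
    """P(next card is outside the zone | the current card was inside it)."""
    # One zone card is already on the table, so total - 1 cards remain,
    # of which total - zone_size lie outside the zone.
    return Fraction(total - zone_size, total - 1)


print(chance_of_leaving_zone(3))  # good is J-K (or bad is 2-4)
print(chance_of_leaving_zone(6))  # good is 8-K, bad is 2-7
```

With three values per zone the chance of leaving is 9/11; with the deck split down the middle it drops to 6/11, so the apparent advantage of shouting over praise largely disappears once there is no neutral band to be lumped in with the opposite extreme.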

My final post on this, tomorrow, is going to tie this all together to talk about giving students feedback, and why we have to move beyond simple definitions of positive and negative, to focus on adding constructive behaviours and reducing destructive behaviours. We’re going to talk about adding some Kings and removing some deuces. See you then.

It’s Feedback Week! Yelling at Pilots is Good for Them!

Posted: April 4, 2012 Filed under: Education | Tags: card shouting, design, education, feedback, higher education, measurement, pilot training, reflection, teaching, teaching approaches

The next few posts will deal with effective feedback and the impact of positive and negative reinforcement. I’ll start off with a story that I often tell in lectures.

There’s a well established fact among pilot instructors, in the Air Force, that negative reinforcement works better than positive reinforcement. Combat pilots are very highly trained, go through an extremely rigorous selection procedure and have to maintain a very high level of personal discipline and fitness. The difference between success and failure, life and death effectively, can come down to one decision. During training, these pilots are put under constant stress, to try and prepare them for the real situation.

The instructors have observed that these pilots respond better to being yelled at than being congratulated. What’s their evidence? Well, if a pilot does something really well, and is praised, chances are his or her performance will get worse. Whereas, if the pilot does something bad, or dangerous, and you yell at him or her, his or her performance will improve. Well, that’s a pretty simple framework. Let’s draw it as a diagram.

Now, of course, to see the impact of praise or abuse, you have to record what you did (the praise or abuse) and then what happened (improvement or decline). So we need to draw up some boxes. Ticks mean praise, crosses mean abuse. Up arrow means improvement, down arrow means decline. A tick in the box that you reach by selecting the column that belongs to Praise (tick) and the row that belongs to Improvement (up arrow) means that the pilot improved after praise. Let’s look at a picture.

So here we can see that, much as an instructor would expect, when I’m nice to you, you get worse more often than you get better. You get better once when praised, compared to getting worse three times. But, wow, when I yell at you, you get better far more often than you get worse!

There is, of course, a trick here. Yes, it appears that shouting works better than praising but, without giving the game away in the comments (or Googling), do you know why? (The data is a reasonable approximation of the real situation, so there are no hidden arrows or ticks anywhere. 🙂 )

Now, true confession, the picture above is not actually of pilot training – I don’t have an F18 on my desk – but it will be a reasonable approximation of the situation, although it comes from a different source. The graph above comes from a game called “Card Shouting” and I’ll tell you more about that in tomorrow’s post.

Improving, Holding Steady and Going Downhill – Giving Students Useful Feedback

Posted: April 3, 2012 Filed under: Education | Tags: education, higher education, learning, measurement, MIKE, reflection, teaching, teaching approaches

I’ve written before about the slightly fuzzy nature of marks and how, overall, we can roughly class marks into ‘failing’, ‘doing okay’ and ‘doing really well’. One thing that I think is really useful is giving students an indication of how they are going in terms of getting better, staying where they are or falling behind.

This is hard throughout courses unless we’ve done some important things, and we’ve also committed to some things across an entire set of courses, like a degree. I’ve covered some of these before but this is a lot more focused.

- We’ve tied the assessment firmly into the course so that success in one aspect is a reasonable indicator of continued success.

- Students can get early indication when they’re not getting it – whether it’s quick quizzes, feedback on assignments or activities in lectures. Early warning signs are always there.

- We follow up on the early warning signs as well to warn students of what’s going on.

It’s the whole instrumentation, measurement and action routine that I’ve been banging on about for three months now. But let’s put it into a tighter framework, in some senses, with a looser measurement system. We’re only worried about improvement, stability or decline. But is it ever that simple?

I honestly don’t really care if students get 87 or 90 in many ways – High Distinction is High Distinction. If a student gets a series of marks as 86, 91, 88, 90 they’re holding steady. I certainly don’t want them banging on my door, lamenting their decline, if their next mark is an 85 – this is stable and it’s good. But what about this sequence: 50, 52, 57, 53? This is a much riskier proposition, of course, because it’s so much closer to the fail line. Being under 55 is wandering into the zone where one bad mark or missed question could fail you. You don’t want to be stable here.

So, even with a simple three-way framework – it’s pretty obvious that stability is relative. What we really have is different zones where only some of these activities are valid, which should come as no surprise to anyone. 🙂

Below 60, stability doesn’t really cut it and decline is completely unacceptable. Below 60, you really want to be above 60. Yes, 50-60 is a pass but it’s also an indicator that you’re just scraping by – an unlucky day could cost you 6 months of work. Above 60, up to say 75? Stability is ok but we should really be aiming for improvement – if the student can. Above 75? Well, some people will never get much beyond that and all their striving will result in a hard-earned stability that may still taste a little bitter at times. This is where our knowledge of the student comes in.
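The zones above can be sketched as a small function. Everything specific here is an assumption made for illustration: the name `describe_progress`, the tolerance of 5 marks for "steady", and the choice to compare the latest mark with the average of the earlier ones. Only the 60 and 75 boundaries come from the text.

```python
from statistics import mean


def describe_progress(marks, tolerance=5):
    """Classify the latest mark against the average of the earlier marks.

    Needs at least two marks. The tolerance is an assumed value.
    """
    *earlier, latest = marks
    baseline = mean(earlier)
    if latest > baseline + tolerance:
        trend = "improving"
    elif latest < baseline - tolerance:
        trend = "declining"
    else:
        trend = "steady"

    # The same trend means different things in different zones.
    if baseline < 60:
        advice = "too close to the fail line: aim for improvement"
    elif baseline <= 75:
        advice = "stability is ok, improvement is better if the student can"
    else:
        advice = "stability may already be a hard-earned result"
    return trend, advice


print(describe_progress([86, 91, 88, 90, 85]))
print(describe_progress([50, 52, 57, 53]))
```

Both sequences from earlier come out as "steady", but with very different advice attached, which is the point: the trend alone is not enough.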

Not knowing a student’s ability means that you risk telling them to improve when there’s nothing else left and they’ve given all they can. So let me throw out my classification framework and replace it with two questions for the student:

How do you feel about your mark?

Do you think you could have done better?

Balancing that with your knowledge of the student, and guiding them through the thinking process, will give them a better idea of what they can and can’t do. Did they really struggle to get that 66? No? Well, they could have worked harder and maybe got a better mark. That 75 nearly killed them and they really put their all into it? Well that’s one heck of a fine mark.

We all know that very few people know themselves and that’s why I like to try and help them understand why they’re doing what they’re doing and, maybe, once in a while, help them to either accept the fruits of their labours, or to strive that little bit more, if they still have something left to strive with and think it’s worthwhile.

Teaching CS in the 21st Century: CS as a fundamental skill.

Posted: April 2, 2012 Filed under: Education, Opinion | Tags: advocacy, education, higher education, reflection, resources, teaching, teaching approaches

Today’s Guardian has a feature in their Computer Science and IT section that includes a lot of very interesting pieces, ranging from what’s scaring girls away from coding, to why we need to be able to program, and John Naughton’s proposal for rebooting the computing curriculum – as an open letter to the Education Minister for the UK. Feel free not to read the rest of my piece if you’re pressed for time – the links on the first page will keep you busy for quite a while.

For those who are still reading, here’s a picture of ubiquitous access to computers in the developing world – giving people the possibility of doing anything with their lives. (Image is from this World Food Programme page, the food aid branch of the UN, showing the Nepalese deployment of the XO Laptop, with a programme focused on bringing young people into education, combined with a cooking oil-based incentive scheme if daughters attend at least 80% of the time.)

What I took away from reading the Guardian feature is the overwhelming message that we should teach programming and computer awareness for the same reason that we teach maths and science to all students, regardless of where they’ll end up – because that’s the world in which they live. To quote Naughton’s article:

We teach elementary physics to every child, not primarily to train physicists but because each of them lives in a world governed by physical systems. In the same way, every child should learn some computer science from an early age because they live in a world in which computation is ubiquitous. (Item 3, A Manifesto for Teaching CS in the 21st Century.)

I’ve read too many articles about various government programs that try to raise standards but do so in a way that concentrates effort on some areas in a way that starves all of the other areas, or sidelines them at the least. If we don’t see Information and Communication Technology (ICT) skills as vital, then we won’t assign priority to them. They’ll get shunted out of the way for other topics, like Maths, Language skills and Science. ICT is not more important than these but, in the world that our students will have to occupy, ICT needs a seat at the table. As many other, and better, commentators have noted, the transformation of the workforce continues apace and programming and computer use are now vital skills in many jobs.

We need the focus in schools, because then we can hire the teachers, which drives the job market, which causes the teacher training, which improves the quality, which improves the number of competent graduates, and ultimately leads to knowledgeable and fully-participating members of our civilised democracies where those little boxes on desks aren’t a mystery or intimidating. I can’t take more people into my Uni-level courses than are being produced by schools – and, sadly, not everyone who has the skill or training at school goes on to use it. I can’t wave a wand and turn the “less than 20%” of women who start my degree into 50% by the end. (Well, yes, I can, but I can’t do it fairly or ethically.) I can do the best I can with the people I get but I’d really love to get a lot more people with the skills!

We all know this is a challenge because we have so many acronyms that might mean ‘Computer training’ – are we teaching ICT, IS, IT, CS, CSE? To step back from the acronyms, and their deliberate placement for emphasis, are we teaching computational or algorithmic thinking (problem solving and solution design), are we teaching computer usage at a fundamental level, are we teaching people how to use certain packages, certain techniques – where does programming fit into all of this?

All of us need at least a subset of these skills now, in the 21st century. On a daily basis, I download more software updates and modifications, and program more items around my house, than I ever did in the years before 1995.

As always, time and resource budgets are tight and, because of this, this is not a problem we can solve at one college, one school or even one state. This is why governments have to make this a national priority if initiatives like this are to succeed. This doesn’t have to mean standardised testing or fixed curricula – it means incentive to provide quality education in certain areas, with supportive high-level goals and curriculum consideration, as well as allocated money for training and community building. Of course, there are many existing initiatives like the UK revamp of the high school curriculum and available on-line resources but, here in Australia, we still don’t seem to have strong linkage between a senior school course and University entry and it must make it hard to direct students into a certain path if there is no benefit for them. There are some excellent starting points, however, such as the Australian Government’s Digital Education Revolution, so there is certainly some hope for the future, but we need long-term vision and bipartisan support for these initiatives if they’re going to continue and make real change over time.

Beautiful Posters and Complicated Concepts Don’t Always Work – But That’s OK.

Posted: April 1, 2012 Filed under: Education | Tags: education, higher education, principles of design, reflection, resources, teaching, teaching approaches, universal principles of design

I was recently reading Metafilter, a content aggregator, when I came upon a set of labels that came from the Information is Beautiful site and described a number of logical fallacies. Unfortunately, while these were quite nice to look at, the fallacy descriptions are at times inaccurate, and the diagrams don’t really convey the core idea sometimes. (There was an example of applying these labels to a speech and it was a bit of a stretch in many regards.) What disappointed me in the ensuing discussion on Metafilter was how overwhelmingly negative people were about this. There was a lot of “well, this is terrible logic” (and that statement was at times true) and “the application of labels simplistically leads to trouble” (which is also true) but let’s step back for a moment and look at the core idea.

Would it be helpful to use strong visual cues that students can attach to text for a subset of logical fallacies or rhetorical tricks to help them in marking up essays? How about the ability to click an ‘Ad Hominem’ button on Wikipedia when you’ve selected a box of text that contains an attack upon the person rather than their ideas?

While the original labels certainly need refinement and work, taking this as a starting point would have been both useful and constructive. Attacking it, deriding it and rejecting it because it isn’t perfect seems a wasted opportunity to me. It’s very easy to be dismissive but I’m not sure that there’s much long-term benefit in burning everything that’s not perfect. I much prefer a constructive approach – is there anything I can use from here? Can I take this and make it better? How can I achieve this and make it awesome? The Information is Beautiful site has lots of good stuff but there is the occasional miss, but you’re bound to learn something interesting anyway, or pick up a new way of seeing. Would I teach directly from it? No! Of course not. (Look at some of the labels, especially for Novelty and Design and tell me if this is all serious.)

I should note that Metafilter user asavage, who some of you will know from burning off his eyebrows on Mythbusters, also noted that the IIB link wasn’t great but suggested an excellent alternative – A Visual Guide to Cognitive Biases.

Yes, asavage doesn’t much like what he read in the original links, and there’s good reason for it to be modified, but he provides a constructive suggestion. Now, fair warning, it’s a scribd link to get the slide pack, which is big and requires you to log in to the site or use a Facebook login, but if you teach any kind of logical thinking at all, it’s an essential resource. It’s the Cognitive Bias Wikipedia page with good graphics and it’s a great deal of fun.

Are either of these approaches the equivalent of a full lecture course on logic, reasoning and rhetoric? No. With thought, could you use elements from both in your teaching? I think the answer is a resounding yes and I hope that you have fun reading through them.

Grand Challenges in Education – When we say grand, we mean GRAND!

Posted: March 31, 2012 Filed under: Education | Tags: education, educational problem, grand challenge, higher education, reflection, teaching, teaching approaches, tools

Some time ago, Mark Guzdial posted on the Grand Challenges in the US National Educational Technology Plan. If I may summarise the four, huge, challenges, they were:

- A real-time, self-optimising, difficulty-adjusting, interactive learning experience delivery system.

- A similarly high-end system for assessment of cross-discipline complex aspects of expertise and competencies.

- Integrated capture, aggregation, mining and sharing of content, learning and financial data across all platforms in near real-time.

- Identify the most effective principles of online learning systems and on/offline systems that produce equal or better results than conventional instruction in half the time and half the cost.

Wow. That’s one heck of a list. Compare that with the list of grand challenges from the March 2011 report of the National Science Foundation Advisory Committee for Cyberinfrastructure Task Force on Grand Challenges, which defines the grand challenge problems for my discipline, Computer (Cyber) Science and Engineering. By looking at some very complex problems, they arrived at the following list of areas in which great strides can, and should, be made:

- Advanced Computational Methods and Algorithms

- High Performance Computing

- Software Infrastructure

- Data and Visualisation

- Education, Training and Workforce Development

- Grand Challenge Communities.

Let me rewrite this last list in simpler, discipline-free terms:

- Better methods for solving hard problems.

- Big machines for solving hard problems.

- Good systems to run on the big machines, to support the better methods.

- Ways to see what results we have, so that people can make better decisions.

- Training people to make steps 1-4 work.

- Bring people together to make 1-5 work better with greater efficiency.

Now, let’s look back at the four USNETP educational grand challenges to see if we can as easily form such a cohesive flow – we want to be able to see how it all works together.

- Smart learning systems.

- Smart assessment systems.

- Data and Visualisation. (Nick note: get into data and visualisation! 🙂 )

- Fusing the best of the old and the best of the new.

Now, the USNETP focus is on useful R&D, and these challenges are part of their overall view that “they all combine to form the ultimate grand challenge problem in education: establishing an integrated, end-to-end real-time system for managing learning outcomes and costs across our entire education system at all levels.” What immediately leaps out at me, though, are steps 5 and 6 from the previous list. Rather than embed the training and community aspects somewhere in the rest of a document, why not embrace this at the same level if we’re talking about grand challenges in Education? That would give us:

- Training educators to make steps 1-4 work.

- Forming communities of practice to make 1-5 work better with greater efficiency.

Now these last two steps, of course, are what we’re doing with the conferences, the journals, the meetings and blogs like this but it makes a lot of sense when we see it inside my discipline, so it seems to make sense in the general field of education. There’s no doubt that these two last steps are easily as hard to manage at scale as the other projects, even interoperating with them. In fact, by making them huge challenges we increase their worth, justify effort and validate the research community built up around them. These are financially-sensitive times, where academics have to provide a value for their work. Allocating these important tasks to the grand challenge level recognises the difficulty, the uncertainty of being able to solve the problem and the sheer amount of work that may be involved.

These are, of course, only my thoughts and I have a great deal to learn in this space. I’m still searching for answers but if there’s a nice convenient report that says “Well, duh, Nick, we’re doing that right here, right now” I look forward to correction and enlightenment.

But, if it’s not already part of the USNETP grand challenges – what do you think? Should it be?

Teaching Tools (again): Balancing Price, Need and Accessibility.

Posted: March 30, 2012 Filed under: Education | Tags: education, higher education, reflection, resources, teaching, teaching approaches, tools 4 Comments

I’ve spoken before on open source and teaching tools but I’ve been reviewing some interesting data on textbook purchasing. As some of you may know, book purchases are dropping in many areas because students feel that they don’t need to (or can’t afford to) buy the text. Some of the price burden of textbooks comes from their size and the associated printing and shipping costs, so eBooks, which can be, and often are, cheaper, should be addressing this problem.

Is that our experience? Well, we’re still collecting data but, anecdotally, no. Despite eBooks being substantially cheaper, students aren’t buying them in any greater numbers. (Early indications are that the numbers may actually be lower.)

Price is always going to be an issue. 60-70% of a large number is still a large number (to a student).

Need is an issue – do students need the book as a text or a reference? Will they be able to get by on lecture notes? How is the course structured? There are important equity issues associated with forcing a student to buy a book as you don’t know what they’ve had to give up to do that, and the resale market for secondhand books is not what it once was.

But one of the big concerns of my students is accessibility. They are well aware that buying an electronic book may give it to them in a very constrained form – a book that can only be read on one machine and may not survive upgrades, a book that may not have a useful search mechanism, a book where you can’t easily highlight the text. Worse, it may be a book that, sometime in the future, just stops working and can never be read again.

Yes, publishing companies are pouring millions of dollars into solving this problem but books are special in a very important way. Books enable knowledge transfer, they don’t own or restrict the knowledge transfer. When you produce a physical book, people can expend effort to do what they like. Make a house out of it, read it, re-index it, tear out the pages and put them together in print density order. None of this is possible with an eBook unless someone lets you. (Ok, you can build a house but it will use your laptop or tablet.)

I can’t help thinking that most of the effort seems to be going into providing the experience that publishing companies want us to have, in terms of usage, ownership and access – focusing on controlling us rather than enabling us. Perhaps this is the point we should address first?

(If you haven’t read my post on Hal Abelson’s talk, you might want to get to that after this. He talks a lot about the problems with the walled garden, and his terminology, including the very useful term generative, makes for a very interesting read.)

The Facts of Undergraduates

Posted: March 28, 2012 Filed under: Education | Tags: education, higher education, reflection, teaching, teaching approaches Leave a comment

If you’re driving through the city and you’re in a hurry, you’re probably going to arrive at your destination and be under the impression that every light was red. You notice the red lights because they get in your way – worse, you may have convinced yourself that you need greens all the way and anytime that this situation doesn’t occur you have to deal with your expectations being thwarted.

Some of you are getting angry or frustrated just reading this.

Setting up false expectations is the best way to get disappointed, frustrated and angry. I always set myself up with a positive mindset before lecturing or student contact, because starting a lecture or a meeting angry or frustrated is just going to set you up so that every negative interaction makes it worse. But, throughout an entire course, there are certain things that some people are going to do; we can’t just prepare for a course full of quiet, always attentive, highly intelligent, well-prepared and engaged students. Let’s look at a short list of other behaviours and my positive preparation for each.

- Students may not show up to lectures. Some students will stop coming and won’t come back – a lot of students will come back if the lecture is useful, interesting AND you have things like recordings or notes to let people catch up with missed lectures. Unless 100% attendance is required (and the question there is always “Why? Where is the educational value?”), giving people a mechanism to get back in is probably going to work better than holding a hard line. Recordings, and podcasts, let you collect your thoughts and review what you said – these help you as much as they help students.

- Students may not prepare. Once you start requiring preparation and you give students some value for that (participation, marked quizzes, things like that) students tend to re-optimise and prepare. This is a great opportunity to add some formative or small summative exercises in that can get great discussion or participation going.

- Students aren’t always focused on your lecture. There are many things going on for our students and they’re trying to work out where to spend their effort. This is a challenge, sure, but what a great opportunity to invest enthusiasm, talk to people and try to bring out the passion that brought at least some of your students in. Getting students talking to each other gives you a huge scaling factor and a communications network where your students can’t hide.

- Students just don’t do assignments sometimes. I’m a great believer in giving a clear indication of what is required at the start of the course. This isn’t just me being prescriptive, this is a fantastic opportunity for me to review my expectations, my thoughts, my grading schemes, review changes, integrate new content. My course profile is not just a way to let students know what will happen if they don’t do things, it lets me frame the whole course to lead students into the necessity of the assignments and integrate that knowledge from lecture to tutorial to assignment to final exam.

Accepting that some things will not match your vision of the ideal student doesn’t mean that we have to walk into every lecture under a thundercloud. Yes, there’s effort involved, but there almost always is to achieve a good outcome.